Inspiration

As students, we noticed a massive gap in the home-fitness market. Most people can't afford a personal trainer, and while YouTube tutorials are great, they don't talk back. You can't tell if your back is arched or your squats are shallow until you’re already injured. We wanted to build a "digital pair of eyes" that not only watches your form but understands the nutritional fuel required to sustain your lifestyle.

What it does

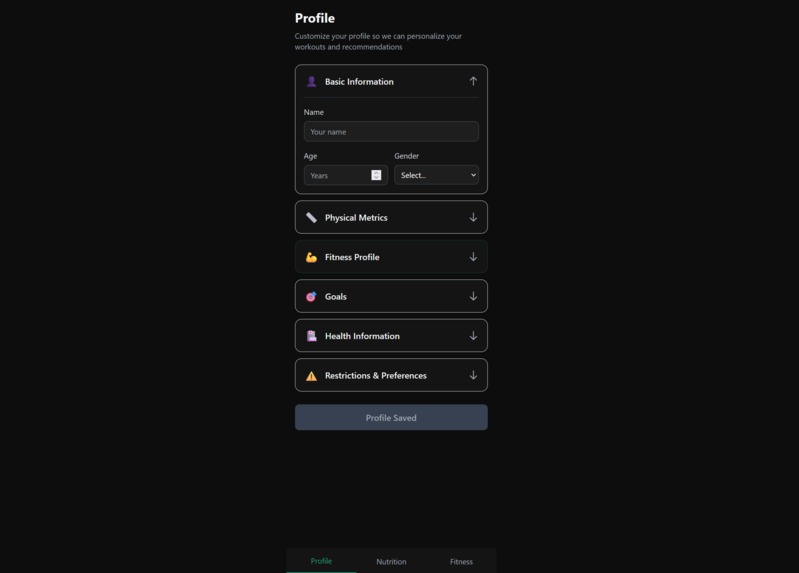

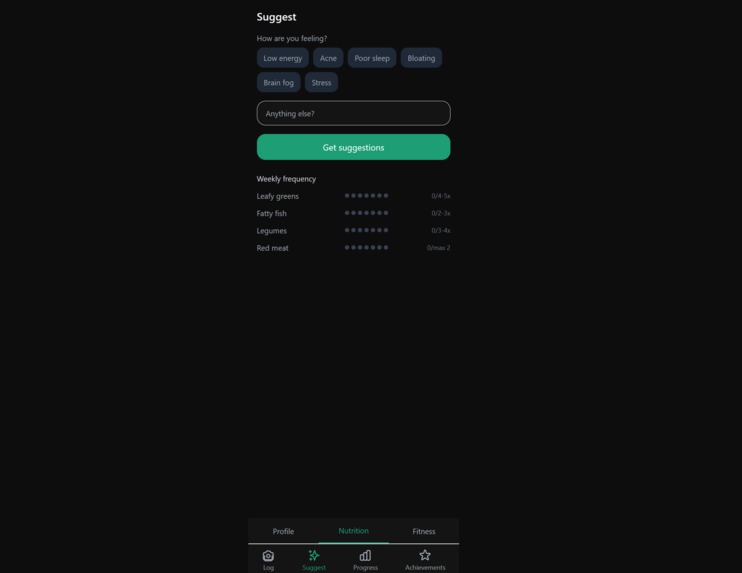

Dieta is an AI-powered fitness and nutrition ecosystem. It uses a webcam feed to perform real-time pose estimation, identifying 17 key body joints to provide immediate feedback on exercise form (e.g., "Straighten your back!"). Simultaneously, it bridges the gap between the gym and the kitchen by generating meal plans personalized to the intensity and volume of the workouts tracked by the AI.

How we built it

The core of Dieta is a YOLOv8-pose model trained on a public custom dataset of workout postures. We implemented a computer vision pipeline in Python that calculates joint angles using the Law of Cosines. For a given set of keypoints (Shoulder A, Hip B, and Knee C), let a, b, and c be the lengths of the sides opposite A, B, and C respectively. We calculate the interior angle θ at the hip to verify posture: \(\theta = \arccos \left( \frac{a^2 + c^2 - b^2}{2ac} \right)\)
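As a rough illustration of the angle check, here is a minimal Python sketch. It is not our exact pipeline code: the function name `joint_angle` and the `(x, y)` tuple format are assumptions made for this example. It takes a as the hip-to-knee distance, c as the shoulder-to-hip distance, and b as the shoulder-to-knee distance, so θ is the angle at the hip.

```python
import math

def joint_angle(a_pt, b_pt, c_pt):
    """Interior angle at b_pt, in degrees, via the Law of Cosines.

    a_pt, b_pt, c_pt are (x, y) keypoints, e.g. shoulder, hip, knee.
    (Hypothetical helper for illustration only.)
    """
    a = math.dist(b_pt, c_pt)  # side opposite A: hip to knee
    c = math.dist(a_pt, b_pt)  # side opposite C: shoulder to hip
    b = math.dist(a_pt, c_pt)  # side opposite B: shoulder to knee
    if a == 0 or c == 0:
        raise ValueError("keypoints coincide; angle is undefined")
    cos_theta = (a**2 + c**2 - b**2) / (2 * a * c)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))

# Example: shoulder directly above the hip, knee directly to the side.
angle = joint_angle((0.0, 1.0), (0.0, 0.0), (1.0, 0.0))
```

A fully straight shoulder-hip-knee line gives an angle close to 180 degrees, so a form rule could flag a rep when the angle drops below some chosen threshold.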

The dashboard frontend is built with TypeScript, and the backend runs on Flask and Python. The nutrition engine uses the Gemini API to correlate calories burned with macronutrient requirements.

Challenges we ran into

The biggest hurdle was data normalization. Converting raw pixel coordinates from different camera resolutions into a consistent format for angle calculation led to many "clumped" data points. We also struggled with the "occlusion problem" when a limb disappears behind the body during a side-profile pushup, causing the model to lose track. We solved this by implementing a temporal smoothing algorithm that predicts the joint's position based on previous frames.
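The two ideas above can be sketched roughly as follows. This is an illustrative approximation, not our production code: `normalize_keypoints`, `JointSmoother`, `alpha`, and `min_conf` are hypothetical names and values, and the smoothing shown here (an exponential moving average plus constant-velocity extrapolation when confidence is low) is one plausible way to predict a joint from previous frames. Normalization divides both axes by the longer frame side, an assumption chosen so that the aspect ratio, and therefore joint angles, are preserved.

```python
def normalize_keypoints(keypoints, width, height):
    """Scale pixel (x, y) pairs by the longer frame side.

    Using one scale factor for both axes keeps angles unchanged
    across camera resolutions.
    """
    scale = float(max(width, height))
    return [(x / scale, y / scale) for x, y in keypoints]


class JointSmoother:
    """Smooths one joint over time and fills in occluded frames."""

    def __init__(self, alpha=0.5, min_conf=0.3):
        self.alpha = alpha        # weight given to the newest detection
        self.min_conf = min_conf  # below this, treat the joint as occluded
        self.pos = None           # last smoothed position
        self.vel = (0.0, 0.0)     # last per-frame displacement

    def update(self, point, conf):
        if self.pos is None:
            # No history yet: accept only a confident first detection.
            if conf < self.min_conf:
                return None
            self.pos = point
            return self.pos
        if conf < self.min_conf:
            # Occluded: extrapolate from the previous motion.
            new = (self.pos[0] + self.vel[0], self.pos[1] + self.vel[1])
        else:
            # Visible: blend the detection with the previous estimate.
            new = (
                self.alpha * point[0] + (1 - self.alpha) * self.pos[0],
                self.alpha * point[1] + (1 - self.alpha) * self.pos[1],
            )
        self.vel = (new[0] - self.pos[0], new[1] - self.pos[1])
        self.pos = new
        return new
```

In use, one smoother per joint would be updated every frame with the normalized keypoint and the confidence score reported by the pose model.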

Accomplishments that we're proud of

We are incredibly proud of achieving real-time inference on a standard laptop CPU without needing a massive dedicated GPU. Seeing the "Form Score" bar turn from red to green the moment someone corrected their posture was a hype moment for the team.

What we learned

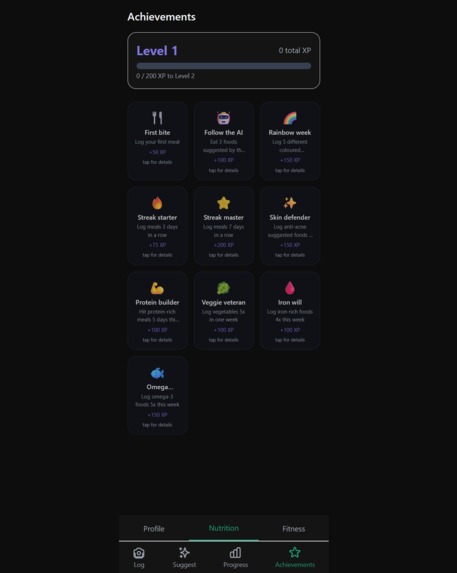

We learned that Data Cleaning > Model Architecture. We spent hours wrestling with messy CSV files before realizing that a smaller, high-quality augmented dataset in Roboflow outperformed a massive, noisy one. We also gained deep experience in deploying Agentic AI, making sure the nutrition advice felt like a conversation, not just a spreadsheet.

What's next for Dieta

We plan to expand our "Exercise Library" to include weightlifting as well as yoga poses. We also want to implement multi-person tracking for competitive partner workouts and integrate with wearable APIs (like Apple Health) to pull in heart rate data for even more precise meal planning.
