Inspiration

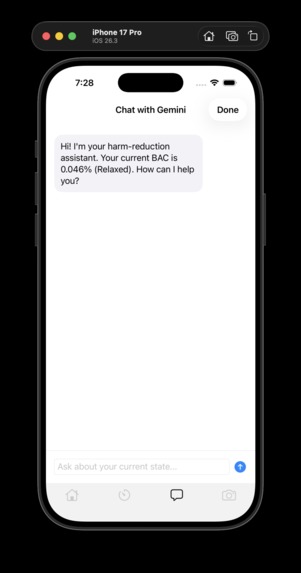

Alcohol consumption often impairs a person’s ability to accurately judge their own level of intoxication. This creates dangerous situations where individuals may unknowingly make unsafe decisions such as driving under the influence or putting themselves at risk. Our team wanted to explore whether modern wearable technology and AI could help provide an objective estimate of intoxication level.

The inspiration came from observing how often people rely on subjective judgment when drinking. People frequently say things like “I feel fine” or “I’m not that drunk,” even when their coordination or speech suggests otherwise. We wanted to build a system that could analyze real-world signals such as speech, motion, and biometrics to give users a better understanding of how impaired they may actually be.

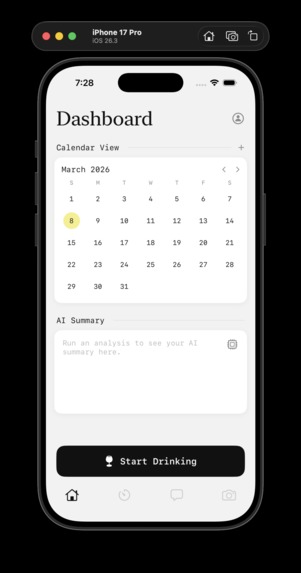

Additionally, we recognized that alcohol misuse and addiction are major public health challenges. By allowing users to track their intoxication patterns over time, our project could also serve as a tool for awareness and behavioral change.

What It Does

Our application estimates a user’s level of intoxication using multiple data signals collected from both a smartphone and a wearable device. These signals include:

Speech analysis to detect potential slurring

Motion data to identify instability or loss of coordination

Heart rate data from an Apple Watch

Environmental context such as ambient noise levels

These inputs are combined to produce an intoxication score displayed as a simple color-based indicator that communicates the user's state:

🟢 Sober

🟡 Mildly impaired

🟠 Intoxicated

🔴 Highly impaired

This allows users and their friends to quickly understand whether someone may need to slow down, call a ride, or be monitored for safety.
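The mapping from score to indicator can be sketched as follows. This is a minimal illustration rather than the app's actual code: the `ImpairmentLevel` type, the 0 to 1 score range, and the evenly spaced thresholds are all assumptions, since the real cutoffs are not stated here.

```swift
// Hypothetical mapping from a normalized score to the four indicator levels.
// The 0.25 / 0.50 / 0.75 cutoffs are placeholders, not the project's real values.
enum ImpairmentLevel: String {
    case sober = "🟢 Sober"
    case mildlyImpaired = "🟡 Mildly impaired"
    case intoxicated = "🟠 Intoxicated"
    case highlyImpaired = "🔴 Highly impaired"

    /// Maps a score (assumed to lie in 0...1) to a level.
    /// Scores outside that range are clamped first.
    init(score: Double) {
        let clamped = min(max(score, 0.0), 1.0)
        switch clamped {
        case ..<0.25: self = .sober
        case ..<0.50: self = .mildlyImpaired
        case ..<0.75: self = .intoxicated
        default: self = .highlyImpaired
        }
    }
}

// Example: show the label for a mid-range score.
print(ImpairmentLevel(score: 0.6).rawValue)
```

Keeping the thresholds in one initializer means they can be tuned in a single place once real calibration data is available.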

How We Built It

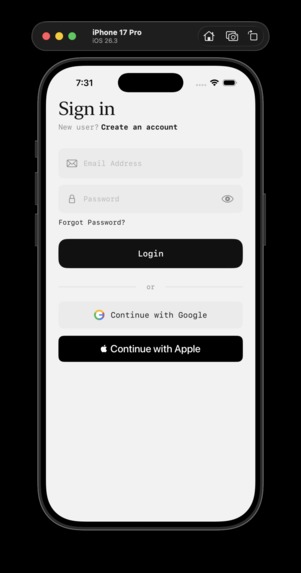

The project was built as an iOS application using Xcode and Swift.

The main technical components include:

Speech Analysis: We implemented a voice recording system that captures short audio samples and analyzes them for potential slurring using AI-based speech processing through ElevenLabs.

Wearable Sensor Data: Motion and heart rate data are collected from the Apple Watch, which provides continuous biometric and motion sensing capabilities.

Backend Processing: To process audio securely and connect to AI services, we deployed a backend server using Vultr. The server handles requests from the app and processes speech data before returning analysis results.

Scoring System: Each signal contributes to a weighted intoxication score.

This multi-signal approach is intended to produce a more reliable estimate than one based on a single indicator.
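One way to picture the weighted score is as a weighted average of normalized signals. The sketch below assumes each signal has already been reduced to a value between 0 and 1; the `SignalReading` type, the `intoxicationScore` function, and the example weights are hypothetical and are not taken from the app.

```swift
/// One normalized signal reading (hypothetical type for illustration).
struct SignalReading {
    let name: String
    let value: Double   // assumed normalized to 0...1, where 0 means no sign of impairment
    let weight: Double  // relative importance; the values used below are placeholders
}

/// Weighted average of the supplied readings.
/// Returns nil when the total weight is not positive (for example, no readings).
func intoxicationScore(_ readings: [SignalReading]) -> Double? {
    let totalWeight = readings.reduce(0.0) { $0 + $1.weight }
    guard totalWeight > 0 else { return nil }
    let weightedSum = readings.reduce(0.0) { $0 + $1.value * $1.weight }
    return weightedSum / totalWeight
}

// Example readings with made-up values and weights.
let exampleReadings = [
    SignalReading(name: "speech", value: 0.8, weight: 0.5),
    SignalReading(name: "motion", value: 0.6, weight: 0.3),
    SignalReading(name: "heartRate", value: 0.5, weight: 0.2),
]

if let score = intoxicationScore(exampleReadings) {
    print("Estimated score:", score)
}
```

Dividing by the total weight keeps the result in the 0 to 1 range even when a signal is missing, so the remaining signals are effectively renormalized.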

Challenges We Faced

One of the biggest challenges was working with multiple data sources simultaneously. Combining wearable sensor data, speech analysis, and environmental information required careful design to ensure the system remained responsive and accurate.

Another challenge was integrating external APIs and backend infrastructure. Setting up a server and securely connecting the mobile app to AI services required debugging networking issues and ensuring that audio data could be transmitted correctly.

Finally, ensuring that speech analysis worked reliably in different environments was difficult. Background noise, microphone quality, and recording conditions can all affect voice detection. To address this, we incorporated environmental noise measurements from the Apple Watch to help adjust the confidence of the speech analysis.
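A noise-based confidence adjustment of this kind could look roughly like the sketch below. It assumes the ambient noise level is available as a decibel value and that confidence falls off linearly between a quiet and a loud calibration point; the function name `speechConfidence` and the 40 dB and 85 dB defaults are illustrative assumptions, not the values used in the app.

```swift
/// Returns a confidence in 0...1 for the speech analysis, given ambient noise in decibels.
/// Confidence is 1 at or below `quietDB`, 0 at or above `loudDB`, and linear in between.
/// Both calibration points are hypothetical defaults.
func speechConfidence(ambientNoiseDB: Double,
                      quietDB: Double = 40.0,
                      loudDB: Double = 85.0) -> Double {
    precondition(loudDB > quietDB, "loudDB must be greater than quietDB")
    let fraction = (ambientNoiseDB - quietDB) / (loudDB - quietDB)
    // Clamp so that very quiet or very loud rooms stay within 0...1.
    return 1.0 - min(max(fraction, 0.0), 1.0)
}

// Example: scale a placeholder speech weight by the confidence in a noisy room.
let baseSpeechWeight = 0.5
let effectiveSpeechWeight = baseSpeechWeight * speechConfidence(ambientNoiseDB: 70.0)
print("Effective speech weight:", effectiveSpeechWeight)
```

Scaling the speech signal's weight by this confidence lets the other signals carry more of the estimate when the recording conditions are poor.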

What We Learned

Through this project, we learned how to integrate AI services, wearable sensors, and mobile development into a single application.

We gained experience in:

Building iOS applications using Swift

Working with wearable devices and biometric data

Integrating third-party APIs

Deploying backend infrastructure

Designing multi-signal decision systems

Most importantly, we learned how modern consumer devices can be combined with AI to create tools that enhance personal safety and awareness.

Built With

- avfoundation

- elevenlabs

- swift

- vultr

- xcode
