Inspiration

It started with a wardrobe full of clothes and nothing to wear. The problem wasn't a lack of clothing — it was a lack of connective tissue. Individual pieces that refused to become outfits. And the solution, every time, was the same: open a shopping app and buy something new.

The environmental cost of that reflex is staggering. The fashion industry causes 8–10% of all global greenhouse gas emissions — more than aviation and maritime shipping combined. It is the second-largest industrial consumer of water, and 20% of global industrial water pollution comes from textile dyeing alone. 85% of all textiles end up in landfill or incineration.

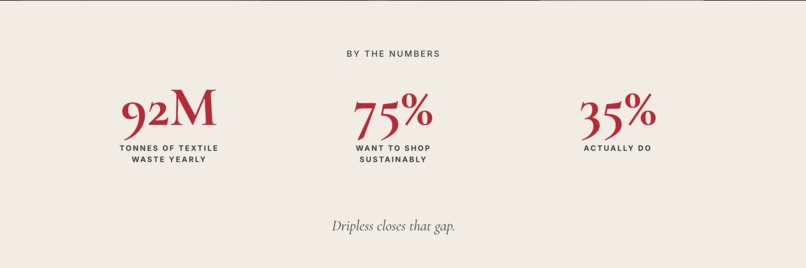

The data suggests people do care. Research shows a 40% gap between wanting to shop sustainably and actually doing it. That gap isn't a values problem. It's a friction problem. Dripless is built to close it.

What It Does

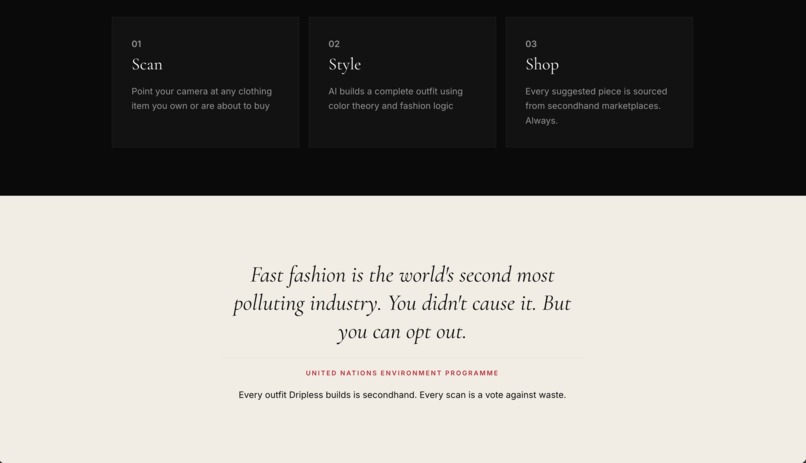

Dripless is an AI-powered styling tool that helps you build complete outfits around clothing you already own — or are considering buying. Every suggested piece is sourced from secondhand and sustainable alternatives.

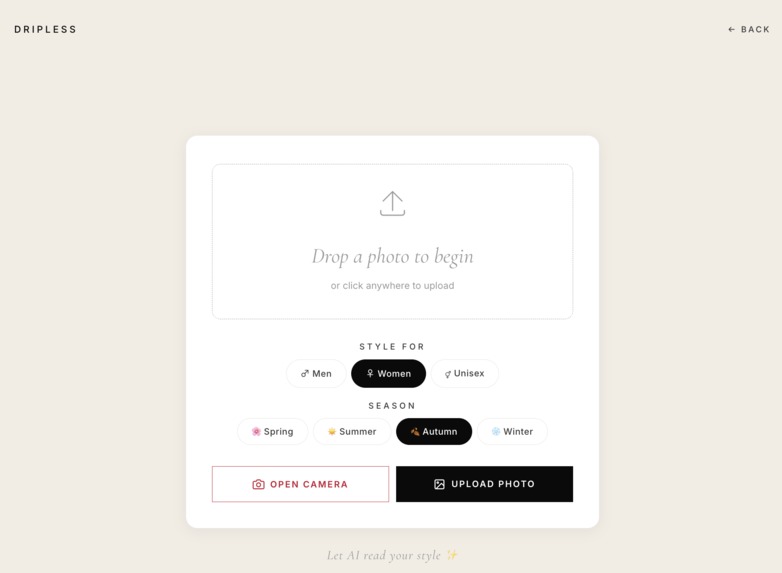

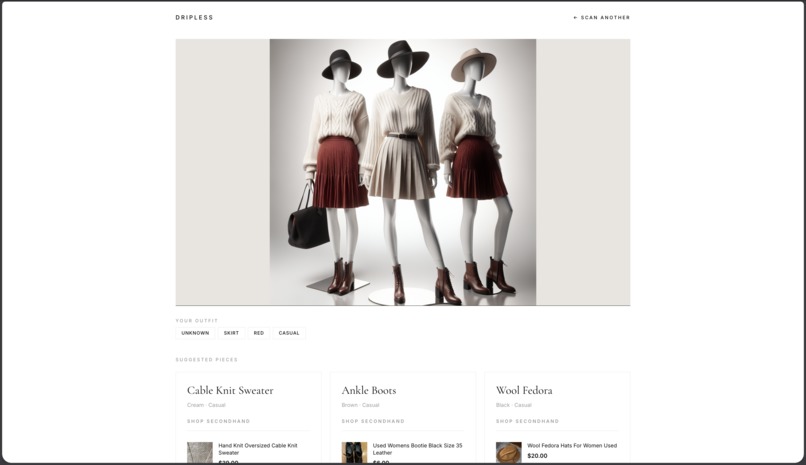

The flow is simple: point your phone camera at any clothing item. Dripless identifies it, suggests three complementary pieces to complete the outfit, finds real purchasable secondhand alternatives for each piece, and generates a visual of the complete styled look — all in under 30 seconds.

How We Built It

Dripless is a React single-page application styled with CSS, developed entirely inside the Antigravity IDE. Under the hood, it runs a four-stage AI pipeline where each tool has a single, well-defined responsibility — the output of each stage becoming the structured input to the next.

Stage 1 — Item Identification: GPT-4o Vision

When a user photographs a clothing item, the image is sent to GPT-4o via the OpenAI API with vision capabilities enabled. GPT-4o Vision analyses the image and returns a structured item description (garment type, color, fabric cues, and style category) precise enough to drive both the recommendation and search stages downstream.
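As a rough illustration of this stage, the request body for such a call might be assembled as below. This is a minimal sketch, not the project's actual code: the helper name `buildIdentifyRequest`, the prompt wording, and the JSON key names are all assumptions made for the example. Only the model name and the four descriptor fields come from the description above.

```javascript
// Hypothetical helper: builds the JSON body for a Chat Completions request
// that asks GPT-4o to describe a photographed garment.
// The key names requested in the prompt are assumptions for this sketch.
function buildIdentifyRequest(imageBase64, mimeType = "image/jpeg") {
  return {
    model: "gpt-4o",
    // Ask for a JSON object so the reply can be parsed without scraping prose.
    response_format: { type: "json_object" },
    messages: [
      {
        role: "user",
        content: [
          {
            type: "text",
            text:
              "Identify the clothing item in this photo. Reply as JSON with " +
              "the keys garmentType, color, fabric, and styleCategory.",
          },
          {
            // The photo is passed inline as a base64 data URL.
            type: "image_url",
            image_url: { url: `data:${mimeType};base64,${imageBase64}` },
          },
        ],
      },
    ],
  };
}
```

The returned object would be sent as the body of the API call. Keeping the payload construction in a pure function makes it easy to inspect without spending API credits.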

Stage 2 — Outfit Recommendations: GPT-4o

The item descriptor is passed back into GPT-4o as a text prompt, alongside the user's gender preference and current season. The model returns three complementary pieces needed to complete the outfit. We engineered the prompt to treat the output as an explicit schema, requiring item type, color palette, and style descriptor for each piece, so the response could feed the next two stages without additional parsing or transformation.
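Treating the model output as a schema also means it can be checked before it is handed on. The sketch below shows one way such a check could look. The wrapper key `pieces` and the field names `itemType`, `colorPalette`, and `styleDescriptor` are assumptions chosen to mirror the three required attributes, not the project's real schema.

```javascript
// Field names are hypothetical; they mirror the three attributes named above.
const REQUIRED_FIELDS = ["itemType", "colorPalette", "styleDescriptor"];

// Parses the model's reply and throws if it does not match the expected shape:
// an object with a "pieces" array of exactly three fully described items.
function parseRecommendations(rawJson) {
  const parsed = JSON.parse(rawJson);
  const pieces = parsed && parsed.pieces;
  if (!Array.isArray(pieces) || pieces.length !== 3) {
    throw new Error("Expected exactly three recommended pieces");
  }
  for (const piece of pieces) {
    if (piece === null || typeof piece !== "object") {
      throw new Error("Each piece must be an object");
    }
    for (const field of REQUIRED_FIELDS) {
      if (typeof piece[field] !== "string" || piece[field].trim() === "") {
        throw new Error(`Piece is missing required field: ${field}`);
      }
    }
  }
  return pieces;
}
```

Failing loudly at this boundary keeps a malformed reply from turning into an empty search or a nonsensical image prompt further down the pipeline.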

Stage 3 — Secondhand Sourcing: SearchAPI

Each recommended piece is passed to SearchAPI, which runs a filtered Google Shopping query targeting pre-owned listings. Query strings are constructed programmatically from GPT-4o's structured output, with secondhand filters applied consistently. SearchAPI returns product name, price, source platform, and a purchase link for each item, which the UI surfaces directly.
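The query construction could be sketched roughly as follows. Several details here are assumptions rather than facts from the project: the endpoint URL and the `api_key` parameter should be checked against SearchAPI's documentation, the piece field names are hypothetical, and expressing the secondhand filter as a "pre-owned" keyword prefix is only one plausible approach. The `google_shopping` engine name is the one mentioned in this writeup.

```javascript
// Assumed endpoint; confirm against the SearchAPI documentation.
const SEARCH_ENDPOINT = "https://www.searchapi.io/api/v1/search";

// Builds the search phrase for one recommended piece. Prefixing "pre-owned"
// is an assumption about how the secondhand filter could be expressed.
function buildSecondhandQuery(piece) {
  return ["pre-owned", piece.colorPalette, piece.styleDescriptor, piece.itemType]
    .filter(Boolean) // skip any attribute the model left empty
    .join(" ");
}

// Builds the full request URL for one piece.
function buildSearchUrl(piece, apiKey) {
  const params = new URLSearchParams({
    engine: "google_shopping",
    q: buildSecondhandQuery(piece),
    api_key: apiKey, // assumed parameter name
  });
  return `${SEARCH_ENDPOINT}?${params.toString()}`;
}
```

Because every query passes through the same builder, the secondhand terms cannot be forgotten for one piece and applied to another.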

Stage 4 — Outfit Visualisation: DALL-E

While the SearchAPI calls are in flight, the pieces recommended in Stage 2 are compiled into a single image generation prompt and sent to DALL-E. Crucially, the visualisation is anchored to the recommended items rather than generated independently, so what the user sees reflects the outfit that was actually built. The prompt specifies invisible mannequin, pure white background, studio lighting, and a full body shot to produce clean, editorial-style output consistently. To prevent DALL-E's temporary image URLs from expiring before the frontend can render them, the server immediately fetches the raw image bytes via axios with responseType: 'arraybuffer', converts them to base64, and returns a data:image/png;base64,... string directly in the JSON response.
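A prompt assembled this way might look like the sketch below. The function name, the sentence template, and the piece field names are assumptions for illustration. The styling phrases are the ones listed in this writeup.

```javascript
// Fixed styling phrases appended to every prompt so output stays consistent.
const STYLE_CONSTRAINTS = [
  "invisible mannequin",
  "pure white background",
  "no face, no hands",
  "studio lighting",
  "full body shot",
];

// Hypothetical helper: combines the scanned item with the outfit pieces
// into one image generation prompt.
function buildOutfitPrompt(baseItem, pieces) {
  const described = pieces.map((p) => `${p.colorPalette} ${p.itemType}`);
  const outfit = [baseItem, ...described].join(", ");
  return `A complete outfit consisting of: ${outfit}. ${STYLE_CONSTRAINTS.join(", ")}.`;
}
```

Keeping the constraints in one constant means a prompt tweak is a single edit, and every generated image shares the same framing.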

The SearchAPI and DALL-E calls run concurrently via Promise.all, keeping end-to-end latency under 30 seconds. Camera input is handled via the HTML capture="environment" attribute on a file input, which requests the rear-facing camera on mobile, the primary demo device.
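The concurrency pattern can be sketched as below. The names `buildLook`, `searchFn`, and `imageFn` are hypothetical. The two functions are injected so the example runs without network access, and in a real server they would wrap the SearchAPI and DALL-E requests.

```javascript
// Starts one search per piece and the image generation at the same time,
// then waits for all of them. searchFn and imageFn are injected stand-ins
// for the real network calls.
async function buildLook(pieces, prompt, { searchFn, imageFn }) {
  const [products, image] = await Promise.all([
    // Inner Promise.all: the per-piece searches also run concurrently.
    Promise.all(pieces.map((piece) => searchFn(piece))),
    imageFn(prompt),
  ]);
  return { products, image };
}
```

Note that Promise.all rejects as soon as any call fails. If a failed image should not discard successful search results, Promise.allSettled is one alternative worth considering.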

Challenges We Ran Into

Finding a secondhand API that actually worked.

Our original sourcing plan fell apart in stages. Depop had no public API, which we found out mid-planning, after already pitching it as our primary source. ThredUp had an API, but it couldn't search by item similarity, which was the entire feature we needed. eBay Browse API ended up being the right call: free, well-documented, and with far more inventory than either platform. Sometimes the obvious choice isn't the first one you reach for.

Keeping DALL-E grounded in the actual outfit.

Early versions of the outfit prompt produced images that had nothing to do with the items we'd recommended: random clothing, inconsistent styling, no relationship to the search results. We had to rethink the approach entirely. Rather than prompting DALL-E independently, we anchored image generation directly to the items in the recommended outfit. We also had to refine the prompt itself, because early outputs included visible mannequin faces and hands, cluttered backgrounds, and inconsistent lighting. The fix was explicit: "invisible mannequin," "pure white background," "no face, no hands," "studio lighting," "full body shot." Prompt engineering for image generation turned out to be its own discipline.

DALL-E image URLs expiring before the frontend could render them.

DALL-E 3 returns a temporary URL hosted on OpenAI's servers that is only valid for a limited time. When the frontend tried to render the image directly, it would sometimes fail silently, with no obvious error. The fix was to intercept the image on the server immediately after generation: fetching the raw bytes via axios with responseType: 'arraybuffer', converting to base64, and returning a data:image/png;base64,... string directly in the JSON response. The frontend renders it as a static string with no expiry risk.
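The conversion step itself is small. Here is a minimal sketch, where the helper name `toPngDataUri` is an assumption and the bytes are presumed to be PNG data:

```javascript
// Converts raw image bytes (an ArrayBuffer, Uint8Array, or Node Buffer)
// into a PNG data URI that can be placed straight into an <img> src.
function toPngDataUri(bytes) {
  const base64 = Buffer.from(bytes).toString("base64");
  return `data:image/png;base64,${base64}`;
}
```

On the server, the input would be the `data` field of the response from `axios.get(imageUrl, { responseType: "arraybuffer" })`. An alternative worth evaluating is asking the Images API for base64 output directly via `response_format: "b64_json"`, which would avoid the second fetch. Whether that fits this pipeline is untested here.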

SearchAPI's monthly quota running out mid-development.

All 100 free searches burned through during testing, causing product lookups to silently return empty arrays. There was no error — just "products": [] on every request, which initially looked like a code problem. Silent failures are the hardest kind to debug. We also discovered that SearchAPI's google_shopping engine returns links to Google Shopping pages rather than direct product listings — functional for the demo, and something to route around in a future build.

A teammate's branch deleted the entire frontend.

One team member's "Fix camera functionality" commit accidentally deleted App.jsx, index.html, package.json, and every other frontend file. For a team using GitHub for the first time, this was a significant moment. We skipped the merge entirely, applied the one-line capture="environment" fix directly to main, and started keeping local backups of every working version from that point forward. Version control is only as safe as the people using it.

The video file breaking Vite's build pipeline.

The 45MB hero video caused Vite to fail during build when stored in /src/assets. Moving it to /public, where Vite serves files statically without passing them through the bundler, fixed the build. A stale src path in the component then meant the video still didn't display, which we resolved by pointing the component at /fashion-video.mp4. Two separate bugs, one file move.

Port 5000 quietly blocked by macOS AirPlay.

The backend server wouldn't start on a Mac, with no clear error. The culprit was AirPlay Receiver, which silently occupies port 5000 on modern macOS versions. Switching the backend to port 3001 resolved it instantly, but it took longer than it should have to find, because nothing in the error output pointed to AirPlay.

Scroll animations that never triggered.

IntersectionObserver was attaching before the DOM had finished loading, so elements never registered as entering the viewport. Fixed with a double requestAnimationFrame to defer observer attachment — a small change with an outsized effect on the feel of the page.
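The deferral can be sketched as follows. The helper names, the `.reveal` selector, and the `is-visible` class are assumptions for illustration. The `raf` parameter exists only so the timing logic can be exercised outside a browser.

```javascript
// Runs callback after two animation frames, giving the browser a chance to
// lay out freshly rendered elements first. raf defaults to the browser's
// requestAnimationFrame and can be swapped for a fake in tests.
function afterTwoFrames(callback, raf = (cb) => requestAnimationFrame(cb)) {
  raf(() => raf(callback));
}

// Browser-only usage: attach the observer once layout has settled.
// The ".reveal" selector and "is-visible" class are hypothetical.
function observeReveals(root = document) {
  afterTwoFrames(() => {
    const observer = new IntersectionObserver((entries) => {
      for (const entry of entries) {
        if (entry.isIntersecting) {
          entry.target.classList.add("is-visible");
          observer.unobserve(entry.target); // animate each element only once
        }
      }
    });
    root.querySelectorAll(".reveal").forEach((el) => observer.observe(el));
  });
}
```

In a React component, `observeReveals()` would typically be called from an effect that runs after mount.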

Accomplishments We're Proud Of

We're a team of three to four relatively early-stage developers. No one on this team had built a multi-model AI pipeline before. That context matters for what follows.

The pipeline is what we're proudest of. Chaining GPT-4o Vision, GPT-4o, SearchAPI, and DALL-E into a single coherent flow — where each stage hands structured data to the next — required deliberate design at every boundary. The result is a system that goes from a phone camera photo to a fully styled outfit with real purchasable secondhand links and a generated visual, consistently, in under 30 seconds.

We're proud of the URL generation system. Rather than returning generic search pages, every query is filtered toward pre-owned listings, making the sustainable option the path of least resistance, not a detour.

We're proud of the personalisation layer. Gender and season inputs propagate through GPT-4o's recommendations and into the DALL-E prompt, so results feel considered rather than generic.

We're proud of the base64 image pipeline. Solving the DALL-E URL expiry problem server-side — rather than papering over it on the frontend — meant the visualisation works reliably every time, not just most of the time.

And we're proud of the UI. It stays brief and uncluttered throughout — no onboarding friction, no visual noise. The product speaks for itself.

Most of all: we hit real technical walls, and we worked through every one of them. Dripless isn't a demo that works under controlled conditions. It's a product that works.

What We Learned

The hardest problems weren't the AI integrations — they were the edges. A video losing its source reference after a file move. An observer firing before the DOM existed. A server port blocked by a system process that had nothing to do with our code. A teammate's branch silently deleting the entire frontend. The pattern was consistent: at the boundaries between tools, between stages, between teammates, assumptions break. The discipline we built was validating everything at every handoff.

We learned that separating concerns across models is more robust than asking one model to do everything. Each tool in the pipeline — GPT-4o Vision, GPT-4o, SearchAPI, DALL-E — does one thing and does it well. A failure in any stage is isolated and debuggable, rather than tangled and opaque.

Prompt design turned out to be real systems engineering. The GPT-4o output needed to simultaneously satisfy two downstream consumers — a search query and an image generation prompt — with different format requirements. That constraint forced us to treat the model's output as an interface contract, not just a response. That shift in framing made the entire pipeline more reliable and easier to iterate on.

And the most transferable lesson: working through problems methodically — actually understanding each bug rather than patching around it — compounds. Each issue we fully understood made the next one faster to solve. As a team of early-stage developers, that was probably the most valuable thing we built.

What's Next for Dripless

Size-aware sourcing. SearchAPI currently returns secondhand results without size filtering. The next step is capturing size at the start of the flow and passing it as a hard constraint into every query — so every suggested alternative is something the user can actually wear, not just find.

Wardrobe memory. A persistent wardrobe model would let Dripless understand which pieces a user already owns, identify genuine gaps, and suggest only the items that expand outfit coverage most efficiently. This addresses the original problem at a systemic level: not just "what do I wear today," but "what does my wardrobe actually need."

Sustainability scoring. A per-item score based on resale platform, estimated shipping distance, and fabric composition — giving users a concrete environmental comparison between buying new and buying secondhand. The goal isn't to add friction back in. It's to make the better choice visible.

Direct platform integrations. Moving beyond SearchAPI's Google Shopping layer to direct integrations with Depop, Vinted, and ThredUp would surface richer product metadata — condition ratings, seller reputation, exact measurements — and enable true cross-platform search rather than web scraping.

Seller tooling. The same pipeline that helps buyers find complete outfits can help secondhand sellers show how a single listed item fits into multiple looks — increasing discoverability and making it easier for sustainable inventory to move.

The problem Dripless is solving — the gap between sustainable intent and sustainable action — is real and measurable. The 40% is not a ceiling. It's a starting point.

Built With

- docker

- ginwebframework

- golang

- gorm

- javascript

- mysql

- openaiapi

- searchapi
