Inspiration

Our inspiration started with a simple observation: our teammate Jayden had significantly lower screen time than the rest of us because he kept his phone in grayscale. We realized this was a manual defense against a massive, systemic issue, and we wanted to scale it into a system that actively fights back.

The Problem

Social media algorithms rely on hostile architecture. They are predatory by design, optimizing for outrage, variable rewards, and hyper-stimulation to maximize screen time at the expense of mental health. Users currently have zero agency. They are trapped in passive consumption loops, making it nearly impossible to escape brain-rot or echo chambers once the algorithm categorizes them.

The Legal Reality

Recent lawsuits prove these platforms intentionally deploy these dark patterns as defective, dangerous products. Just days ago, on March 25, the K.G.M. v. Meta & YouTube trial in California resulted in a landmark $6 million verdict. The jury explicitly found the platforms liable for being "addictive by design," pointing directly to features like infinite scroll and dopamine loops. Current digital wellness apps are just simple timers that completely fail to address this underlying algorithmic manipulation. This verdict highlights the urgent need to use software to directly fight a software problem.

The Solution

FixMyFeed is a client-side algorithmic bodyguard that flips the power dynamic. It treats the host platform's recommendation engine as an adversarial network that must be aggressively tamed. Running as a browser extension, it intercepts the DOM in real time and sends each incoming video's text to a backend NLP pipeline, which scores the video before the user even registers it.

It actively weaponizes the platform's own retention metrics to forcefully rewrite the user's feed:

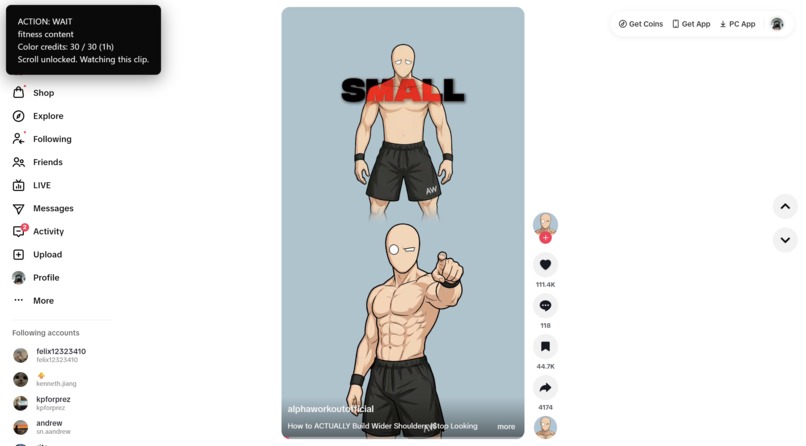

- Zero-Retention Punisher: When the backend scores a video as toxic, rage-bait, or off-topic (SKIP), the extension instantly blocks the UI and triggers a forced programmatic scroll. This starves the host platform, feeding it a "0-second retention" metric that actively penalizes and down-ranks that content type.

- Algorithmic Lock-In: When the scanner detects high-value, user-approved content (LIKE_AND_STAY), it forces a high completion rate (and optionally auto-likes). This explicitly trains the host's backend to serve more of that exact niche.

- ToS-Compliant Execution: By operating purely through client-side DOM manipulation and local event listeners, it acts as an accessibility layer rather than a bot network, keeping the user's account completely safe from bans while completely disrupting the platform's data collection.

What it does

FixMyFeed is a behavior-aware social media filter that intercepts content on TikTok and Instagram Reels. A Chrome extension evaluates video text in real time and assigns one of three actions: SKIP, WAIT, or LIKE_AND_STAY. If a video is marked to skip, the extension blocks the screen and automatically scrolls past it.
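As a rough illustration of how the three actions might translate into extension behavior, here is a minimal Python sketch. The `Plan` fields and the `plan_for` helper are hypothetical names invented for this example, and treating WAIT as "no intervention" is an assumption, since the exact semantics are not spelled out here.

```python
from dataclasses import dataclass
from enum import Enum


class Action(str, Enum):
    SKIP = "SKIP"
    WAIT = "WAIT"
    LIKE_AND_STAY = "LIKE_AND_STAY"


@dataclass(frozen=True)
class Plan:
    """Hypothetical description of what the extension should do next."""
    block_screen: bool    # cover the video with the blocker UI
    auto_scroll: bool     # programmatically scroll to the next video
    hold_until_end: bool  # keep the video on screen until it finishes
    auto_like: bool       # optionally like the video


def plan_for(action: Action, auto_like_enabled: bool = False) -> Plan:
    if action is Action.SKIP:
        return Plan(block_screen=True, auto_scroll=True,
                    hold_until_end=False, auto_like=False)
    if action is Action.LIKE_AND_STAY:
        return Plan(block_screen=False, auto_scroll=False,
                    hold_until_end=True, auto_like=auto_like_enabled)
    # WAIT (assumed): leave the video alone and let the user decide.
    return Plan(block_screen=False, auto_scroll=False,
                hold_until_end=False, auto_like=False)
```

Keeping the mapping in one pure function makes it easy to audit exactly which side effects each label can trigger.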

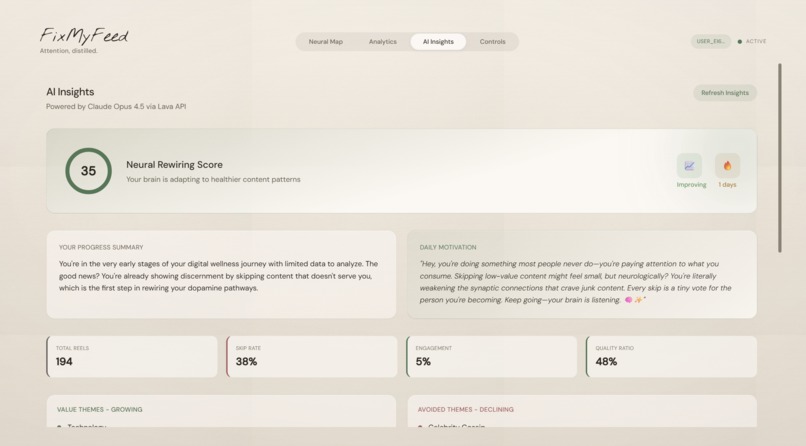

We treat color as a limited budget. Users consume credits as they watch content. As credits deplete, the page gradually shifts into grayscale to add visual friction. A dashboard logs these watch events, creating charts and coaching insights to help users track their habits.
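The color budget can be pictured as a simple mapping from remaining credits to a grayscale amount. The sketch below assumes a linear fade and hypothetical function names; the actual curve and credit accounting in the extension may differ. The output string uses the standard CSS `grayscale()` filter function.

```python
def grayscale_percent(credits_left: float, credits_total: float) -> int:
    """Return 0 (full color) to 100 (full grayscale), assuming a linear fade."""
    if credits_total <= 0:
        raise ValueError("credits_total must be positive")
    # Clamp so overspending or bonus credits never leave the 0..1 range.
    remaining = min(max(credits_left / credits_total, 0.0), 1.0)
    return round(100 * (1.0 - remaining))


def css_filter(credits_left: float, credits_total: float) -> str:
    """Build the value for a CSS `filter` property on the page root."""
    return f"grayscale({grayscale_percent(credits_left, credits_total)}%)"
```

For example, with 25 of 100 credits left, the page would be rendered at 75% grayscale under this linear assumption.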

How we built it

The project is split into an extension, a backend, and a dashboard.

- Chrome Extension: Built with content scripts that read visible text and handle the blocker UI using an IntersectionObserver. A background worker manages API calls and tracks the color credit state.

- Backend API: Written in Python using FastAPI. We set up a three-agent pipeline using Lava-hosted endpoints. The Gatekeeper agent makes fast action decisions, the Deep Analyzer runs background evaluations, and the Insight Generator creates coaching data.

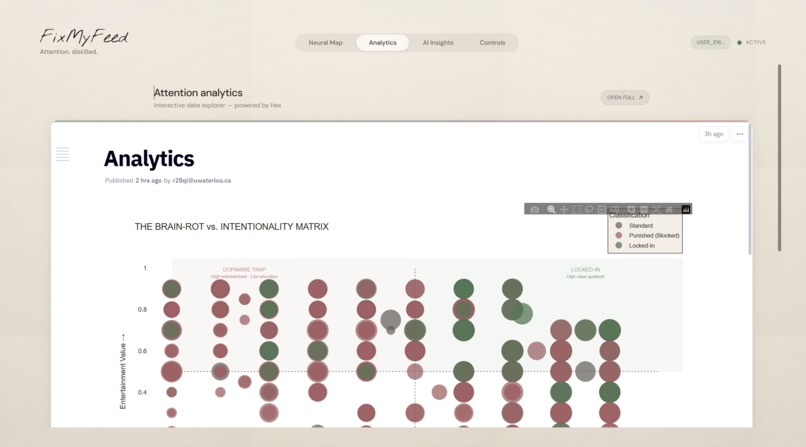

- Data and Dashboard: We used Hex as our primary collaborative data science platform to investigate neural dopamine trigger patterns and iterate on our unsupervised clustering logic. By connecting our Supabase instance directly to Hex, we could rapidly prototype our 3D visualization design tokens and behavioral research before implementing them in the React dashboard.
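The three-agent flow described in the Backend API bullet above can be sketched with plain `asyncio`. This is only an outline under stated assumptions: the three agent functions are stubs standing in for the Lava-hosted model calls (no real Lava API is shown), and `classify`, `run_background`, `log`, and `pending` are hypothetical names.

```python
import asyncio


async def gatekeeper(text: str) -> str:
    """Stub for the fast model call; returns one strict action label."""
    return "SKIP" if "rage" in text.lower() else "WAIT"


async def deep_analyzer(text: str) -> dict:
    """Stub for the slower background evaluation."""
    await asyncio.sleep(0)  # stands in for network latency
    return {"chars": len(text)}


async def insight_generator(text: str) -> str:
    """Stub for the coaching-insight model."""
    await asyncio.sleep(0)
    return f"coaching note for {len(text)} chars"


async def run_background(text: str, log: list) -> None:
    # The two slow agents run concurrently; results go to a log, not the caller.
    analysis, insight = await asyncio.gather(
        deep_analyzer(text), insight_generator(text)
    )
    log.append({"analysis": analysis, "insight": insight})


async def classify(text: str, log: list, pending: set) -> str:
    action = await gatekeeper(text)  # only this call delays the reply
    task = asyncio.create_task(run_background(text, log))
    pending.add(task)  # keep a reference so the task is not garbage-collected
    task.add_done_callback(pending.discard)
    return action
```

The point of the split is that the extension only waits on the Gatekeeper; the heavier agents finish later and write to storage. In a FastAPI app, `BackgroundTasks` would be another way to get a similar effect.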

How we used Lava

- The Nervous System: Utilized the Lava API as the core infrastructure for real-time content interception.

- Parallel Inference Pipeline: Orchestrated three distinct Large Language Models simultaneously to power our specialized agent architecture.

- Millisecond Triage: Used the Gatekeeper Agent (Llama 3.1 8B) to block toxic content before visual registration.

- Deep Cognitive Synthesis: Powered the Deep Analyzer Agent with GPT-4o and the Behavioral Insight Agent with Opus 4.5 to run parallel background threads that evaluate complex psychological patterns and generate coaching data.

- High-Volume Asynchronous Load: Relied on Lava's ultra-low latency to handle these three agents firing at 30-60 frames per second across thousands of DOM mutations without bottlenecking the browser.

How we used Hex

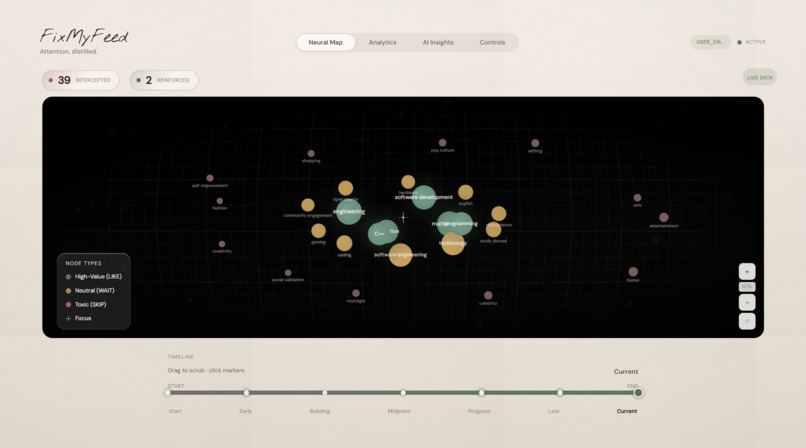

- Collaborative R&D Environment: Used Hex notebooks to engineer FixMyFeed’s "Cognitive Map."

- Unsupervised Clustering: Connected our live Supabase instance directly to Hex to perform K-Means Clustering on thousands of recorded dopamine trigger events.

- 3D Coordinate Mapping: Translated chaotic social media data into a structured, human-readable format.

- Visual Prototyping: Engineered the specific design tokens (tracking, glow fall-offs, and vector grids) that now power our React dashboard, seamlessly bridging the gap between raw JSON backend logs and the high-fidelity UI.
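For readers unfamiliar with K-Means, here is a small standard-library sketch of the underlying iteration (Lloyd's algorithm) on 3D points. It is not the notebook code: the naive "first k points" initialization and the sample data are assumptions made for the example. In practice a library implementation such as scikit-learn's `KMeans` would be the more likely choice.

```python
Point = tuple[float, float, float]


def _dist2(a: Point, b: Point) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _mean(points: list[Point]) -> Point:
    n = len(points)
    return tuple(sum(axis) / n for axis in zip(*points))


def kmeans(points: list[Point], k: int, max_iterations: int = 50):
    """Return (centroids, labels) for the given points."""
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    centroids = list(points[:k])  # naive deterministic initialization (assumption)
    labels: list[int] = []
    for _ in range(max_iterations):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: _dist2(p, centroids[j])) for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so the centroids are stable
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:  # keep the old centroid if a cluster is empty
                centroids[j] = _mean(members)
    return centroids, labels
```

Each resulting centroid is itself a 3D coordinate, which is the kind of value a dashboard could plot directly.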

Challenges we ran into

Handling Chrome's Manifest V3 background workers was difficult, especially dealing with wake-up races and extension context invalidation. We had to write custom retry logic around our messaging pipeline so the extension wouldn't crash. Making the auto-scroll fire reliably without getting stuck on blocked videos also took a lot of trial and error with pacing and retry attempts.
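The retry idea can be sketched independently of the browser. The extension itself is JavaScript, so the Python below only illustrates the pattern: retry a message send a bounded number of times with exponential backoff, and give up loudly on the last attempt. `TransientError`, `call_with_retry`, and the delay values are hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Stands in for failures like a service worker that is not awake yet."""


def call_with_retry(
    send: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return send()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the error to the caller
            sleep(base_delay * 2 ** attempt)  # back off: 1x, 2x, 4x, ...
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the helper testable without real waiting; the same bounded-attempt shape would apply to the auto-scroll retries.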

Accomplishments that we're proud of

We got a multi-agent pipeline executing fast enough to intercept and block real-time video feeds. Turning "grayscale as friction" into a functional, credit-based visual constraint required tight coordination between the backend event logs and the frontend DOM. Setting up the Lava endpoints to output strict action rules instead of ambiguous scores made the whole system much more reliable.

What we learned

We learned how to manage complex state transfers between Chrome content scripts and service workers. We also found that prompting models for strict classification actions (Skip/Wait/Stay) works far better for real-time application logic than asking for numerical sentiment scores.
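One reason strict labels are easier to work with is that the calling code can validate them exactly. A minimal sketch, assuming the model replies with a single label as plain text and that WAIT is the safe fallback (both are assumptions; `parse_action` is a hypothetical helper):

```python
ALLOWED_ACTIONS = ("SKIP", "WAIT", "LIKE_AND_STAY")


def parse_action(raw: str, fallback: str = "WAIT") -> str:
    """Normalize a model reply to one allowed label, or fall back."""
    # Trim whitespace plus stray quotes, backticks, and trailing periods.
    token = raw.strip(" \t\r\n\"'`.").upper()
    return token if token in ALLOWED_ACTIONS else fallback
```

Anything outside the allowed set, including a stray numerical score, collapses to the fallback instead of leaking ambiguity into the extension logic.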

What's next for FixMyFeed

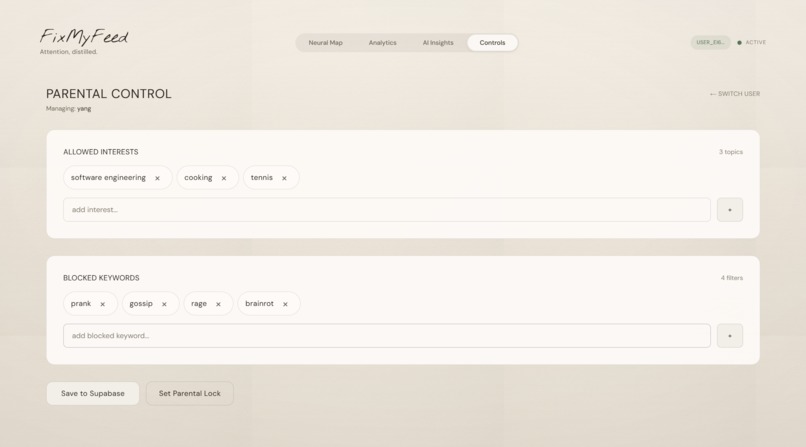

We plan to improve the classification policies for our Gatekeeper agent to handle edge cases and uncertain content better. We also want to expand beyond Instagram and TikTok, clean up the dashboard UI, and add stricter parental lock features.

Built With

- css

- fastapi

- hex

- html

- javascript

- lava

- node.js

- python

- react

- scikit-learn

- supabase

- tailwind

- typescript

- vercel

- vite
