Inspiration

Matt and Allen have both taken part in the build-in-public community on X while launching projects, and they found that a lot of user feedback arrives through X. Matt also talks to AI voice assistants frequently while driving, just to learn about new topics. So we decided to combine the two into a product that someone could use every day to work more effectively and learn more deeply about the users they serve. Aziz wanted to get information from X without going through a preset feed, and wanted it presented in an easy-to-digest way.

What it does

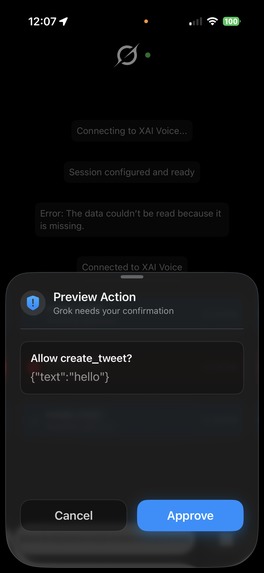

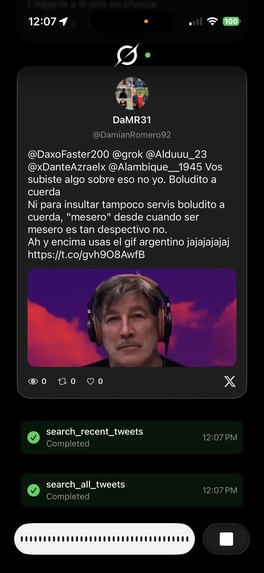

Grok Mode X-Ecutive Assistant lets you interact with Twitter using just your voice. It gives you a way to:

- Search through Twitter

- Search through people's specific posts

- Reply to people's posts

- Quote tweet their posts

- Make your own posts

- Make Linear tickets based on whatever you discover in the conversation

The primary use case of this assistant is to give product owners whose users primarily reside on a platform like X a real-time understanding of what those users are experiencing, along with a way to quickly gather context and jump right into the problem. This works even if you're on the go.

How we built it

We built Grok Mode X-Ecutive Assistant as an iOS app in SwiftUI, using Grok's real-time speech-to-speech voice API. On top of that, we built tools that use the Linear API and the X API so the assistant can carry out these functions by voice. Most of our code lives on the client side because we wanted to prototype quickly during the hackathon.
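As a rough illustration of how voice-triggered tools can be wired up on the client, here is a minimal sketch of a local tool registry. It is not our actual code: the `ToolCall` JSON shape, the tool names `search_posts` and `create_linear_ticket`, and the stub handlers are all assumptions made for the example, and a real handler would call the X or Linear API instead of returning a string.

```swift
import Foundation

// Hypothetical shape of a tool call emitted by the voice model.
struct ToolCall: Decodable {
    let name: String
    let arguments: [String: String]
}

enum ToolError: Error, Equatable {
    case unknownTool(String)
    case missingArgument(String)
}

// Maps tool names to local handlers.
struct ToolRegistry {
    typealias Handler = ([String: String]) throws -> String
    private var handlers: [String: Handler] = [:]

    init() {}

    mutating func register(_ name: String, handler: @escaping Handler) {
        handlers[name] = handler
    }

    // Decodes the JSON tool call and runs the matching handler.
    func dispatch(json: Data) throws -> String {
        let call = try JSONDecoder().decode(ToolCall.self, from: json)
        guard let handler = handlers[call.name] else {
            throw ToolError.unknownTool(call.name)
        }
        return try handler(call.arguments)
    }
}

// Stub handlers standing in for real network calls (assumed tool names).
func makeDemoRegistry() -> ToolRegistry {
    var registry = ToolRegistry()
    registry.register("search_posts") { args in
        guard let query = args["query"] else {
            throw ToolError.missingArgument("query")
        }
        return "searching X for: \(query)"
    }
    registry.register("create_linear_ticket") { args in
        guard let title = args["title"] else {
            throw ToolError.missingArgument("title")
        }
        return "created ticket: \(title)"
    }
    return registry
}
```

Keeping the registry on the device matches the prototype-first approach described above: adding a new voice capability is a matter of registering one more handler.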

Challenges we ran into

Sometimes our tool calls would inexplicably fail, and we ran into latency, buffering, and other issues with the WebSocket. We also tried to implement a custom voice, but we could not get it to work with speech-to-speech; it seemed to work only for text-to-speech.

We also had issues with echo on the speech-to-speech assistant on mobile, where it would start talking to itself. We were able to mitigate this. We also ran into some interesting issues around response cutoff: when you interrupted the assistant, its context would sometimes record that it had said more than it actually did. We found an undocumented trim parameter for the WebSocket that fixed the problem.
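To make the cutoff problem concrete, here is a small sketch of the bookkeeping involved: tracking how much assistant audio has been received versus actually played, so that on interruption the client knows where the spoken response really ended. This is an illustration under assumptions, not our implementation: the `PlaybackTracker` type is hypothetical, the 24 kHz 16-bit mono format is assumed, and the sketch deliberately does not show the trim parameter itself or how the value is sent over the WebSocket.

```swift
import Foundation

// Tracks how much assistant audio has actually reached the speaker.
struct PlaybackTracker {
    let sampleRate: Int       // samples per second (assumed 24 kHz)
    let bytesPerSample: Int   // 2 for 16-bit mono PCM (assumed)
    private(set) var receivedBytes = 0
    private(set) var playedBytes = 0

    init(sampleRate: Int = 24_000, bytesPerSample: Int = 2) {
        self.sampleRate = sampleRate
        self.bytesPerSample = bytesPerSample
    }

    // Call when an audio chunk arrives from the server.
    mutating func didReceive(chunkBytes: Int) {
        receivedBytes += chunkBytes
    }

    // Call when the audio engine reports bytes as played.
    // Clamped so played audio never exceeds what was received.
    mutating func didPlay(bytes: Int) {
        playedBytes = min(playedBytes + bytes, receivedBytes)
    }

    private var bytesPerSecond: Int { sampleRate * bytesPerSample }

    // Milliseconds of the response the user actually heard.
    var playedMilliseconds: Int {
        playedBytes * 1000 / bytesPerSecond
    }

    // Milliseconds that were buffered but never played.
    var unplayedMilliseconds: Int {
        (receivedBytes - playedBytes) * 1000 / bytesPerSecond
    }
}
```

When the user interrupts, `playedMilliseconds` is the point at which the assistant's turn should be cut, and everything counted in `unplayedMilliseconds` is audio the model generated but the user never heard.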

Accomplishments that we're proud of

We are very proud that we created a functional, full-stack SwiftUI app within the hackathon, with a very smooth UI and features that our team personally found very useful.

What we learned

We learned how to work with speech-to-speech voice agents on mobile, including the audio processing techniques needed to prevent echo and how to trim interrupted responses. We also learned how to connect a social media platform to tools that an agent can use, which none of us had ever done before.

What's next for Grok Mode: X-ecutive Assistant

We are going to be ready to be judged at 12:30.

Built With

- swiftui

- voice-agents
