Hydration is a key issue, especially at TreeHacks!

Here's a sample recording of a finger. If you look closely, you might be able to see the blood flow!

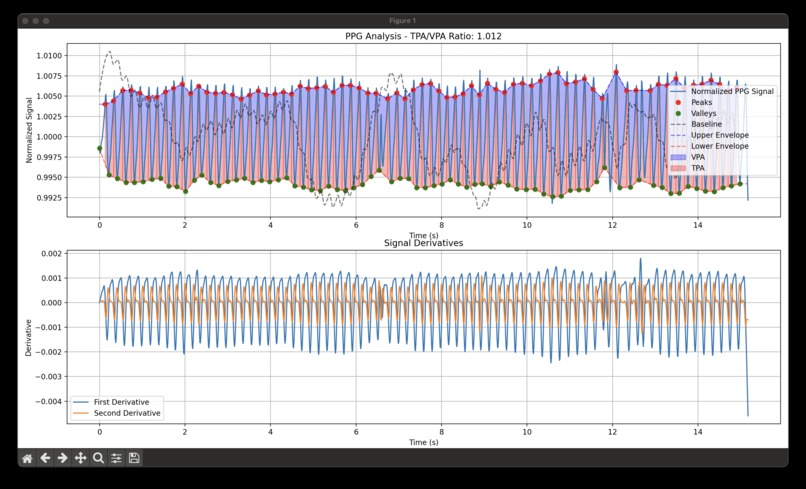

Our calculation method

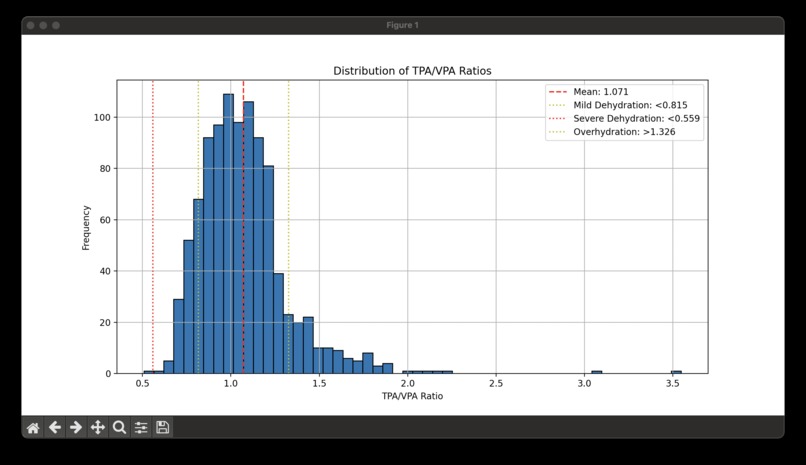

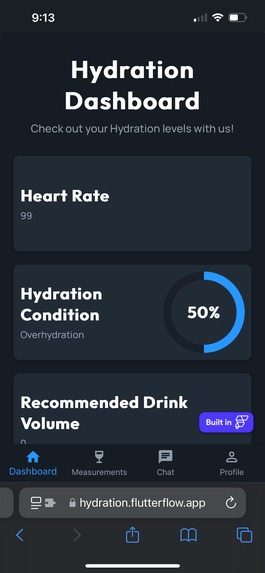

Our analysis of the Terra dataset's hydration levels

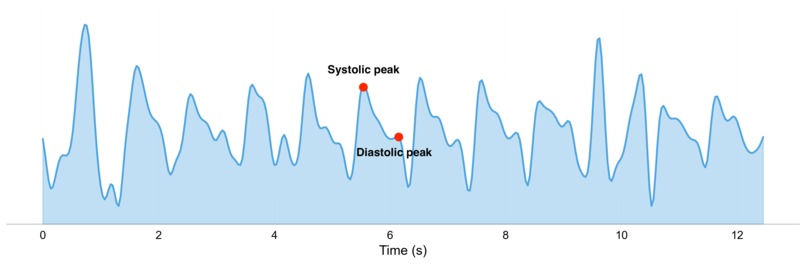

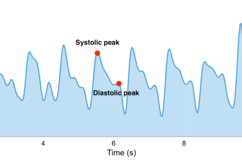

The PPG trace you can get from nothing more than your phone camera!

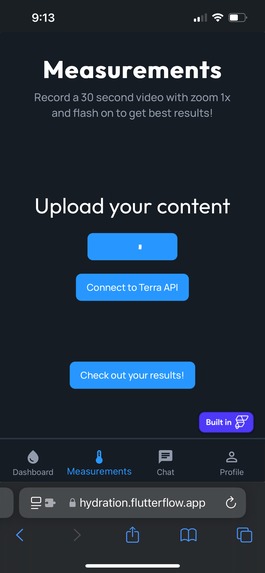

Upload your video

Get results!

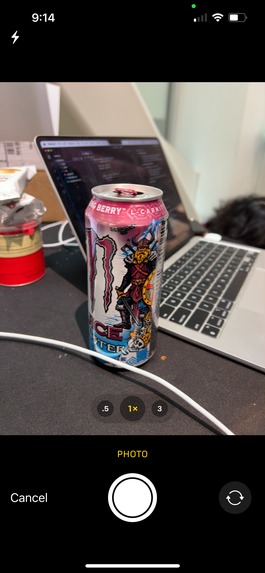

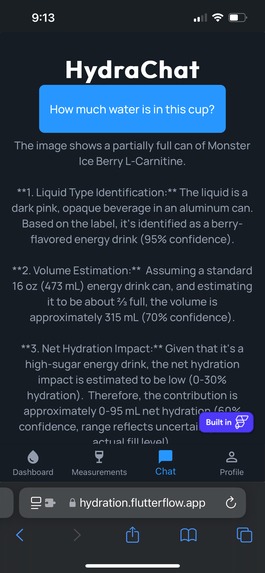

A Monster you might reach for to supplement your hydration levels...

And a response from our bot on whether it's worth it!

Inspiration

Our project lets users capture a video of their finger, extract a PPG signal from it, analyze their hydration level, and get notified if they need to drink more water. Dehydration is a widespread problem, with studies showing that 75% of people are chronically dehydrated (source: National Academies of Sciences, Engineering, and Medicine). Even mild dehydration can impair cognitive performance, causing reduced focus, fatigue, and memory problems. Research has shown that a fluid loss of just 2% of body weight can significantly reduce cognitive function, affecting decision-making and concentration. We were inspired by our own experiences with dehydration, especially at hackathons, where long hours of focus and too little water left us drained and unfocused. With our app, we aim to let users monitor their hydration in real time, helping prevent these effects and promoting overall well-being. By giving immediate feedback on hydration status, we make it easier for people to stay hydrated and avoid the health risks of dehydration.

What it does

Our app helps users monitor their hydration levels in real time using just their phone’s camera. By analyzing a short video of their fingertip, the app extracts a photoplethysmogram (PPG) signal, which reflects changes in blood volume that are influenced by hydration status.

The process is simple:

- Record a video of your finger using your phone’s camera. To the naked eye, it just looks red, like the sample recording above.

- The app extracts and processes the PPG signal to assess your hydration level. It's amazing how clear a signal, with all the characteristic features of a PPG, you can get from just a phone camera!

- Based on the analysis, the app will provide feedback on whether you need to drink more water to stay hydrated.

From the video data, we analyze key PPG features that correlate with hydration, and the app notifies you if you're at risk of dehydration and need to drink water.
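Here is a minimal sketch of the feature-to-alert step. It rests on several assumptions. A single scalar PPG feature summarizes hydration, and higher values mean better hydration. The range cutoffs are percentiles of a reference dataset. The labels, the percentile choice, and the functions `cutoffs_from_reference`, `classify`, and `should_notify` are all hypothetical and are not the project's actual metric or ranges.

```python
from bisect import bisect_right

import numpy as np

# Hypothetical labels, ordered from lowest to highest feature value.
LABELS = ("dehydrated", "mildly dehydrated", "hydrated")


def cutoffs_from_reference(reference_values, percentiles=(20, 50)) -> list:
    """Derive range boundaries as percentiles of a reference population (assumed approach)."""
    return [float(c) for c in np.percentile(reference_values, percentiles)]


def classify(feature_value: float, cutoffs, labels=LABELS) -> str:
    """Return the label of the range that feature_value falls into."""
    if len(labels) != len(cutoffs) + 1:
        raise ValueError("need exactly one more label than cutoffs")
    # bisect_right counts how many cutoffs lie at or below the value.
    return labels[bisect_right(cutoffs, feature_value)]


def should_notify(label: str) -> bool:
    """Alert the user for any range below the top ('hydrated') range."""
    return label != LABELS[-1]
```

Keeping the cutoffs as data rather than hard-coded constants means they can be re-derived as more reference data arrives, without touching the classification logic.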

By offering real-time feedback, our app tackles a significant problem: hydration management. As noted above, dehydration is common and impairs cognitive performance, focus, and overall well-being. Our solution empowers users to maintain optimal hydration and avoid the physical and mental consequences of dehydration, whether they’re at work, working out, or just going about their daily lives.

How we built it

We built our app using a combination of modern tools and technologies:

- FlutterFlow for rapid frontend development.

- Firebase for backend data storage and seamless communication between the frontend and backend.

- Python for backend algorithm development and signal processing.

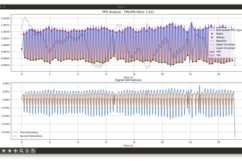

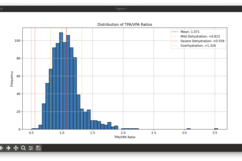

For our core algorithm, we used the Terra Ultrahuman Ring dataset from Regular Rhythms to develop a hydration classification based on physiological data. This dataset let us establish "physiological" hydration ranges, which we then used to assign hydration levels to our video dataset. The graphs accompanying this section show our analysis of this data. To extract meaningful information from the video, we applied robust signal processing methods such as color channel separation, bandpass filtering, and peak detection.
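The extraction steps named above can be sketched as follows. The sketch assumes the video has already been decoded into an RGB frame array of shape (frames, height, width, 3) and that a 0.7 to 4 Hz passband (roughly 42 to 240 bpm) suits heart-rate content. The function names, filter order, and cutoffs are illustrative choices, not the project's published parameters. It uses SciPy's `butter`, `sosfiltfilt`, and `find_peaks`.

```python
import numpy as np
from scipy.signal import butter, find_peaks, sosfiltfilt


def extract_ppg(frames: np.ndarray, fps: float,
                low_hz: float = 0.7, high_hz: float = 4.0) -> np.ndarray:
    """Turn a (T, H, W, 3) RGB frame stack into a band-passed PPG trace."""
    # Color channel separation: with the flash on, the red channel
    # carries most of the light transmitted through the fingertip.
    red = frames[..., 0].astype(np.float64)
    # Average each frame down to a single brightness sample.
    raw = red.mean(axis=(1, 2))
    # More blood absorbs more light, so invert the mean-removed trace
    # to make each pulse (blood-volume increase) point upward.
    raw = -(raw - raw.mean())
    # Zero-phase Butterworth band-pass, built as second-order sections.
    sos = butter(3, [low_hz, high_hz], btype="bandpass", fs=fps, output="sos")
    return sosfiltfilt(sos, raw)


def heart_rate_bpm(ppg: np.ndarray, fps: float, max_bpm: float = 240.0) -> float:
    """Estimate heart rate from the mean interval between detected peaks."""
    # Minimum spacing between peaks so one beat is not counted twice.
    min_gap = max(1, int(fps * 60.0 / max_bpm))
    peaks, _ = find_peaks(ppg, distance=min_gap)
    if len(peaks) < 2:
        raise ValueError("need at least two peaks to estimate heart rate")
    intervals_s = np.diff(peaks) / fps
    return float(60.0 / intervals_s.mean())
```

Filtering forward and backward with `sosfiltfilt` cancels the phase shift a single pass would add, so peak positions stay aligned with the original frames. On real, noisy recordings a `prominence` threshold in `find_peaks` would likely be needed as well.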

We also collected data by recording videos of our own and our friends’ fingers with the phone’s flash on to capture clear PPG signals. To verify our heart rate measurements, we compared them with heart rate data from Apple Watch users.
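One simple way to quantify this kind of comparison uses mean absolute error, mean bias, and mean absolute percentage error. The sketch assumes each camera-based estimate is paired with an Apple Watch reading taken over the same time window. The `agreement` helper is hypothetical, and the source does not say which metric the team used.

```python
import numpy as np


def agreement(estimated_bpm, reference_bpm) -> dict:
    """Summarize how closely camera-based heart rates track a reference device."""
    est = np.asarray(estimated_bpm, dtype=np.float64)
    ref = np.asarray(reference_bpm, dtype=np.float64)
    if est.shape != ref.shape or est.size == 0:
        raise ValueError("need paired, non-empty measurements")
    err = est - ref  # positive means the camera reads high
    return {
        "mae_bpm": float(np.mean(np.abs(err))),
        "bias_bpm": float(np.mean(err)),
        "mape_pct": float(np.mean(np.abs(err) / ref) * 100.0),
    }
```

Reporting bias alongside absolute error separates a systematic offset, which could be calibrated away, from random scatter.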

This approach made our system (1) interpretable, (2) robust to variations, and (3) efficient. These techniques let our app reliably monitor hydration and heart rate with just a phone camera.

Challenges we ran into

Finding a good hydration metric: We initially struggled to identify an effective hydration metric. We solved this by using Perplexity to locate Google research that let us approximate hydration levels based on TPA/VPA, and we used this research to inform our algorithm.

Integrating FlutterFlow with a custom Python backend: FlutterFlow typically integrates through pre-configured API connections, and we ran into issues connecting it to our custom Python backend. This forced us to explore alternative integration methods.

FlutterFlow crash and project recreation: We found a bug in FlutterFlow that caused the repo not to render properly. Unfortunately, we had to recreate the entire project from scratch, which delayed our development process.

Processing PPG signals fast enough: One of the biggest challenges was filtering and processing the PPG signals quickly enough for real-time feedback. The phone camera, while easily accessible, captures noisier and less precise data than dedicated sensors, which makes fast, accurate analysis difficult. We had to optimize our signal processing pipeline to deliver results efficiently.
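The source does not say which optimizations the team made. One common way to cut per-frame cost is to average only a subsampled central region of interest, in a single vectorized NumPy call. This assumes the fingertip covers the whole frame, so that a central crop and a sparse pixel grid lose little signal. `red_trace_fast`, the crop fraction, and the stride are hypothetical.

```python
import numpy as np


def red_trace_fast(frames: np.ndarray, roi_frac: float = 0.5,
                   pixel_stride: int = 4) -> np.ndarray:
    """Per-frame mean red intensity over a subsampled central crop."""
    if not 0 < roi_frac <= 1 or pixel_stride < 1:
        raise ValueError("roi_frac must be in (0, 1] and pixel_stride >= 1")
    _, height, width, _ = frames.shape
    h = max(1, int(height * roi_frac))
    w = max(1, int(width * roi_frac))
    top, left = (height - h) // 2, (width - w) // 2
    # Basic slicing returns a view, so no pixel data is copied here.
    roi = frames[:, top:top + h:pixel_stride, left:left + w:pixel_stride, 0]
    # One vectorized reduction over all frames instead of a Python loop.
    return roi.mean(axis=(1, 2), dtype=np.float64)
```

With the defaults, each frame contributes about 1/64 of its pixels, so the averaging step does far less work. The resulting trace can then be band-pass filtered and peak-detected as usual.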

Accomplishments that we're proud of

Accessible hydration and heart rate monitoring: One of our proudest achievements is using just a phone camera, a device nearly everyone already has, to calculate both hydration level and heart rate. Because the tool is so accessible, our solution can reach people regardless of resources or location. It’s exciting that a device most people use daily for simple tasks can offer such advanced capabilities. This makes our project not only innovative but also practical, letting people monitor their health without specialized equipment.

Custom backend integration: Another major accomplishment was integrating FlutterFlow with a custom Python backend, even though the platform does not typically support such integrations. We overcame this by using Firebase Functions as a bridge, connecting our frontend to the backend algorithms that process the PPG signals. This took creative problem-solving and a solid understanding of both platforms, and we’re proud to have made it work. The integration lets the app deliver hydration and heart rate feedback smoothly and was a key step toward our project’s goals.

What's next for hydRation

We want to expand to heart rhythm monitoring and other biomarkers. The core concept of using just a phone camera to monitor hydration and heart rate is not only innovative but could grow into a platform for accessible health monitoring. We plan to apply the same principles to other critical biomarkers, such as blood oxygen levels and heart rhythm. Ultimately, we aim to provide an easy, non-invasive way to track cardiovascular health, potentially even predicting conditions like arrhythmia and hypertension, all with nothing more than your iPhone camera.

While we’ve made great strides using the Terra dataset to establish our hydration measurement approach, we recognize the need for larger, more diverse data to truly validate our system. Currently, our hydration ranges come from signal data (not video), and we assume the same hydration schema holds for camera-derived PPG. The next step is large-scale data analysis to refine our hydration measurement methodology and prove its accuracy across different demographics and environmental factors. By gathering real-world data from a broad user base, we aim to make our hydration scoring more reliable and extend it to other health markers.
