🧠 Inspiration

We wanted to recreate Iron Man's Jarvis but make it easily accessible to anyone, without having to buy clunky AR glasses. Yes, that's right: you can do this in your mobile browser!

🤖 What it Does

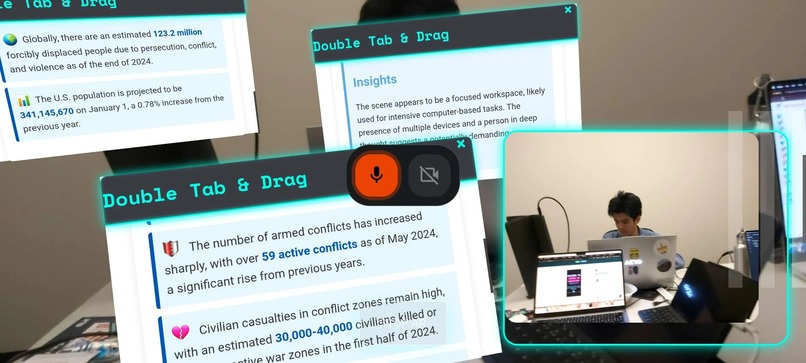

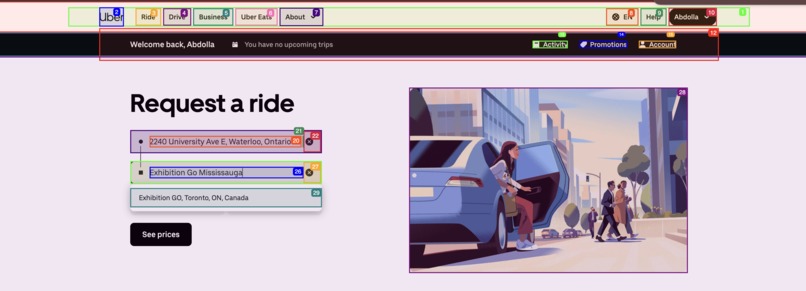

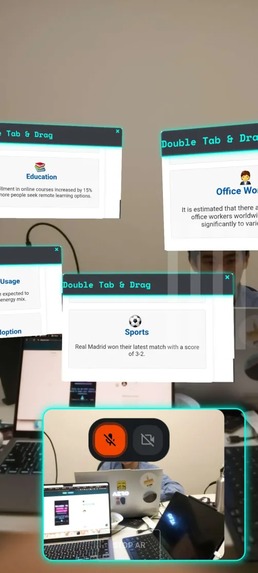

Jarvis is an AI assistant that listens to your voice, understands your intent, and renders interactive Augmented Reality (AR) HTML pages in response to your query. For more complex requests, it hands off to the Browser Use agent, which can carry out almost any task for you on the web.

You can ask Jarvis to:

- 🍔 Order food

- 📝 Fill out forms

- 🔍 Browse the web for you

- or anything else really; the sky is the limit

It uses Google Gemini’s live API for speech-to-speech interaction and can even process video input. When a task requires browser automation, Jarvis routes the request to a backend agent that controls a real browser, making it possible to automate almost anything online.

🛠️ How We Built It

Frontend:

- Built with React and TypeScript

- Uses the Gemini live API for real-time speech and context

Backend:

- FastAPI server that receives tasks

- Controls a browser session using an agent (with LLM-powered task cleaning)
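As a rough illustration of the frontend-to-backend handoff, here is a minimal sketch of how a spoken command might be packaged for the FastAPI server. The `/tasks` path, the `BrowserTask` shape, and the `buildTaskRequest` helper are all assumptions made for this example; the writeup does not describe the real schema. The whitespace normalization here is only a placeholder and is not the LLM-powered task cleaning done on the backend.

```typescript
// Hypothetical payload shape; the real backend schema is not documented here.
interface BrowserTask {
  task: string;
}

// Plain description of an HTTP request, kept free of DOM types so it runs anywhere.
interface TaskRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Builds the request for a browser-automation task.
// Assumes (hypothetically) that the backend accepts POST <baseUrl>/tasks.
function buildTaskRequest(baseUrl: string, spokenCommand: string): TaskRequest {
  // Collapse runs of whitespace and trim the ends of the transcript.
  const task = spokenCommand.trim().replace(/\s+/g, " ");
  if (task.length === 0) {
    throw new Error("Task must not be empty");
  }
  const payload: BrowserTask = { task };
  return {
    // Strip trailing slashes so we never produce "//tasks".
    url: `${baseUrl.replace(/\/+$/, "")}/tasks`,
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(payload),
  };
}
```

In a browser, the result could then be sent with `fetch(req.url, { method: req.method, headers: req.headers, body: req.body })`.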

Tool Routing:

- The frontend defines tool functions for generating HTML pages and for invoking the Browser Use agent

- Gemini’s intent detection triggers backend execution depending on the prompt
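The routing idea above can be sketched as a small dispatcher: the model emits a function call (a tool name plus JSON arguments), and the frontend looks up the matching handler. The tool names `render_html_page` and `run_browser_task`, and every type below, are hypothetical and chosen for illustration; they are not the project's actual tool declarations or the Gemini SDK's types.

```typescript
// Loosely modeled on a model-issued function call: a tool name plus JSON arguments.
interface ToolCall {
  name: string;
  args: Record<string, unknown>;
}

// What the frontend should do next: show an AR HTML page or forward a task to the backend.
type ToolResult =
  | { kind: "html"; html: string }
  | { kind: "browser"; task: string };

type ToolHandler = (args: Record<string, unknown>) => ToolResult;

// Model output is untrusted, so validate each argument before using it.
function requireString(args: Record<string, unknown>, key: string): string {
  const value = args[key];
  if (typeof value !== "string" || value.length === 0) {
    throw new Error(`Missing string argument: ${key}`);
  }
  return value;
}

// Hypothetical tool names; a Map avoids accidental hits on Object.prototype keys.
const handlers = new Map<string, ToolHandler>([
  ["render_html_page", (args) => ({ kind: "html", html: requireString(args, "html") })],
  ["run_browser_task", (args) => ({ kind: "browser", task: requireString(args, "task") })],
]);

// Dispatches a tool call to its handler, rejecting unknown tool names.
function routeToolCall(call: ToolCall): ToolResult {
  const handler = handlers.get(call.name);
  if (handler === undefined) {
    throw new Error(`Unknown tool: ${call.name}`);
  }
  return handler(call.args);
}
```

Keeping the handlers in one table means adding a tool is a one-line change, while the decision of which tool to call stays with the model.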

AR Integration:

- UI designed to work in augmented reality in the mobile browser, using three.js and WebXR

⚠️ Challenges We Ran Into

- 🧩 Adapting the UI for Augmented Reality was a major challenge; cross-platform AR (especially on iOS) can be tricky.

- 🔄 Figuring out how to use Gemini’s tool function system to reliably trigger browser automation.

- 🧹 Ensuring the agent could handle a wide variety of tasks robustly with proper task cleaning.

🏆 Accomplishments That We're Proud Of

- 🔗 Seamless integration between live speech, AR, and browser automation.

- 🧰 Building a flexible tool routing system that lets Gemini decide when to trigger the agent.

- 🤯 Making the assistant capable of handling complex, multi-step web tasks from just a spoken command.

📚 What We Learned

- How to use Google Gemini’s live API for real-time, multimodal interaction.

- How to process and route user intent to the right backend tools.

- The ins and outs of browser automation and LLM-based task cleaning.

- The quirks of building AR experiences for the web and iOS.

🔮 What's Next for Jarvis

- 🌎 Expanding the range of supported AR interactions and devices.

- 🔌 Integrating with more web services and APIs.

Built With

- ar

- browseruse

- fastapi

- gemini

- react

- three.js

- typescript

- webxr
