Inspiration

We love to cook for and eat with our friends and family! Sometimes though, we need to accommodate certain dietary restrictions to make sure everyone can enjoy the food. What changes need to be made? Will it be a huge change? Will it still taste good? So much research and thought goes into this, and we all felt as though an easy resource was needed to help ease the planning of our cooking and make sure everyone can safely enjoy our tasty recipes!

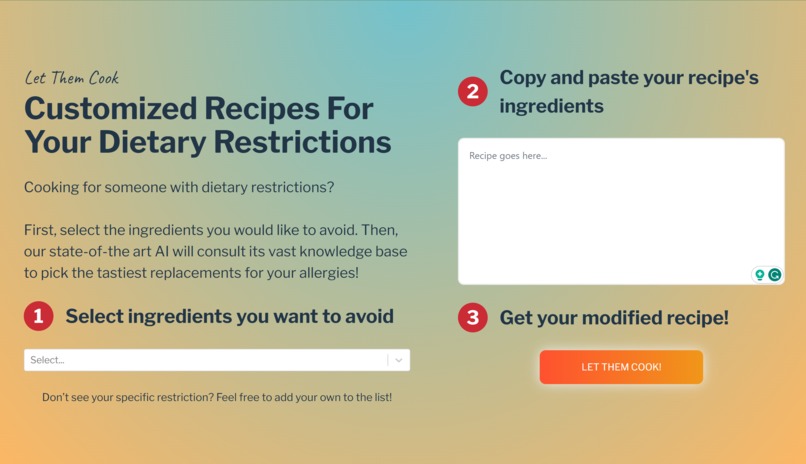

What is Let Them Cook?

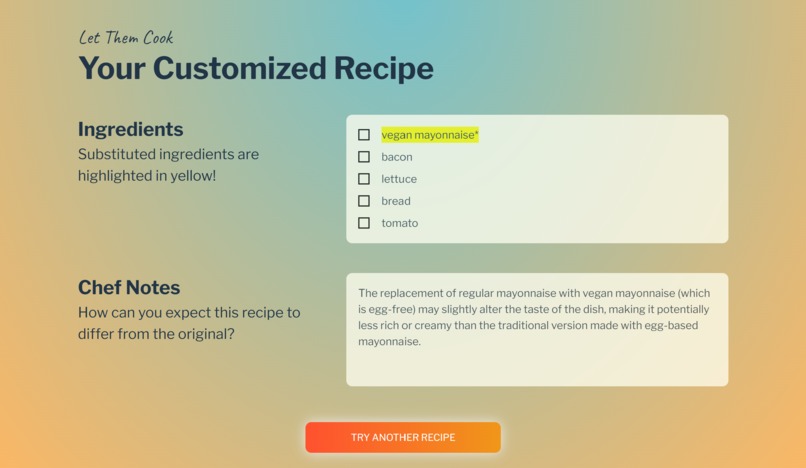

First, a user selects the specific dietary restrictions they want to accommodate. Next, the user copies the list of ingredients normally used in their recipe into a text prompt. Then, with the push of a button, our app cooks up a modified, just-as-tasty, and perfectly accommodating recipe, along with expert commentary from our "chef"! Our "chef" also suggests similar recipes that accommodate the user's specific needs.

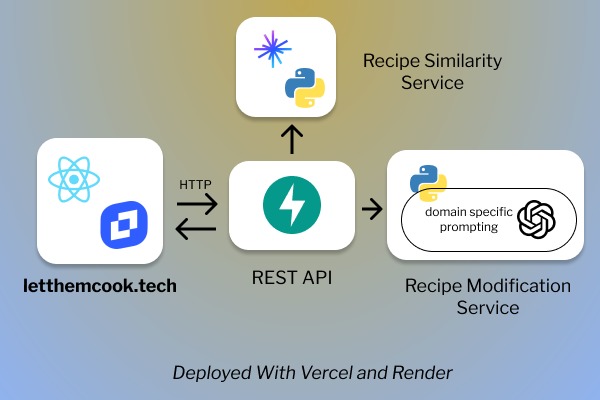

How was Let Them Cook built?

We used React to build our user interface. On the main page, we implemented a rich text editor from TinyMCE to serve as our text input, along with various applicable plugins to make the user input experience as seamless as possible.

Our backend is Python-based. We set up our API endpoints using FastAPI. Once the frontend posts the given recipe, our backend passes the recipe ingredients and dietary restrictions into a fine-tuned large language model, specifically GPT-4.

Our LLM had to be fine-tuned using a combination of provided context, hyperparameter adjustment, and prompt engineering. We shaped its responses with a focus on both knowledge of dietary restrictions and specific output formatting.

The prompt engineering concepts we employed to get the best outputs were n-shot prompting, chain-of-thought (CoT) prompting, and generated knowledge prompting.
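
To make two of these ideas concrete, here is a minimal sketch of how an n-shot prompt with a chain-of-thought cue might be assembled as a chat-style message list. It is an illustration under assumptions, not our actual prompt: the names `build_messages`, `format_request`, and `DEFAULT_EXAMPLES`, the system text, and the worked example are all hypothetical, and generated knowledge prompting is not shown.

```python
from typing import Dict, List, Optional

# Hypothetical worked example used as the single "shot"; not from the project.
DEFAULT_EXAMPLES = [
    {
        "restrictions": "vegan",
        "ingredients": "2 eggs\n1 cup milk",
        "answer": (
            "Reasoning: eggs and milk are animal products, so both need replacing.\n"
            "Substitutions: 2 eggs -> 2 tbsp ground flaxseed + 6 tbsp water; "
            "1 cup milk -> 1 cup oat milk"
        ),
    },
]


def format_request(restrictions: str, ingredients: str) -> str:
    """Render one request in the same layout for examples and real input."""
    return f"Dietary restrictions: {restrictions}\nIngredients:\n{ingredients}"


def build_messages(
    restrictions: List[str],
    ingredients: List[str],
    examples: Optional[List[Dict[str, str]]] = None,
) -> List[Dict[str, str]]:
    """Assemble system prompt + n worked examples + the real request."""
    if examples is None:
        examples = DEFAULT_EXAMPLES
    system = (
        "You are a chef who adapts recipes to dietary restrictions. "
        # Chain-of-thought cue: ask for reasoning before the answer.
        "Think step by step about which ingredients conflict with the "
        "restrictions before listing substitutions."
    )
    messages = [{"role": "system", "content": system}]
    # n-shot: each example is a user request followed by the ideal reply.
    for ex in examples:
        messages.append(
            {"role": "user",
             "content": format_request(ex["restrictions"], ex["ingredients"])}
        )
        messages.append({"role": "assistant", "content": ex["answer"]})
    # The real request goes last so the model continues the pattern.
    messages.append(
        {"role": "user",
         "content": format_request(", ".join(restrictions), "\n".join(ingredients))}
    )
    return messages
```

Calling `build_messages(["gluten-free"], ["1 cup flour", "2 eggs"])` yields a list that could be passed to a chat completion API. Because the worked examples also show the desired output layout, adding more of them is one way to push the model toward consistent formatting.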

UI/UX

User Personas

We built some user personas to help us better understand what needs our application could fulfill.

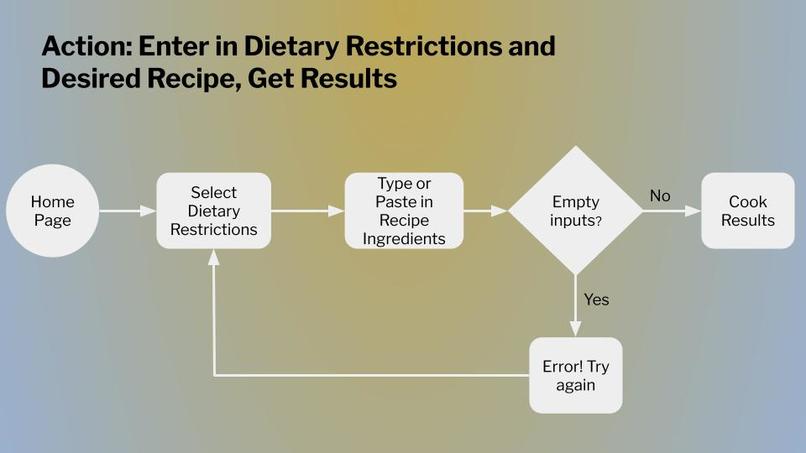

User Flow

The user flow was made to help us determine the functionality we needed to implement to make this application useful.

Lo-fi Prototypes

These lo-fi mockups were made to determine the layout we would present to users for our primary functionality.

Hi-fi Prototypes

Here we finalized the styling choice of a blue and yellow gradient, and we started planning how to incorporate our extra feature as well: the recipe recommendations.

Engineering

Frontend: React, JS, HTML, CSS, TinyMCE, Vite

Backend: FastAPI, Python

LLM: GPT-4

Database: Zilliz

Hosting: Vercel (frontend), Render (backend)

Challenges we ran into

Frontend Challenges

Our recipe modification service is particularly sensitive to the format of the user-provided ingredients and dietary restrictions. This put the responsibility of vetting user input onto the frontend. We had to design multiple mechanisms to sanitize inputs before sending them to our API for further pre-processing. However, we also wanted to make sure that recipes were still readable by the people who entered them. Using the TinyMCE editor solved this problem effortlessly, as it allowed us to display text in the format in which it was pasted while still giving our application access to a "raw", unformatted version of the text.
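
As an illustration of the kind of pre-processing involved, the sketch below normalizes a pasted ingredient list into clean lines. It is written in Python to match our backend and is an assumption about what such a step could look like, not our actual code; the function name `clean_ingredients` and the specific rules (strip tags, decode entities, drop bullets, collapse whitespace) are hypothetical.

```python
import html
import re
from typing import List

_TAG = re.compile(r"<[^>]+>")                            # any HTML tag
_BULLET = re.compile(r"^\s*(?:[-*\u2022]|\d+[.)])\s+")   # "- ", "* ", "1. ", "2) "
_SPACES = re.compile(r"\s+")                             # runs of whitespace


def clean_ingredients(raw: str) -> List[str]:
    """Turn pasted text into a list of trimmed, non-empty ingredient lines."""
    # Replace tags with newlines so block elements become line breaks.
    text = _TAG.sub("\n", raw)
    # Decode entities such as &amp; after the tags are gone.
    text = html.unescape(text)
    lines = []
    for line in text.splitlines():
        line = _BULLET.sub("", line)              # drop leading list markers
        line = _SPACES.sub(" ", line).strip()     # collapse inner whitespace
        if line:
            lines.append(line)
    return lines
```

For example, `clean_ingredients("<p>1 cup flour</p><p>- 2   eggs</p>")` returns `["1 cup flour", "2 eggs"]`. A quantity such as `2.5 cups` is left alone because the list-marker pattern requires whitespace right after the period.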

To display our modified recipe, we had to brainstorm the best ways to highlight any substitutions we made. We tried multiple different approaches including some pre-built components online. In the end, we decided to build our own custom component to render substitutions from the formatting returned by the backend.

We also had to design a user flow that would provide feedback while users wait for a response from our backend. This materialized in the form of an interim loading screen with a moving GIF indicating that our application had not timed out. This loading screen is dynamic, and automatically re-routes users to the relevant pages upon hearing back from our API.

Backend Challenges

The biggest challenge we ran into was selecting an LLM that could produce consistent results across different input recipes and prompts. We started out with Together.AI, but found that it was inconsistent in formatting and in generating valid allergy substitutions. After trying out other open-source LLMs, we found that they also produced undesirable results. Eventually, we compromised on GPT-4, which could produce the results we wanted after some prompt engineering; however, it is not a free service.

Another challenge was with the database. After implementing the schema and functionality, we realized that we had partitioned our data on the wrong field. To finish our project on time, we had to store additional values in our database so that similarity search would still work.
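
For readers unfamiliar with similarity search, the sketch below shows the underlying idea using cosine similarity over small in-memory vectors. A vector database such as Zilliz does this server-side with indexes, so this is only a conceptual illustration: the function names, the toy three-dimensional vectors, and the choice of cosine similarity as the metric are all assumptions rather than details of our setup.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(
    query: Sequence[float],
    stored: Dict[str, Sequence[float]],
    top_k: int = 2,
) -> List[Tuple[str, float]]:
    """Rank stored recipe vectors by similarity to the query vector."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in stored.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy embeddings, made up for illustration only.
recipes = {
    "vegan chili": [1.0, 0.0, 0.0],
    "lentil soup": [0.9, 0.1, 0.0],
    "cheesecake": [0.0, 0.0, 1.0],
}
top = most_similar([1.0, 0.0, 0.0], recipes)
```

Here `top` ranks "vegan chili" first and "lentil soup" second, since their vectors point in nearly the same direction as the query, while "cheesecake" is orthogonal to it and drops out of the top two.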

Takeaways

Accomplishments that we're proud of

Coming in with no prior knowledge, we were able to build a full-stack web application that makes use of the latest offerings in the LLM space. We experimented with prompt engineering, vector databases, similarity search, UI/UX design, and more to create a polished product. Not only can we demonstrate what we learned on our own devices, but we can also share it with the world through our deployed application.

All that said, our proudest accomplishment was creating a service which can provide significant help to many in a common everyday experience: cooking and enjoying food with friends and family.

What we learned

For some of us on the team, this entire project was built with unfamiliar technologies. Some of us had little experience with React or FastAPI, so the more experienced members got to teach those on the job.

One of the concepts we spent the most time learning was prompt engineering.

We also learned the importance of branching in our repository, as we had to build three different components of our project at the same time in the same application.

Lastly, we spent a good chunk of time learning how to implement and improve our similarity search.

What's next for Let Them Cook

We're very satisfied with the MVP we built this weekend, but we know there is far more work to be done.

First, we would like to deploy our recipe similarity service, which currently runs only in a local environment, to our production environment. We would also like to incorporate a ranking system that lets our LLM take crowdsourced user feedback into account when generating recipe substitutions.

Additionally, we would like to enhance our recipe substitution service to make use of recipe steps rather than solely ingredients. We believe that the added context of how ingredients come together will result in even higher quality substitutions.

Finally, we hope to add an option for users to insert a recipe URL directly rather than copying and pasting the ingredients. We would write another service to scrape the site and extract the information a user would otherwise paste.

Built With

- ai

- fastapi

- javascript

- llm

- openai

- python

- react

- tinymce

- zilliz
