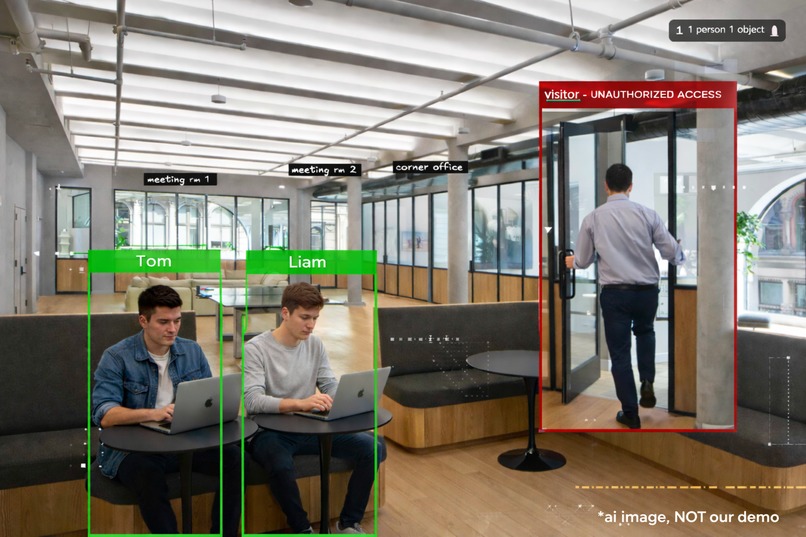

Using a YOLOv8 model to track entities

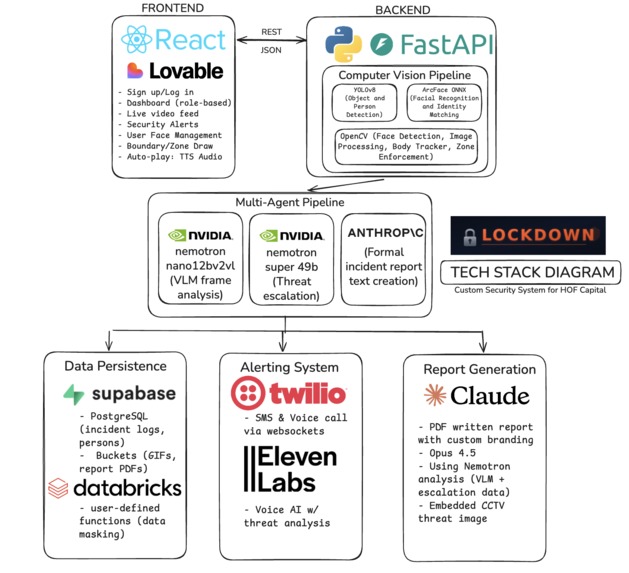

Lockdown's tech stack diagram

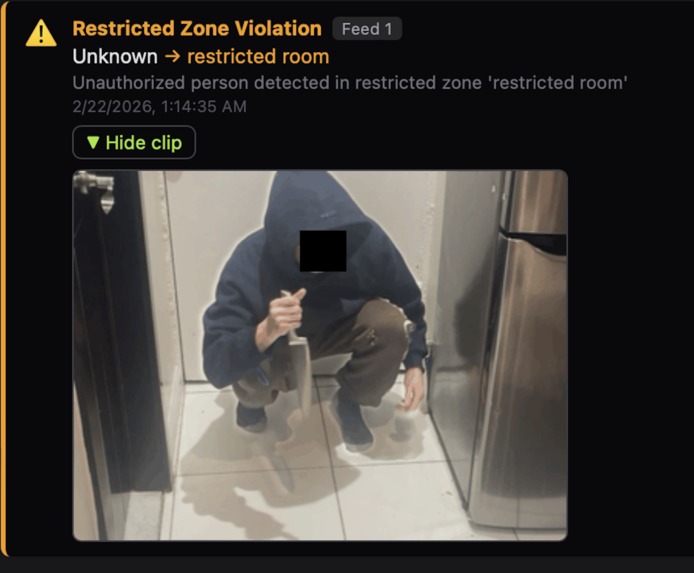

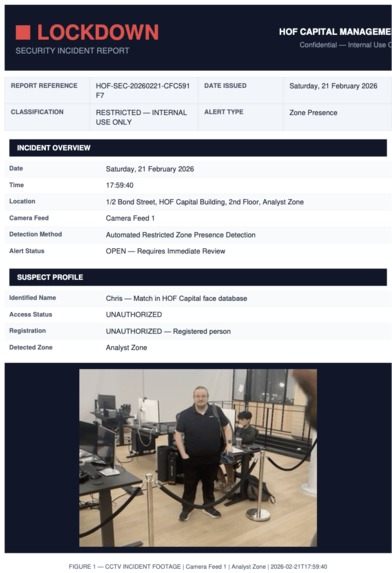

An unknown person has been detected by CCTV footage in a restricted part of the building!

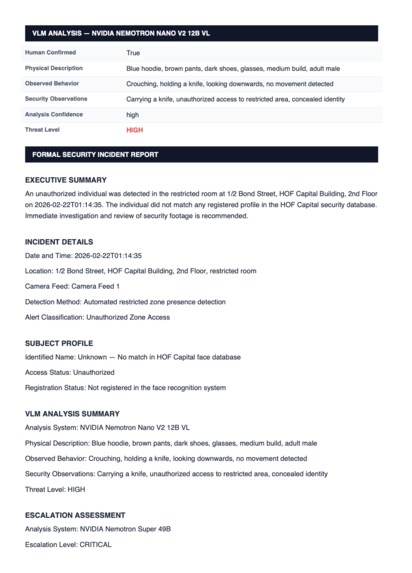

The record document generated for the detection shown above

Generated document for records, providing a snapshot of the culprit and necessary descriptions from VLM

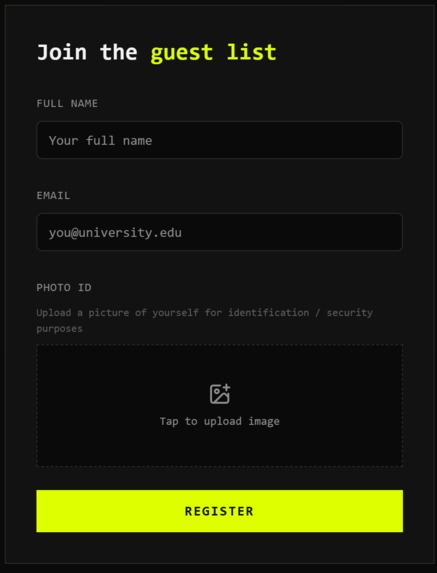

Lovable interface for collecting user faces

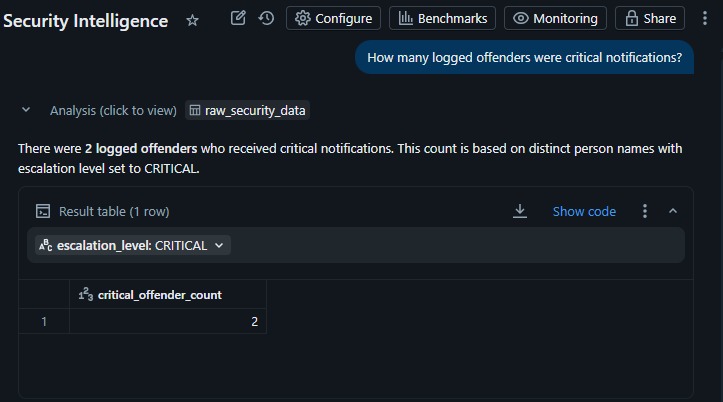

Databricks Genie model to answer questions about our data with contextual grounding.

Databricks privacy feature: masking names and columns for sessions without an admin login, using UDFs in Databricks notebooks

Tech Stack video:

https://youtu.be/37bierR_kTo (separate video discussing the tech stack)

What it does

Lockdown is a real-time AI-powered physical security platform that turns existing CCTV infrastructure into an intelligent, autonomous security layer. It monitors 4 simultaneous camera feeds with facial recognition, restricted zone enforcement, and door access control, all from a single dashboard.

When an unauthorized person is detected, a multi-agent AI pipeline kicks in: NVIDIA Nemotron Nano 12B V2 VL visually analyzes the threat, Nemotron Super 49B cross-references the person's history in Supabase and decides the escalation level (ROUTINE / URGENT / CRITICAL), and Anthropic Claude writes a formal incident report that is rendered into a branded PDF. At the same time, the system sends Twilio SMS messages and places ElevenLabs Voice AI calls to security personnel, and plays an alert on the dashboard.

Operators can draw restricted zones and boundary lines directly on camera feeds, register faces with roles, and configure door access, all through the React dashboard. Role-based access gives C-Level full visibility and highest clearance while Analysts see alerts only.

How we built it

- Frontend: React + Vite with a custom dark-themed dashboard. Camera feeds capture a frame every 400 ms and send it to the backend for real-time analysis. We built interactive canvas overlays for drawing restricted zones (polygons and boundary lines) directly on live feeds.

- Backend: FastAPI (Python) handling face recognition (OpenCV DNN + ArcFace ONNX embeddings), door detection (YOLOv8), and zone enforcement (ray-casting for polygons, side-tracking for line crossings).

- AI Pipeline: a three-agent escalation chain. Nemotron VLM handles visual threat analysis, Nemotron Super makes context-aware escalation decisions (querying Supabase for prior incidents), and Claude generates the formal report.

- Integrations: Twilio for SMS, ElevenLabs for voice AI calls and dashboard TTS alerts, Supabase for persistent incident storage and file uploads, and ReportLab for branded PDF reports.
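The zone enforcement described above (ray-casting for polygons, side-tracking for line crossings) can be sketched roughly as follows. This is a minimal illustration rather than our exact backend code: the function names are hypothetical, points are assumed to be pixel coordinates of a tracked person (for example the bottom-center of a bounding box), and the boundary is treated as an infinite line through its two endpoints.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: cast a horizontal ray to the right of the point
    and toggle `inside` each time the ray crosses a polygon edge."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the ray's y-level can be crossed.
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge meets the horizontal line through the point.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def side_of_line(point: Point, a: Point, b: Point) -> int:
    """Sign of the cross product (b - a) x (point - a): +1, -1, or 0 on the line."""
    cross = (b[0] - a[0]) * (point[1] - a[1]) - (b[1] - a[1]) * (point[0] - a[0])
    return (cross > 0) - (cross < 0)


def crossed_line(prev: Point, curr: Point, a: Point, b: Point) -> bool:
    """True when a tracked point moved from one side of the boundary to the other
    between two consecutive frames."""
    s_prev = side_of_line(prev, a, b)
    s_curr = side_of_line(curr, a, b)
    return s_prev != 0 and s_curr != 0 and s_prev != s_curr
```

Comparing the side of the line between consecutive frames, rather than testing a single position, is what makes a crossing an event instead of a state: a person standing on the far side only triggers once.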

Challenges we ran into

Getting real-time face recognition to work reliably across 4 simultaneous camera feeds without lag was a major hurdle. We had to optimize frame capture intervals, implement sticky label tracking so identities persist when a face temporarily leaves the frame or is hidden while the body is still visible, and use efficient cosine similarity matching against ArcFace embeddings. Setting up the Twilio + ElevenLabs Voice AI call integration was also painful: it required a toll-free number, which cost money, and we did not receive ElevenLabs credits.
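To make the matching and sticky-label ideas concrete, here is a small pure-Python sketch. It is an illustration under assumptions, not our production code: the `0.5` threshold, the `ttl_frames` value, the integer track IDs, and the `StickyLabels` class are all hypothetical, and a real deployment would likely vectorize the similarity computation with NumPy.

```python
import math
from typing import Dict, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_match(embedding: Sequence[float],
               gallery: Dict[str, Sequence[float]],
               threshold: float = 0.5) -> Optional[str]:
    """Return the registered name with the highest similarity, or None when
    nothing reaches the (assumed) threshold, i.e. the person is unknown."""
    best_name, best_score = None, threshold
    for name, reference in gallery.items():
        score = cosine_similarity(embedding, reference)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


class StickyLabels:
    """Keeps the last confirmed identity of a track alive for up to
    `ttl_frames` consecutive frames in which no face match is available."""

    def __init__(self, ttl_frames: int = 10) -> None:
        self.ttl_frames = ttl_frames
        # track_id -> (label, frames elapsed since the last confirmed match)
        self._state: Dict[int, Tuple[str, int]] = {}

    def update(self, track_id: int, match: Optional[str]) -> Optional[str]:
        if match is not None:
            self._state[track_id] = (match, 0)
            return match
        if track_id not in self._state:
            return None
        label, age = self._state[track_id]
        age += 1
        if age > self.ttl_frames:
            # The identity has gone unconfirmed for too long; drop it.
            del self._state[track_id]
            return None
        self._state[track_id] = (label, age)
        return label
```

The time-to-live keeps a label from flickering when a person turns away from the camera, while still expiring so a stale identity is not attached to a track indefinitely.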

Accomplishments that we're proud of

- A three-agent AI escalation pipeline where each model handles what it's best at: Nemotron VLM for vision, Nemotron Super for reasoning with historical context, and Claude for professional writing.

- Supabase-aware escalation. The Nemotron Super agent queries a person's prior incidents, registration status, and visitor history before deciding escalation level, making it context-aware rather than purely reactive.

- Interactive zone drawing directly on live camera feeds with real-time violation detection using ray-casting and line-crossing algorithms.

What we learned

We learned how to orchestrate multiple AI models in a sequential pipeline where each agent's output feeds the next. Designing the handoff between Nemotron VLM's visual analysis, Nemotron Super's escalation reasoning, and Claude's report writing taught us how to decompose a complex decision into specialized sub-tasks.
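The handoff pattern can be sketched as a chain of plain callables, where each stage receives the previous stage's output. This is a simplified outline under assumptions: `Incident`, `run_pipeline`, and the four callables are hypothetical names, and in the real system each callable would wrap a model or database call (Nemotron VLM, Supabase, Nemotron Super, Claude) rather than the stubs one would pass in for testing.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List


class Escalation(str, Enum):
    ROUTINE = "ROUTINE"
    URGENT = "URGENT"
    CRITICAL = "CRITICAL"


@dataclass
class Incident:
    camera_id: str
    zone: str
    visual_summary: str = ""
    history: List[str] = field(default_factory=list)
    level: Escalation = Escalation.ROUTINE
    report: str = ""


def run_pipeline(
    incident: Incident,
    describe: Callable[[Incident], str],                   # vision agent
    fetch_history: Callable[[Incident], List[str]],        # prior-incident lookup
    escalate: Callable[[str, List[str]], Escalation],      # reasoning agent
    write_report: Callable[[Incident], str],               # report-writing agent
) -> Incident:
    """Run the stages strictly in order; each one consumes what the previous produced."""
    incident.visual_summary = describe(incident)
    incident.history = fetch_history(incident)
    incident.level = escalate(incident.visual_summary, incident.history)
    incident.report = write_report(incident)
    return incident
```

Passing the agents in as callables keeps the orchestration testable without network access: each stage can be swapped for a stub that returns a fixed value.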

We also learned the importance of latency budgeting in real-time systems. Every second matters when someone unauthorized is in a restricted zone, so we had to think carefully about what happens in parallel vs. sequentially in the alert pipeline.
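One way to express the parallel part of that budget is with `asyncio.gather`, so the independent notification channels run concurrently and the total wait is roughly that of the slowest channel rather than the sum. The sketch below is illustrative only: the three coroutines are stand-ins that sleep instead of calling Twilio or ElevenLabs, and the 1-second timeout is an assumed budget, not a measured figure from our system.

```python
import asyncio
from typing import List


async def send_sms(message: str) -> str:
    await asyncio.sleep(0.1)  # stand-in for the SMS provider round trip
    return "sms"


async def place_voice_call(message: str) -> str:
    await asyncio.sleep(0.1)  # stand-in for initiating the voice call
    return "voice"


async def play_dashboard_alert(message: str) -> str:
    await asyncio.sleep(0.1)  # stand-in for pushing the alert to the dashboard
    return "dashboard"


async def dispatch_alerts(message: str, timeout_s: float = 1.0) -> List[object]:
    """Fire all notification channels concurrently under one overall time budget.

    return_exceptions=True means one failing channel shows up as an exception
    object in the result list instead of cancelling the other channels.
    """
    pending = asyncio.gather(
        send_sms(message),
        place_voice_call(message),
        play_dashboard_alert(message),
        return_exceptions=True,
    )
    return await asyncio.wait_for(pending, timeout=timeout_s)
```

Steps that depend on each other, such as the three agents, stay sequential; steps that only depend on the detection itself, such as these notifications, can start immediately and overlap.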

Working with ArcFace embeddings and cosine similarity matching gave us hands-on experience with production face recognition beyond just calling an API.

What's next for LockDown

- Live RTSP/IP camera integration — replace browser webcams with actual security camera feeds for enterprise deployment.

- Multi-floor support — extend the floor plan system to handle multiple floors with elevator and stairwell tracking.

- Behavioral anomaly detection — use temporal analysis across frames to detect loitering, tailgating, and unusual movement patterns.

- Mobile app — push notifications and live feed access for security personnel on the go.

- Automated authority dispatch — integrate with local emergency services APIs so CRITICAL alerts can trigger real dispatch, not just a notification saying that it's been done.

- Audit trail dashboard — analytics and reporting on incident trends, response times, and zone hotspots over time.

Built With

- claude

- databricks

- elevenlabs

- fastapi

- nemotron

- opencv

- postgresql

- react

- supabase

- twilio

- yolov8
