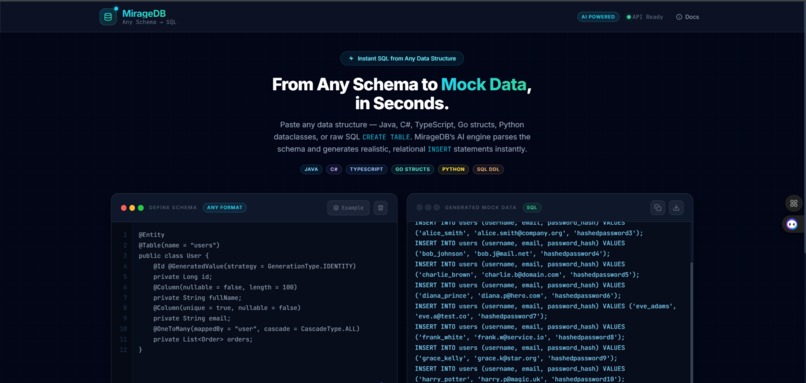

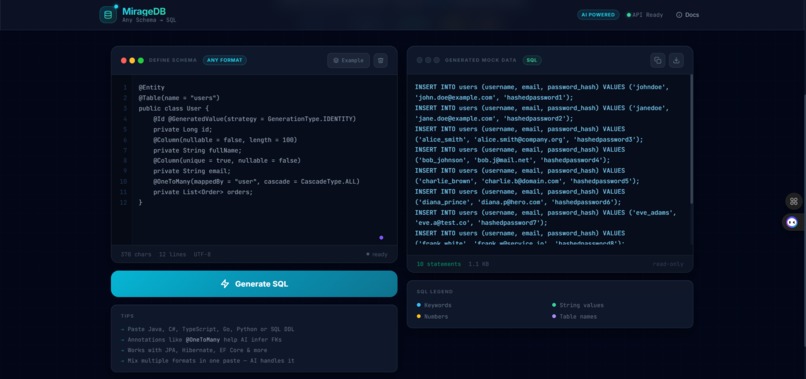

The Inspiration

Every developer has experienced the frustration of the "Cold Start" database problem. You spend hours architecting a complex schema with nested relationships, only to realize that testing it requires another three hours of manual data entry or writing fragile, throwaway scripts. Existing mock generators are often context-blind; they provide random strings that do not resemble real-world data and almost always fail to maintain primary and foreign-key integrity. MirageDB was built to bridge the gap between architectural design and functional testing.

How We Built It

MirageDB was engineered as a high-velocity, AI-augmented developer tool. The architecture is split into three specialized layers:

- The Core: A Spring Boot 3 backend running on Java 21. It serves as the orchestration engine that parses raw code (Java entities, TypeScript interfaces, or SQL DDL) and communicates with the Google Gemini 2.5 Flash API.

- The Intelligence: We developed a specialized context-injection strategy. Instead of requesting data for isolated tables, we feed the AI the entire relational tree first. This pushes the model to treat the database as a single connected system rather than a set of unrelated tables.

- The Interface: A responsive React dashboard styled with Tailwind CSS. To maintain a competitive pace during the build, we used Creao AI to prototype the UI components, allowing us to focus our engineering energy on the complex relational algorithms.
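The context-injection idea described under "The Intelligence" could be sketched roughly as follows. This is an illustrative sketch, not MirageDB's actual code: the `SchemaPromptBuilder` class, the `Table` record, and the prompt wording are all hypothetical, and the call to the Gemini API is left out. It assumes the schema has already been parsed into tables with known foreign-key parents, orders them so parents come first, and places the whole schema in one prompt.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class SchemaPromptBuilder {

    /** Hypothetical table description: name, raw DDL, and the tables its foreign keys point to. */
    public record Table(String name, String ddl, List<String> dependsOn) {}

    /** Returns the tables ordered so that every parent precedes the children that reference it. */
    public static List<Table> parentsFirst(List<Table> tables) {
        Map<String, Table> byName = new LinkedHashMap<>();
        for (Table table : tables) {
            byName.put(table.name(), table);
        }
        List<Table> ordered = new ArrayList<>();
        Set<String> done = new HashSet<>();
        Set<String> inProgress = new HashSet<>();
        for (Table table : tables) {
            visit(table, byName, done, inProgress, ordered);
        }
        return ordered;
    }

    // Depth-first walk: emit a table only after all of its parents have been emitted.
    private static void visit(Table table, Map<String, Table> byName,
                              Set<String> done, Set<String> inProgress, List<Table> ordered) {
        if (done.contains(table.name())) {
            return;
        }
        if (!inProgress.add(table.name())) {
            throw new IllegalArgumentException("Cyclic foreign keys involving " + table.name());
        }
        for (String parentName : table.dependsOn()) {
            if (parentName.equals(table.name())) {
                continue; // a self-reference does not affect the ordering
            }
            Table parent = byName.get(parentName);
            if (parent == null) {
                throw new IllegalArgumentException("Unknown parent table " + parentName);
            }
            visit(parent, byName, done, inProgress, ordered);
        }
        inProgress.remove(table.name());
        done.add(table.name());
        ordered.add(table);
    }

    /** Builds one prompt that contains the whole schema, parents first (wording is an assumption). */
    public static String buildPrompt(List<Table> tables, int rowsPerTable) {
        StringBuilder prompt = new StringBuilder();
        prompt.append("Generate ").append(rowsPerTable)
              .append(" realistic rows per table as SQL INSERT statements.\n")
              .append("Every foreign key must reference a row generated for its parent table.\n\n");
        for (Table table : parentsFirst(tables)) {
            prompt.append("-- ").append(table.name()).append('\n')
                  .append(table.ddl()).append("\n\n");
        }
        return prompt.toString();
    }

    public static void main(String[] args) {
        List<Table> schema = List.of(
            new Table("orders",
                "CREATE TABLE orders (id INT PRIMARY KEY, user_id INT REFERENCES users(id));",
                List.of("users")),
            new Table("users", "CREATE TABLE users (id INT PRIMARY KEY, name TEXT);", List.of()));
        System.out.println(buildPrompt(schema, 5));
    }
}
```

Ordering parents before children is one plausible way to give the model the "relational tree" up front; the write-up does not say how the real prompt is structured.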

Challenges and Pivots

The most significant technical hurdle was maintaining referential integrity at scale. Keeping a generated `user_id` consistent across three different child tables while preserving realistic data distributions is hard, because every child row must reference a parent key that actually exists in the generated data. We solved this by implementing a deterministic mapping layer in the backend that validates the AI-generated SQL against the original schema constraints.
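As a rough illustration of the kind of check such a validation layer might perform, the sketch below looks for child rows whose foreign-key value has no matching parent row. It is a minimal sketch under stated assumptions, not the MirageDB implementation: `ForeignKeyValidator`, the `ForeignKey` record, and `findViolations` are hypothetical names, and the generated SQL is assumed to have been parsed into in-memory rows already.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class ForeignKeyValidator {

    /** Hypothetical constraint: childTable.childColumn must match some parentTable.parentColumn. */
    public record ForeignKey(String childTable, String childColumn,
                             String parentTable, String parentColumn) {}

    /**
     * Returns one message per child row whose foreign-key value has no matching parent row.
     * Rows are assumed to be parsed into column-name -> value maps, grouped by table name.
     */
    public static List<String> findViolations(Map<String, List<Map<String, Object>>> rowsByTable,
                                              List<ForeignKey> foreignKeys) {
        List<String> violations = new ArrayList<>();
        for (ForeignKey fk : foreignKeys) {
            // Collect every key value that exists in the parent table.
            Set<Object> parentKeys = new HashSet<>();
            for (Map<String, Object> row : rowsByTable.getOrDefault(fk.parentTable(), List.of())) {
                parentKeys.add(row.get(fk.parentColumn()));
            }
            // Flag child rows that point at a key the parent table does not contain.
            for (Map<String, Object> row : rowsByTable.getOrDefault(fk.childTable(), List.of())) {
                Object value = row.get(fk.childColumn());
                if (value != null && !parentKeys.contains(value)) { // a missing/NULL key is allowed
                    violations.add(fk.childTable() + "." + fk.childColumn() + "=" + value
                        + " has no match in " + fk.parentTable() + "." + fk.parentColumn());
                }
            }
        }
        return violations;
    }

    public static void main(String[] args) {
        Map<String, Object> user = Map.of("id", 1);
        Map<String, Object> goodOrder = Map.of("id", 10, "user_id", 1);
        Map<String, Object> badOrder = Map.of("id", 11, "user_id", 99);
        Map<String, List<Map<String, Object>>> rows = Map.of(
            "users", List.of(user),
            "orders", List.of(goodOrder, badOrder));
        List<ForeignKey> constraints = List.of(new ForeignKey("orders", "user_id", "users", "id"));
        findViolations(rows, constraints).forEach(System.out::println);
    }
}
```

Values are compared with `equals`, so this sketch assumes parent and child keys are parsed into the same Java type; an `Integer` and a `Long` with the same numeric value would not match.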

We also made a critical architectural pivot during development. Initially, we experimented with a reactive programming model, but we discovered that the synchronous nature of structured SQL generation from LLMs required the stability and predictable thread management of a standard MVC model. This change eliminated data corruption issues and improved the reliability of the Gemini API streams.

What We Learned

This project was a masterclass in human-AI collaboration. We learned that AI is most effective when treated as a specialized component in the stack rather than just a coding assistant. By integrating Gemini directly into the logic and using Creao AI to handle frontend boilerplate, we achieved in days what typically takes weeks. We also deepened our mastery of Java 21 features and refined our ability to manage complex state transitions in microservice-ready architectures.
