Presentation

https://docs.google.com/presentation/d/15-3Wi4gJ-Rvh56OLAk2d79UH6sus-VndX71vdpnoPAc/edit?usp=sharing

Inspiration

Ever scroll through TikTok or Instagram and find a super cool outfit, only to wonder where you can get those exact pieces? We noticed that many people face this dilemma daily. Our inspiration for OOTD came from a desire to ungatekeep fashion and make trendy outfits accessible to everyone. We wanted to bridge the gap between seeing a style you love and actually owning it, WITHOUT gatekeepers.

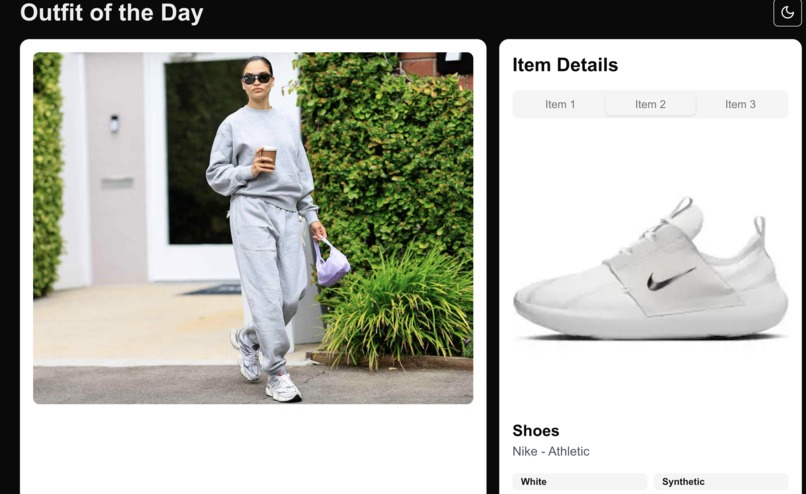

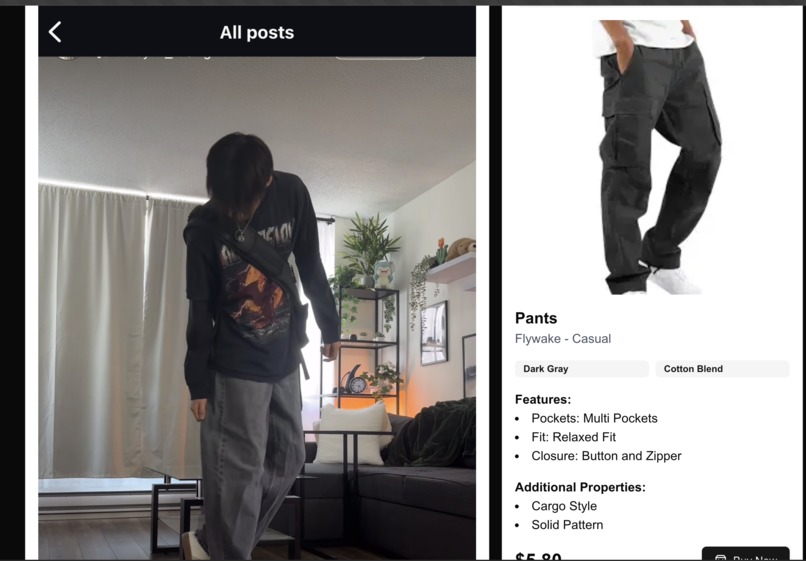

What it does

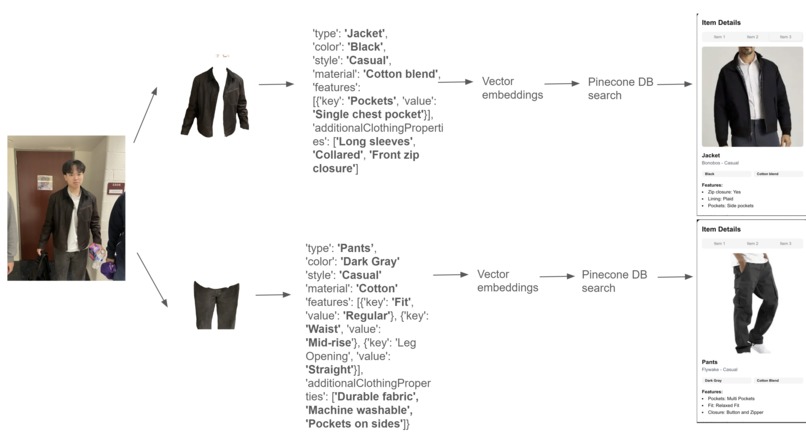

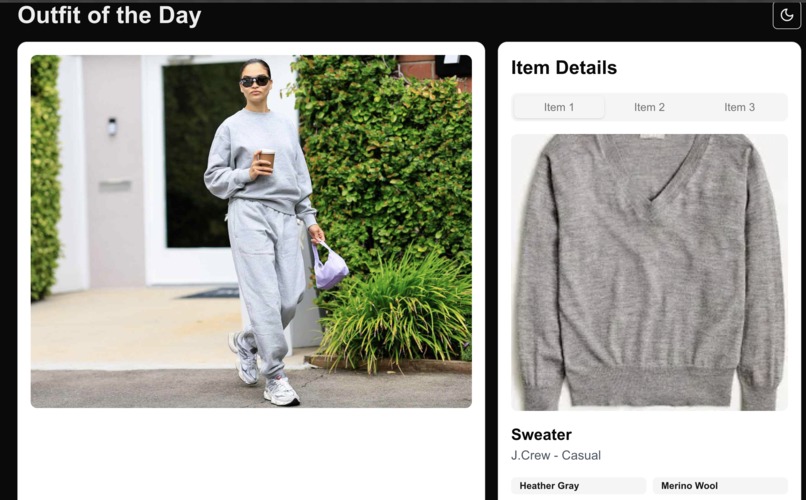

OOTD allows users to upload an image of an outfit they like. Once uploaded, users can click on any piece of clothing within the image, and the app will identify the item and provide information on where to purchase it or find similar styles. Whether it's a unique jacket or a pair of shoes, OOTD helps you find exactly what you're looking for.
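The click-to-select step can be sketched roughly as follows. This is not our actual code: it assumes a segmentation model such as SAM has already produced one boolean mask per candidate region, and the helper names `pick_mask_at_click` and `mask_bounding_box` are hypothetical. The sketch picks the smallest mask under the click and returns the box you would crop before looking the item up.

```python
from typing import List, Tuple

# A mask is a grid of booleans: True where the pixel belongs to the region.
Mask = List[List[bool]]


def pick_mask_at_click(masks: List[Mask], x: int, y: int) -> Mask:
    """Return the smallest mask that contains the clicked pixel (x, y).

    Preferring the smallest region is an assumption: it favors a single
    garment over a mask covering the whole outfit.
    """
    hits = [m for m in masks if m[y][x]]
    if not hits:
        raise LookupError("no region under the click")
    return min(hits, key=lambda m: sum(sum(row) for row in m))


def mask_bounding_box(mask: Mask) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom), inclusive, of the True pixels."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    if not rows:
        raise ValueError("mask is empty")
    cols = [x for x in range(len(mask[0])) if any(row[x] for row in mask)]
    return cols[0], rows[0], cols[-1], rows[-1]
```

The bounding box is what would be cropped out of the uploaded image and passed on to the similarity search.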

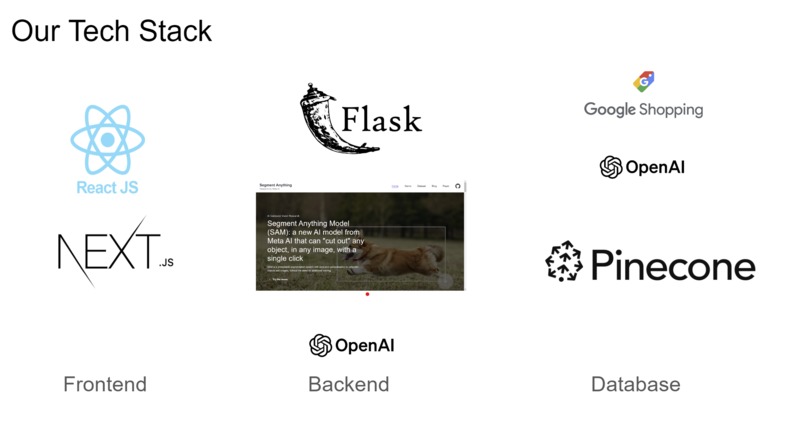

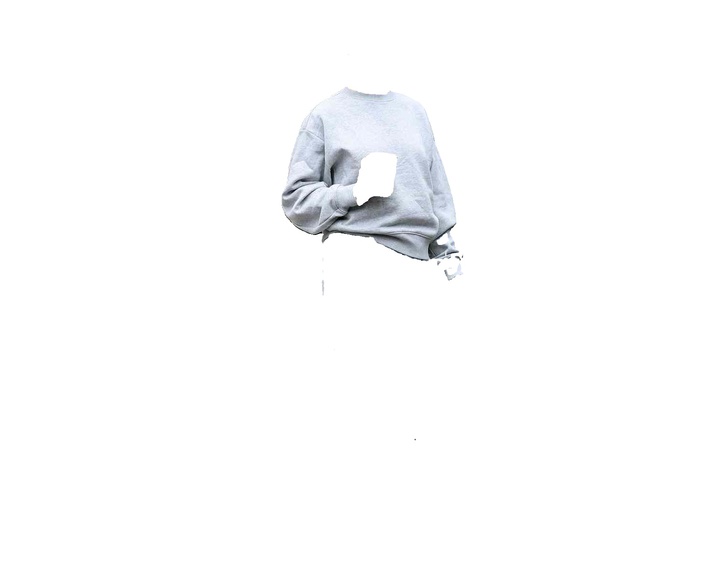

How we built it

Dataset: Google Shopping web scraper -> computer vision model -> semantic embedding -> upload to Pinecone vector DB

Backend: Flask, Meta's Segment Anything Model (SAM), Pinecone DB, and OpenAI Embeddings

Frontend: React.js + ShadCN UI Kit + Next.js
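To illustrate the lookup step, here is a minimal sketch of nearest-neighbor search over embeddings. It is an in-memory stand-in, not the Pinecone client: the `index` dictionary, the `top_k` helper, and the use of cosine similarity are all assumptions made for illustration. In the real pipeline the vectors would come from the embedding step and live in the vector DB.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query: Sequence[float],
    index: Dict[str, List[float]],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Return the k catalog items most similar to the query embedding.

    `index` maps a hypothetical product id to its embedding vector.
    """
    scored = [(item_id, cosine_similarity(query, vec)) for item_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

A brute-force scan like this only works for a small catalog; a vector database does the same ranking over a much larger index.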

Challenges we ran into

One of the main challenges was accurately identifying clothing items from images taken in different lighting conditions and angles. Ensuring a smooth and fast user experience while processing heavy image data was another hurdle we had to overcome.

Built With

- flask

- next.js

- openai

- pinecone

- react.js

- sam
