Inspiration

Why do we dance? Whether you're groovin' to make the day-to-day routine more enjoyable, bouncing with your friends, or just plain losing yourself without a care in the world, dance is an important part of our self-identity and culture. To bring this love for music and dance together, we built a World AR effect that uses Spark AR to foster a fun, shareable, and gamified dance experience on Reels. We hope you have fun playing around with this effect, and remember to always live your life with a little P.L.U.R.

What it does

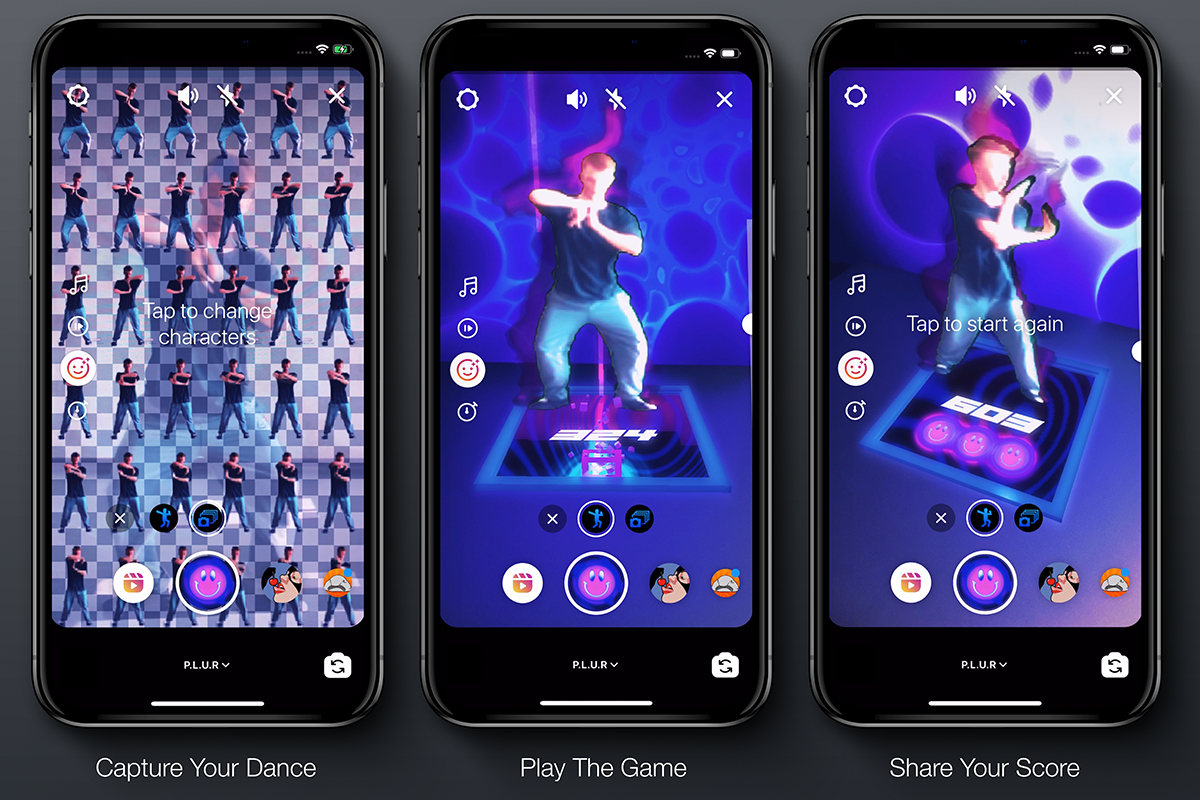

P.L.U.R is a one-of-a-kind AR Dance Arcade. You can either choose the default character or play as yourself by capturing your own freestyle moves with a segmentation sprite sheet tool. Once the character is captured, the audio-reactive game begins. A dance floor is placed in your environment, and you control your character with an on-screen slider, making it dance to the beat. As you dance, the effect uses processed MIDI data to generate falling notes that you catch as the beats drop. The more notes you catch, the higher your score. Share your game with friends using Reels, or use the effect with your own music to record a unique dance experience.

How we built it

Music

Music gives rhythmic movement meaning. It helps build anticipation for that first note, carries you side-to-side with arpeggiated chord progressions, and gives you that enjoyable feeling of unlocking patterns in a musical journey. Team member Riley Deang, aka Monro, created these top vibes using Ableton Live and loads of experience in sound design and music production. Along with the audio track, Riley provided the MIDI structure, which was translated into the game structure. Listen to her latest EP.

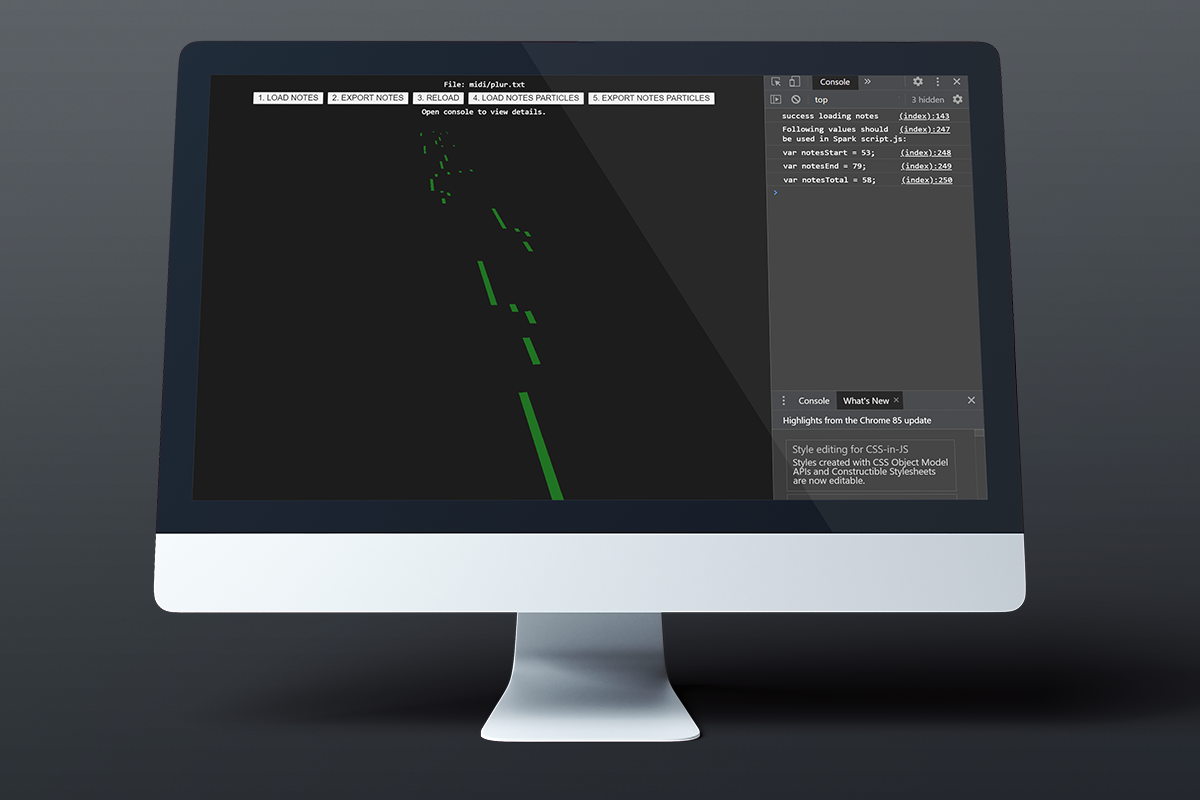

MIDI Processing Tool

Once the MIDI file was recorded, it was processed using a custom processing and export tool. The three.js library was used to extract note data and transform it into cube geometry based on note position and duration. Two files were created. The first contains the geometry used to visualize the notes, and the second contains null objects that act as monitor values for the scoring logic. The geometry was then exported to a 3D file format and imported into Spark AR. The export tool is available on GitHub.
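As a rough illustration of the note-to-cube step, here is a minimal sketch that turns note position and duration into box dimensions and placement. It is not the project's export tool: the note shape (`pitch`, `startBeats`, `durationBeats`), the scale constants, and the function names are all assumptions made for this example.

```javascript
// Hypothetical note shape: { pitch, startBeats, durationBeats }.
// Every constant and name here is an illustrative assumption.
const LANE_WIDTH = 0.1;     // assumed horizontal spacing per pitch step (scene units)
const UNITS_PER_BEAT = 0.5; // assumed vertical distance covered by one beat
const NOTE_DEPTH = 0.1;     // assumed fixed depth for every cube

// Convert one note into a box descriptor: size plus center position.
function noteToBox(note, lowestPitch) {
  const height = note.durationBeats * UNITS_PER_BEAT;
  return {
    width: LANE_WIDTH,
    height: height,
    depth: NOTE_DEPTH,
    // Horizontal lane is derived from pitch relative to the lowest note.
    x: (note.pitch - lowestPitch) * LANE_WIDTH,
    // Vertical center is the note start plus half of its length.
    y: note.startBeats * UNITS_PER_BEAT + height / 2,
    z: 0,
  };
}

// Convert a whole track into box descriptors.
function notesToBoxes(notes) {
  if (notes.length === 0) return [];
  const lowestPitch = Math.min(...notes.map((n) => n.pitch));
  return notes.map((n) => noteToBox(n, lowestPitch));
}

const exampleBoxes = notesToBoxes([
  { pitch: 60, startBeats: 0, durationBeats: 2 },
  { pitch: 62, startBeats: 4, durationBeats: 1 },
]);
console.log(exampleBoxes);
```

Each descriptor could then be used to size and position a box mesh before export. Keeping the mapping as plain data makes it easy to produce both outputs from the same notes: visible cubes for the note display and empty objects for scoring.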

Spark AR

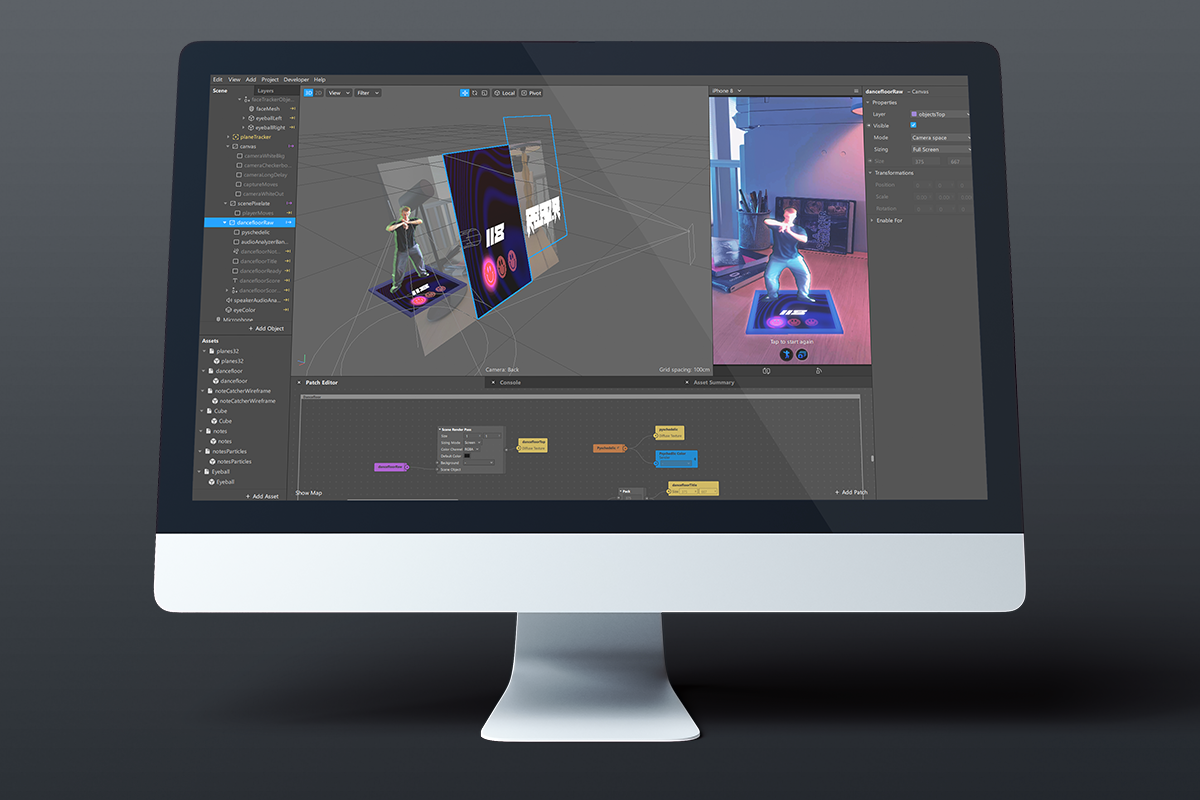

Spark AR is magic unto itself, so it didn’t take much to bring all these assets together and develop this unique augmentation. Plane tracking was used to set up the dance floor. Background segmentation and Delay Frame were used to capture the user's dance moves into a 36-frame sprite sheet. Render Pass also made it possible to render a canvas layer onto the dance floor. The same Render Pass approach can be used to apply dynamic textures to any mesh surface, or even to project a pseudo-shadow for an animated 3D object. Finally, a variety of logic was hosted in Patch and Script to coordinate interactivity and animations. The full project is available on GitHub.

Challenges we ran into

Any time you build this level of gamification into an effect, it requires many features and can push some of them to the edge of their capabilities.

Delay Frame

Using Delay Frame to store the dance moves meant storing many frames. Currently the effect generates a 36-frame sprite sheet and then uses UV offsets to animate through the frames. To keep the memory footprint low, the sprite sheet is stored in a single Delay Frame pass that uses a 2x screen sizing mode. Unfortunately, as the sprite sheet is generated, older sprite images lose some image quality, which causes pixelation. As features around Render Pass are enhanced, we hope to improve the effect with optimized memory management or multiple passes.
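The UV-offset animation can be sketched as a small calculation. This is only an illustration: the 6 x 6 grid layout, the row order, and the function names are assumptions, since the write-up states only that the sheet holds 36 frames.

```javascript
// Assumed layout: 36 frames arranged as a 6 x 6 grid, read row by row.
const COLUMNS = 6;
const ROWS = 6;
const FRAME_COUNT = COLUMNS * ROWS;

// Return the UV scale and offset that select one frame of the sheet.
// Whether V runs top-down or bottom-up depends on the renderer; this
// sketch simply counts rows from the UV origin.
function frameToUv(frameIndex) {
  // Wrap the index so the animation loops, including negative input.
  const i = ((frameIndex % FRAME_COUNT) + FRAME_COUNT) % FRAME_COUNT;
  const col = i % COLUMNS;
  const row = Math.floor(i / COLUMNS);
  return {
    scaleU: 1 / COLUMNS,
    scaleV: 1 / ROWS,
    offsetU: col / COLUMNS,
    offsetV: row / ROWS,
  };
}

// Pick the frame to show at a given playback time.
function frameAtTime(seconds, framesPerSecond) {
  return Math.floor(seconds * framesPerSecond) % FRAME_COUNT;
}

console.log(frameToUv(frameAtTime(1.5, 12)));
```

Because every frame shares one texture, each frame gets only a fraction of the stored resolution, which is the trade-off between memory footprint and image quality described above.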

Transform Monitoring

Reactive programming is built for optimized performance but doesn’t lend itself well to logic that must run at a consistent frame interval. Since notes fall at a relatively high velocity, lower-end devices can count fewer scoring opportunities or miss short notes altogether. To help, we lengthened note durations while keeping gameplay in mind, and developed an asynchronous function that scores on a 300ms interval to even out differences in device capability.
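The idea of scoring on a fixed interval instead of per frame can be sketched as follows. This is not the project's script: the note shape (`x`, `startMs`, `endMs`), the catch radius, and the function names are assumptions, and the standard `setInterval` timer stands in for whatever timer the effect uses. Only the 300 ms interval comes from the write-up.

```javascript
const SCORE_INTERVAL_MS = 300; // interval named in the write-up
const CATCH_RADIUS = 0.15;     // assumed horizontal catch tolerance

// Hypothetical note shape: { x, startMs, endMs }.
function isNoteActive(note, nowMs) {
  return nowMs >= note.startMs && nowMs <= note.endMs;
}

// Points earned at a single tick: one per active note within reach.
function scoreTick(notes, characterX, nowMs) {
  let points = 0;
  for (const note of notes) {
    const inReach = Math.abs(note.x - characterX) <= CATCH_RADIUS;
    if (isNoteActive(note, nowMs) && inReach) points += 1;
  }
  return points;
}

// Run scoreTick on a fixed interval; returns a function that stops it.
// getCharacterX and getNowMs are caller-supplied (hypothetical) getters.
function startScoring(notes, getCharacterX, getNowMs, onScore) {
  let total = 0;
  const handle = setInterval(() => {
    total += scoreTick(notes, getCharacterX(), getNowMs());
    onScore(total);
  }, SCORE_INTERVAL_MS);
  return () => clearInterval(handle);
}
```

With evenly spaced ticks, any note that lasts at least 300 ms overlaps at least one tick, which is one way to see why lengthening short notes helps. Real timers drift, so this holds only approximately on a device.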

Script Size

A recent Spark Hub report that larger script files had issues uploading really forced us to get organized. When a build is free-flowing and its direction isn't totally clear, it’s easier to use a single script.js, but that file can quickly become large and unruly. This event gave us the perfect opportunity to reorganize for the better and adopt a script architecture that we can benefit from moving forward.

What's next for P.L.U.R

We had a ton of fun making this effect, learned many things along the way, and pushed some features to the edge of their current capabilities. Now that we have a fully functioning piece, it’s ready to be released into the real world. You never know how an effect will be received, but it would be nice to eventually enhance it with more music and dance features.

Our hope is that future iterations will use either full body tracking or interactive approaches similar to Beat Saber, once Facebook Reality Labs releases its AR glasses.

Resources

Instagram Effect Link: https://www.instagram.com/ar/330180728028971/?ch=ZDE5ZjI1MmIxNzc1NjFjNzBiNDdiOGUzOTc5ZDcxMTc%3D

GitHub Repo: https://github.com/benursu/Afrosquared-PLUR

Demo Video: https://youtu.be/iHCXFk0-GQU

Built With

- 3dsmax

- ar

- javascript

- sparkar

- three.js
