Inspiration

Between the four of us, football (soccer) shows up in a lot of different ways. Some of us play. Some of us watch every match we can. Some of us spend more time on Football Manager than we'd like to admit. And one of us follows chess seriously.

What we kept noticing across all of those contexts was the same gap.

When you're playing FM and trying to decide whether a signing is worth it, you're staring at numbers. When you're watching a real match, those numbers disappear. You're left watching something happen and feeling like you either get it or you don't. The tactical layer (why a position is dangerous, why that pass opened everything up, where a team is building something) is almost completely invisible unless you've trained your eye for years.

Chess solved this a long time ago. The eval bar doesn't require you to understand engine lines. It just moves, and suddenly anyone watching can feel the tension building. A casual viewer and a grandmaster are looking at the same screen and both understanding that something is shifting.

Football has never had that. We talked about it from three angles: the fan trying to learn the game, the coach at a smaller club who wants to understand their team's patterns, and the player who wants to understand their own decisions. The more we talked, the more it seemed like an obvious and exciting problem to go after.

That is where PitchIQ started.

The Opportunity We Saw

Football analytics is not a new idea. But the tools that exist today are almost entirely built for the top of the pyramid.

A Premier League club has a full analytics department, proprietary tracking systems, and dedicated video analysts. Below that level, most coaches are working from broadcast footage, memory, and intuition. And the gap does not stop at the professional level. Academy coaches, university teams, and grassroots setups are making decisions about players and tactics with almost no analytical support.

The insight is already sitting in broadcast footage. Every match is being filmed. PitchIQ pulls that insight out automatically, using the video that already exists, with no special hardware and no data subscriptions. The same depth of analysis that a top club pays for becomes accessible to anyone with a broadcast clip.

That is the gap we are building toward.

What PitchIQ Does

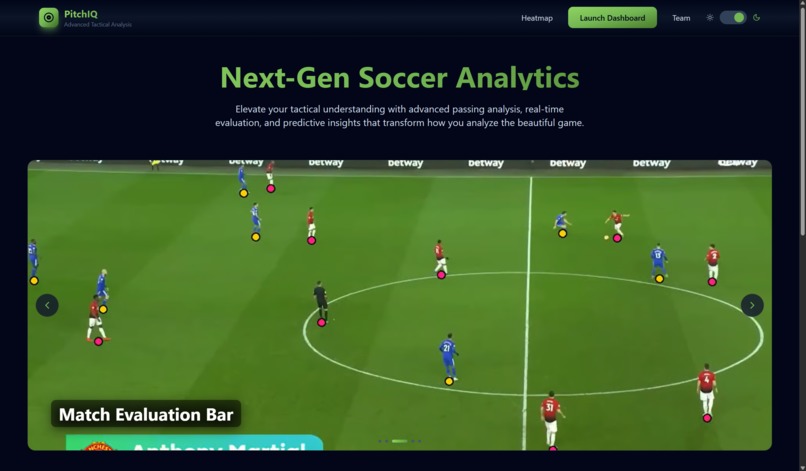

PitchIQ takes standard broadcast footage and produces five layers of tactical intelligence automatically. No sensors. No proprietary feeds. No special camera setup.

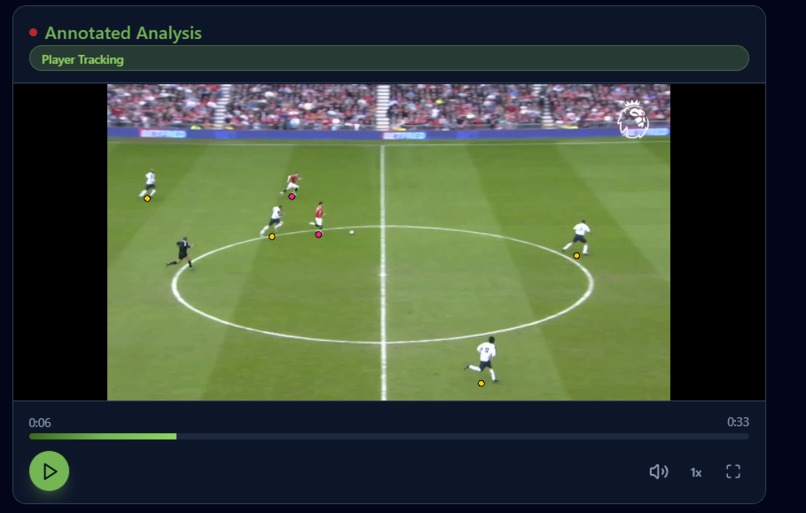

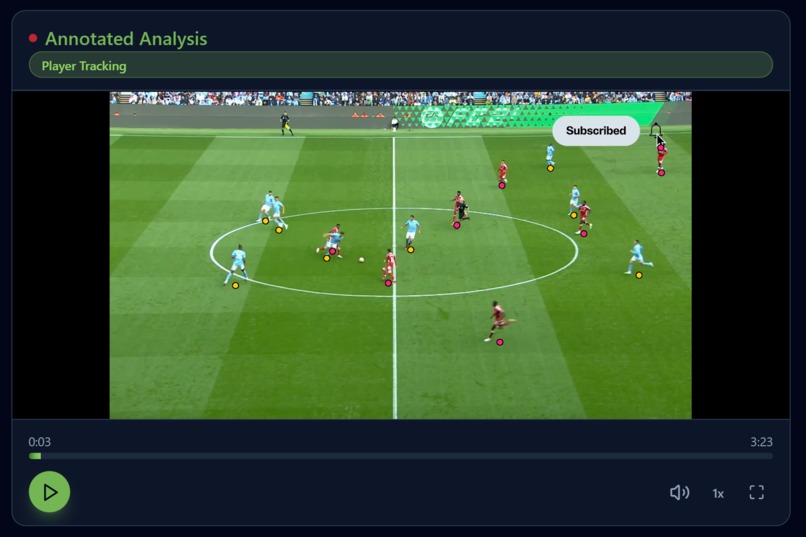

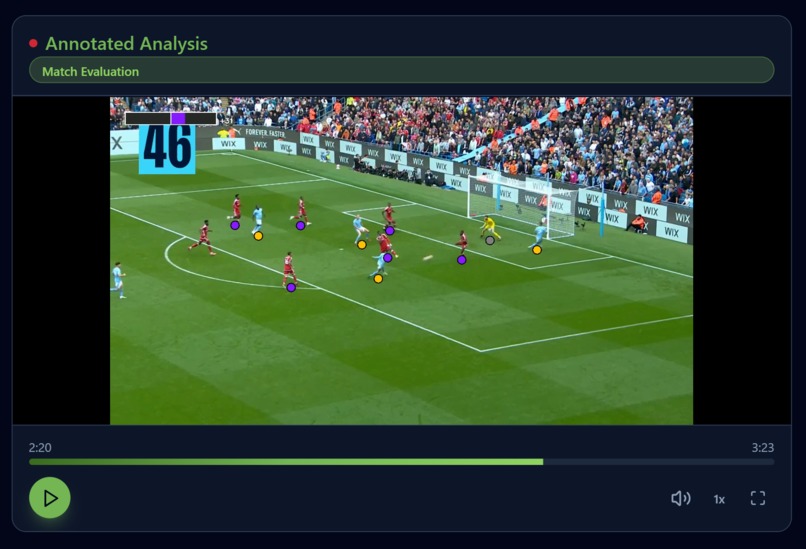

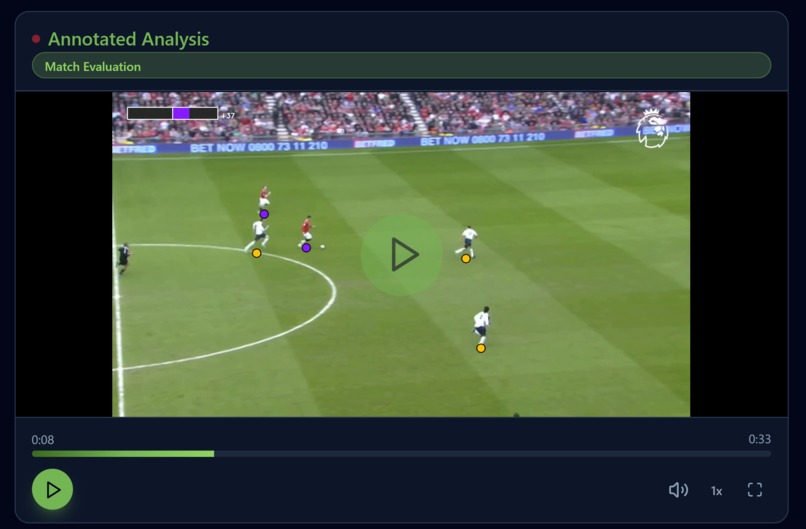

Player Tracking. Every player detected and color-coded by team, consistently across the clip. Referees identified and excluded from all tactical calculations. This is the foundation everything else builds on.

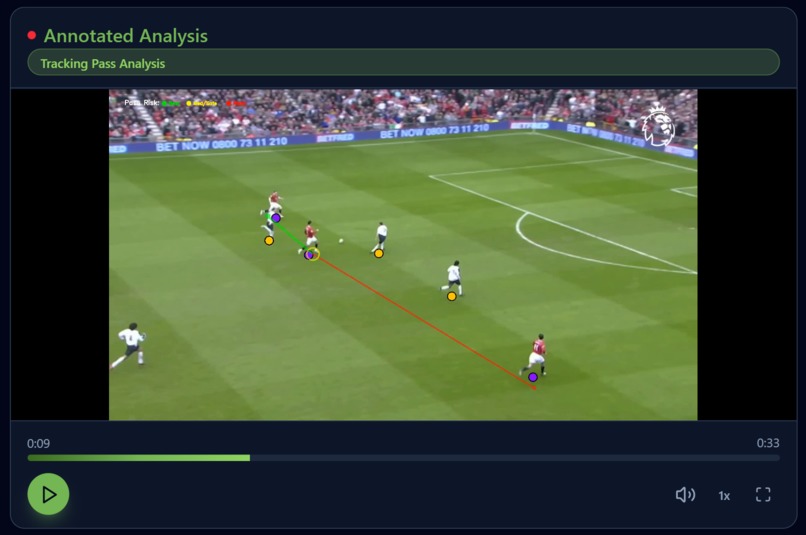

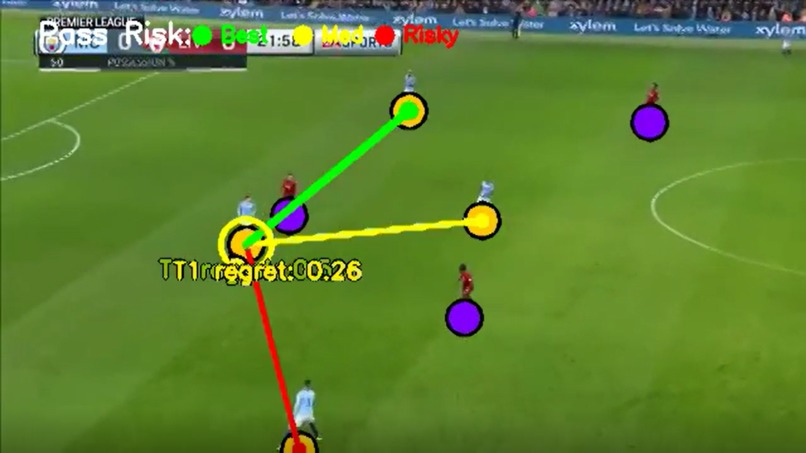

Pass Optimality. For the confirmed ball carrier, every available passing option scored and color-coded in real time. Green is the highest-threat option. Yellow is safe. Red is the conservative choice. Seeing this live is what makes tactical decision-making readable to anyone watching, not just trained analysts.

The Eval Bar. Our signature feature, and the idea that started the whole project. A single number from -100 to +100 showing which team has the tactical advantage and how it is shifting. It responds to threat, space control, possession, pressure, and sustained forward carries into dangerous zones. The same way Stockfish makes a chess position legible to anyone watching, PitchIQ makes a football position legible. When a player drives toward goal, you watch it climb. That is the feeling we were building toward.

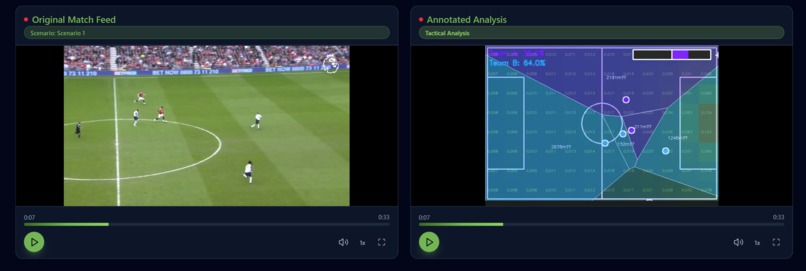

Tactical View. A real-time split-screen with Voronoi space control polygons and an Expected Threat heatmap overlay. Shows which zones belong to which team and where danger is concentrating, updated every frame.

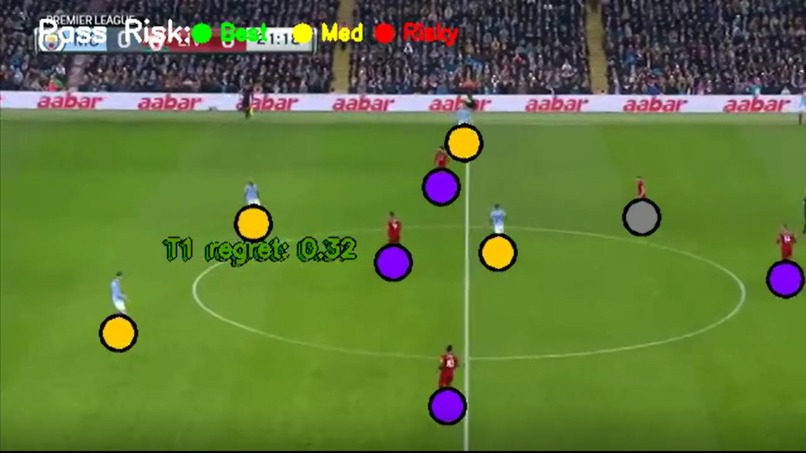

The Regret Heatmap. The feature we are most proud of. After a full match, PitchIQ maps every passing decision and measures the gap between the best available option and what was actually played, zone by zone, across the entire match, broken down by half, with attack direction normalized so both halves are always directly comparable.

The result is a spatial map of decision quality. Where did this team consistently find the best option? Where did they leave value on the table? Coaches and analysts get match-level pattern insight from footage they already have.

How We Built It

We designed PitchIQ as a broadcast-first decision engine.

Understanding the frame. We use YOLOv8 to detect players, goalkeepers, and the ball from broadcast footage, then classify players by team using jersey color analysis with temporal stability so labels stay consistent across the clip. Referees are identified and excluded from tactical calculations automatically.
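As a rough illustration of jersey-color classification with temporal stability, the sketch below assigns each detection to the nearest reference color and then smooths the per-frame guesses with a sliding majority vote. The reference colors, the window size, and the names `closest_team` and `StableTeamLabel` are assumptions made for this example, not PitchIQ's actual code.

```python
from collections import Counter, deque


def closest_team(jersey_rgb, team_colors):
    """Return the team whose reference color is nearest in RGB space."""
    return min(
        team_colors,
        key=lambda team: sum((a - b) ** 2 for a, b in zip(jersey_rgb, team_colors[team])),
    )


class StableTeamLabel:
    """Smooth noisy per-frame team guesses for one tracked player."""

    def __init__(self, window=15):
        # Keep only the most recent `window` per-frame guesses (assumed size).
        self.history = deque(maxlen=window)

    def update(self, frame_guess):
        """Record this frame's guess and return the current majority label."""
        self.history.append(frame_guess)
        label, _count = Counter(self.history).most_common(1)[0]
        return label


# Hypothetical reference colors for the two teams.
team_colors = {"home": (200, 30, 30), "away": (30, 30, 200)}
first_guess = closest_team((180, 50, 60), team_colors)

# One mislabeled frame ("away") is outvoted by its neighbours.
tracker = StableTeamLabel(window=5)
labels = [tracker.update(g) for g in ["home", "home", "away", "home", "home"]]
```

A single bad frame, for example a player partly hidden behind an opponent, no longer flips the label, which is the behaviour the "temporal stability" description calls for.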

Mapping broadcast to tactics. We estimate the pitch layout using keypoint detection and project player positions into real-world pitch coordinates. This gives us team shape, space control, ball progression, and attacking threat, the inputs that power the split-screen tactical board.
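The projection step can be sketched as applying a 3x3 homography to each player's pixel position. The matrix below is a toy scaling from a 1920x1080 frame to a 105 m x 68 m pitch, chosen only so the arithmetic is easy to follow; a real broadcast homography has perspective terms and would be fitted from the detected pitch keypoints. The function name `project_to_pitch` and the pitch dimensions are assumptions.

```python
def project_to_pitch(H, u, v):
    """Map pixel (u, v) to pitch coordinates using a 3x3 homography H.

    H is a row-major nested list. The result is divided by the
    homogeneous coordinate w, as in any projective transform.
    """
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    return x / w, y / w


# Toy homography: pure scaling, no perspective (illustrative only).
H_toy = [
    [105.0 / 1920.0, 0.0, 0.0],
    [0.0, 68.0 / 1080.0, 0.0],
    [0.0, 0.0, 1.0],
]

# The centre of the frame lands on the centre spot of the pitch.
centre_x, centre_y = project_to_pitch(H_toy, 960, 540)
```

Once every player is in pitch coordinates, team shape and space control can be computed in metres rather than pixels.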

Scoring decisions, not just events. Once we know the ball carrier and available options, we score each candidate pass using two signals: the threat gained from that pass (Expected Threat / xT from the StatsBomb open grid) and the space available at the receiving position (Voronoi area control). We compare the chosen pass to the best available one and accumulate that gap spatially into the regret heatmap.
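One simple way to approximate Voronoi area control is to split the pitch into small cells and give each cell to its nearest player. The sketch below does that and returns the area owned by each player; the grid resolution, pitch size, and function name are assumptions, and an exact polygon-based Voronoi computation would give the same areas up to discretization error.

```python
def voronoi_areas(players, length=105.0, width=68.0, step=1.0):
    """Approximate each player's Voronoi area in square metres.

    players: list of (team, x, y) tuples in pitch coordinates.
    Each step x step cell is assigned to the player nearest its centre.
    """
    areas = [0.0] * len(players)
    n_x, n_y = int(length / step), int(width / step)
    for i in range(n_x):
        for j in range(n_y):
            cx, cy = (i + 0.5) * step, (j + 0.5) * step
            owner = min(
                range(len(players)),
                key=lambda p: (players[p][1] - cx) ** 2 + (players[p][2] - cy) ** 2,
            )
            areas[owner] += step * step
    return areas


# Two players on the halfway axis; the boundary between them is x = 55.
areas = voronoi_areas([(0, 30.0, 34.0), (1, 80.0, 34.0)])
```

The area around a candidate receiver is then one plausible reading of "the space available at the receiving position".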

A key detail: xT is computed relative to each team's attacking direction. Both teams read their own version of the grid, oriented toward their own attacking goal. This was the single biggest unlock for eval bar accuracy.

Making it watchable. The same clip renders in four synchronized modes so different viewers can inspect the same moment from different angles: tracking, pass optimality, eval bar, and tactical board. The frontend dashboard is built in Figma Make with synchronized dual-panel playback and a live mode switcher.

The system is designed to be conservative and trustworthy. Pass lines only appear from the confirmed ball carrier. Team labels use temporal stability logic. All tactical signals are team-direction aware.

Challenges We Took On

Working from real broadcast footage. We chose to build entirely on standard broadcast video rather than sensor data or proprietary feeds. That meant building a pipeline that holds up on footage with varying camera angles, zoom levels, lighting, and motion. Every scenario we processed made the system more robust.

Ball tracking is a genuinely hard problem. The ball is small, fast, and frequently occluded in real broadcast footage. We built conservative holder-confirmation logic and short-horizon fallback behavior so the system always prioritizes correctness. This constraint directly shaped the design of our pass analysis and made the regret system more principled.

One GPU pod between four people. Processing a full 90-minute match takes 20 to 40 minutes at our sampling rate. That forced us to be disciplined about what to validate, what to render fully, and what to optimize first. Five working analytical layers in 36 hours is a direct result of that discipline.

What We Are Proud Of

In under 36 hours, we built a working broadcast analytics pipeline that goes well beyond detection.

The regret heatmap is the piece we are most excited about. We went looking for an open tool that converts passing decisions into a spatial, match-level decision quality map and could not find one. Accumulating the gap between optimal and actual decisions zone by zone, normalized for attack direction and halftime side-switching, is something we think is genuinely new and genuinely useful.

We also went in with a full feature list and shipped every single item on it. Five analytical layers, four synchronized output modes, a full-match regret pipeline, and a live dashboard. That does not happen often in 36 hours.

What We Learned

We learned Voronoi diagrams, not the textbook version but the version that has to handle real player positions, referee interference, and goalkeeper clearances mid-play.

We learned that scoring the best available pass option is a tractable problem. Scoring which passes were suboptimal and mapping that spatially across a match is a harder and more interesting one. Most of the depth in PitchIQ came from that second question.

We learned that the gap between working on a demo clip and working on real broadcast footage is where every interesting engineering problem actually lives.

What Is Next

The immediate goal is near-real-time processing for live broadcast integration, with latency low enough to show the eval bar moving during a match, not just in post-analysis.

Beyond that, PitchIQ should serve three audiences equally:

- The casual fan who wants to understand why a position is dangerous before the goal happens
- The serious analyst or coach who wants zone-level decision quality data across a full season
- The introductory viewer who's new to football and needs the eval bar to understand what they're watching

Chess figured this out. Millions of casual viewers watch games they would not otherwise follow because the eval bar gives them a way in. Football deserves the same.

PitchIQ is that tool.

The Math Behind It

A key detail in how PitchIQ works is that Expected Threat (xT) is computed relative to each team's attacking direction. Let \( L \) be pitch length and \( x_T(x,y) \) be the base xT grid. We define:

$$x_T^{(k)}(x,y) = \begin{cases} x_T(x,y) & k = 0 \\ x_T(L - x, y) & k = 1 \end{cases}$$

Team 0 reads the base grid. Team 1 reads a horizontally flipped version. Both teams therefore always score high xT near the goal they are attacking.
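The flip can be sketched as a small lookup function. The grid values below are made up for the example (they are not real xT values), and the function name, grid shape, and pitch dimensions are assumptions.

```python
def xt_for_team(base_grid, x, y, team, length=105.0, width=68.0):
    """Look up xT at pitch position (x, y) from the given team's perspective.

    base_grid[row][col] is the base xT grid, with columns running along
    the pitch length. Team 1 reads the grid mirrored along the length,
    i.e. x_T(L - x, y).
    """
    rows, cols = len(base_grid), len(base_grid[0])
    if team == 1:
        x = length - x
    col = min(int(x / length * cols), cols - 1)
    row = min(int(y / width * rows), rows - 1)
    return base_grid[row][col]


# Toy 2 x 4 grid: threat rises toward x = L (illustrative numbers only).
toy_grid = [
    [0.01, 0.02, 0.05, 0.20],
    [0.01, 0.02, 0.05, 0.20],
]

team0_near_goal = xt_for_team(toy_grid, 100.0, 34.0, team=0)  # attacking x = L
team1_near_goal = xt_for_team(toy_grid, 5.0, 34.0, team=1)    # attacking x = 0
team1_far_away = xt_for_team(toy_grid, 100.0, 34.0, team=1)   # deep in own half
```

Both teams read 0.20 when close to the goal they attack, and team 1 reads only 0.01 at the far end, which is the symmetry the definition is meant to guarantee.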

Pass Optimality Score

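For each candidate receiver \( r \) at time \( t \):

$$S_{t,r}^{(k)} = \alpha_s \, \hat{x}_{t,r}^{(k)} + (1 - \alpha_s) \, \hat{a}_{t,r}$$

Here \( \hat{x}_{t,r}^{(k)} \) is the xT-based threat term for team \( k \), \( \hat{a}_{t,r} \) is the Voronoi space-control term at the receiving position, and \( \alpha_s \in [0, 1] \) sets the balance between the two.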
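A worked example of the score, with made-up inputs: each option carries a threat term and a space term, both assumed here to be scaled to the range 0 to 1, and \( \alpha_s = 0.7 \) is a placeholder weight rather than the value PitchIQ uses.

```python
def pass_scores(options, alpha_s=0.7):
    """Blend the threat and space terms into one score per receiver.

    options: dict mapping receiver name -> (threat_term, space_term).
    alpha_s: weight on the threat term (placeholder value).
    """
    return {
        receiver: alpha_s * threat + (1.0 - alpha_s) * space
        for receiver, (threat, space) in options.items()
    }


# Hypothetical options for one ball carrier.
options = {
    "striker": (0.9, 0.2),      # dangerous but tightly marked
    "winger": (0.5, 0.8),       # moderate threat, lots of space
    "centre_back": (0.1, 1.0),  # safe recycle
}
scores = pass_scores(options)
best_receiver = max(scores, key=scores.get)
```

With these numbers the striker scores 0.69, the winger 0.59, and the centre-back 0.37, so the striker is the top option. Lowering `alpha_s` shifts the ranking toward receivers in space.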

Regret

If the actual pass at time \( t \) goes to receiver \( r_t^* \), instantaneous regret is:

$$\mathcal{R}_t^{(k)} = \max_{r \in \mathcal{O}_t} S_{t,r}^{(k)} - S_{t,r_t^*}^{(k)}$$

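Here \( \mathcal{O}_t \) is the set of passing options available at time \( t \). If the chosen pass is optimal, regret is zero. Regret accumulates per pitch grid cell \( C_{ij} \), summed over team \( k \)'s passes \( \mathcal{P}^{(k)} \):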

$$H_{ij}^{(k)} = \sum_{t \in \mathcal{P}^{(k)}} \mathcal{R}_t^{(k)} \cdot \mathbf{1}[(x_t, y_t) \in C_{ij}]$$

The grid is Gaussian-smoothed and capped at the 95th percentile. Halftime side-switch correction ensures both halves are spatially comparable.
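The accumulation step can be sketched in a few lines. This version covers only the per-cell sum; the Gaussian smoothing, percentile cap, and side-switch correction are left out, and the grid size, pitch dimensions, and input format are assumptions made for the example.

```python
def accumulate_regret(passes, n_rows=8, n_cols=12, length=105.0, width=68.0):
    """Sum per-pass regret into a coarse pitch grid.

    passes: iterable of (x, y, scores, chosen), where scores maps each
    available receiver to its pass score and chosen is the receiver
    actually picked.
    """
    heatmap = [[0.0] * n_cols for _ in range(n_rows)]
    for x, y, scores, chosen in passes:
        # Regret: best available score minus the score of the chosen pass.
        regret = max(scores.values()) - scores[chosen]
        i = min(int(y / width * n_rows), n_rows - 1)
        j = min(int(x / length * n_cols), n_cols - 1)
        heatmap[i][j] += regret
    return heatmap


# Two hypothetical passes from the same zone: one suboptimal, one optimal.
passes = [
    (50.0, 30.0, {"a": 0.7, "b": 0.4}, "b"),  # regret 0.3
    (51.0, 31.0, {"a": 0.6, "b": 0.5}, "a"),  # regret 0.0
]
heatmap = accumulate_regret(passes)
total_regret = sum(sum(row) for row in heatmap)
```

Only the first pass contributes, so the single non-zero cell holds about 0.3. Over a full match, cells with consistently high totals mark the zones where value was left on the table.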

Eval Bar

$$E_t = 100 \cdot \tanh\Big(\gamma \, r_t \,(w_1 \Delta\text{xT}_t + w_2 \Delta\text{PitchControl}_t + w_3 \Delta\text{Possession}_t + w_4 \Delta\text{Pressure}_t + b_t^{\text{carry}})\Big)$$

For sustained forward carries, momentum builds exponentially:

$$m_t = \delta m_{t-1} + \max(0, x_{T,t}^{\text{active}} - x_{T,t-1}^{\text{active}})$$

$$b_t^{\text{carry}} = \eta(e^{\beta m_t} - 1) \cdot \text{sgn}(\text{attacking team})$$

This is what makes the eval bar climb continuously during a dangerous dribble run rather than reacting only at the final pass or shot.
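To see the carry term in action, here is a minimal sketch of the momentum recursion and the final squashing step. The source does not give values for \( \gamma \), \( w_1 \) to \( w_4 \), \( \delta \), \( \eta \), or \( \beta \), and does not define \( r_t \), so the defaults below are placeholders and \( r_t \) is treated as a plain scalar multiplier.

```python
import math


def carry_bonuses(xt_series, attacking_sign, delta=0.8, eta=0.05, beta=3.0):
    """Carry bonus per frame for one ball carrier.

    xt_series: the carrier's xT value at successive frames.
    attacking_sign: +1 or -1 depending on which team is attacking.
    """
    momentum = 0.0
    bonuses = []
    for prev, cur in zip(xt_series, xt_series[1:]):
        # Only forward progress (rising xT) adds momentum; old momentum decays.
        momentum = delta * momentum + max(0.0, cur - prev)
        bonuses.append(eta * (math.exp(beta * momentum) - 1.0) * attacking_sign)
    return bonuses


def eval_bar(d_xt, d_control, d_possession, d_pressure, carry,
             weights=(0.4, 0.3, 0.2, 0.1), gamma=1.0, r_t=1.0):
    """Squash the weighted signal mix into the open interval (-100, 100)."""
    w1, w2, w3, w4 = weights
    raw = w1 * d_xt + w2 * d_control + w3 * d_possession + w4 * d_pressure + carry
    return 100.0 * math.tanh(gamma * r_t * raw)


# A dribble that keeps moving into more dangerous areas.
bonuses = carry_bonuses([0.02, 0.05, 0.10, 0.18], attacking_sign=+1)
bar = [eval_bar(0.1, 0.05, 0.2, 0.0, b) for b in bonuses]
```

Because each frame of the run adds momentum before the previous momentum has decayed, the bonus grows frame after frame and the bar climbs throughout the carry.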
