DEMO VIDEO CONTAINS KISSING SCENE

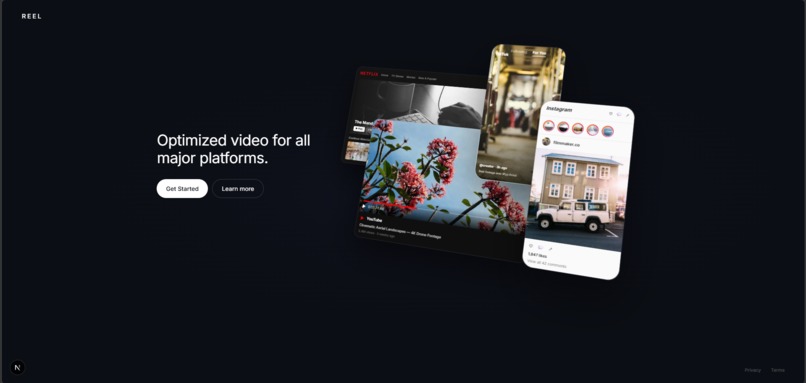

REEL - Rating Enhancement & Editing Layer

Project Inspiration: Why REEL Matters

Media is currently static, but audiences are diverse. A historical archive might be unwatchable for someone with photosensitive epilepsy due to film flicker; a cinematic masterpiece might be inaccessible to a family because of a single scene of profanity or intimacy.

The current solution is a "binary" choice: you either watch the content and risk the trigger, or you don't watch it at all. We believe that in the age of Generative AI, content should be as adaptive as the people watching it. We built REEL to move beyond the "blur" and "mute" buttons. Our motivation was to create a Surgical Inpainting Pipeline that preserves the director's vision while making the world’s media safe, accessible, and inclusive for everyone.

Technology Stack

Languages

- Python 3.11+: Powering our asynchronous orchestration backend.

- TypeScript / JavaScript: Ensuring a type-safe, responsive frontend.

- CSS (Tailwind): For our high-performance "Mission Control" UI.

Frameworks and Libraries

- FastAPI: Powers our asynchronous backend, which handles concurrent AI agent tasks.

- Next.js 15 (App Router): Built with Turbopack for fast development builds and hot reloading.

- Railtracks: Our critical observability layer, used for orchestrating the Sentinel and Forge agents.

- FFmpeg: The "engine" of our project, used for frame-accurate slicing and hardware-accelerated stitching.
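To illustrate what frame-accurate slicing with FFmpeg involves, here is a small command builder. It is a minimal sketch, not REEL's actual code: the helper names `frame_to_seconds` and `build_slice_command` are hypothetical, and it assumes a constant frame rate and re-encoding with libx264/AAC, because stream copy (`-c copy`) can only cut on keyframes.

```python
import subprocess
from fractions import Fraction


def frame_to_seconds(frame: int, fps: Fraction) -> float:
    """Convert a frame index to a timestamp, assuming a constant frame rate."""
    return float(frame / fps)


def build_slice_command(src: str, dst: str, start_frame: int, end_frame: int,
                        fps: Fraction) -> list[str]:
    """Hypothetical helper: build an ffmpeg command cutting frames [start_frame, end_frame)."""
    if end_frame <= start_frame:
        raise ValueError("end_frame must be greater than start_frame")
    start = frame_to_seconds(start_frame, fps)
    duration = frame_to_seconds(end_frame - start_frame, fps)
    return [
        "ffmpeg", "-y",
        "-ss", f"{start:.6f}",     # input seek; frame-accurate when transcoding
        "-i", src,
        "-t", f"{duration:.6f}",   # length of the output clip
        "-c:v", "libx264",         # re-encode so the cut need not land on a keyframe
        "-c:a", "aac",
        dst,
    ]


def slice_clip(src: str, dst: str, start_frame: int, end_frame: int, fps: Fraction) -> None:
    """Run the command; raises CalledProcessError if ffmpeg fails."""
    subprocess.run(build_slice_command(src, dst, start_frame, end_frame, fps), check=True)
```

Keeping the frame rate as a `Fraction` avoids rounding drift for rates such as 30000/1001 (29.97 fps).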

Platforms

- Google Cloud Platform (GCP):

  - Vertex AI: Hosting our Gemini 2.0 Flash (Sentinel) and Veo 3.1 (Forge) models.

  - We used over $150 in Gemini credits generating videos.

Tools

- VSCode + AI: Our primary IDE for rapid prototyping.

- Git Bash: For managing our local Windows development environment.

Product Summary: How it Works

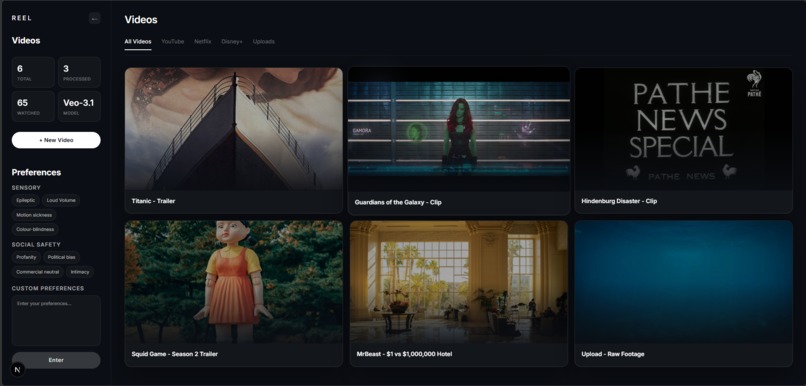

REEL (Real-time Enhancement & Editing Layer) is a surgical AI video sanitization platform. It transforms "static" video into an "adaptive" experience through a three-stage agentic pipeline:

The Sentinel (Scan): Our Gemini 2.0 Flash agent scans the video for segments that match the user's selected "Safety Toggles" (e.g., Epilepsy, Profanity, Intimacy).
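The Sentinel step can be sketched as two pure functions: one turns the enabled Safety Toggles into a scan instruction, and the other validates the model's reply into time-stamped segments. This is an illustration under assumptions, not REEL's code: the toggle names, the JSON reply schema (`category`, `start`, `end` in seconds), and the function names are hypothetical, and the actual Gemini request is left out.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    category: str
    start: float  # seconds
    end: float    # seconds


# Hypothetical toggle names mirroring the "Safety Toggles" described above.
TOGGLES = {"epilepsy", "profanity", "intimacy"}


def build_scan_prompt(enabled: set[str]) -> str:
    """Build a scan instruction covering only the toggles the viewer enabled."""
    unknown = enabled - TOGGLES
    if unknown:
        raise ValueError(f"unknown toggles: {sorted(unknown)}")
    categories = ", ".join(sorted(enabled))
    return (
        f"Find every segment of this video containing: {categories}. "
        "Reply only with a JSON list of objects like "
        '{"category": "...", "start": 0.0, "end": 0.0}, with times in seconds.'
    )


def parse_segments(reply: str, enabled: set[str], duration: float) -> list[Segment]:
    """Keep well-formed segments for enabled categories, clamped to the video length."""
    segments = []
    for item in json.loads(reply):
        category = item.get("category")
        if category not in enabled:
            continue  # ignore categories the viewer did not ask to filter
        start = max(0.0, float(item["start"]))
        end = min(duration, float(item["end"]))
        if end > start:  # drop empty or inverted ranges
            segments.append(Segment(category, start, end))
    return sorted(segments, key=lambda s: s.start)
```

Validating the reply in code, rather than trusting the model's output directly, keeps malformed or out-of-range timestamps from reaching the generation step.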

The Forge (Generate): The backend triggers Veo 3.1 to perform generative inpainting on the identified segments. Rather than blurring the pixels, it replaces them, transforming a middle finger into a thumbs-down or a flickering video into a stabilized stream, all while preserving the original context.

The Assembler (Stitch): Using a multi-threaded FFmpeg pipeline, we surgically "hot-swap" the AI-generated clips into the original video stream with zero audio drift.
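The hot-swap can be framed as an interval problem: merge overlapping flagged segments, then walk the timeline, alternating untouched spans of the original with generated replacements. This is a sketch under assumptions, not REEL's pipeline: it assumes each span has already been cut to its own file, and the piece file names (`orig_0.mp4`, `gen_1.mp4`, ...) are hypothetical. The output uses FFmpeg's concat demuxer list format (`file '...'` lines), which can be joined with `ffmpeg -f concat -safe 0 -i list.txt -c copy out.mp4` provided all pieces share the same codecs and parameters.

```python
def merge_intervals(intervals: list[tuple[float, float]]) -> list[tuple[float, float]]:
    """Merge overlapping or touching (start, end) intervals."""
    merged: list[tuple[float, float]] = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def build_timeline(duration: float,
                   flagged: list[tuple[float, float]]) -> list[tuple[str, float, float]]:
    """Split [0, duration] into ("orig", start, end) and ("gen", start, end) pieces in playback order."""
    timeline: list[tuple[str, float, float]] = []
    cursor = 0.0
    for start, end in merge_intervals(flagged):
        if start > cursor:
            timeline.append(("orig", cursor, start))  # untouched original footage
        timeline.append(("gen", start, end))          # slot for a generated replacement
        cursor = end
    if cursor < duration:
        timeline.append(("orig", cursor, duration))
    return timeline


def concat_list(timeline: list[tuple[str, float, float]]) -> str:
    """Render a concat-demuxer list; the piece file names are hypothetical."""
    lines = [f"file '{kind}_{i}.mp4'" for i, (kind, _, _) in enumerate(timeline)]
    return "\n".join(lines) + "\n"
```

Audio stays aligned only if every generated clip matches the length of the span it replaces; the sketch does not check that.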

Innovation

The core innovation of REEL is that it doesn't just "block" content; it reimagines it. Traditional filters are clunky: they blur the screen or mute the sound, which ruins the movie. Instead, REEL uses a "smart swap" system. By the time you press play, our AI has already identified the specific parts to change and produced suitable replacements that match the original scene's context. Because this happens beforehand, the result is a seamless video that stays safe without ever breaking the story or making you wait for a loading bar.

AI Use Survey

Was more than 70% of the code generated by AI?

Yes.

Built With

- fastapi

- ffmpeg

- gemini

- nextjs

- python

- railtracks

- tailwind

- typescript

- veo
