Inspiration

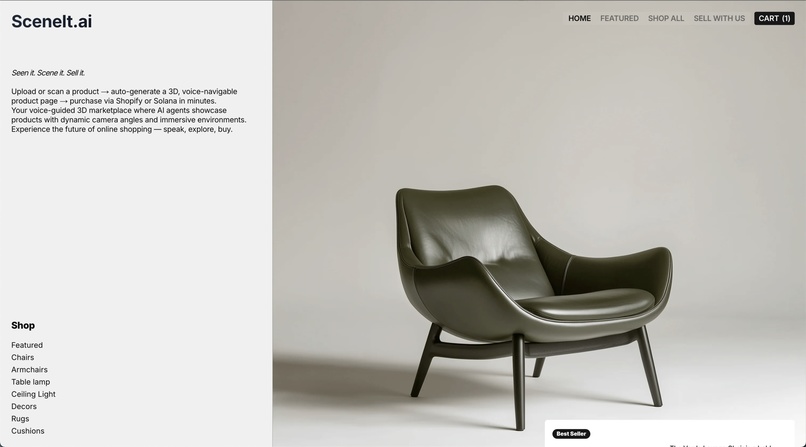

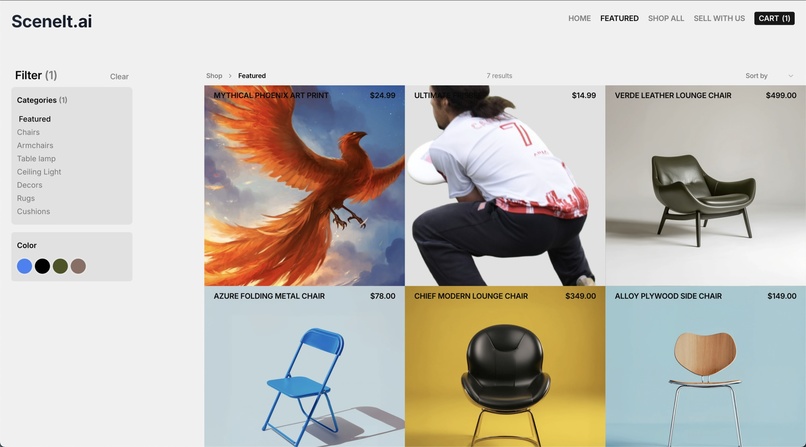

Shopping online still makes buyers do a ton of work: hunting for the right listing, asking basic questions, and trying to guess what an item really looks like from a couple of photos. We wanted to flip that around—let the seller upload one photo, and give buyers a hands-on 3D model plus a conversational sales agent that can answer questions and tour the product for them.

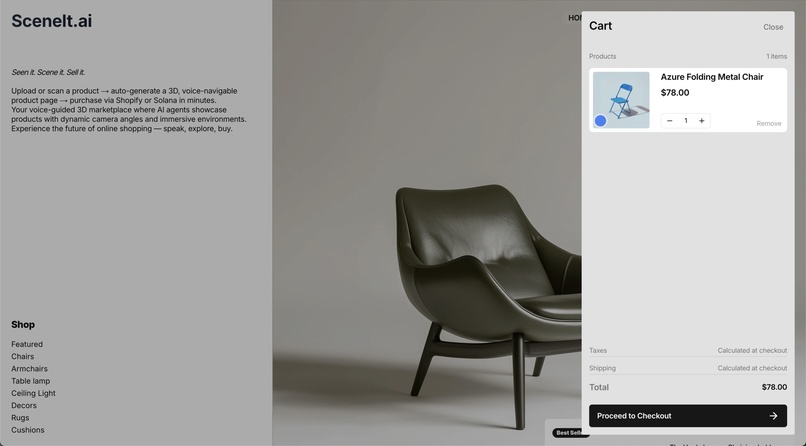

What it does

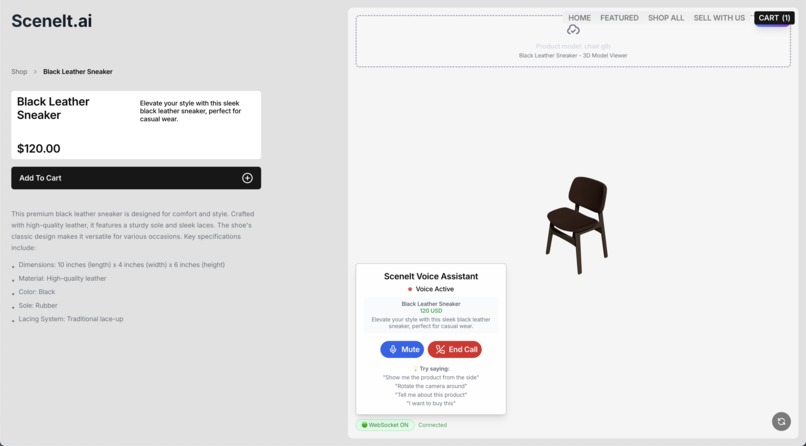

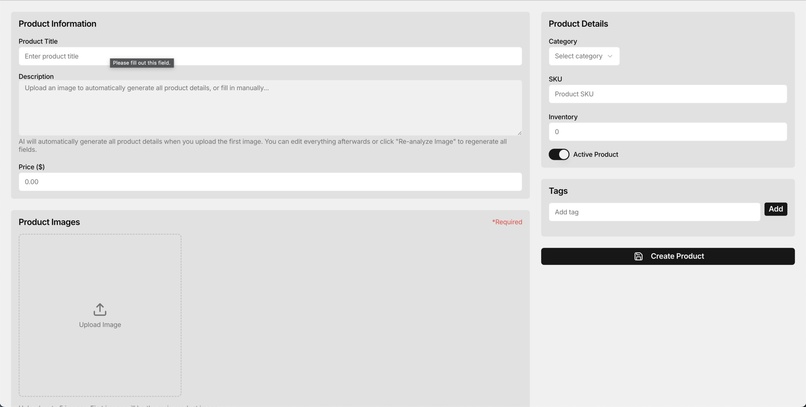

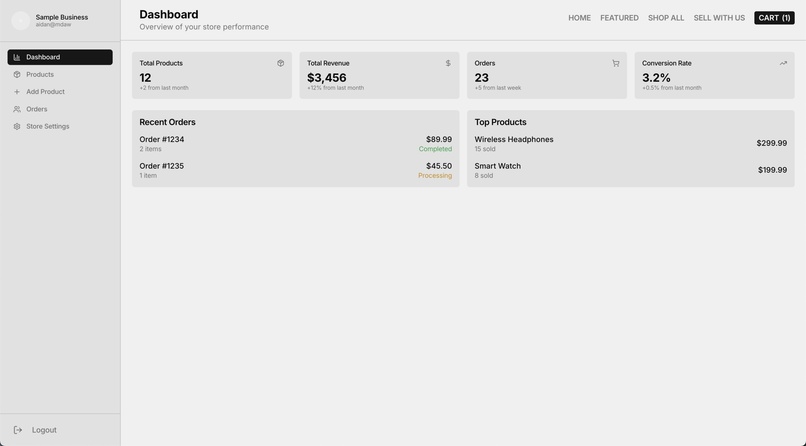

SceneIt takes a single product photo (e.g., a car), reconstructs a 3D mesh, and automatically uploads the model to our site. Buyers can explore the model and ask a voice sales agent anything, such as “show me the tires” or “what’s the interior like?”, and the agent answers and moves the 3D view to the right angle.
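Here is a minimal sketch of how a spoken request could be mapped to a camera viewpoint. It assumes hand-authored presets for each product. The `Viewpoint` type, the `CAR_VIEWPOINTS` values and the `matchViewpoint` helper are hypothetical illustrations, not SceneIt's actual code. A real agent would more likely let the language model pick a viewpoint through a tool call than rely on keyword matching.

```typescript
// Hypothetical camera preset for a named part of the product.
interface Viewpoint {
  name: string;
  keywords: string[];   // words in a request that should select this view
  azimuthDeg: number;   // rotation around the vertical axis
  elevationDeg: number; // angle above the horizon
  distance: number;     // distance from the model's centre, in model units
}

// Illustrative presets for a car; real values would depend on the generated mesh.
const CAR_VIEWPOINTS: Viewpoint[] = [
  { name: "front", keywords: ["front", "headlight", "grille"], azimuthDeg: 0, elevationDeg: 10, distance: 4 },
  { name: "wheels", keywords: ["tire", "tyre", "wheel", "rim"], azimuthDeg: 90, elevationDeg: 0, distance: 2.5 },
  { name: "interior", keywords: ["interior", "seat", "dashboard", "inside"], azimuthDeg: 30, elevationDeg: 35, distance: 1.5 },
  { name: "rear", keywords: ["rear", "back", "trunk", "boot"], azimuthDeg: 180, elevationDeg: 10, distance: 4 },
];

// Returns the first preset (in list order) whose keyword, or its simple plural,
// appears as a word in the request; returns undefined if nothing matches.
function matchViewpoint(request: string, presets: Viewpoint[]): Viewpoint | undefined {
  const words = request.toLowerCase().match(/[a-z]+/g) ?? [];
  return presets.find((p) =>
    p.keywords.some((k) => words.some((w) => w === k || w === k + "s"))
  );
}

const view = matchViewpoint("Show me the tires", CAR_VIEWPOINTS);
console.log(view?.name); // "wheels"
```

Because the match is naive and order-dependent, a request like "show me the front seats" resolves to the first matching preset ("front").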

How we built it

We built the web app in Next.js + TypeScript, with a product page that loads the generated 3D model. A Python service runs TripoSR to turn a single uploaded image into a mesh, exports it as an OBJ file, and automatically posts it back to the site. On top of that, a conversational agent built with Vapi’s TypeScript SDK handles real-time Q&A and can programmatically rotate, pan, and zoom the 3D view based on user requests.
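One way the camera control could be structured is sketched below. It assumes the agent emits a small structured command, for example as the arguments of a tool or function call, and the product page applies that command to an orbit camera. `CameraCommand`, `OrbitState`, `applyCommand` and `cameraPosition` are hypothetical names. Nothing here uses the Vapi SDK itself.

```typescript
// Hypothetical command shape the agent could emit (e.g., as tool-call arguments).
type CameraCommand =
  | { kind: "rotate"; deltaAzimuthDeg: number; deltaElevationDeg: number }
  | { kind: "pan"; dx: number; dy: number; dz: number }
  | { kind: "zoom"; factor: number }; // factor < 1 moves closer, > 1 moves away

// Orbit camera described in spherical coordinates around a target point.
interface OrbitState {
  target: [number, number, number]; // point the camera looks at
  azimuthDeg: number;
  elevationDeg: number;
  distance: number;
}

const MIN_DISTANCE = 0.5;
const MAX_DISTANCE = 20;
const MAX_ELEVATION = 89; // stay just short of the poles so the view never flips

function clamp(x: number, lo: number, hi: number): number {
  return Math.min(hi, Math.max(lo, x));
}

// Returns a new state (the input is not mutated).
function applyCommand(s: OrbitState, cmd: CameraCommand): OrbitState {
  switch (cmd.kind) {
    case "rotate":
      return {
        ...s,
        // Wrap azimuth into [0, 360), including for negative deltas.
        azimuthDeg: (((s.azimuthDeg + cmd.deltaAzimuthDeg) % 360) + 360) % 360,
        elevationDeg: clamp(s.elevationDeg + cmd.deltaElevationDeg, -MAX_ELEVATION, MAX_ELEVATION),
      };
    case "pan":
      return { ...s, target: [s.target[0] + cmd.dx, s.target[1] + cmd.dy, s.target[2] + cmd.dz] };
    case "zoom":
      return { ...s, distance: clamp(s.distance * cmd.factor, MIN_DISTANCE, MAX_DISTANCE) };
  }
}

// Converts the orbit state to a world-space camera position (y-up convention).
function cameraPosition(s: OrbitState): [number, number, number] {
  const az = (s.azimuthDeg * Math.PI) / 180;
  const el = (s.elevationDeg * Math.PI) / 180;
  return [
    s.target[0] + s.distance * Math.cos(el) * Math.sin(az),
    s.target[1] + s.distance * Math.sin(el),
    s.target[2] + s.distance * Math.cos(el) * Math.cos(az),
  ];
}
```

The state is immutable, and the elevation and distance are clamped. That keeps an exaggerated or misheard request ("zoom way in") within a sane range instead of flipping the camera over the top. The resulting position could then be handed to whatever renderer the page uses, for example a three.js camera via `position.set(...)` and `lookAt(...)`.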

Challenges we ran into

- Making the 3D renderings look good (colour, texture, etc.)

- Learning how to use the Vapi SDK

- Integrating the different parts of the project

Accomplishments that we're proud of

- Getting Vapi to navigate and describe 3D models in detail

- Modifying the 3D modelling code to display textures and colours more precisely

What we learned

- Computing the textures of a 3D model is the most computationally expensive part!

What's next for SceneIt

- Let the voice agent search the web and find other relevant products in the catalogue, for more comprehensive suggestions and feedback

- Improve our 3D scanning and photogrammetry models for faster, higher-definition 3D object generation with more realistic textures
