Inspiration

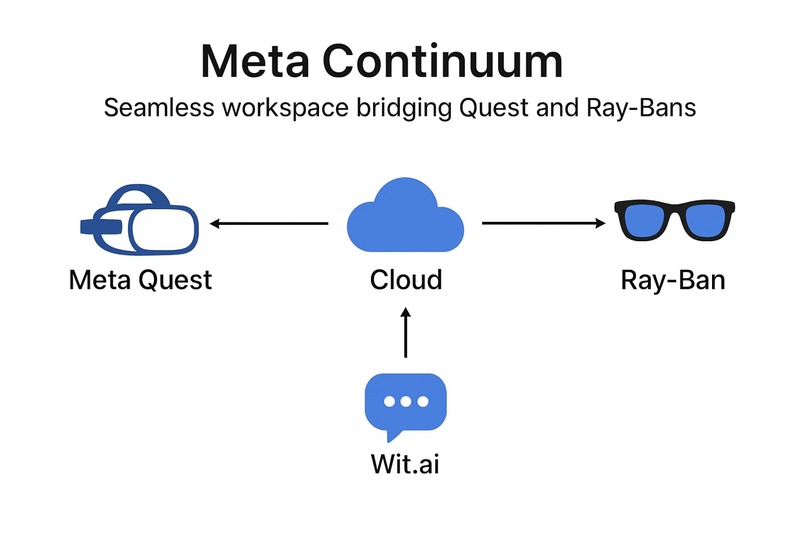

Work doesn’t belong to a screen — it belongs to the world around us. We wanted to dissolve the boundary between physical and digital spaces so collaboration and memory persist everywhere. Meta Continuum was inspired by Meta’s vision for ambient, AI-driven spatial computing across devices like Quest and Ray-Ban smart glasses.

What it does

On Meta Quest, users create sticky notes, whiteboards, and screens anchored in their environment using hand tracking and passthrough. On Ray-Ban smart glasses, users can recall, capture, or create workspace notes through voice commands and AI context. Everything stays synchronized through cloud anchors and shared data, creating a continuous “spatial memory” accessible anywhere.
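To make the idea of a synchronized "spatial memory" more concrete, here is a minimal sketch of how two devices' copies of the note store might be reconciled. It is an illustration under stated assumptions, not the project's actual data model: the `SpatialNote` shape, its field names, and the last-write-wins rule in `mergeNotes` are all hypothetical.

```typescript
// Hypothetical record shape: none of these field names come from the project.
interface SpatialNote {
  id: string;                   // stable note identifier shared by both devices
  anchorId: string;             // opaque ID of the spatial anchor the note is attached to
  text: string;                 // note contents
  updatedAt: number;            // milliseconds since epoch, set by the editing device
  source: "quest" | "glasses";  // which device made the last edit
}

// Merge two copies of the note store with a last-write-wins rule:
// for each note ID, keep whichever version has the larger updatedAt.
function mergeNotes(local: SpatialNote[], remote: SpatialNote[]): SpatialNote[] {
  const newest: Record<string, SpatialNote> = {};
  for (const note of local.concat(remote)) {
    const existing = newest[note.id];
    if (existing === undefined || note.updatedAt > existing.updatedAt) {
      newest[note.id] = note;
    }
  }
  return Object.keys(newest).map((id) => newest[id]);
}
```

Last-write-wins is the simplest possible policy and silently drops the older of two concurrent edits; a real multiuser workspace would likely need something more careful.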

How we built it

We built the immersive workspace in Unity using Meta’s Presence Platform SDK for hand tracking, passthrough, and spatial anchors. We added a voice command stub that connects to a Node.js relay, which uses Wit.ai to interpret speech from Ray-Ban inputs. Synced note data and anchor metadata are stored in a shared cloud backend, so notes and objects can be retrieved from both devices.
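As a rough sketch of what the relay step could look like, the function below turns an already-interpreted utterance into a command for the Unity client. Everything named here is an assumption for illustration: `ParsedUtterance` is a simplified stand-in and not the Wit.ai response schema, and the intent names, the `WorkspaceCommand` variants, and the `MIN_CONFIDENCE` threshold are hypothetical.

```typescript
// Hypothetical, simplified result of speech understanding. This is NOT the
// Wit.ai response schema; a relay would translate the real response into it.
interface ParsedUtterance {
  intent: string;      // e.g. "create_note" (intent names are assumptions)
  confidence: number;  // 0..1
  text: string;        // transcribed note body, may be empty
}

// Hypothetical commands the Unity client would act on.
type WorkspaceCommand =
  | { kind: "createNote"; text: string }
  | { kind: "recallNotes" }
  | { kind: "ignored"; reason: string };

// Assumed threshold; it would need tuning against real recordings.
const MIN_CONFIDENCE = 0.7;

// Map an interpreted utterance to a workspace command, dropping anything
// that is low-confidence, empty, or unrecognized.
function toCommand(u: ParsedUtterance): WorkspaceCommand {
  if (u.confidence < MIN_CONFIDENCE) {
    return { kind: "ignored", reason: "low confidence" };
  }
  switch (u.intent) {
    case "create_note": {
      const text = u.text.trim();
      if (text.length === 0) {
        return { kind: "ignored", reason: "empty note" };
      }
      return { kind: "createNote", text: text };
    }
    case "recall_notes":
      return { kind: "recallNotes" };
    default:
      return { kind: "ignored", reason: "unknown intent" };
  }
}
```

Returning an explicit `ignored` command, rather than throwing, lets the relay log why an utterance was dropped without interrupting the session.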

Challenges we ran into

- Bridging two very different device SDKs (Quest XR vs. Ray-Bans’ mobile/AI stack).
- Creating a consistent spatial reference system between virtual and real-world contexts.
- Designing a lightweight communication protocol for voice commands and cloud anchors.
- Balancing immersive 3D UX with voice-first interaction models.
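One way a lightweight protocol for voice commands and cloud anchors could be shaped is a small versioned JSON envelope that both devices validate on receipt. The sketch below is an assumption-laden illustration, not the protocol the project used: the `Envelope` fields, the version number, and the `encodeEnvelope` and `decodeEnvelope` helpers are all hypothetical.

```typescript
// Hypothetical wire format: field names and the version number are assumptions.
interface Envelope {
  v: 1;                             // protocol version
  type: "voice" | "anchor";         // which kind of payload follows
  deviceId: string;                 // sender
  sentAt: number;                   // milliseconds since epoch
  payload: Record<string, string>;  // flat string map keeps parsing trivial
}

function encodeEnvelope(msg: Envelope): string {
  return JSON.stringify(msg);
}

// Parse and validate an incoming message. Returns null instead of throwing
// so a relay can drop malformed input without crashing.
function decodeEnvelope(raw: string): Envelope | null {
  let m: any; // JSON.parse yields untyped data; every field is checked below
  try {
    m = JSON.parse(raw);
  } catch (e) {
    return null;
  }
  if (typeof m !== "object" || m === null) return null;
  if (m.v !== 1) return null;
  if (m.type !== "voice" && m.type !== "anchor") return null;
  if (typeof m.deviceId !== "string" || typeof m.sentAt !== "number") return null;
  if (typeof m.payload !== "object" || m.payload === null || Array.isArray(m.payload)) {
    return null;
  }
  const payload: Record<string, string> = {};
  for (const key of Object.keys(m.payload)) {
    if (typeof m.payload[key] !== "string") return null;
    payload[key] = m.payload[key];
  }
  return { v: 1, type: m.type, deviceId: m.deviceId, sentAt: m.sentAt, payload: payload };
}
```

Carrying an explicit version field means a headset and a pair of glasses running different builds can reject messages they do not understand instead of misreading them.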

Accomplishments that we're proud of

- Built a functional Unity XR workspace with hand-tracked sticky notes and persistent anchors.
- Prototyped cross-device communication between Quest and Ray-Bans using a voice-to-Unity pipeline.
- Defined a new user paradigm: continuous spatial work, bridging immersive and ambient computing.

What we learned

We learned how to design for continuity across devices — not just porting features, but maintaining context and identity through AI, voice, and spatial persistence. We also deepened our understanding of Meta’s Presence Platform, Graph API, and Ray-Ban ecosystem, seeing how they converge into one spatial computing future.

What's next for Seamless workspace bridging Quest and Ray-Bans

We’ll integrate the Meta Voice SDK for direct on-device processing, expand multiuser collaboration, and explore contextual AI assistants that understand your spatial environment. The goal: make your workspace follow you seamlessly — from headset to glasses to reality itself.

Built With

- metapresenceplatform

- mixedreality

- raybansmartglasses

- spatialcomputing

- unity

- witai
