The Moment That Inspired ShipGuard

It was 11 PM on a Friday. A senior engineer hit merge on what looked like a clean MR — tests passing, two approvals, no red flags. By midnight, production was down. A hardcoded Stripe API key had slipped through code review. Three engineers spent the weekend on incident response, rotating credentials, auditing access logs, and writing a postmortem nobody wanted to read.

That story isn't unique. Every engineering team has one.

The problem isn't that developers are careless. The problem is that release readiness is a distributed, manual judgment call — scattered across code reviewers who skim diffs, security scanners that cry wolf, compliance checklists nobody updates, and a Slack thread where someone types "LGTM." Things fall through the gaps. Every single time.

That Friday night is what built ShipGuard. Not as another scanner. Not as another dashboard. As the autonomous decision engine that replaces the judgment call entirely.

What We Built

ShipGuard is a fully autonomous, 8-agent release readiness system built on the GitLab Duo Agent Platform and powered by Anthropic Claude.

It fires automatically on every merge request. No human trigger. No manual checklist. No Slack ping. It answers the one question that matters:

Is this safe to ship — right now, with evidence?

Eight specialized AI agents run in a coordinated pipeline, each building on the findings of the last:

| # | Agent | What It Does |

|---|---|---|

| 1 | Quality Gate | Scores test coverage, code smells (TODO/FIXME/HACK, console.log, empty catch), documentation gaps, complexity. Produces a 0-100 score. |

| 2 | Security Sentinel | Hunts hardcoded secrets (API keys, tokens, passwords), SQL injection, XSS, SSRF, path traversal, eval(), prototype pollution, rejectUnauthorized:false. Triages every finding — dismisses test files, confirms production code, writes AI-powered fix suggestions for every CRITICAL. |

| 3 | Auto-Remediation | Reads all upstream findings and automatically creates a fix merge request with production-ready corrected code. One commit per fix. One branch. One MR. The developer just reviews and merges. |

| 4 | Compliance | Validates OWASP ASVS Level 1 controls (V9.1 TLS, V2.1 tokens, V5.3 SQLi, V7.1 credential logging), license policy (blocks GPL/AGPL/SSPL), approval rules, audit trail, branch protection. Scores 0-100. |

| 5 | Release Intelligence | Aggregates all upstream signals into a quantified risk score (0-100) and makes the call: GO / GO WITH CAUTION / NO-GO. Recommends semver bump. Writes plain-English release notes from the actual diff. |

| 6 | Deploy Advisor | Detects database migrations (DROP TABLE, DELETE, TRUNCATE), calculates deployment risk, recommends strategy: Full Rollout / Canary / Blue-Green / Canary + Feature Flags. Includes rollback plan and monitoring checklist. |

| 7 | Final Gate | The deterministic gatekeeper. Five hard conditions — quality score, compliance score, critical vulnerabilities, release decision, SAST findings — ALL must pass. No AI can override the threshold. Produces PASSED or BLOCKED with every reason documented. |

| 8 | Green Agent | Scores pipeline energy efficiency (runtime, artifact size, alpine image ratio, job parallelism), estimates CO2 footprint, suggests specific optimizations. Advisory only — never blocks. |

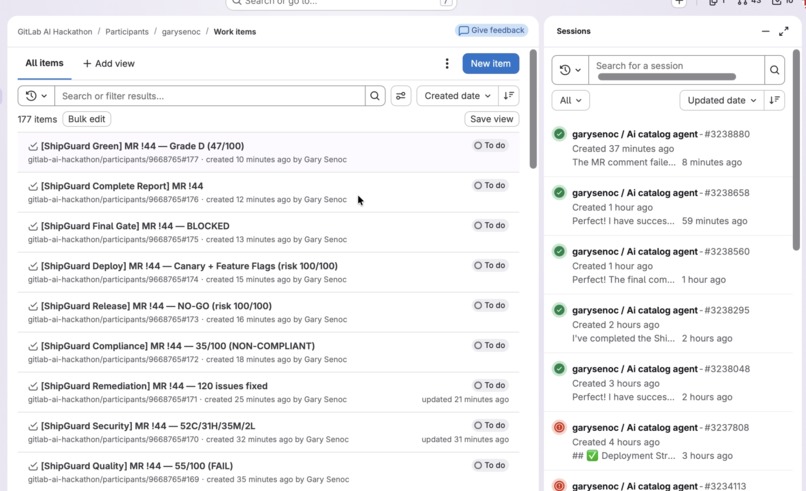

Every agent creates a GitLab Work Item with a full markdown report AND posts a comment on the MR — so the developer gets the complete picture without leaving their merge request.

No Slack pings. No guesswork. Just evidence.

How We Integrated Anthropic Claude

The GitLab Duo Agent Platform gives agents tools to act. Anthropic Claude gives them the intelligence to think.

ShipGuard's 8-agent flow runs entirely within GitLab Duo Workflow. Each agent's prompt was engineered using 17 peer-reviewed prompt engineering techniques from research by dair-ai, Google Cloud, Palantir, and promptingguide.ai:

| Technique | Source | What It Does |

|---|---|---|

| Chain-of-thought (APE-optimized) | dair-ai | Step-by-step reasoning before scoring |

| Meta-prompting | promptingguide.ai | Each agent knows its pipeline position ("agent 3 of 8") |

| Output anchoring | Directional Stimulus | RESULT line is always the first output |

| Evidence grounding | dair-ai, Hostinger | Every finding must cite exact code snippet — no proof, no finding |

| Self-verification | Tree of Thoughts | Agents re-check findings before posting |

| XML delimiters | Hostinger | Upstream data wrapped in tags to prevent confusion |

| Few-shot examples | dair-ai, Palantir | Concrete table rows showing expected format |

| Negative constraints | Google Cloud | Explicit "never say Cannot Assess, never give up" |

| ReAct pattern | promptingguide.ai | Think, Act, Observe for each tool call |

AI-Powered Fix Suggestions (Security Sentinel)

When a CRITICAL vulnerability is confirmed, Claude doesn't just flag it — it reads the vulnerable code, understands the context, and writes the exact corrected version. These appear as collapsible <details> blocks in the MR comment, so the developer gets the fix right where they're working.

Auto-Generated Fix MRs (Auto-Remediation)

This is where ShipGuard goes beyond any scanner. The remediation agent:

- Reads ALL findings from Quality Gate and Security Sentinel

- Writes production-ready fixed code for each vulnerability

- Creates ONE branch (

shipguard/auto-fix-all) with ONE commit per fix - Opens a merge request targeting the feature branch (never main)

- Posts a comment on the fix MR listing every change

The developer's job is reduced to: review the fix MR and click merge.

Claude-Written Release Notes (Release Intelligence)

Claude reads the full MR diff and commit history, then writes human-quality release notes categorized by Features, Fixes, and Breaking Changes — referencing actual files changed. No more "misc improvements" in your changelog.

All AI features degrade gracefully — if the API key isn't set, every agent continues working. The pipeline never breaks.

The Technical Architecture

ShipGuard is built as a real CI/CD system, not a demo.

Pipeline

- 12-job GitLab CI pipeline with stage ordering, artifact passing, and per-job timeouts

- npm cache keyed on

package-lock.json— eliminates redundant installs across jobs - Semgrep SAST with

auto+p/owasp-top-ten+p/r2c-security-auditrulesets - GitLab Pages dashboard published on every main merge

Agent Platform

- 9 standalone agent definitions (

agents/*.yml) — each invocable via@agent-name - 1 ambient flow (

flows/shipguard.yml) — 8-agent orchestrated pipeline triggered by@aimention - Router-based sequencing — Quality, Security, Remediation, Compliance, Release, Deploy, Gate, Green

- Upstream data passing — each agent receives prior agents' findings via flow context variables

Resilience

| Feature | Why It Matters |

|---|---|

project_id on every tool call |

Prevents 404s when agents run in the Duo executor context |

| NEVER GIVE UP rule | Agents always produce a report — fallback to direct file scanning if tools fail |

| DEDUP checking | Agents check list_work_items before creating — prevents duplicate work items |

| STOP termination | Explicit stop signal prevents infinite loops |

| One tool call per action | No chained dependent calls that could be interrupted by WebSocket drops |

| Graceful degradation | Every optional feature (AI, Slack, CO2 data) skips silently if absent |

Production Hardening

- HTTP timeouts: 30s GitLab API, 10s webhooks, socket destruction on hang

- Rate limiting:

Retry-Afterheader parsed on GitLab 429 responses - Error handling:

unhandledRejectionhandlers in every script npm cieverywhere — lockfile-exact installs, fail fast on drift

Challenges We Faced

1. The Wrong Project Problem

The single hardest technical challenge. GitLab Duo Workflow runs agents in the agent's own project context, not the user's project. Every tool call — get_merge_request, create_work_item, create_merge_request_note — defaults to the wrong project and returns 404.

We solved this by adding project_id as a mandatory parameter on every tool call, with build_review_merge_request_context as the required first step. It took dozens of iterations — agents would "forget" the project_id, get 404, and give up. The final solution: explicit few-shot examples showing the exact tool call syntax with project_id included.

2. Agents That Reason But Don't Act

A subtle failure mode: agents would write a complete, beautiful security report in their reasoning — then never call create_work_item. They treated text generation as task completion. We fixed this with the directive: "YOUR JOB IS NOT DONE UNTIL YOU CALL create_work_item. Writing the report in your reasoning is NOT enough."

3. The 64 KiB Wall

GitLab AI Catalog has a 64 KiB limit on flow definitions. Our 8-agent flow with detailed prompts kept exceeding it. We went through multiple compaction rounds — replacing verbose templates with concise section descriptions, shortening repeated rules, removing redundant examples — while preserving all 17 prompt engineering techniques. The final flow is 34 KB.

4. Fix MRs Targeting Main

The auto-remediation agent kept creating fix MRs that targeted main instead of the feature branch being reviewed. This meant fixes would bypass the review process entirely. We added explicit branch rules with the exact tool call parameter syntax showing the correct source and target branches.

5. Empty Work Item Descriptions

Agents would call create_work_item but pass an empty description, making the work items useless. We solved this with the Compose REPORT pattern — agents must compose a REPORT variable first, then pass description=REPORT to the tool call, with the warning "description MUST NOT be empty."

6. Deterministic Blocking vs. AI Advisory

We made a deliberate architectural choice: AI handles creative, interpretive tasks (release notes, fix suggestions, green optimizations). The Final Gate — the thing that actually blocks a merge — is 100% rule-based. No hallucination can accidentally block or approve a deploy. The gate is predictable, auditable, and explainable.

What We Learned

Multi-agent coordination is an architecture problem first. The order agents run in, what context they pass downstream, and how they handle failures determines whether the system is coherent or just a pile of scripts. Router-based sequencing with structured context passing was the key.

AI + deterministic logic is more powerful than AI alone. Claude is remarkable at reading code and generating targeted fixes. But "block this deployment" is not a decision you want a language model to make unilaterally. The hybrid — AI for intelligence, rules for enforcement — is the right pattern for production systems.

Prompt engineering is software engineering. We applied 17 documented techniques from peer-reviewed sources and iterated through dozens of versions. The difference between an agent that produces a useful report and one that says "Cannot Assess" is often a single line in the prompt. Treating prompts as code — with version control, testing against real MRs, and systematic debugging — was essential.

The hardest part is the last mile. Getting results into the MR comment, formatted so a developer actually reads them, with the right data in the right place — that's where most tools fail. ShipGuard posts per-agent work items at each stage and a comprehensive final comment, so context is always where the developer is working.

Resilience is not optional. WebSocket connections drop. Tool calls return 404. Upstream data arrives empty. An agent that gives up on the first error is useless. The "NEVER GIVE UP" pattern — with fallback strategies for every failure mode — transformed ShipGuard from a demo into something that actually works under real conditions.

What's Next

ShipGuard is live and running on every MR in our repository. Three directions for growth:

- Custom rule authoring — Let teams define their own gate conditions, compliance checks, and risk factors without touching YAML

- Cross-MR intelligence — Track quality trends across MRs, detect recurring vulnerability patterns, alert on regression

- Pipeline optimization loop — Green Agent's CO2 suggestions could automatically open MRs to optimize CI configuration

The vision: every merge request, in every project, gets the same level of scrutiny that today only happens during incident reviews — before the incident happens.

Built With

- anthropic-claude

- bash

- css

- gitlab-ci/cd

- gitlab-duo-agent-platform

- gitlab-pages

- html

- javascript

- node.js

- semgrep

- yaml

Log in or sign up for Devpost to join the conversation.