Inspiration

Unsegregated waste disposal causes many problems for people and for the environment. Soil contamination is one of its major consequences. For example, unsegregated plastic enters the soil and releases harmful carcinogens, endangering life around it. It is therefore crucial that we sort waste before it is disposed of. This issue is seen not only in large-scale industries, but also at universities, schools, and homes. Students or employees in a hurry are unlikely to spend time classifying their trash before throwing it into the dustbin. As a result, most of the trash ends up unsorted in the general garbage section of the dustbin. To ease segregation at this first level, we designed Smart Trash.

What it does

Our product is a special type of dustbin that ‘looks’ at the garbage about to be thrown away and indicates which hole it should go in. Currently, our solution classifies the waste shown to the camera as plastic, paper, or metal. This is done with a machine learning model written in Python with TensorFlow and trained on Google Colaboratory.

Once the type of garbage the user wants to throw away is determined, it is further classified as either biodegradable or non-biodegradable. Based on this, the LED near the relevant hole in the dustbin lights up as a visual indicator. This happens quickly enough that the user does not have to actively think about it.

The result is better segregated waste, without any extra user input.
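The second classification step can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual script: the grouping of paper as biodegradable and plastic and metal as non-biodegradable is an assumption, and the function name `led_for` is hypothetical. The white and red LED colors follow the description of the physical model later in this write-up.

```python
# Assumed grouping of the three supported materials (not stated in the write-up).
BIODEGRADABLE = {"paper"}
NON_BIODEGRADABLE = {"plastic", "metal"}


def led_for(material: str) -> str:
    """Return the color of the LED that should light up for a material.

    "white" marks the biodegradable hole, "red" the non-biodegradable hole.
    """
    name = material.lower()
    if name in BIODEGRADABLE:
        return "white"
    if name in NON_BIODEGRADABLE:
        return "red"
    # Anything else is outside the three classes the system handles.
    raise ValueError(f"unsupported material: {material!r}")
```

On the Raspberry Pi, the returned color would decide which LED to switch on, for example through gpiozero. The GPIO pin numbers are not given here, so that part is left out of the sketch.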

How we built it

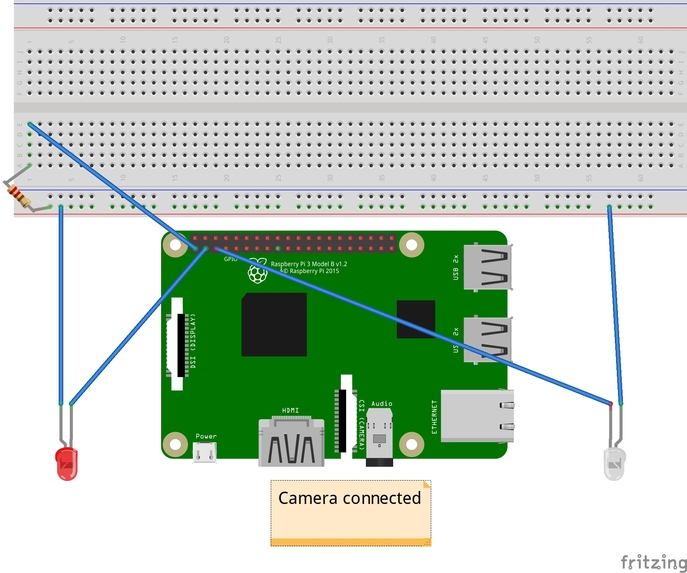

This is the basic circuit for our project.

Physical Parts:

- Raspberry Pi 3B+

- Raspberry Pi Camera

- 2 LEDs

The program runs on a Raspberry Pi so that it can easily be retrofitted into dustbins that are already installed.

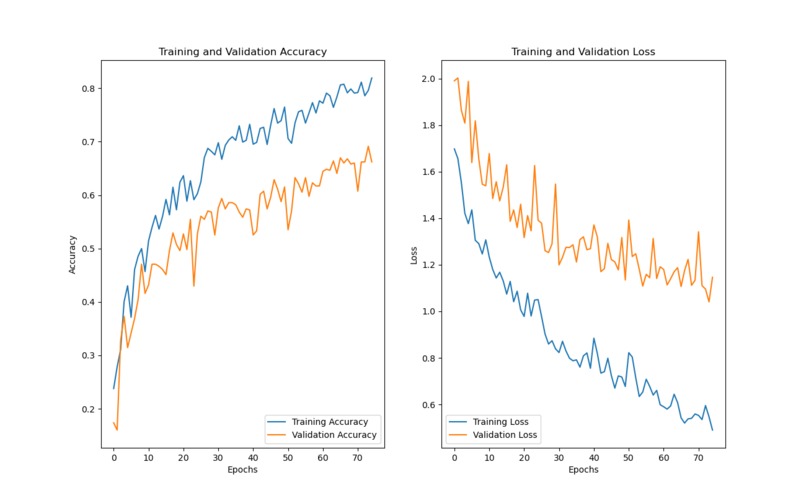

The first step was to acquire a dataset containing different types of garbage. We chose the well-known garbage classification dataset on Kaggle. Next, we wrote a Python script to create a sequential machine learning model consisting of 4 Conv2D and MaxPooling2D layer sets, 1 Flatten layer, and 2 Dense layers. This model was then trained on the dataset, which we manually split into training and validation subsets. We ran this script on Google Colaboratory, as none of our computers were powerful enough to complete the task in a reasonable time frame. The trained model was then exported to a .h5 file and downloaded to the Raspberry Pi, where we began writing the prediction script. Here are the results of our model:

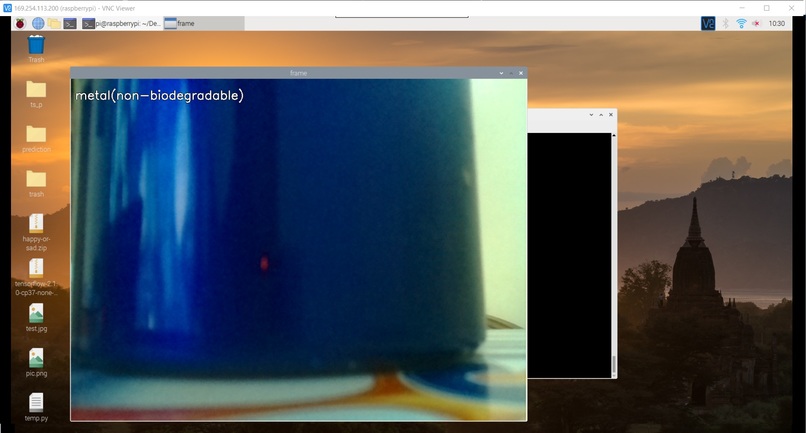

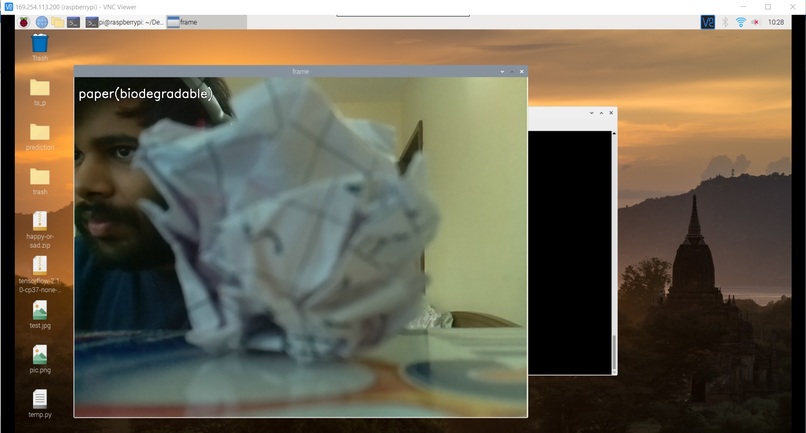

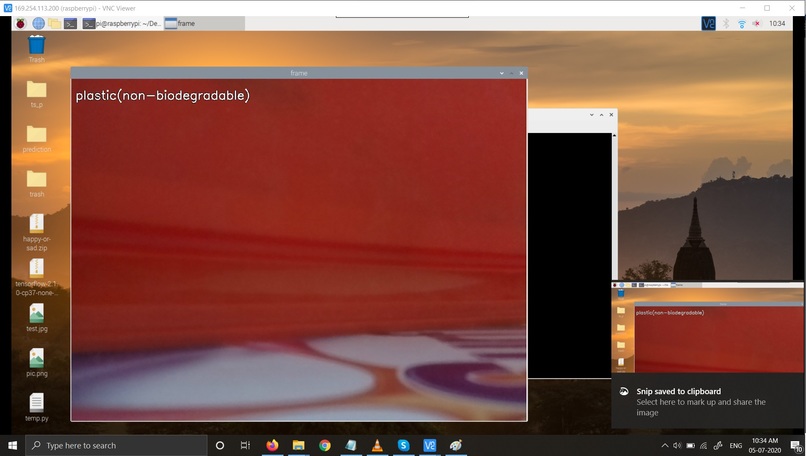
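To give a feel for what the four Conv2D and MaxPooling2D layer sets do to an image before the Flatten layer, here is a small shape calculation in plain Python. It is only a sketch under stated assumptions: the 150x150 input size, the 3x3 kernels, the 2x2 pooling windows, and the filter counts are all hypothetical, since the write-up does not give the real values. It assumes the Keras defaults of unpadded ("valid") convolutions with stride 1.

```python
def conv_pool_shapes(height, width, filters, kernel=3, pool=2):
    """Trace the feature-map shape through successive Conv2D + MaxPooling2D sets.

    Each convolution is unpadded with stride 1, so it trims (kernel - 1)
    pixels from each dimension; each pooling step divides the dimension by
    the pool size, rounding down.
    """
    shapes = []
    for channels in filters:
        height, width = height - kernel + 1, width - kernel + 1  # Conv2D
        height, width = height // pool, width // pool            # MaxPooling2D
        shapes.append((height, width, channels))
    return shapes


# Hypothetical filter counts for the four layer sets.
FILTERS = (32, 64, 128, 128)

shapes = conv_pool_shapes(150, 150, FILTERS)
last_height, last_width, last_channels = shapes[-1]

# Number of values the Flatten layer hands to the first Dense layer.
flat_size = last_height * last_width * last_channels
```

With these assumed values the feature map shrinks from 150x150 to 7x7, so the Flatten layer would pass 7 x 7 x 128 = 6272 values to the Dense layers.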

The prediction script uses the OpenCV module for Raspberry Pi to create a video stream from the Raspberry Pi camera. Each frame of this stream is passed through the machine learning model to predict the type of trash. The number of trash types was reduced from 6 to 3 due to camera limitations. Once the type of trash is determined, it is classified as biodegradable or non-biodegradable. The appropriate LED is then turned on to show which hole the trash should be thrown in. At the same time, the video feed displays which type of trash is sensed, along with an image of it (see video).

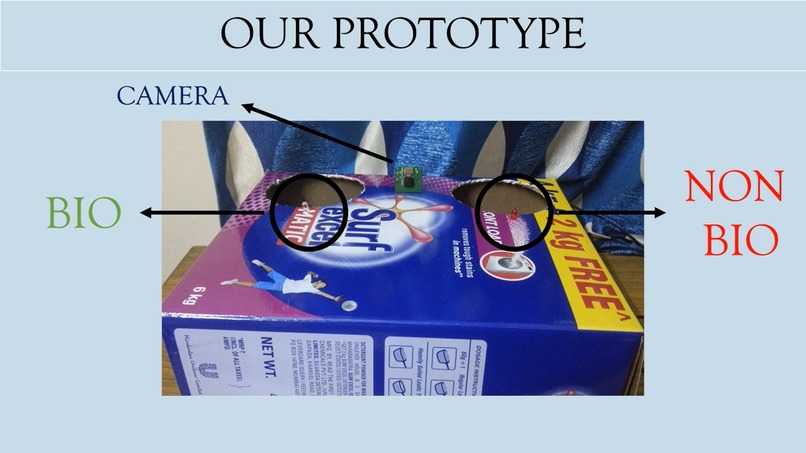
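One possible way to go from six model outputs to the three supported types is to ignore the unsupported classes when picking the prediction for a frame. The sketch below shows that idea in plain Python. It is an assumption rather than the project's confirmed approach: the class names and their order are taken to match the six folders of the Kaggle dataset, and `predict_supported` is a hypothetical helper name.

```python
# Assumed class order of the six-way model output.
ALL_CLASSES = ["cardboard", "glass", "metal", "paper", "plastic", "trash"]

# The three types the dustbin actually handles.
SUPPORTED = {"metal", "paper", "plastic"}


def predict_supported(probabilities):
    """Pick the most likely supported class from one frame's model output.

    `probabilities` holds one score per entry in ALL_CLASSES; scores for
    unsupported classes are simply skipped.
    """
    if len(probabilities) != len(ALL_CLASSES):
        raise ValueError("expected one probability per class")
    candidates = [
        (score, name)
        for name, score in zip(ALL_CLASSES, probabilities)
        if name in SUPPORTED
    ]
    best_score, best_name = max(candidates)
    return best_name, best_score


# Example: cardboard has the highest raw score but is not a supported class.
label, score = predict_supported([0.5, 0.1, 0.05, 0.2, 0.1, 0.05])
```

In the example, the function returns paper, the highest-scoring of the three supported classes. Retraining the model on only three classes would be an alternative that avoids the masking step altogether.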

The physical dustbin model consists of a cardboard box with two holes, each paired with an LED. The hole near the white LED is for biodegradable waste, and the hole near the red LED is for non-biodegradable waste. Each LED lights up when the relevant type of waste is sensed. Here is the physical model that we built:

Challenges we ran into

- None of us had hands-on experience implementing machine learning in this manner, nor with OpenCV and video streams. Every technology that we used here was learned during the hackathon.

- Another challenge was that our camera was not sensing any materials other than paper, plastic, and metal. This meant that we had to remove support for sensing glass and cardboard, as it was interfering with the core features of our system.

- Our personal systems were not powerful enough and took too long to train the model, so we offloaded this work to Google’s computers using Colaboratory.

Accomplishments that we're proud of

- Our first time implementing and using AI and ML in a project.

- Model trained to ~80% accuracy!

- Predicting objects in real-time video as compared to detecting features in static images.

What we learned

- Coding simultaneously online

- Machine Learning training and prediction

- Different types of machine learning layers and their usage

- Implementation of AI and ML in real life projects

- Working with multiple Python libraries to improve the user experience

- OpenCV

- Improved video editing skills

- Collaboration and teamwork

What's next for SmartTrash

- In the future we plan to control the lids of the dustbin automatically, but due to hardware constraints, we opted for LEDs for now.

- We also plan to train the AI model further to increase its accuracy and to introduce new segregating factors into the classifier.

- As the model becomes more accurate, we will try to adapt to a more realistic version of the dustbin and test it thoroughly in real life scenarios.

Domain Names Registered

- Kushagra - letsnotgo.online

- Charu - 4dimension.space

- Ajeya - allyour.space

Built With

- google-colaboratory

- gpiozero

- keras

- numpy

- opencv

- raspberry-pi

- raspberry-pi-camera

- raspbian

- tensorflow
