💡 Inspiration

We noticed a critical disconnect in the renewable energy market: while many homeowners want to go green, the transition feels risky and abstract. They often ask, "Will panels ruin the look of my house?" or "Is my roof angle actually good for generation?"

We realized that a Mixed Reality tool is the perfect medium to bridge this gap. We didn't just want to build a calculator; we wanted to build a simulator. SolarScape XR was inspired by the idea that "seeing is believing." By bringing abstract energy data into the user's physical reality, we can remove the guesswork from solar planning and empower users to make confident, eco-friendly decisions.

🔋 What it does

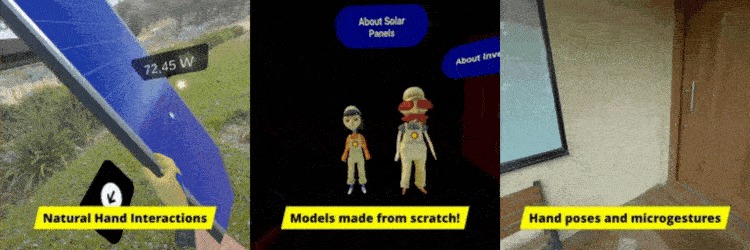

SolarScape XR is an immersive, controller-free mixed reality application that lets users visualize and analyze solar potential in their real-world environment.

- Visual Integration: Users can place 1:1 scale virtual solar panels and inverters onto their physical roofs or backyards.

- Real-Time Data: The app fetches the current sun position based on the user's geolocation and timezone.

- Performance Estimation: It calculates potential power output instantly, updating numbers as users move or rotate the solar panels.

- AI-Powered Appliance Detection: Users can snap photos with the Meta Quest 3. The photos are analyzed by NVIDIA's Grounding DINO model (thanks to Lucas Martinic) to automatically identify home electronics and estimate their wattage.

- Energy Balance Review: A dedicated dashboard compares the total consumption of the user's devices (added via AI or manually) against their solar production, instantly showing whether they would be drawing from or contributing to the grid.

- Architectural Integration: Users can toggle between different hardware styles, such as black monocrystalline or blue polycrystalline panels, and switch between roof or ground mounts to find the configuration that best complements their home’s design.
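The Energy Balance Review comparison can be illustrated with a minimal sketch. It is written in Python for brevity (the app itself is Unity C#), and the `Appliance` type, the `energy_balance` function, and the wattage figures are hypothetical names and values chosen for illustration, not taken from the project.

```python
from dataclasses import dataclass


@dataclass
class Appliance:
    name: str
    watts: float  # estimated draw while running (hypothetical values below)


def energy_balance(appliances, solar_output_w):
    """Return (net_watts, status); a positive net means surplus for the grid."""
    consumption_w = sum(a.watts for a in appliances)
    net_w = solar_output_w - consumption_w
    if net_w > 0:
        status = "contributing to grid"
    elif net_w < 0:
        status = "drawing from grid"
    else:
        status = "balanced"
    return net_w, status


# Example: two detected devices against 400 W of simulated production.
devices = [Appliance("fridge", 150.0), Appliance("tv", 100.0)]
net_w, status = energy_balance(devices, solar_output_w=400.0)
print(f"net = {net_w:.0f} W ({status})")
```

The sketch compares instantaneous power only. A dashboard that reports energy over a day would also need usage hours per device, which this example leaves out.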

⚙️ How we built it

We developed SolarScape XR using Unity (C#) and the Meta XR SDK, focusing on a controller-free, immersive user experience.

- Astronomical Sun Solver & Wi-Fi Geolocation: Since the Meta Quest 3 lacks native GPS, we implemented a workaround using Android Location Services to obtain approximate coordinates via Wi-Fi-based positioning. We feed this location into our custom C# astronomical algorithm (which calculates the Julian Date and the sun's ecliptic longitude) to compute the sun angle for that spot on Earth. The solver itself runs entirely offline.

- Controller-Free Interaction: We committed to a "hands-first" design using the Meta XR SDK. By leveraging Hand Tracking and custom gesture recognition, users can grab, rotate, and snap virtual solar panels without ever picking up a controller.

- Voice-Powered Guidance: To make the data accessible, we integrated the Meta Voice SDK to power two interactive 3D virtual assistants. These avatars speak directly to the user, explaining energy metrics and guiding them through the simulation using Text-to-Speech.

- AI-Powered Energy Audit: We integrated NVIDIA’s Grounding DINO model for object detection. Using the new Passthrough Camera Access, users can take pictures of their environment. Each image is analyzed on the server to detect home appliances and estimate their wattage, building a personalized consumption profile.

- The Physics Engine: To generate instant energy estimates, we implemented the photovoltaic power formula directly in our update loop:

$$P = G \times A \times \eta$$

Where:

- G: Solar irradiance (W/m²), derived from our custom sun altitude calculation.

- A: Panel area (m²), calculated from the 1:1-scale 3D model of the solar panel.

- η: Panel efficiency, configurable to simulate different solar panel specs (monocrystalline or polycrystalline).
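To make the pipeline from sun position to power concrete, here is a rough sketch in Python (the project's solver is C#, and this is not its code). It uses the widely published low-precision solar coordinate formulas (Julian Date, mean longitude, mean anomaly, ecliptic longitude) and then applies P = G × A × η. The mapping from altitude to irradiance, `G = 1000 · sin(altitude)`, is an assumption made for this example: the writeup does not say how G is derived, and this simple form ignores atmosphere and panel tilt. All function names are hypothetical.

```python
import math
from datetime import datetime, timezone


def sun_altitude_azimuth(when, lat_deg, lon_deg):
    """Approximate solar altitude and azimuth in degrees.

    `when` must be timezone-aware. Azimuth is clockwise from true north;
    longitude is positive east.
    """
    jd = when.timestamp() / 86400.0 + 2440587.5      # Julian Date from Unix time
    n = jd - 2451545.0                               # days since J2000.0
    mean_lon = math.radians((280.460 + 0.9856474 * n) % 360.0)
    mean_anom = math.radians((357.528 + 0.9856003 * n) % 360.0)
    ecl_lon = mean_lon + math.radians(
        1.915 * math.sin(mean_anom) + 0.020 * math.sin(2 * mean_anom))
    obliquity = math.radians(23.439 - 0.0000004 * n)

    # Ecliptic -> equatorial coordinates.
    ra = math.atan2(math.cos(obliquity) * math.sin(ecl_lon), math.cos(ecl_lon))
    dec = math.asin(math.sin(obliquity) * math.sin(ecl_lon))

    # Greenwich mean sidereal time -> local hour angle.
    gmst_hours = (18.697374558 + 24.06570982441908 * n) % 24.0
    hour_angle = math.radians(gmst_hours * 15.0 + lon_deg) - ra

    # Sun direction in local east/north/up components.
    lat = math.radians(lat_deg)
    east = -math.cos(dec) * math.sin(hour_angle)
    north = (math.sin(dec) * math.cos(lat)
             - math.cos(dec) * math.cos(hour_angle) * math.sin(lat))
    up = (math.sin(dec) * math.sin(lat)
          + math.cos(dec) * math.cos(hour_angle) * math.cos(lat))

    altitude = math.degrees(math.asin(max(-1.0, min(1.0, up))))
    azimuth = math.degrees(math.atan2(east, north)) % 360.0
    return altitude, azimuth


def irradiance_from_altitude(altitude_deg, peak_w_m2=1000.0):
    """ASSUMED model: irradiance scales with sin(altitude), zero below horizon."""
    return peak_w_m2 * max(0.0, math.sin(math.radians(altitude_deg)))


def pv_power(irradiance_w_m2, area_m2, efficiency):
    """P = G * A * eta, in watts."""
    return irradiance_w_m2 * area_m2 * efficiency


when = datetime(2024, 3, 20, 12, 0, tzinfo=timezone.utc)
altitude, azimuth = sun_altitude_azimuth(when, lat_deg=51.5, lon_deg=0.0)
power_w = pv_power(irradiance_from_altitude(altitude), area_m2=1.7, efficiency=0.20)
print(f"altitude={altitude:.1f} deg, azimuth={azimuth:.1f} deg, power={power_w:.0f} W")
```

The 1.7 m² area and 20% efficiency are placeholder values. Accounting for panel orientation would mean replacing `sin(altitude)` with the cosine of the angle between the sun direction and the panel normal.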

🚧 Challenges we ran into

- Indoor Tech vs. Outdoor Context: We initially planned to use the Meta Mixed Reality Utility Kit (MRUK) to intelligently spawn our 3D AI assistants so they wouldn't collide with physical objects. However, we hit a wall: MRUK relies on room scene data, but our app requires the user to go outside for solar panel placement. The headset could not generate a scene mesh in an open driveway.

- The Solution: We pivoted to a "camera-relative" UI, shrinking the assistants into miniature floating companions that spawn directly in the user's field of view, ensuring they remain accessible regardless of the environment.

- The "Distant Depth" Problem: We originally wanted panels and inverters to automatically snap to the surfaces. We realized that the Quest 3's depth sensors and plane detection are optimized for room-scale interactions, not for detecting a roof 10 meters away.

- The Solution: Instead of relying on jittery automatic depth detection, we built a robust free-movement system. We trusted the user's perception, giving them full 6-DOF control to manually align panels and "eye-ball" the placement, which ultimately felt more reliable and flexible.

- The "True North" Dilemma: Accurate solar simulations depend entirely on knowing absolute cardinal directions. Since the Quest 3 lacks a built-in magnetometer (compass), the device tracks relative rotation but doesn't know where "North" actually is.

- The Solution: We couldn't automate this, so we implemented a manual calibration sequence. Upon launching the app, the user is prompted to physically face North (verified by their phone or local knowledge) and "lock" the orientation. This sets the initial offset required for our astronomical solver to function correctly.
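The manual North calibration boils down to storing one yaw offset and applying it to every compass bearing. The Python sketch below illustrates this under stated assumptions: a Unity-style left-handed, Y-up coordinate system in which a yaw of 0° points along +Z and positive yaw turns clockwise when viewed from above. The function names and the `north_yaw_deg` parameter (the headset yaw recorded when the user locks North) are hypothetical.

```python
import math


def world_yaw_for_azimuth(azimuth_deg, north_yaw_deg):
    """Map a compass azimuth (clockwise from true north) to a tracking-space yaw.

    north_yaw_deg is the headset yaw captured at the moment the user faced
    north and locked the orientation.
    """
    return (north_yaw_deg + azimuth_deg) % 360.0


def sun_direction(altitude_deg, azimuth_deg, north_yaw_deg):
    """Unit vector (x, y, z) pointing toward the sun in tracking space."""
    yaw = math.radians(world_yaw_for_azimuth(azimuth_deg, north_yaw_deg))
    alt = math.radians(altitude_deg)
    return (
        math.cos(alt) * math.sin(yaw),  # x: to the right of yaw = 0
        math.sin(alt),                  # y: up
        math.cos(alt) * math.cos(yaw),  # z: forward at yaw = 0
    )


# Example: the user locked North while the headset reported a yaw of 30 degrees;
# the sun is due south (azimuth 180) at 45 degrees above the horizon.
print(world_yaw_for_azimuth(180.0, north_yaw_deg=30.0))
print(sun_direction(45.0, 180.0, north_yaw_deg=30.0))
```

Because the whole simulation hangs on this single offset, any error in the user's North alignment shifts every computed sun direction by the same angle.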

🏆 Accomplishments that we're proud of

- Math Over Cloud: We successfully replaced latency-prone external APIs with a custom C# astronomical solver. This allows the app to calculate complex sun positioning locally in milliseconds, so the sun simulation keeps working offline.

- Mobile Performance Optimization: We managed to render large arrays of 20-30 virtual solar panels without compromising the Quest 3's frame rate. By prioritizing math and physics over expensive shadow rendering, we ensured a buttery-smooth experience even when the scene gets crowded.

- Bringing Assistants to Life: We integrated the Meta Voice SDK into our custom 3D avatars. It was incredibly satisfying to hear them explain our data back to us, turning a silent tool into an interactive guide.

- Intuitive Spatial UI: We created a user interface that feels native to Mixed Reality, turning complex kilowatt-hour data into simple, floating gauges that non-technical users can understand at a glance.

🧠 What we learned

We learned that XR is a powerful tool for persuasion and education, not just entertainment. Abstract data (like kilowatts) becomes meaningful when attached to a physical object in your own surroundings. We also gained significant experience in handling geospatial data within a 3D coordinate system.

🚀 What's next for SolarScape XR

- Real-Time Budgeting & ROI: Expanding the financial engine to provide a complete "Quote Overview." This will calculate the total cost of all placed panels and inverters and estimate the specific "Time to Payback" based on local grid buy-back rates (net metering).

- Auto-North via Geospatial Alignment: Since the headset lacks a magnetometer, the current setup requires manual North alignment. We plan to integrate Geospatial Anchors (VPS) to recognize the user's physical surroundings and automatically snap the virtual coordinate system to True North.

- Shadow Analysis: We plan to use scene understanding to automatically detect when a real-world tree casts a shadow on the virtual panels and adjust the energy output accordingly.

- Shared Spatial Multiplayer: Implementing Shared Spatial Anchors to allow multiple users to join the same session. This would let couples or neighbors stand in the same yard, see the same virtual panels, and collaborate on the layout in real-time.
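As a rough illustration of the planned "Time to Payback" estimate, a simple payback period divides the system cost by the yearly value of the energy produced. The Python sketch below is only an assumption about how such a figure could be computed. The function name, the flat per-kWh rate, and the example numbers are hypothetical, and real net-metering tariffs are usually more involved.

```python
def payback_years(system_cost, annual_kwh, rate_per_kwh):
    """Simple payback: years until cumulative energy value covers the cost."""
    annual_value = annual_kwh * rate_per_kwh
    if annual_value <= 0:
        return float("inf")  # the system never pays for itself
    return system_cost / annual_value


# Hypothetical quote: 6000 currency units, 4000 kWh per year, 0.15 per kWh.
years = payback_years(system_cost=6000.0, annual_kwh=4000.0, rate_per_kwh=0.15)
print(f"estimated payback: {years:.1f} years")
```

This ignores panel degradation, maintenance, financing, and changes in energy prices, all of which a full quote overview would need to consider.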
