Inspiration

Honestly, the idea for Spark came from a really simple place. You see all this amazing content being created in Adobe Express every day, from social media posts to flyers for small businesses. But it got me thinking: is all this beautiful design accessible to everyone? What about people with visual impairments?

I realized that most creators and small business owners are crazy busy and probably aren't experts in accessibility standards like the Web Content Accessibility Guidelines (WCAG). It's not that they don't care; it's that following those guidelines can be complicated and time-consuming. I wanted to build something that would be a friendly sidekick for any creator: an "AI spark" that makes creating inclusive content the easiest and most natural part of their workflow.

What it does

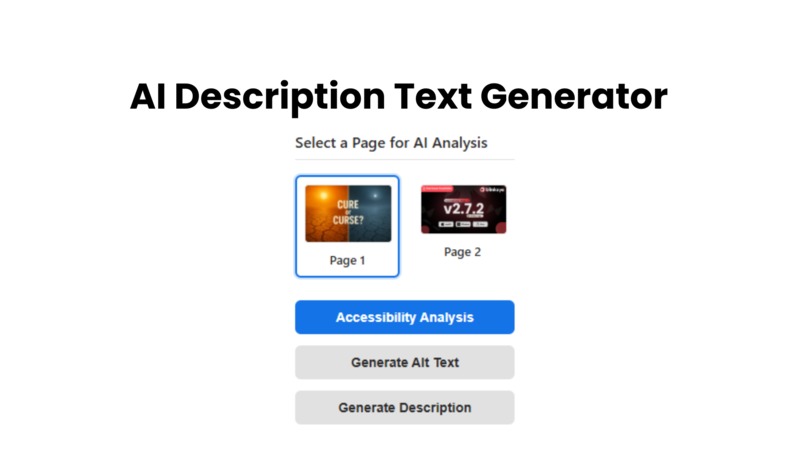

Spark is an Adobe Express add-on that acts as your personal AI accessibility assistant. Right now, it has two main superpowers:

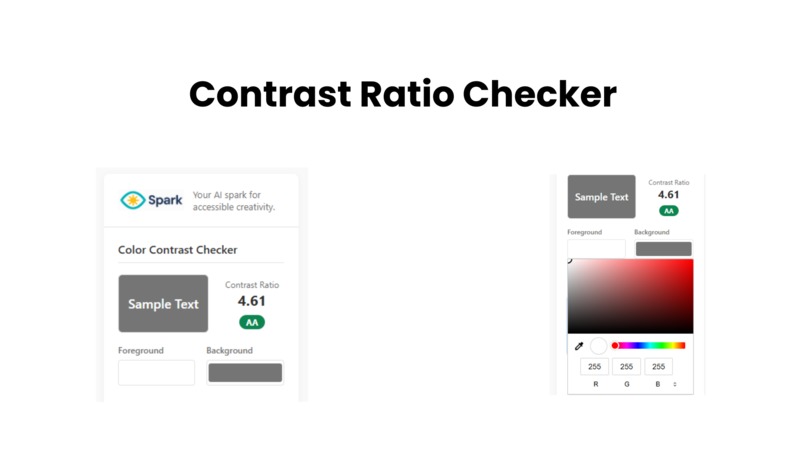

The Color Contrast Checker: This gives you instant, real-time feedback on your color choices. You can use the color pickers to test text and background combinations, and it immediately tells you whether the pair meets the WCAG contrast requirements at level AA or AAA, so you know your text is readable for as many people as possible.
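The pass/fail logic behind a checker like this comes from WCAG 2.x: compute each color's relative luminance, take the ratio (L1 + 0.05) / (L2 + 0.05) with L1 the lighter color, and compare it to 4.5:1 (AA) or 7:1 (AAA) for normal-size text. Here is a minimal sketch, assuming the color pickers hand back `#rrggbb` hex strings; the function names are illustrative, not Spark's actual code.

```javascript
// Minimal sketch of the WCAG 2.x contrast-ratio check.
// Assumes colors arrive as "#rrggbb" hex strings from the color pickers.

// Convert one 0-255 sRGB channel to its linear-light value (WCAG 2.x formula).
function channelToLinear(c8) {
  const c = c8 / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance: 0.2126 R + 0.7152 G + 0.0722 B on linearized channels.
function relativeLuminance(hex) {
  const n = parseInt(hex.replace("#", ""), 16);
  const r = (n >> 16) & 0xff;
  const g = (n >> 8) & 0xff;
  const b = n & 0xff;
  return (
    0.2126 * channelToLinear(r) +
    0.7152 * channelToLinear(g) +
    0.0722 * channelToLinear(b)
  );
}

// Contrast ratio (L1 + 0.05) / (L2 + 0.05), L1 being the lighter color; ranges 1 to 21.
function contrastRatio(hexA, hexB) {
  const la = relativeLuminance(hexA);
  const lb = relativeLuminance(hexB);
  const lighter = Math.max(la, lb);
  const darker = Math.min(la, lb);
  return (lighter + 0.05) / (darker + 0.05);
}

// WCAG thresholds: AA needs 4.5:1 for normal text (3:1 for large text),
// AAA needs 7:1 for normal text (4.5:1 for large text).
function wcagLevels(ratio, isLargeText = false) {
  return {
    AA: ratio >= (isLargeText ? 3 : 4.5),
    AAA: ratio >= (isLargeText ? 4.5 : 7),
  };
}
```

For example, `#767676` text on a white background comes out just above 4.5:1, so it passes AA but not AAA, while `#777777` on white falls just short of AA.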

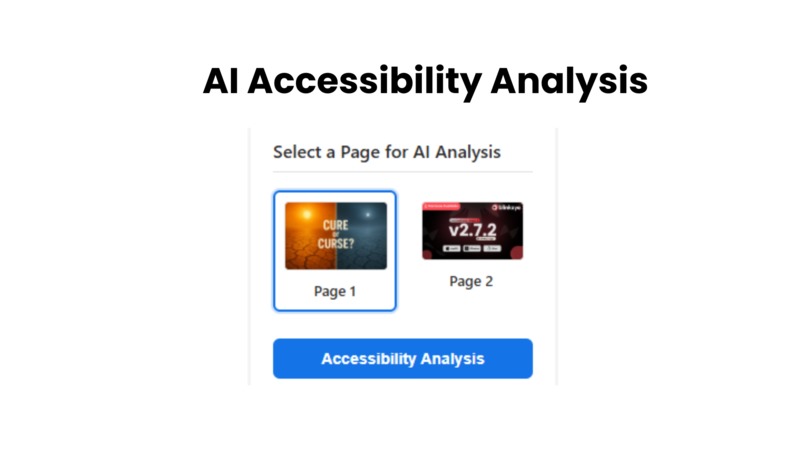

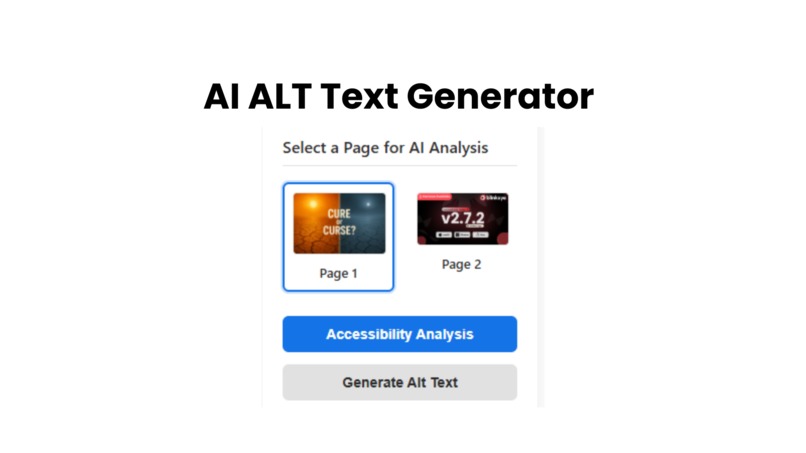

The AI Alt Text Generator: This is the really cool part. You just select a page in your design, hit "Generate," and our AI analyzes the main image and writes descriptive alt text for you. This is a game-changer for making visual content understandable to people using screen readers.

It’s all packed into a simple interface that feels right at home inside Adobe Express, so you don't have to break your creative flow.

How we built it

Spark is built with the classic web trio: HTML, CSS, and vanilla JavaScript. I wanted to keep it lightweight and fast. The whole user interface runs inside the add-on's iframe, and I used the Adobe Express Add-on SDK to understand how the add-on would eventually interact with a user's document.

The "brains" of the operation is the Google Gemini API (specifically, the gemini-1.5-flash model). When a user wants to generate alt text, the following happens:

- The JavaScript fetches the image from the selected page thumbnail.

- It converts that image into a Base64 string.

- It sends this string, along with a carefully crafted prompt, to the Gemini API, asking for the output in a structured JSON format.

- The add-on then parses the JSON response and displays the suggested alt text in the UI.
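The first two steps can be sketched roughly like this. It is a minimal illustration, assuming a browser context with `fetch`, `Blob.arrayBuffer()`, and `btoa`; `fetchImageAsBase64`, `arrayBufferToBase64`, and the `thumbnailUrl` parameter are hypothetical names, since how Spark actually obtains the thumbnail URL isn't described here.

```javascript
// Minimal sketch of steps 1 and 2: fetch the thumbnail bytes and Base64-encode them.
// Assumes a browser-like runtime with fetch, Blob, and btoa (also global in Node 18+).

// Encode an ArrayBuffer as Base64. btoa expects a "binary string" (one char per byte),
// so the string is built in chunks to avoid argument-count limits on large images.
function arrayBufferToBase64(buffer) {
  const bytes = new Uint8Array(buffer);
  const chunkSize = 0x8000;
  let binary = "";
  for (let i = 0; i < bytes.length; i += chunkSize) {
    binary += String.fromCharCode(...bytes.subarray(i, i + chunkSize));
  }
  return btoa(binary);
}

// Fetch an image URL and return its MIME type plus Base64 payload.
// thumbnailUrl is a hypothetical placeholder for wherever the page thumbnail lives.
async function fetchImageAsBase64(thumbnailUrl) {
  const response = await fetch(thumbnailUrl);
  if (!response.ok) {
    throw new Error(`Image fetch failed with status ${response.status}`);
  }
  const blob = await response.blob();
  const buffer = await blob.arrayBuffer();
  return {
    // Fall back to PNG if the server didn't send a Content-Type (an assumption).
    mimeType: blob.type || "image/png",
    data: arrayBufferToBase64(buffer),
  };
}
```

If the thumbnail is served from another origin without CORS headers, the `fetch` call is where the browser will block the request.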

Using a JSON schema in the API call was key to getting reliable, easy-to-handle data back from the AI every time.
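Steps 3 and 4, with a JSON schema in the request, might look something like the sketch below. It targets the Gemini REST `generateContent` endpoint with `responseMimeType` set to `application/json` and a `responseSchema`; the prompt wording, the single `altText` field, and the function names are assumptions for illustration, not Spark's real prompt or schema. The `image` argument is expected to be `{ mimeType, data }` with `data` already Base64-encoded.

```javascript
// Minimal sketch of steps 3 and 4 against the Gemini REST generateContent endpoint.
// The prompt text and the { altText } schema are illustrative assumptions.

const GEMINI_URL =
  "https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-flash:generateContent";

// Build the request body: one text part (the prompt) plus one inline image part,
// and ask for JSON output constrained by a response schema.
function buildAltTextRequest(image) {
  return {
    contents: [
      {
        parts: [
          { text: "Write concise, descriptive alt text for the main image on this page." },
          { inlineData: { mimeType: image.mimeType, data: image.data } },
        ],
      },
    ],
    generationConfig: {
      responseMimeType: "application/json",
      responseSchema: {
        type: "OBJECT",
        properties: { altText: { type: "STRING" } },
        required: ["altText"],
      },
    },
  };
}

// Pull the JSON text out of the first candidate and parse it.
function extractAltText(apiResponse) {
  const text = apiResponse?.candidates?.[0]?.content?.parts?.[0]?.text;
  if (typeof text !== "string") {
    throw new Error("No text part in Gemini response");
  }
  return JSON.parse(text).altText;
}

// Send the request and return the alt text string.
async function generateAltText(image, apiKey) {
  const res = await fetch(`${GEMINI_URL}?key=${encodeURIComponent(apiKey)}`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildAltTextRequest(image)),
  });
  if (!res.ok) throw new Error(`Gemini request failed: ${res.status}`);
  return extractAltText(await res.json());
}
```

Because the schema marks `altText` as required, the UI code only has to read one known field instead of scraping free-form text. Note that an API key passed from client-side code is visible to anyone who inspects the add-on, so a small backend relay is the safer choice beyond a prototype.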

Challenges we ran into

Oh, there were definitely a few head-scratchers! The biggest challenge by far was dealing with images. To send an image inline to the Gemini API, you have to Base64-encode it. In a local development environment, fetching an image from a different origin and reading its raw bytes immediately runs into the browser's CORS (Cross-Origin Resource Sharing) security policy. I had to use a CORS proxy service during development just to get the image data. It was a great reminder of how tricky browser security can be!
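Routing requests through a dev-only CORS proxy usually comes down to rewriting the image URL before fetching it. The sketch below assumes a hypothetical local proxy at `http://localhost:8080/?url=` that takes the target URL as a query parameter; real proxy services each have their own URL format, and `proxiedImageUrl` is an illustrative name.

```javascript
// Dev-only sketch: route cross-origin image requests through a CORS proxy.
// DEV_PROXY_BASE is a hypothetical placeholder; substitute the proxy you actually run.
const DEV_PROXY_BASE = "http://localhost:8080/?url=";

// Resolve the image URL against the page origin, then wrap it only when it is
// cross-origin and proxying is enabled; same-origin URLs need no proxy.
function proxiedImageUrl(imageUrl, pageOrigin, useProxy) {
  const target = new URL(imageUrl, pageOrigin);
  if (!useProxy || target.origin === pageOrigin) {
    return target.href;
  }
  return DEV_PROXY_BASE + encodeURIComponent(target.href);
}
```

This works because CORS is enforced by the browser: the proxy fetches the image server-side and typically returns it with permissive `Access-Control-Allow-Origin` headers. Gating it behind `useProxy` makes it easy to keep out of production builds.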

Another fun challenge was "prompt engineering." Getting the AI to give you good alt text is one thing, but getting it to consistently return clean, predictable JSON is another. It took a lot of tweaking the instructions in my prompt to make sure it always gave me the data in a format the app could use without breaking.
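Even with careful prompting, it helps to parse the model's output defensively so one malformed reply doesn't break the UI. A minimal sketch, assuming the expected shape is `{ altText: string }`; `parseAltTextJson` is a hypothetical helper, and stripping a Markdown code fence is a common safeguard rather than something described above.

```javascript
// Defensive parsing sketch for model output that is supposed to be JSON.
// Assumes the expected shape is { altText: string }; adjust to your own schema.
function parseAltTextJson(rawText) {
  // Models sometimes wrap JSON in a Markdown code fence; strip it if present.
  // \x60 matches a backtick character.
  const trimmed = rawText.trim();
  const fenced = trimmed.match(/^\x60{3}(?:json)?\s*([\s\S]*?)\s*\x60{3}$/i);
  const jsonText = fenced ? fenced[1] : trimmed;

  let parsed;
  try {
    parsed = JSON.parse(jsonText);
  } catch (err) {
    throw new Error(`Model output is not valid JSON: ${err.message}`);
  }

  // Validate the field the UI depends on before rendering it.
  if (!parsed || typeof parsed.altText !== "string" || parsed.altText.trim() === "") {
    throw new Error("Model output is missing a non-empty altText string");
  }
  return parsed.altText.trim();
}
```

Failing loudly with a clear error lets the UI show a "try again" message instead of rendering `undefined` as alt text.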

Accomplishments that we're proud of

I'm incredibly proud of the user interface. I spent a lot of time making sure it looks clean, modern, and feels like a natural part of Adobe Express. It's simple, intuitive, and doesn't overwhelm the user.

But the biggest accomplishment is successfully integrating the AI to solve a real problem. Seeing an AI generate genuinely useful, context-aware alt text for an image with just a single button click was a real "wow" moment. It proved that the core idea wasn't just a fantasy—it was totally possible and incredibly powerful.

What we learned

This project was a huge learning experience! First off, I got to dive deep into the Adobe Express Add-on SDK, which is a fantastic piece of tech. I learned how to structure an add-on and how the UI and the document sandbox are designed to communicate.

More importantly, I learned just how powerful modern AI APIs are for building assistive tools. The ability to send both an image and a complex instruction to an AI and get structured data back opens up a universe of possibilities. This project really solidified my understanding of practical AI integration.

What's next for Spark

Spark is just getting started! This hackathon was about proving the concept, but there's a clear roadmap ahead. Here's what's next:

- Full Page Audit: An "Analyze Page" button that gives you a total accessibility score, checking for color contrast, alt text, font readability, and more, all at once.

- Font Readability Checker: An AI-powered tool to analyze your chosen fonts and suggest more readable alternatives if needed.

- Deeper Integration: Moving from prototype thumbnails to interacting directly with the elements on the user's actual Adobe Express page. Imagine clicking a text box and Spark automatically telling you its contrast ratio!

The ultimate vision is for Spark to be an essential, proactive tool that empowers every Adobe Express user to make the world's content more beautiful and more inclusive.
