Inspiration

At the professional level, every tennis match is carefully analyzed: every rally, every shot, and every pattern is broken down so players and coaches can adjust before the next match. But for most college, club, and competitive players, that kind of detailed analysis simply isn’t available. Coaches don’t have dedicated analytics teams, and players often rely on memory or general feedback to improve. We saw this firsthand through a friend on a college team who kept repeating the same mistakes without clear data to understand why. TennisIQ was built to close that gap by giving both players and coaches access to clear, data-backed match insights that were once available only at the professional level.

What it does

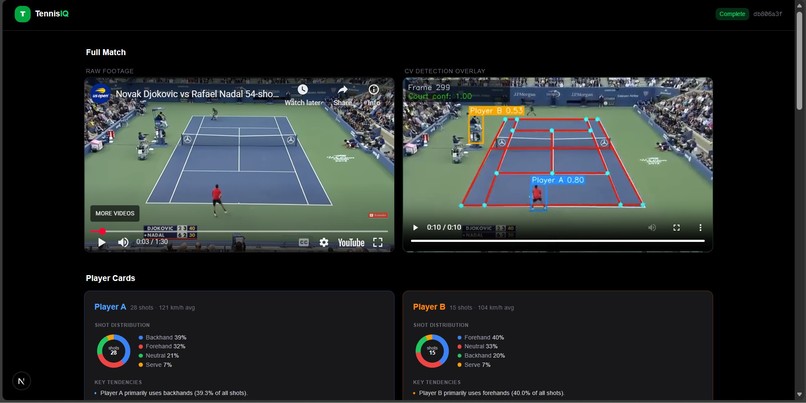

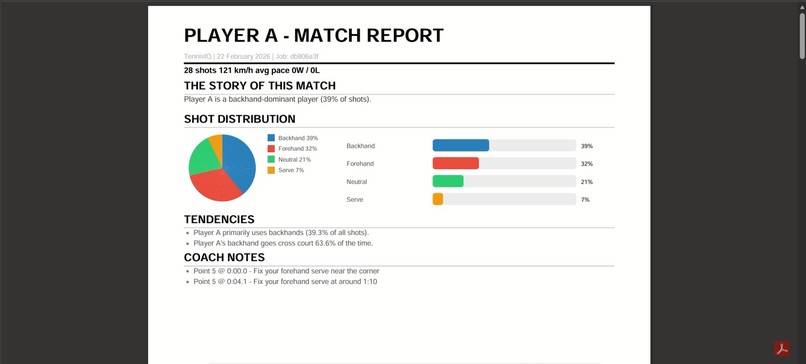

TennisIQ turns match footage into something a coach can actually use. A coach uploads a video or pastes a YouTube link, and the system automatically detects the court, tracks the ball and both players, and identifies bounces, hits, and whether each shot landed in or out. It organizes those moments into full points, creates per-point clips, and adds an overlay showing ball trajectory and player positions.

As the coach reviews the clips, they can add comments directly to specific moments, for example pointing out poor footwork, shot selection, or positioning. When the review is complete, the coach can download a PDF report that includes match metrics (like rally length, ball speed, and event counts) along with their written feedback on each clip. This creates one clean, shareable report that combines data and coaching insight in one place.

How we built it

We built the system end-to-end with a Next.js frontend and a FastAPI + SQLite backend, running GPU workloads on Modal, while the vision layer is powered by PyTorch, OpenCV, and Ultralytics. It starts with court understanding: we trained a ResNet50 CNN to perform keypoint regression from a 224×224 frame to 14 court keypoints, outputting 28 values (14×2) using our custom weights file keypoints_model.pth.
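As a rough illustration of what the 28 regressed values represent, here is a minimal sketch of decoding them into 14 (x, y) keypoints in the original frame. It assumes the outputs are ordered x0, y0, x1, y1, ... and expressed in pixels of the 224×224 input; neither detail is stated above, and `decode_keypoints` is a hypothetical helper, not part of the TennisIQ code.

```python
from typing import List, Sequence, Tuple

MODEL_INPUT_SIZE = 224  # side length of the square frame fed to the network
NUM_KEYPOINTS = 14      # court keypoints predicted per frame


def decode_keypoints(
    outputs: Sequence[float], frame_width: int, frame_height: int
) -> List[Tuple[float, float]]:
    """Turn 28 regressed values into 14 (x, y) points in original-frame pixels.

    Assumption: values are interleaved (x0, y0, x1, y1, ...) in 224x224 space.
    """
    if len(outputs) != NUM_KEYPOINTS * 2:
        raise ValueError(f"expected {NUM_KEYPOINTS * 2} values, got {len(outputs)}")
    scale_x = frame_width / MODEL_INPUT_SIZE
    scale_y = frame_height / MODEL_INPUT_SIZE
    return [
        (outputs[i] * scale_x, outputs[i + 1] * scale_y)
        for i in range(0, len(outputs), 2)
    ]
```

Rescaling back to the source resolution matters because the homography in the next step is fitted on these points, so any scaling mistake here propagates downstream.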

Once we detect the court, we compute a 3×3 homography using cv2.findHomography, testing multiple point configurations and selecting the one with the lowest reprojection error. The homography maps pixels into court space with p′ = Hp (in homogeneous coordinates), and we carry forward the last stable matrix when detections drop. For ball tracking, we trained a YOLOv5 model (yolo5_last.pt), cleaned the trajectory with outlier removal and interpolation, projected ball centers into court coordinates, and computed motion physics using v = Δs / Δt and a = Δv / Δt, filtering unreliable detections.
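The projection and motion formulas can be sketched in a few lines of plain Python. This is an illustrative sketch, not the project's code: it assumes a 3×3 matrix `H` has already been estimated (for example by cv2.findHomography), that court-space positions are sampled once per frame at a known `fps`, and the helper names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def project_point(H: Sequence[Sequence[float]], x: float, y: float) -> Point:
    """Apply p' = Hp in homogeneous coordinates, then divide by w."""
    xp = H[0][0] * x + H[0][1] * y + H[0][2]
    yp = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    return xp / w, yp / w


def speeds(points: Sequence[Point], fps: float) -> List[float]:
    """v = Δs / Δt between consecutive court-space positions."""
    dt = 1.0 / fps
    return [math.dist(a, b) / dt for a, b in zip(points, points[1:])]


def accelerations(v: Sequence[float], fps: float) -> List[float]:
    """a = Δv / Δt between consecutive speed samples."""
    dt = 1.0 / fps
    return [(b - a) / dt for a, b in zip(v, v[1:])]
```

Dividing by `w` is the step that is easy to forget: without it, the result is only correct for affine transforms, not for a perspective view of the court.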

Players are detected using YOLOv5 and tracked with ByteTrack, keeping only those whose foot position lies within the court polygon and splitting Player A/B by court side. We then detect events: bounces are identified through velocity reversal and deceleration with temporal NMS, hits through direction changes ≥ 50° with sufficient speed, and in/out decisions by testing bounce locations against the singles court boundary. Finally, we group events into structured points (serve to last bounce or fault) and generate per-point clips with overlays and coaching-style stats. For deployment, we split videos into ~10-second segments processed on a Modal A100 GPU, merge the results in the backend, and serve them to the dashboard.
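Two of these event rules are simple enough to sketch directly. The example below is a hedged illustration rather than the actual implementation: it assumes velocities and bounce locations are already in court coordinates measured in meters, with the origin at one corner of the singles court, and it treats the speed threshold as a caller-supplied parameter because no value is given above. The singles court dimensions (8.23 m × 23.77 m) are the standard ones; the function names are hypothetical.

```python
import math
from typing import Tuple

Vector = Tuple[float, float]

SINGLES_WIDTH_M = 8.23   # standard singles court width
COURT_LENGTH_M = 23.77   # standard court length, baseline to baseline
HIT_ANGLE_DEG = 50.0     # minimum direction change that counts as a hit


def turn_angle_deg(v_before: Vector, v_after: Vector) -> float:
    """Angle in degrees between two velocity vectors (0 if either is zero)."""
    n1, n2 = math.hypot(*v_before), math.hypot(*v_after)
    if n1 == 0.0 or n2 == 0.0:
        return 0.0
    cos = (v_before[0] * v_after[0] + v_before[1] * v_after[1]) / (n1 * n2)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp rounding


def is_hit(v_before: Vector, v_after: Vector, min_speed: float) -> bool:
    """Hit = direction change >= 50 degrees with enough outgoing speed."""
    fast_enough = math.hypot(*v_after) >= min_speed
    return fast_enough and turn_angle_deg(v_before, v_after) >= HIT_ANGLE_DEG


def is_in(x: float, y: float) -> bool:
    """Bounce is in if it lies inside or on the singles boundary."""
    return 0.0 <= x <= SINGLES_WIDTH_M and 0.0 <= y <= COURT_LENGTH_M
```

The inclusive comparisons in `is_in` reflect the rule that a ball touching the line is in; a real pipeline would likely add a tolerance to absorb homography error.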

Challenges we ran into

Data: There are very few public datasets for tennis court keypoints or ball tracking. The biggest challenge was simply finding a usable dataset. We eventually found one with around 9,000 annotated images, but discovering, validating, and preparing it for our pipeline took significant effort.

Pipeline Complexity: Our system has many connected parts: court detection, homography, ball tracking, player tracking, and event logic. If one part made a mistake, it affected everything else. Debugging meant tracing issues across the entire pipeline, not just fixing one model.

Compute & Integration: Even with Modal GPUs, processing full matches was heavy. We ran into long runtimes, memory issues, and segment merge problems. We had to tune batching, segment size, and error handling to keep the full pipeline stable from raw video to final dashboard.
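To make the segment-size knob concrete, here is a minimal sketch of computing segment boundaries for a video of known duration. It only illustrates the idea of fixed-length segments with a shorter final one; the function name and the 10-second default are assumptions for illustration, and the actual Modal dispatch and merge logic is not shown.

```python
import math
from typing import List, Tuple


def split_segments(duration_s: float, segment_s: float = 10.0) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds covering the whole video."""
    if duration_s <= 0 or segment_s <= 0:
        raise ValueError("duration and segment length must be positive")
    count = math.ceil(duration_s / segment_s)
    # Index-based boundaries avoid accumulating floating-point drift.
    return [
        (i * segment_s, min((i + 1) * segment_s, duration_s))
        for i in range(count)
    ]
```

Because consecutive segments share exact boundary times, per-segment results can be shifted by each segment's start time and concatenated when merging.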

Accomplishments that we're proud of

Got an end-to-end MVP working. Court detection, ball tracking, player IDs, events, and point segmentation all run in one pipeline even though we hit a lot of issues. Trained our own models for court keypoints and ball detection and got them running on Modal’s A100, despite bottlenecks and errors along the way. Built something a coach can actually use: upload a video or link and get an overlay, basic stats, and coaching-style insights. It’s rough around the edges, but it’s a real MVP we can improve on.

What we learned

How hard it is to get good tennis data and why clear labeling and a pseudolabel → review → train pipeline matter. How much each stage depends on the others (court → homography → ball speed → events) and why debugging means following the whole chain. How to design for cloud GPU: batching, segment length, and error handling so long matches run reliably.

What's next for TennisIQ

Richer coaching: shot recommendations, consistency metrics, and drill suggestions from the same pipeline. Support for more input types (live streams, multiple cameras) and better robustness to different angles and quality. Exploring real-time or near-real-time analysis so coaches can use it during practice, not only in review.
