Inspiration

We started brainstorming with the goal of solving a problem, whether big or small. Ideas ranged from organizing messy notes to making cooking more fun and interactive. We discussed each idea thoroughly and rated it on a few key criteria:

- Utility

- Impact

- "Wow" factor (for our presentation)

- Uniqueness

Our group unanimously agreed that, overall, The Right Direction scored best on these criteria. More importantly, we believed it would provide the greatest help to those in need.

What it does

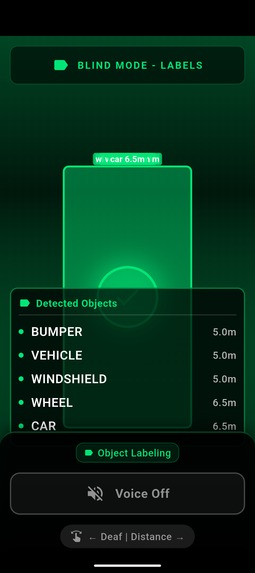

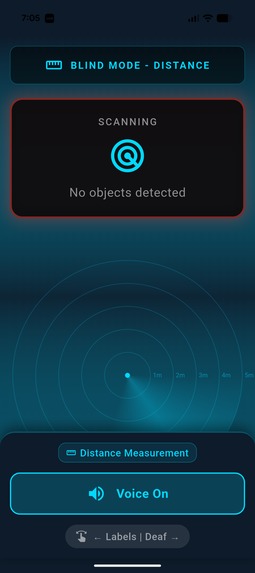

Our app has two modes. The first is intended to replace the cane that visually impaired people rely on to navigate: the app detects objects and calculates their distance from the phone, giving vibration and audio warnings when the user is too close to an obstacle. The second mode is purely for identifying objects with the phone camera and does not give any warnings.
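A minimal sketch of the warning logic described above, written in Python for brevity rather than the Dart used in the app. The `DetectedObject` type, the 1.5 m threshold, and both function names are assumptions made for illustration; the app's actual values and code are not shown here.

```python
from dataclasses import dataclass

# Hypothetical threshold: the writeup does not state the real value.
WARNING_DISTANCE_M = 1.5


@dataclass
class DetectedObject:
    label: str
    distance_m: float  # estimated distance from the phone, in metres


def objects_to_warn_about(objects, threshold_m=WARNING_DISTANCE_M):
    """Return the objects closer than the threshold, nearest first."""
    close = [obj for obj in objects if obj.distance_m < threshold_m]
    return sorted(close, key=lambda obj: obj.distance_m)


def warning_message(obj):
    """Build the text that an audio warning could speak aloud."""
    return f"Warning: {obj.label} about {obj.distance_m:.1f} metres ahead"
```

In a real app, each object returned by `objects_to_warn_about` would trigger the vibration and audio warning; sorting nearest-first means the most urgent obstacle is announced first.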

How we built it

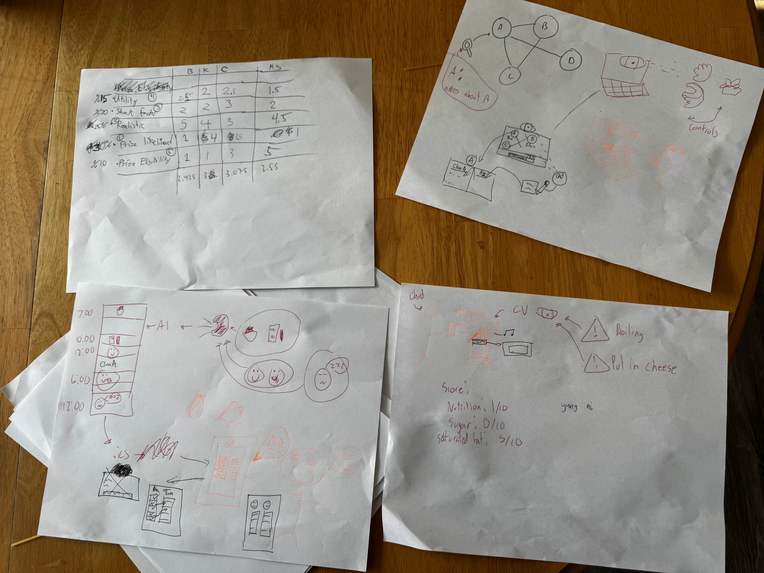

First, we brainstormed the idea with collaborative sketches: each group member had three minutes to draw possible features of the application on a shared piece of paper. We kept iterating until we had an app layout we were satisfied with. We then assigned features to team members, with members working on different branches. The entire team reviewed each branch and merged it if the feature was implemented well. After this, and many bug fixes, we had a working product.

Challenges we ran into

Initially, we wanted to combine the two "modes" of the app into one, but this proved difficult. Distance detection used Google's ML Kit object detection library, and object labelling used Google's ML Kit image labelling library. Given the time constraint, we found it difficult to get these libraries to work with each other directly. Sometimes incorrect object labels were given (such as "bird", "tire", or "fog" when none of these were present). Other times, the distance calculation was highly inaccurate, often triggering false-positive warnings. These errors were caused by a conflict between the two libraries.

We solved this issue by defining an order of operations for the libraries on any given frame. The first mode, "distance detection", prioritized the object detection library and only then allowed the detected objects to be labelled. This eliminated the conflict between the two libraries. Afterwards, we realized that some users may want to use the app simply to label objects, so we created the second mode, which prioritized the image labelling library and only gave warnings from the object detection library when an object was too close to be identified.
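The per-frame ordering described above can be sketched roughly as follows, in Python for brevity rather than Dart. This is an illustration under stated assumptions, not the app's code: `detect`, `label_region`, and `label_frame` are hypothetical stand-ins passed in by the caller (they are not ML Kit calls), the mode names and the 1.5 m threshold are invented, and an empty label list is assumed to stand in for "too close to be identified".

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Detection:
    box: tuple                   # (left, top, right, bottom) in pixels
    distance_m: float            # estimated distance from the phone
    label: Optional[str] = None  # filled in after labelling, if any


@dataclass
class FrameResult:
    labels: List[str] = field(default_factory=list)
    warnings: List[Detection] = field(default_factory=list)


def process_frame(frame, mode, detect, label_region, label_frame,
                  threshold_m=1.5):
    """Run the two (hypothetical) libraries in a fixed order for one frame."""
    result = FrameResult()
    if mode == "navigation":
        # Detection runs first; labelling only sees what was detected.
        for det in detect(frame):
            det.label = label_region(frame, det.box)
            if det.label:
                result.labels.append(det.label)
            if det.distance_m < threshold_m:
                result.warnings.append(det)
    elif mode == "identify":
        # Labelling runs first; detection is only a fallback.
        result.labels = list(label_frame(frame))
        if not result.labels:
            # Assumption: no labels means the object is too close to identify.
            result.warnings = [d for d in detect(frame)
                               if d.distance_m < threshold_m]
    else:
        raise ValueError(f"unknown mode: {mode}")
    return result
```

Passing the detector and labeller in as functions keeps the ordering logic separate from either library, so the sequence can be checked with simple stubs before wiring in the real models.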

Accomplishments that we're proud of

Our group is proud that we dove into an entirely new tech stack. Our team members had individual experience with Java/Kotlin and computer vision, but not with Swift, Dart, or the Flutter framework. Debugging was definitely a challenge, but with a lot of persistence and some help from Claude/Copilot, we were able to get the app up and running.

What we learned

Aside from learning the very powerful Flutter framework, we found that the most valuable takeaway from NWHacks 2026 was improving our Git skills. Our members had used Git for individual projects, but since this was our first hackathon, none of us had worked in a collaborative repository. We not only learned new Git commands but also gained a deeper understanding of what it's like to work on a shared codebase, something we believe will be incredibly useful in the future.

What's next for The Right Direction

We plan to expand our app with more modes. For example, in our initial planning, we wanted to include a sign-language-to-English translator to help deaf people communicate more easily. However, we ran into a problem: a phone's front-facing camera frame was not large enough to capture everything required. Much later in our design process, we realized that we could use the rear camera on its 0.5x setting with the screen facing away from the user, so the person they are communicating with could read the English translation in real time. By the time we came up with this, however, it was too close to the deadline to implement. Our team plans to implement this feature and many more in the future.
