Inspiration

Automatic tools for digital poster generation are important for poster designers because they can save a lot of time. Design is hard work, especially for digital posters, which require careful consideration of both utility and aesthetics. Abstract principles of digital poster design cannot help designers directly. Instead, we propose an approach to learning design patterns that can serve as an assistant tool for digital poster generation.

What it does

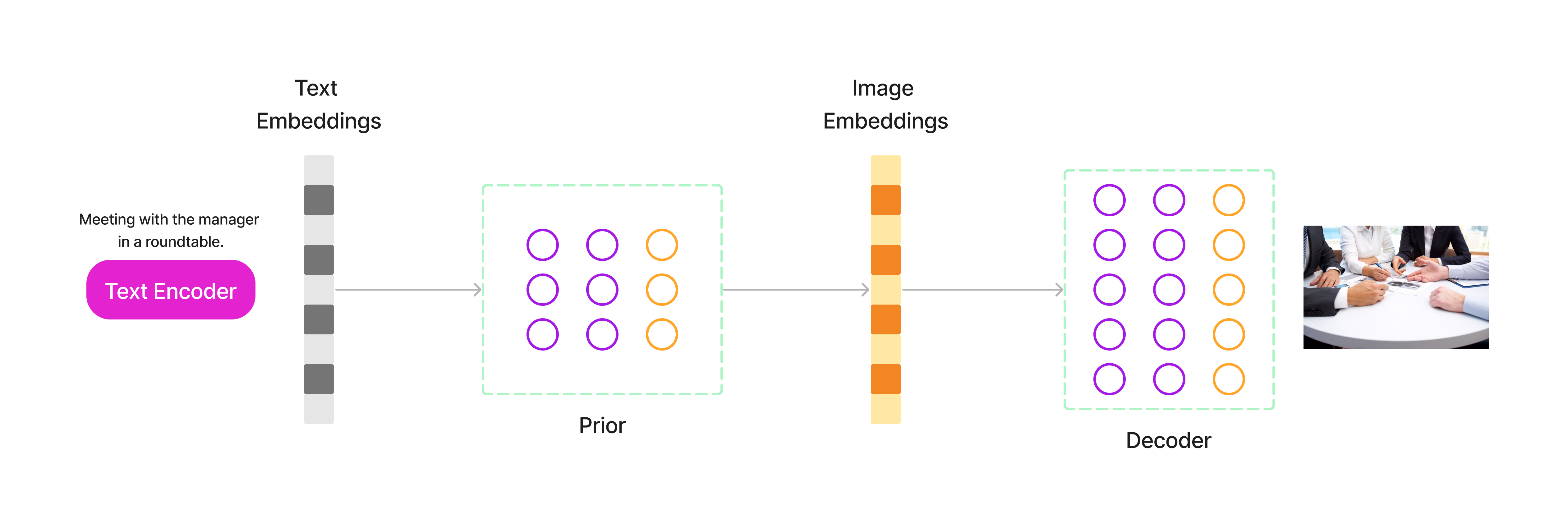

DALL-E is a machine learning model developed by OpenAI to generate digital images from natural language descriptions, called "prompts". DALL-E can generate imagery in multiple styles, including photorealistic imagery, paintings, and emoji. It can "manipulate and rearrange" objects in its images and can correctly place design elements in novel compositions without explicit instruction.

AI generated image for text "hackathon"

AI generated image for text "artificial intelligence"

For summarization, we use a deep learning model and NLP techniques to process the text. We use Transformers to download and train state-of-the-art pretrained models. Transformers can be used to classify and process text, images, and audio. Summarization creates a shorter version of a document or an article that captures all the important information. Along with translation, it is another example of a task that can be formulated as a sequence-to-sequence task.
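To show the input and output of a summarizer without downloading a model, here is a frequency-based extractive summarizer in plain Python. It is a deliberately simpler stand-in, not the transformer-based sequence-to-sequence approach described above: it selects existing sentences instead of generating new text. The names `extractive_summary` and `STOP_WORDS` are illustrative.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list; a real system would use a fuller one.
STOP_WORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "is", "are",
              "it", "for", "on", "with", "as", "that", "this", "be", "by"}


def split_sentences(text: str) -> list[str]:
    """Split text into sentences at '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def extractive_summary(text: str, max_sentences: int = 2) -> str:
    """Return the highest-scoring sentences, kept in their original order."""
    sentences = split_sentences(text)
    words = re.findall(r"[a-z']+", text.lower())
    freq = Counter(w for w in words if w not in STOP_WORDS)

    def score(sentence: str) -> float:
        tokens = [w for w in re.findall(r"[a-z']+", sentence.lower())
                  if w not in STOP_WORDS]
        # Average word frequency, so long sentences are not automatically favored.
        return sum(freq[w] for w in tokens) / max(len(tokens), 1)

    # sorted() is stable even with reverse=True, so ties keep document order.
    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]),
                    reverse=True)
    keep = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in keep)
```

For example, `extractive_summary(article_text, max_sentences=3)` returns the three sentences whose words occur most often in the article, in their original order.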

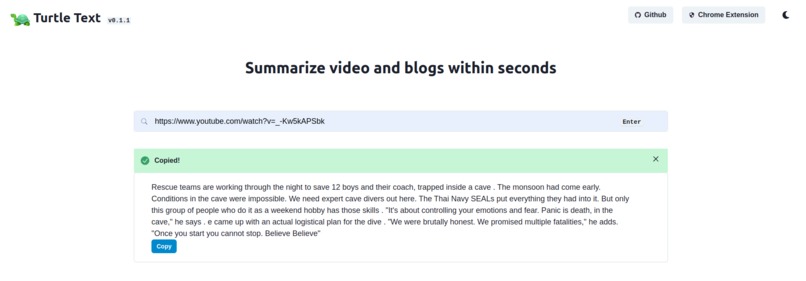

Summarize video: Enter the URL of the video you want to summarize in the input field. The video is processed by the NLP model, and the summarized text is returned as the response.
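Before a video can be summarized, the submitted URL has to be turned into something the backend can look up. The writeup does not say which video platforms are supported; the sketch assumes YouTube links, and `extract_video_id` is a hypothetical helper built only on the standard library's `urllib.parse`.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def extract_video_id(url: str) -> Optional[str]:
    """Return the YouTube video ID from a watch, shorts, or youtu.be URL, else None."""
    parsed = urlparse(url.strip())
    host = (parsed.hostname or "").lower()
    if host == "youtu.be":
        # Short links carry the ID as the first path segment: youtu.be/<id>
        video_id = parsed.path.lstrip("/").split("/")[0]
        return video_id or None
    if host in {"youtube.com", "www.youtube.com", "m.youtube.com"}:
        if parsed.path == "/watch":
            # Standard links carry the ID in the "v" query parameter.
            return parse_qs(parsed.query).get("v", [None])[0]
        if parsed.path.startswith("/shorts/"):
            # Shorts links look like /shorts/<id>
            return parsed.path.split("/")[2] or None
    return None
```

Returning `None` for unrecognized links lets the web application reject bad input before any model work starts.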

Web application

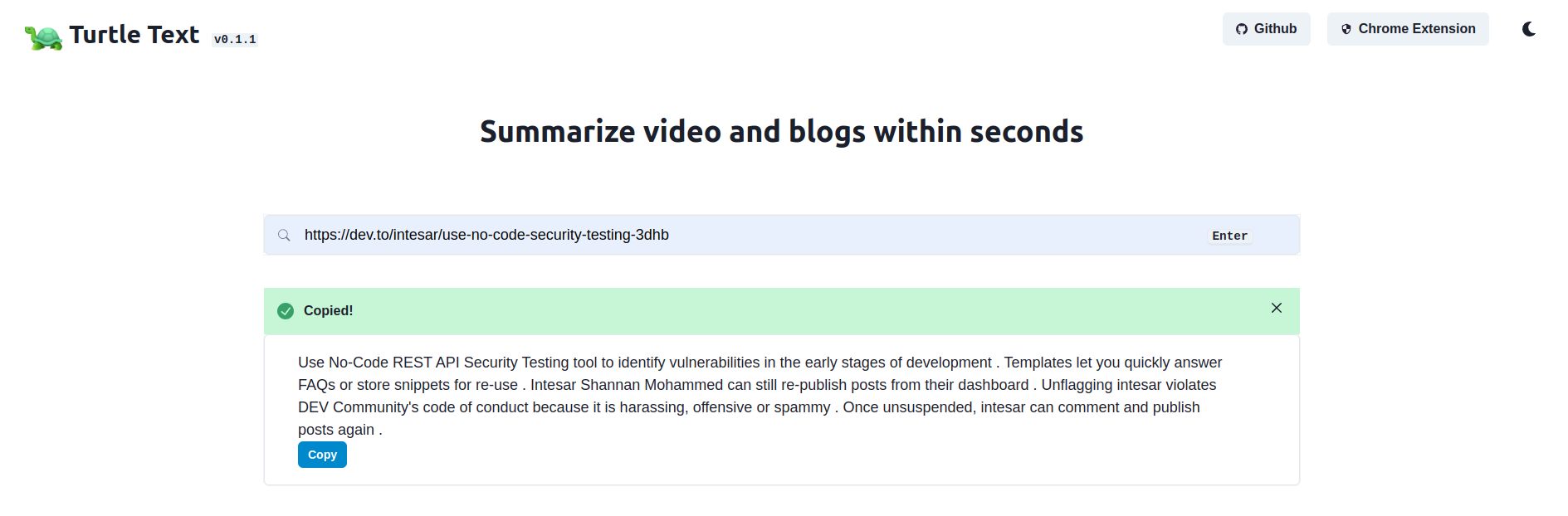

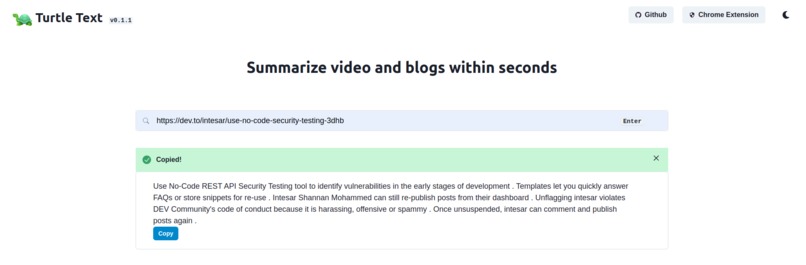

Summarize blogs:

Enter the URL of the blog you wish to summarize in the input area. The NLP model analyzes the blog and returns the summarized text in response.
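For blog summarization, the page's HTML must be reduced to plain text before it reaches the NLP model. Here is a minimal sketch using the standard library's `html.parser`, assuming the article body lives in `<p>` elements (real blogs vary). `ParagraphExtractor` and `blog_text` are hypothetical names, and downloading the page is left out so the example runs offline.

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collect the text inside <p> elements, ignoring <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()  # convert_charrefs=True by default, so &amp; becomes &
        self.paragraphs: list[str] = []
        self._in_p = False
        self._skip_depth = 0
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "p":
            self._in_p = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1
        elif tag == "p" and self._in_p:
            # Collapse runs of whitespace inside the paragraph.
            text = " ".join("".join(self._buffer).split())
            if text:
                self.paragraphs.append(text)
            self._in_p = False

    def handle_data(self, data):
        if self._in_p and not self._skip_depth:
            self._buffer.append(data)


def blog_text(html: str) -> str:
    """Return the page's paragraph text, one paragraph per line."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.paragraphs)
```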

Web application

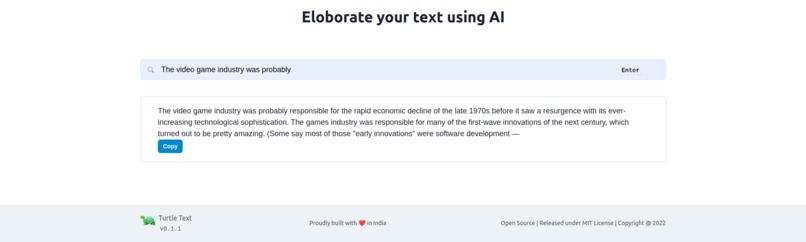

To generate text, we use the GPT-2 model, which can generate long passages of text. GPT-2 (Generative Pre-trained Transformer 2) is a state-of-the-art machine learning architecture for natural language processing (NLP).
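GPT-2 generates text autoregressively: it predicts one token at a time, appends it, and feeds the extended sequence back in. The toy sketch below shows only that loop. It is not GPT-2: a bigram word-count table (`train_bigrams`, a hypothetical name) stands in for the neural network and conditions only on the previous word, and decoding is greedy rather than sampled.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count which word follows which: a toy stand-in for a learned model."""
    words = corpus.lower().split()
    table: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        table[current][nxt] += 1
    return table


def generate(table: dict[str, Counter], prompt: str, max_new_words: int = 5) -> str:
    """Greedy autoregressive decoding: repeatedly append the most likely next word."""
    output = prompt.lower().split()
    if not output:
        return ""
    for _ in range(max_new_words):
        candidates = table.get(output[-1])
        if not candidates:
            break  # no known continuation for the last word
        # most_common(1) picks the highest count; ties go to the word seen first.
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)
```

Replacing `most_common(1)` with random sampling weighted by the counts would give more varied output, which is closer to the sampling strategies commonly used with GPT-2.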

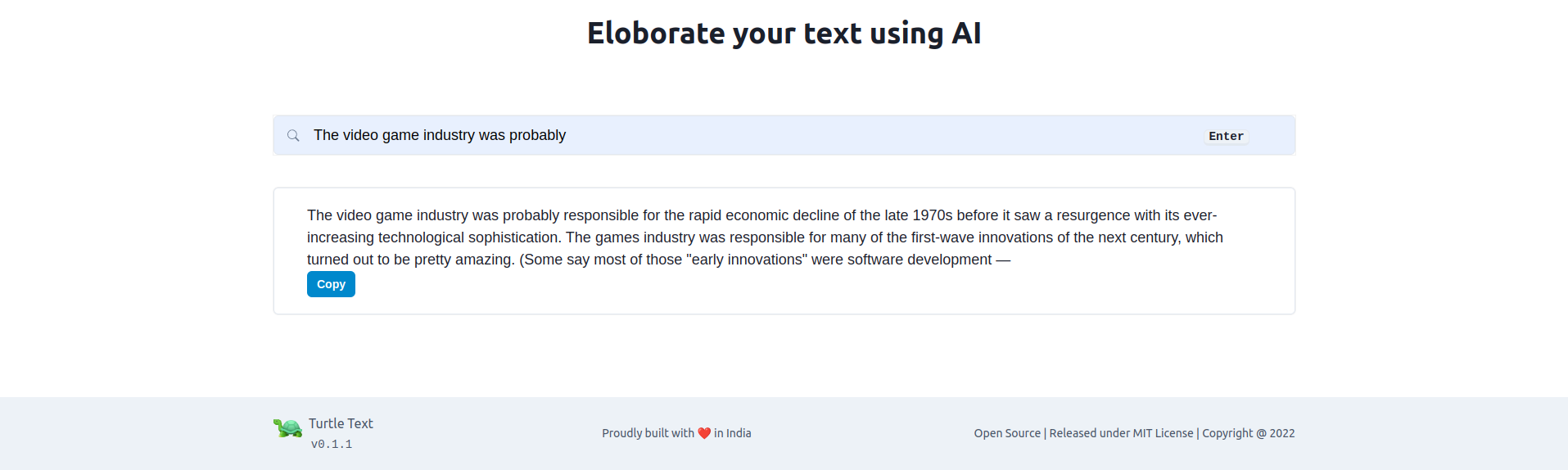

Elaborate texts: Enter a short passage of text that you are familiar with, and our model will analyze it and expand it into a longer, more detailed text.

Web application

Architecture for Text Generation

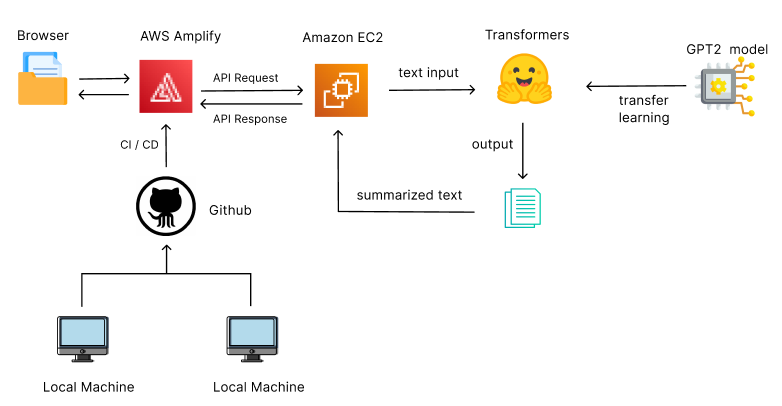

How we built it

We have recreated the DALL-E model. The original DALL-E model has some limitations; for example, it cannot distinguish between "a red shirt" and "red-colored objects". Our recreation adds the ability to differentiate between colors and things. Using this model, we generate an image based on the provided meet-up agenda, and we then create a poster from the image, which is fetched through an API. We also use GitHub Actions to automate this task whenever a user provides a meet-up agenda.
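The agenda-to-poster flow described above can be sketched as a small pipeline. This is a hypothetical illustration, not the project's code: the `Agenda` fields, `build_prompt`, `make_poster`, and the prompt wording are all assumptions, and the image model is passed in as a function (`generate_image`) so the sketch runs without calling any real API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agenda:
    """Hypothetical meet-up agenda; the project's real input format is not documented."""
    title: str
    date: str
    topics: list[str] = field(default_factory=list)


def build_prompt(agenda: Agenda) -> str:
    """Turn the agenda into a text prompt for the image model (wording is assumed)."""
    topics = ", ".join(agenda.topics) if agenda.topics else "technology"
    return f"A vibrant event poster illustration about {topics}"


def make_poster(agenda: Agenda, generate_image: Callable[[str], bytes]) -> dict:
    """Generate artwork from the agenda, then pair it with the text for layout."""
    prompt = build_prompt(agenda)
    image = generate_image(prompt)  # in practice, a call to the image-generation API
    return {
        "headline": agenda.title,
        "subheading": agenda.date,
        "prompt": prompt,
        "image": image,
    }
```

Passing the generator in as a parameter keeps the pipeline testable; an automation step such as a GitHub Actions workflow could call `make_poster` with a function that wraps the real image API.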

To use transformers to synthesize higher-resolution images, the semantics of an image must be represented efficiently. A raw pixel representation does not scale, because the number of pixels grows quadratically with image resolution: doubling the width and height of an image quadruples its pixel count. Therefore, instead of representing an image with pixels, it is represented as a composition of perceptually rich image constituents drawn from a codebook.
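The scaling argument and the codebook idea can be checked with a few lines of arithmetic plus a nearest-neighbor lookup. This is a minimal sketch under stated assumptions: `pixel_values` and `quantize` are illustrative names, the codebook is a toy list of 2-D vectors rather than a learned one, and a real model would first encode image patches with a trained encoder. For reference, the original DALL-E compresses a 256×256 RGB image (196,608 values) into a 32×32 grid of 1,024 codebook tokens; the codebook size of this project's recreation is not stated.

```python
import math


def pixel_values(side: int, channels: int = 3) -> int:
    """Number of values in a square side x side image with the given channels."""
    return side * side * channels


def quantize(patches: list[list[float]], codebook: list[list[float]]) -> list[int]:
    """Replace each patch vector by the index of its nearest codebook entry."""
    return [
        # Euclidean nearest neighbor; the patch becomes a single discrete token.
        min(range(len(codebook)), key=lambda k: math.dist(patch, codebook[k]))
        for patch in patches
    ]
```

`pixel_values(512)` is four times `pixel_values(256)`, while `quantize` maps each patch, however many values it holds, to one integer token that a transformer can model like a word.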

DALL-E architecture

NLP and deep learning approaches can be used to perform operations on text with improved consistency. Users can submit links to their blogs or videos, and our deep learning model processes them and outputs a text summary. To process text, we use the DistilBERT deep learning model, which was pretrained on a very large corpus of unlabeled text: English Wikipedia (2,500 million words) and BookCorpus (800 million words). Users can also submit text documents to be summarized. In addition, we provide a text elaboration feature that expands a short input text into a longer one; for this we use GPT-2 (Generative Pre-trained Transformer 2) models, which were trained on the WebText dataset. We'll offer multiple interfaces, including a web application, a mobile app, and a browser extension, so users can complete their desired actions with a single click.
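Because DistilBERT accepts at most 512 tokens per input, long blog posts or video transcripts are usually split into pieces before being processed. The sketch below assumes a simple word-based split with overlapping windows; `chunk_words` and its default budget of 300 words are hypothetical choices, since words and model tokens do not map one to one and the project's actual preprocessing is not described.

```python
def chunk_words(text: str, max_words: int = 300, overlap: int = 30) -> list[str]:
    """Split text into overlapping word windows that fit a model's input limit."""
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap  # each window starts `step` words after the previous one
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the text
    return chunks
```

Each chunk could then be summarized on its own and the partial summaries joined; the overlap reduces the chance that a sentence split across a boundary loses its context.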

Challenges we ran into

Our DALL-E recreation uses GPT-3 to generate images. Because GPT-3 was trained on a very broad range of data, it does not require further training for distinct language tasks.

Accomplishments that we're proud of

We have successfully recreated the DALL-E model with the required features, and our project is hosted on the web so that anyone can use our application. Implementing an API and using GitHub Actions made our work much easier and more time-efficient. The model creates great images, the images are fetched properly, and our project creates posters successfully.

What we learned

We learned AWS Amplify, which we used to scale up our solution and host our project. We learned AWS Lambda, which we used to build data-processing triggers for our project and which also provides security. We also learned about GAN (generative adversarial network) models, which we used to generate data instances that resemble our training data. Finally, we learned about Hugging Face Transformers and the AWS EC2 service.

What's next

As future work, our product could also be applied to directly learning general design patterns, such as web-page design and single-page graphical design, given the corresponding layout styles. Currently, we do not consider the font types of posters; we will address this in the future.

Built With

- aws-amplify

- aws-ec2

- aws-sage-maker

- dall-e

- fastapi

- github

- gpt2

- transformers
