codedrones proudly presents unLost: How to find your way using ML & CV powered AR

Inspiration

It is an interesting problem because it is a challenging one. Indoor navigation is also not sufficiently solved unless the space is prepared beforehand or the app is built specifically for that space. We want to achieve it using only information that already exists.

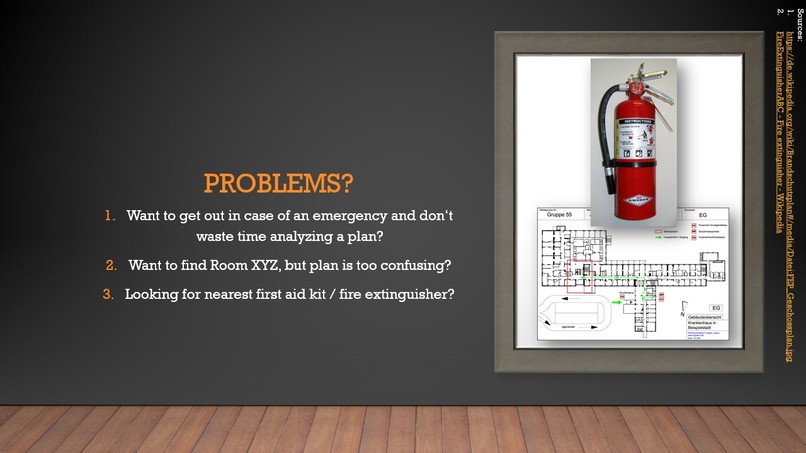

What it does

The app helps users navigate and find their location indoors. In addition, it can provide useful information in case of an emergency.

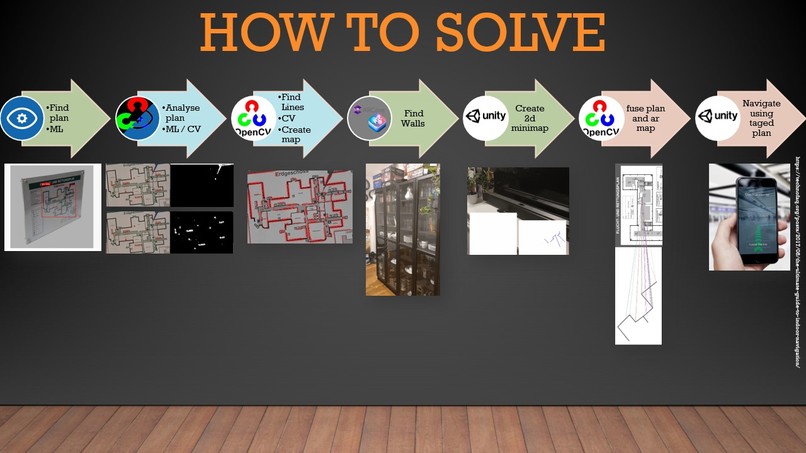

How we built it

We quickly decided to use Unity together with AR Core as the backbone, since one of the team members had experience with it.

We trained a computer vision model for object detection to find the floor plan in images. This model was trained using customvision.ai, since we only had a few training images, which we pulled from the web. We tried to export the trained model and use it on device, but ran into compatibility problems in Unity. We therefore decided to make the trained model available online. OpenCV via Python was used to find objects in the plan, starting with walls.

The Unity app itself uses AR Core to recognize walls. The wall locations are accumulated and transformed to form the minimap, which shows the walls relative to the user while the user moves.

When a picture is taken, it is sent to the Prediction web API, which sends back the results, containing match probabilities, labels and bounding boxes. This information is used to crop the picture and analyse it further via OpenCV on device. The analysis consists of conversion to grayscale, Gaussian blurring, dynamic thresholding via Otsu's method (https://en.wikipedia.org/wiki/Otsu%27s_method), Canny edge detection and finally probabilistic Hough line detection (https://opencv24-python-tutorials.readthedocs.io/en/latest/py_tutorials/py_imgproc/py_houghlines/py_houghlines.html). We used the proprietary OpenCV for Unity for the integration (https://enoxsoftware.com/opencvforunity/).
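To make the dynamic thresholding step more concrete, here is a minimal sketch of what Otsu's method computes, written in plain Python rather than with the OpenCV call the app relies on. It is an illustration only, not our actual code: the function names `otsu_threshold` and `binarize` and the sample pixel values are made up for this example. The idea is to try every possible threshold and keep the one that best separates dark pixels from bright ones (the largest between-class variance).

```python
def otsu_threshold(pixels):
    """Return the threshold t (0..255) that maximises between-class variance.

    `pixels` is a flat list of 8-bit grayscale values. Pixels <= t are
    treated as one class, pixels > t as the other.
    (Hypothetical helper for illustration, not the app's code.)
    """
    # Histogram of intensities
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1

    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_t, best_var = 0, -1.0
    w_bg = 0    # number of pixels at or below the candidate threshold
    sum_bg = 0  # sum of their intensities
    for t in range(256):
        w_bg += hist[t]
        sum_bg += t * hist[t]
        if w_bg == 0:
            continue  # no pixels in the dark class yet
        w_fg = total - w_bg
        if w_fg == 0:
            break     # every pixel is in the dark class, nothing left to split
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        # Between-class variance, up to a constant factor of 1 / total**2
        between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if between > best_var:
            best_var, best_t = between, t
    return best_t


def binarize(pixels, t):
    """Map pixels above the threshold to 255 and all others to 0."""
    return [255 if p > t else 0 for p in pixels]


# Made-up sample: dark "wall" pixels and bright "paper" pixels
sample = [20] * 50 + [30] * 50 + [200] * 40 + [220] * 60
t = otsu_threshold(sample)
binary = binarize(sample, t)
```

For a clearly bimodal input like this sample, the chosen threshold falls in the gap between the dark and the bright group, which is why the method copes with floor plan photos taken under different lighting without a hand-tuned value.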

Challenges we ran into

Unity was a pain point. Everything from the installation, to importing the Custom Vision ONNX model, to getting OpenCV running was difficult because of various incompatibilities.

The 3D vector geometry for the minimap creation was challenging but solvable in the end. The analysis of the floor plan to extract walls and other objects is still brittle and works much better off device than on device. The fusion of floor plan and minimap, as well as the correct mapping of the user location, is not solved yet.
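As a rough illustration of the kind of geometry involved in the minimap, the sketch below converts a wall point from world coordinates into coordinates relative to the user. It is not taken from our Unity code. It assumes a Unity-style convention (Y is up, the ground plane is X/Z, a yaw of 0 degrees faces +Z, and positive yaw turns clockwise when seen from above), and the function name `world_to_user` is hypothetical.

```python
import math


def world_to_user(point_xz, user_xz, yaw_deg):
    """Express a ground-plane point relative to the user.

    Returns (right, forward): how far the point lies to the user's right
    and in front of the user. Assumes a Unity-style convention: yaw 0
    faces +Z and positive yaw turns clockwise when seen from above.
    (Hypothetical helper for illustration.)
    """
    # Translate so the user sits at the origin
    dx = point_xz[0] - user_xz[0]
    dz = point_xz[1] - user_xz[1]

    # Project onto the user's right and forward axes
    yaw = math.radians(yaw_deg)
    right = dx * math.cos(yaw) - dz * math.sin(yaw)
    forward = dx * math.sin(yaw) + dz * math.cos(yaw)
    return right, forward


# A wall point one unit along +X from a user who is facing +X (yaw 90)
# should come out straight ahead of the user.
r, f = world_to_user((3.0, 2.0), (2.0, 2.0), 90.0)
```

Applying this to every accumulated wall point each frame keeps the user at the centre of the minimap with "up" meaning "ahead". Scaling the result to pixels is a separate step.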

Accomplishments that we're proud of

Being able to solve some of the hard technical challenges and integrating the solutions into one running app is definitely something we are proud of. We are also proud that we did not give up, no matter what the frameworks threw at us.

What we learned

A ton about Unity and how to integrate different parts into it, including how to (theoretically) include machine learning models in Unity and deploy them to mobile platforms. Hacking remotely!

What's next for codedrones

We will tackle the remaining challenges in this app, especially making everything run on device only. Apart from that, we have a lot more interesting and challenging ideas we want to explore. Maybe at the next hackathon!
