Inspiration

As college students, we constantly juggle academics, mental health, and the need to unwind. We wanted a single companion that could adapt to whatever we needed in the moment—whether that's help studying for a brutal CS61B exam, someone to talk to after a rough day, or a vibe-matching DJ to set the mood while we work. We realized AI agents could finally make this possible: one interface, three modes, all personalized to you.

- Slides: https://www.canva.com/design/DAG6wywmrgs/34zkXr0X_K0DCwG7Vr-b_g/edit?utm_content=DAG6wywmrgs&utm_campaign=designshare&utm_medium=link2&utm_source=sharebutton

What it does

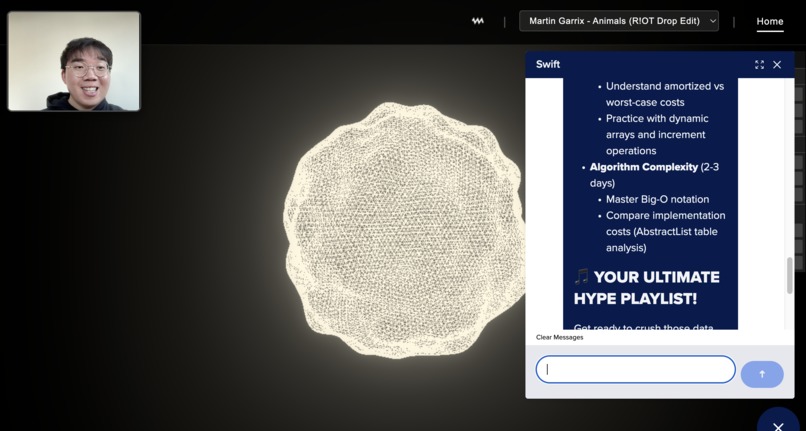

VibeMate is an AI agent with three distinct personalities:

- EDM Artist & DJ: Analyzes your mood in real-time and generates or recommends music to match. Feeling stressed? Here's some lo-fi. Ready to lock in? Time for high-energy beats.

- Counselor: An empathetic listener that detects your emotional state through facial expressions and responds with genuine support and wellness guidance.

- Study Buddy: Your personal tutor with access to your actual class notes. It knows CS61B inside and out and can quiz you, explain concepts, or help you debug your understanding.

- All three modes share context—so if you're stressed about an exam, VibeMate might help you study, check in on how you're feeling, and queue up the perfect focus playlist.
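
To make the shared-context idea concrete, here is a minimal sketch of how three modes might read from one context object. Everything in it is an assumption for illustration: the `SharedContext` class, the `respond` function, the mode names, and the canned replies are hypothetical stand-ins, not VibeMate's actual agents or prompts.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Hypothetical mode names; the real agent identifiers are not shown in this write-up.
MODES = ("dj", "counselor", "study_buddy")

# Assumed mood-to-music mapping, based on the two examples given above.
PLAYLISTS = {"stressed": "lo-fi", "focused": "high-energy beats"}


@dataclass
class SharedContext:
    """State every mode can read, such as the latest detected mood."""
    mood: str = "neutral"
    topic: Optional[str] = None
    history: List[Tuple[str, str]] = field(default_factory=list)


def respond(mode: str, message: str, ctx: SharedContext) -> str:
    """Record the turn in shared context and return a placeholder reply."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    ctx.history.append((mode, message))
    if mode == "dj":
        # Fall back to a generic playlist for moods we have no mapping for.
        return f"Queuing {PLAYLISTS.get(ctx.mood, 'a mixed playlist')}"
    if mode == "counselor":
        return f"You seem {ctx.mood}. Want to talk about it?"
    return f"Let's review {ctx.topic or 'your notes'}"
```

Because each mode receives the same `ctx`, a mood detected during a study session is still available when the DJ picks music, and the `history` list keeps track of which mode handled which turn.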

How we built it

- Cline × Cursor for rapid prototyping—AI-assisted coding let us iterate fast and ship features in hours instead of days

- Hume AI for real-time sentiment analysis via computer vision, detecting emotions from facial expressions

- Tadata for MCP integration and multi-agent orchestration, enabling seamless context switching between modes

- DigitalOcean Gradient AI for our custom knowledge base, indexing Spotify metadata and uploaded CS61B course materials as vector embeddings

- Three.js for immersive 3D visualizations with physics-based animations that respond to music and mood
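
For readers new to vector embeddings, the sketch below shows the general idea behind looking things up in a knowledge base like this one: rank stored vectors by cosine similarity to a query vector. It does not use the DigitalOcean Gradient AI API; the `cosine_similarity` and `top_k` helpers, the document ids, and the 3-dimensional vectors are all made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=2):
    """Return the ids of the k entries most similar to the query vector."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:k]]


# Toy "embeddings"; a real embedding model produces hundreds of dimensions.
index = {
    "linked_lists_notes": [0.9, 0.1, 0.0],
    "tree_traversal_notes": [0.1, 0.9, 0.0],
    "lofi_playlist_metadata": [0.0, 0.1, 0.9],
}

print(top_k([1.0, 0.2, 0.0], index, k=1))
```

A hosted knowledge base would handle the embedding and ranking for you; the point here is only that course notes and music metadata can share one index and still be told apart by similarity.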

Challenges we ran into

- Agent coordination: Getting three distinct agent personalities to share context without bleeding into each other was tricky. We had to carefully design the orchestration layer so the DJ doesn't suddenly start giving study advice mid-song.

- Latency: Real-time emotion detection + knowledge base queries + 3D rendering is a lot. We spent significant time optimizing the pipeline so responses feel instantaneous.

- Knowledge base tuning: Making the Study Buddy actually useful for CS61B required careful chunking and embedding of course materials—too granular and it lost context, too broad and it couldn't find specific answers.
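
The chunking trade-off above can be sketched as a sliding window with overlap, so that a concept split across a chunk boundary still appears whole in at least one chunk. This is a generic approach, not necessarily what we shipped: the `chunk_words` function and its default sizes are hypothetical, and the right values depend on the course material.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into word-based chunks where consecutive chunks share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a chunk reaches the end, to avoid a trailing chunk of pure overlap.
        if start + chunk_size >= len(words):
            break
    return chunks
```

A smaller `chunk_size` gives more precise matches but less surrounding context, while a larger `overlap` reduces the chance of cutting an explanation in half at the cost of storing more duplicated text.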

Accomplishments that we're proud of

- Built a fully functional multi-agent system in a single hackathon

- Real-time emotion detection that actually influences agent behavior

- VibeMate correctly answers questions about linked lists, asymptotic analysis, and tree traversals from our actual class notes

- The 3D visualizations are genuinely mesmerizing—particles that dance to the beat and shift colors based on detected mood

What we learned

- MCP (Model Context Protocol) is a game-changer for agent orchestration—it made multi-agent coordination far more manageable than we expected

- Emotion-aware AI requires thoughtful UX; we had to be careful not to make users feel surveilled

- Vector databases need careful tuning; garbage in, garbage out applies heavily to RAG systems

- AI-assisted development tools like Cline genuinely 10x productivity when you learn to use them well

What's next for VibeMate

- Collaborative mode: Study sessions with friends where VibeMate can mediate group learning and keep everyone on track

- Deployment: Ship VibeMate on DigitalOcean App Hosting with Tadata MCP Integrations & Connectors

Built With

- cline

- cursor

- digitalocean

- elevenlabs

- hume

- tadata

- three.js
