Inspiration

Several people in our group have lived the “language barrier on a phone call” problem when the stakes were high. Daniel's Vietnamese-speaking parents have hit dead-ends with government offices. Leo's Arabic-speaking family has struggled to get timely, accurate help in medical settings. Dylan's Malayalam-speaking parents have been forced into awkward three-way calls, rushed interpretations, or simply giving up when services didn’t provide proper translation. Ivan's Spanish-speaking parents have struggled to communicate with large retail stores. We kept seeing the same pattern: when language breaks, human connection breaks, and phone calls become stressful, slow, and error-prone.

At the same time, customer experience research keeps reinforcing that people do not want to be pushed into fully automated experiences. Many customers prefer speaking with a live human and feel they get better outcomes that way.

So we asked: what if AI did not replace the human conversation, and instead removed the language barrier and let people stay human?

What it does

YaGetMe AI is a real-time, bidirectional voice translation system for live phone calls, built to preserve the natural flow of conversation.

- A caller dials a standard phone number.

- Both sides speak normally in their own language.

- YaGetMe AI translates speech in near real time so each person hears the other in their preferred language, with low-latency turn-taking. This helps keep the tone, pacing, emotion, and trust of the conversation intact.

- It works across any industry that relies on calls, including healthcare, government services, insurance, legal, hospitality, customer support, education, emergency response, and more.

How we built it

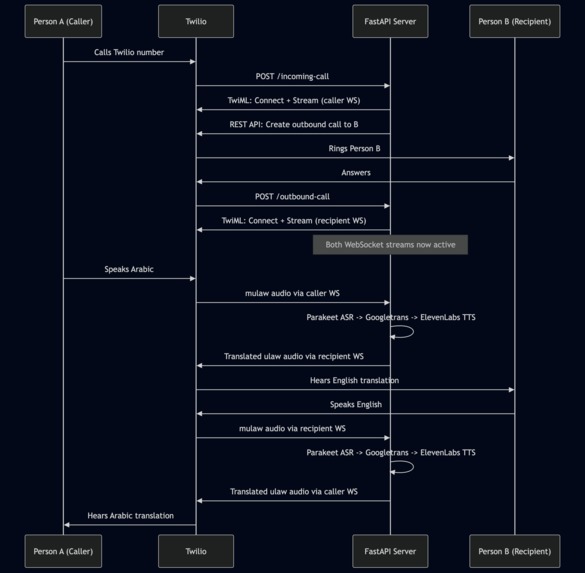

We built an end-to-end streaming pipeline optimized for low latency.

- Twilio Media Streams to bridge two call legs and create persistent WebSocket audio streams for both the caller and the recipient.

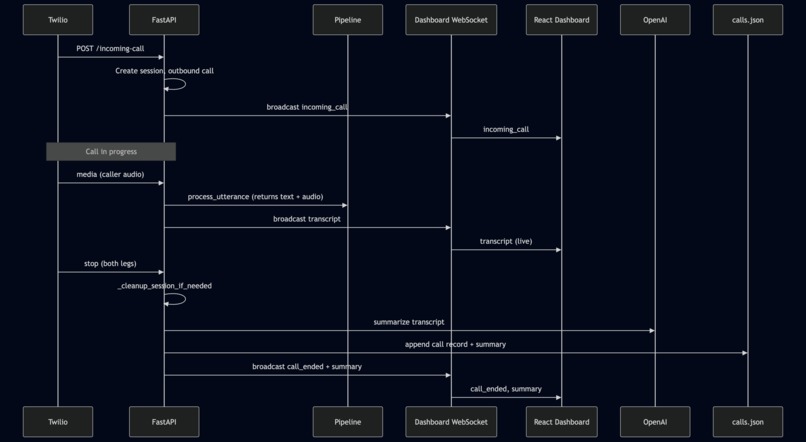

- FastAPI to orchestrate sessions, route audio, manage state, and broadcast live events to our dashboard.

- NVIDIA Parakeet (parakeet-1.1b-rnnt-multilingual-asr) for streaming multilingual ASR in 25 languages.

- Googlelang to detect the caller’s native language and translate both directions:

  - caller to recipient’s desired language

  - recipient to caller’s detected language

- ElevenLabs TTS to synthesize translated audio and stream it back to the opposite call leg.

- A React dashboard receiving transcripts and call events via a server WebSocket.

- Post-call, OpenAI summarization and persistence into calls.json for structured call records.
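
As a rough illustration of the audio hop between the two call legs, the sketch below decodes one inbound Twilio Media Streams `media` message and wraps audio for the opposite leg. The JSON fields (`event`, `streamSid`, `media.payload` carrying base64-encoded mu-law audio) follow Twilio's message format; the `CallSession` class, its `opposite_leg` method, and the example stream SIDs are hypothetical names for illustration, not the project's actual code.

```python
import base64
import json
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class CallSession:
    """Hypothetical pairing of the two Twilio stream SIDs in one call."""
    caller_sid: str
    recipient_sid: str

    def opposite_leg(self, stream_sid: str) -> str:
        # Audio heard on one leg is played back on the other.
        if stream_sid == self.caller_sid:
            return self.recipient_sid
        if stream_sid == self.recipient_sid:
            return self.caller_sid
        raise KeyError(f"unknown stream: {stream_sid}")


def decode_media(raw: str) -> Optional[Tuple[str, bytes]]:
    """Return (stream_sid, mu-law bytes) for a 'media' event, else None."""
    msg = json.loads(raw)
    if msg.get("event") != "media":
        return None  # e.g. 'connected', 'start', or 'stop' events
    return msg["streamSid"], base64.b64decode(msg["media"]["payload"])


def encode_media(stream_sid: str, audio: bytes) -> str:
    """Wrap mu-law audio as an outbound 'media' message for one leg."""
    payload = base64.b64encode(audio).decode("ascii")
    return json.dumps(
        {"event": "media", "streamSid": stream_sid, "media": {"payload": payload}}
    )
```

In the full pipeline, the ASR, translation, and TTS stages would sit between `decode_media` and `encode_media`; here the bytes pass straight through so the routing logic stays visible.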

Challenges we ran into

- Latency was the boss fight. Real-time translation is not useful if it feels like voicemail. We had to minimize buffering, control chunk sizes, and keep the pipeline responsive end-to-end.

- Model and API hunting. We tested multiple ASR, translation, and TTS options and learned quickly that “high quality” does not always mean “fast enough” for live calls. Finding the right balance of accuracy and responsiveness took iterations.

- Architecture churn. Early designs did not reliably support long-lived, bidirectional audio streaming. We rethought the system multiple times until we landed on Twilio Media Streams, which gave us stable, persistent WebSocket connections for both ends of the call. That reliability is what unlocked continuous audio retrieval and playback.

- State synchronization. Managing two call legs, two streams, real-time transcripts, and dashboard updates required careful session management and cleanup logic.
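
To make the chunk-size trade-off concrete: Twilio Media Streams carries 8 kHz, 8-bit mu-law audio, so one second of audio is 8000 bytes and every millisecond of buffering holds back 8 bytes before ASR can start. The sketch below regroups small incoming frames into fixed-duration chunks. The `AudioChunker` name and the 100 ms default are illustrative assumptions, not the values we settled on.

```python
MULAW_BYTES_PER_MS = 8  # 8000 samples/s * 1 byte/sample / 1000 ms


class AudioChunker:
    """Regroup small audio frames into fixed-duration chunks."""

    def __init__(self, chunk_ms: int = 100) -> None:
        self.chunk_bytes = chunk_ms * MULAW_BYTES_PER_MS
        self._buffer = bytearray()

    def feed(self, frame: bytes) -> list:
        """Add one frame; return every chunk that is now complete."""
        self._buffer.extend(frame)
        chunks = []
        while len(self._buffer) >= self.chunk_bytes:
            chunks.append(bytes(self._buffer[: self.chunk_bytes]))
            del self._buffer[: self.chunk_bytes]
        return chunks

    def flush(self) -> bytes:
        """Return leftover audio (shorter than one chunk), e.g. at end of turn."""
        rest = bytes(self._buffer)
        self._buffer.clear()
        return rest
```

Smaller chunks reach the ASR sooner but mean more calls per second; larger chunks do the opposite, which is the balance described above.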

Accomplishments that we're proud of

- Built a fully working, real-time phone-call translator for two live participants, with no special app required.

- Achieved a low-latency interaction feel that keeps conversations natural instead of robotic or delayed.

- Delivered a clean operator experience: live transcript streaming to a dashboard plus automatic post-call summary.

- Designed it to be industry-agnostic from day one. If you use phone calls, you can use YaGetMe AI.

What we learned

- People do not want AI to replace human support. They want better, more human outcomes. Surveys show many customers still prefer live human interaction and often report better results with humans than with AI-only service.

- Real-time systems are mostly about engineering discipline: streaming constraints, backpressure, jitter, and the “death by a thousand milliseconds” problem.

- Twilio Media Streams is a powerful primitive. Once we had reliable bidirectional audio streams, everything else became a solvable pipeline problem.
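
One way to picture backpressure in a live-audio pipeline is a bounded queue between stages that discards the oldest chunk when a slow consumer falls behind, so delay cannot grow without limit. This is a minimal sketch of that general policy using `asyncio.Queue`; the `put_drop_oldest` helper and the queue size are assumptions for illustration, and dropping audio is only one possible policy, not necessarily what our pipeline does.

```python
import asyncio


def put_drop_oldest(queue: asyncio.Queue, item) -> bool:
    """Enqueue without blocking. Returns True if an old item was dropped."""
    dropped = False
    if queue.full():
        queue.get_nowait()  # discard the stalest chunk to bound latency
        dropped = True
    queue.put_nowait(item)
    return dropped


async def demo() -> list:
    """Producer outruns the consumer: only the newest chunks survive."""
    queue = asyncio.Queue(maxsize=3)
    for chunk_id in range(5):
        put_drop_oldest(queue, chunk_id)
    return [queue.get_nowait() for _ in range(queue.qsize())]
```

Running `asyncio.run(demo())` leaves only the three most recent chunk IDs in the queue, which is the bounded-delay behavior the sketch is meant to show.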

What's next for YaGetMe AI

- Hardening for edge cases, including noisy environments, crosstalk, interruptions, low bandwidth, spotty connectivity, and speakers with heavy accents or code-switching.

- Smarter turn-taking, including tighter barge-in handling and adaptive buffering so the system stays fast without sacrificing intelligibility.

- Domain tuning with optional industry vocabulary packs, such as medical, legal, and insurance, to boost ASR and translation accuracy where terminology matters most.

- Trust and compliance features, including configurable retention, redaction, and audit trails for regulated industries, without breaking the real-time experience.
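
As a very small illustration of what transcript redaction could look like before a call record is stored, the sketch below masks long digit sequences such as phone or account numbers. The pattern, the `redact_numbers` name, and the placeholder text are all hypothetical; real redaction for regulated industries would need far broader rules than this.

```python
import re

# Hypothetical rule: 7 or more digits, optionally separated by
# single spaces or hyphens (e.g. phone or account numbers).
LONG_NUMBER = re.compile(r"\d(?:[ -]?\d){6,}")


def redact_numbers(transcript: str, placeholder: str = "[REDACTED]") -> str:
    """Replace long digit sequences in a transcript with a placeholder."""
    return LONG_NUMBER.sub(placeholder, transcript)
```

Because this runs on stored text after the call, it would not add latency to the live audio path.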

Built With

- elevenlabs

- fastapi

- nvidia

- python

- react

- twilio

- websockets
