Chenxi Liu1, Siqi Wang2, Matthew Fisher3, Deepali Aneja3, Alec Jacobson1,3

1University of Toronto, 2New York University, Courant Institute of Mathematical Sciences, 3Adobe Research, San Francisco

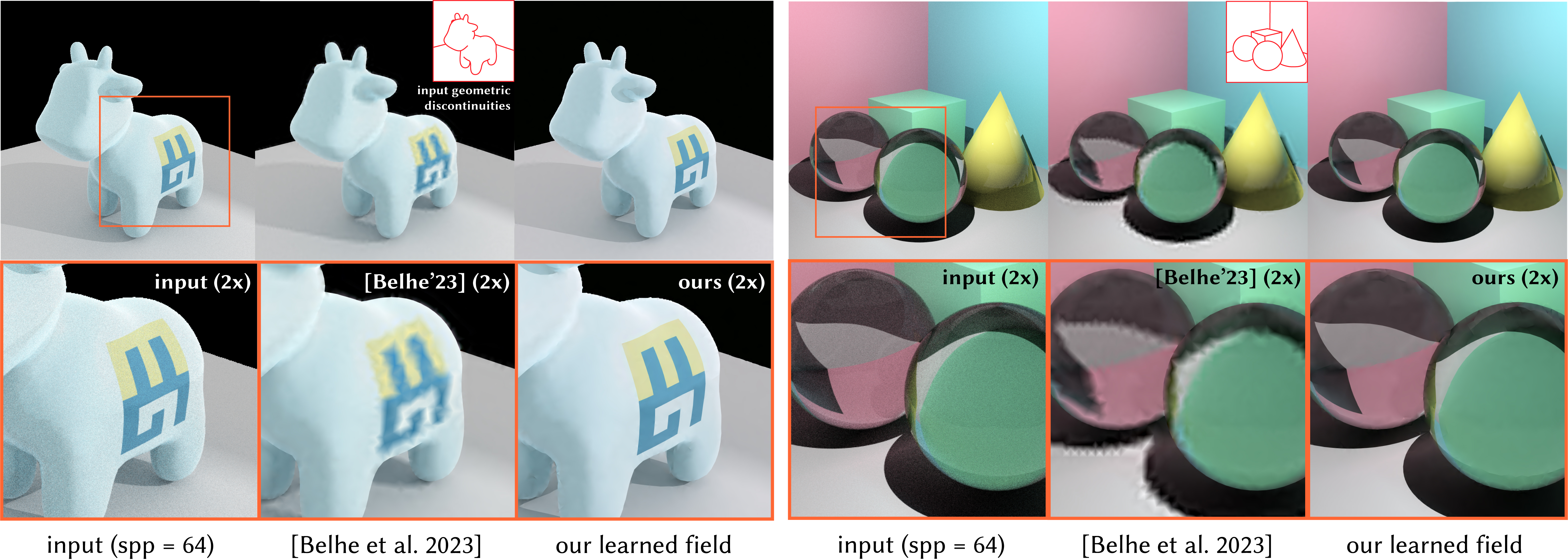

This repository contains the research code for the paper “2D Neural Fields with Learned Discontinuities.” It implements our discontinuous neural field and the end-to-end pipelines to fit images (and depth maps) while recovering unknown discontinuities.

- Linux, NVIDIA GPU recommended

- Python 3.8 (via conda), PyTorch 2.0.1 + CUDA 11.8

- torch-scatter (installed from the PyG wheel index)

- External meshing binary: TriWild

Create and activate the environment:

# From the repo root

conda env create -f environment.yml

conda activate discontinuity2d

Install torch-scatter manually after activating the env:

pip install torch-scatter -f https://data.pyg.org/whl/torch-2.0.1%2Bcu118.html

TriWild must be discoverable; set an explicit path if it is not on PATH:

export TRIWILD_PATH=/path/to/TriWild

Add the repo root to PYTHONPATH (or call as a module):

export PYTHONPATH="$(pwd):${PYTHONPATH}"

Run the end-to-end pipeline with a PNG (or a grayscale depth image if using --depth). If an SVG is not specified, a default unknown.svg is used:

python launching/run_pipeline.py path/to/your.png --snapshot outputs/run1

Optionally provide an SVG explicitly to define discontinuity locations:

python launching/run_pipeline.py path/to/your.png path/to/your.svg --snapshot outputs/run1

Useful options (see --help for full list):

- --fit selects the representation to optimize:
  - unknown_discontinuity (default): infer edges while fitting
  - discontinuity: use known SVG discontinuities (TriWild “known” mode)
  - per_vertex, per_edge: ablations/baselines

- --config-json: path to a JSON file with overrides merged into the defaults. Meshing parameters live here (there are no extra CLI flags for them):
  - defgrid_config.triwild_edge_r: TriWild target edge-length ratio (e.g., 0.003–0.02)
  - To disable remeshing, set defgrid_config.remesh_epoch to 0 (or omit the key)

- --depth: treat the input as a depth target (grayscale PNG)

- --debug: enables intermediate logs/plots. Default runs are quiet but show tqdm progress bars
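If you want to launch several runs from a script, the options above can be assembled into a command line programmatically. The following is a minimal sketch, not part of the repository: `build_pipeline_command` is a hypothetical helper, and it assumes that --fit and --config-json each take a single value and that --depth and --debug are plain switches. Check --help to confirm before relying on it.

```python
import shlex
import sys
from typing import List, Optional


def build_pipeline_command(
    image: str,
    snapshot: str,
    svg: Optional[str] = None,
    fit: Optional[str] = None,
    config_json: Optional[str] = None,
    depth: bool = False,
    debug: bool = False,
) -> List[str]:
    """Assemble an argv list for launching/run_pipeline.py (hypothetical helper)."""
    cmd = [sys.executable, "launching/run_pipeline.py", image]
    if svg is not None:
        cmd.append(svg)  # optional second positional argument
    cmd += ["--snapshot", snapshot]
    if fit is not None:
        cmd += ["--fit", fit]  # assumption: --fit takes one value
    if config_json is not None:
        cmd += ["--config-json", config_json]  # assumption: takes a file path
    if depth:
        cmd.append("--depth")  # assumption: boolean switch
    if debug:
        cmd.append("--debug")  # assumption: boolean switch
    return cmd


# Example: known-discontinuity fit with an explicit SVG.
example = build_pipeline_command(
    "path/to/your.png",
    "outputs/run1",
    svg="path/to/your.svg",
    fit="discontinuity",
)
print(shlex.join(example))
```

To actually run the command, pass the list to `subprocess.run(cmd, check=True)` from the repo root, with the environment variables from the setup steps already exported.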

Example presets live under inputs/ (e.g., inputs/diffusion_curves/diffusion_curves.json).
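As an illustration of how an override file for --config-json might be put together, here is a small sketch. It makes two assumptions that the repository may not share: the dotted names (defgrid_config.triwild_edge_r, defgrid_config.remesh_epoch) are taken to mean nested JSON keys, and "merged into defaults" is modeled as a recursive dictionary merge. The default values shown are placeholders, not the project's real defaults, and `deep_merge` is a hypothetical helper.

```python
import copy
import json
from typing import Any, Dict


def deep_merge(defaults: Dict[str, Any], overrides: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of `defaults` with `overrides` merged in recursively."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides are dicts: merge key by key instead of replacing.
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Placeholder defaults for illustration only (NOT the repository's values).
defaults = {"defgrid_config": {"triwild_edge_r": 0.01, "remesh_epoch": 100}}

# Overrides: a finer target edge-length ratio and remeshing disabled.
overrides = {"defgrid_config": {"triwild_edge_r": 0.005, "remesh_epoch": 0}}

merged = deep_merge(defaults, overrides)

# The overrides are what would be saved to a file and passed via --config-json.
print(json.dumps(overrides, indent=2))
```

The presets under inputs/ are the authoritative reference for the actual key layout; compare against one of them before writing your own file.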

Final artifacts are saved under --snapshot:

- fit_final.png: single-sample render (spp = 1) at input resolution

- fit_final_spp.png: anti-aliased render (spp = 16) at input resolution

- fit_final_spp_2x.png: anti-aliased render (spp = 16) at 2× upscaled resolution
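When scripting many runs, it can help to confirm that each one produced the three renders listed above. The sketch below is a hypothetical helper, not part of the repository, and it assumes the files sit directly inside the directory passed to --snapshot rather than in a subfolder.

```python
from pathlib import Path
from typing import List

# File names taken from the list of final artifacts.
EXPECTED_ARTIFACTS = (
    "fit_final.png",
    "fit_final_spp.png",
    "fit_final_spp_2x.png",
)


def missing_artifacts(snapshot_dir: str) -> List[str]:
    """Return the expected artifact names that are absent from `snapshot_dir`."""
    root = Path(snapshot_dir)
    # Assumption: artifacts are written at the top level of the snapshot directory.
    return [name for name in EXPECTED_ARTIFACTS if not (root / name).is_file()]


missing = missing_artifacts("outputs/run1")
if missing:
    print("Missing artifacts:", ", ".join(missing))
else:
    print("All final artifacts are present.")
```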

- geometry/: meshing, remeshing, and geometric utilities (TriWild interface, Canny edges)

- learning/: sampling strategies and data helpers

- neural/: neural field models and utilities

- pipeline/: pipeline stages used by the launcher

- launching/run_pipeline.py: top-level entry point

- inputs/: sample data and JSON configs (e.g., artistic, diffusion_curves, rendering)

- outputs/: example outputs and snapshots

Sample inputs and configs are provided under inputs/. You can point the launcher to your own image (PNG) and optional SVG. For diffusion-generated depth map cleanup, pass --depth and a grayscale PNG. Artistic inputs are by Oscar Chávez (CC BY-NC-SA 2.0): inputs/artistic/009.jpg, inputs/artistic/014.jpg, inputs/artistic/019.jpg. Other inputs are created by us and can be used under CC0.

- If torch-scatter fails to install, use the PyG wheel index matching your exact Torch/CUDA versions.

- If TriWild is not found, set TRIWILD_PATH to its binary or add it to PATH.

- CUDA mismatches: ensure your driver/runtime align with pytorch-cuda=11.8 in the environment.
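A quick pre-flight check along the lines of the items above could look like the following sketch. It is not the repository's own lookup logic: `find_triwild` and `module_available` are hypothetical helpers, the binary name "TriWild" is an assumption (your build may name it differently), and the order "TRIWILD_PATH first, then PATH" is a guess at reasonable behavior.

```python
import importlib.util
import os
import shutil
from typing import Mapping, Optional


def find_triwild(
    env: Mapping[str, str] = os.environ,
    binary_name: str = "TriWild",  # assumption: actual binary name may differ
) -> Optional[str]:
    """Return a path to the TriWild binary, or None if it cannot be located."""
    explicit = env.get("TRIWILD_PATH")
    if explicit and os.path.isfile(explicit) and os.access(explicit, os.X_OK):
        return explicit
    # Fall back to searching the directories listed in PATH.
    return shutil.which(binary_name, path=env.get("PATH", ""))


def module_available(name: str) -> bool:
    """Report whether a top-level module can be imported, without importing it."""
    return importlib.util.find_spec(name) is not None


print("TriWild:", find_triwild() or "not found (set TRIWILD_PATH or extend PATH)")
for module in ("torch", "torch_scatter"):
    status = "installed" if module_available(module) else "missing"
    print(f"{module}: {status}")
```

If torch is installed, `torch.version.cuda` and `torch.cuda.is_available()` give a further hint about CUDA mismatches.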

This project is licensed under the Apache License, Version 2.0 — see LICENSE for details.

Notices: see NOTICE for attributions and third-party notices.

@article{discontinuities2d,
  title={2D Neural Fields with Learned Discontinuities},
  author={Liu, Chenxi and Wang, Siqi and Fisher, Matthew and Aneja, Deepali and Jacobson, Alec},
  journal={Computer Graphics Forum},
  lccn={2004233670},
  issn={0167-7055},
  volume={44},
  year={2025},
  month=may,
  publisher={North Holland},
  organization={Wiley Online Library}
}