Abstract

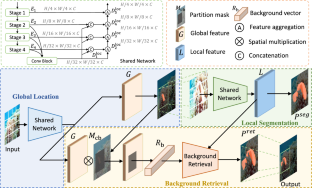

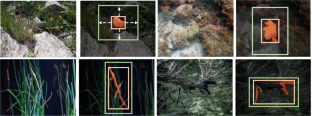

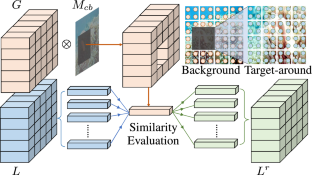

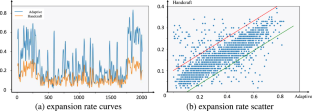

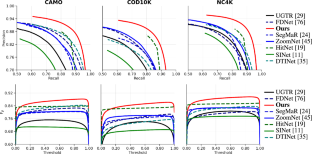

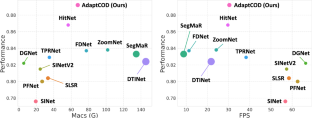

Recent works confirm the importance of local details for identifying camouflaged objects. However, how background cues can be used to identify the details around target objects has not been studied in depth. In this paper, we address this question and present AdaptCOD, a novel learning framework for camouflaged object detection (COD). Specifically, our method decouples the detection process into three parts: localization, segmentation, and retrieval. We design a context-adaptive partition strategy that dynamically selects a suitable context region for local segmentation, and a background retrieval module that further refines the boundaries of camouflaged objects. Despite its simplicity, our method enables even a simple COD model to achieve strong performance. Extensive experiments show that AdaptCOD outperforms all existing state-of-the-art methods on three widely used camouflaged object detection benchmarks. Code is publicly available at https://github.com/HVision-NKU/AdaptCOD.
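The localize-then-segment-locally decomposition described above can be illustrated with a small sketch. It is not the AdaptCOD implementation: the abstract does not say how the context region is chosen, so the rule used here (a margin proportional to the box size on each side, with a minimum floor) and the names `locate`, `context_box`, and `crop` are hypothetical. A coarse score map is thresholded to a tight bounding box, the box is enlarged so that the crop includes surrounding background, and the enlarged region is cut out for a local segmentation stage.

```python
from typing import List, Optional, Tuple

# (top, left, bottom, right); bottom and right are exclusive indices.
Box = Tuple[int, int, int, int]


def locate(score: List[List[float]], thresh: float = 0.5) -> Optional[Box]:
    """Localization step: tight box around pixels whose coarse score exceeds thresh.

    Assumes a non-empty, rectangular score map. Returns None if nothing is found.
    """
    rows = [i for i, row in enumerate(score) if any(v > thresh for v in row)]
    if not rows:
        return None
    cols = [j for j in range(len(score[0])) if any(row[j] > thresh for row in score)]
    return rows[0], cols[0], rows[-1] + 1, cols[-1] + 1


def context_box(box: Box, height: int, width: int,
                ratio: float = 0.25, min_margin: int = 2) -> Box:
    """Hypothetical adaptive context rule: pad each side by ratio * box size.

    Larger objects receive proportionally more background context; small objects
    still get at least min_margin pixels. The result is clipped to the image.
    """
    top, left, bottom, right = box
    margin_h = max(min_margin, int(ratio * (bottom - top)))
    margin_w = max(min_margin, int(ratio * (right - left)))
    return (max(0, top - margin_h), max(0, left - margin_w),
            min(height, bottom + margin_h), min(width, right + margin_w))


def crop(image: List[List[float]], box: Box) -> List[List[float]]:
    """Cut out the context region that a local segmentation stage would process."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]
```

For a 10×10 score map whose object occupies rows 4–5 and columns 3–6, `locate` returns `(4, 3, 6, 7)`, and `context_box(..., 10, 10)` expands it to `(2, 1, 8, 9)`, giving a 6×8 crop that contains the object plus a band of background. Scaling the margin with the box size is only one plausible way to make the context region "adaptive"; a learned model could instead predict the margin or select among candidate partitions.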


References

Bernal, J., Sánchez, F. J., Fernández-Esparrach, G., Gil, D., Rodríguez, C., & Vilariño, F. (2015). Wm-dova maps for accurate polyp highlighting in colonoscopy: Validation vs. saliency maps from physicians. CMIG, 43, 99–111.

Bernal, J., Sánchez, J., & Vilarino, F. (2012). Towards automatic polyp detection with a polyp appearance model. Pattern Recognition, 45(9), 3166–3182.

Chen, G., Liu, S. J., Sun, Y. J., Ji, G. P., Wu, Y. F., & Zhou, T. (2022). Camouflaged object detection via context-aware cross-level fusion. IEEE Transactions on Circuits and Systems for Video Technology, 32(10), 6981–6993.

Cheng, X., Xiong, H., Fan, D.P., Zhong, Y., Harandi, M., Drummond, T., & Ge, Z. (2022). Implicit motion handling for video camouflaged object detection. In: CVPR

Chu, X., Tian, Z., Wang, Y., Zhang, B., Ren, H., Wei, X., Xia, H., Shen, C. (2021). Twins: Revisiting the design of spatial attention in vision transformers. In: NeurIPS

De Boer, P. T., Kroese, D. P., Mannor, S., & Rubinstein, R. Y. (2005). A tutorial on the cross-entropy method. Annals of operations research, 134(1), 19–67.

Devlin, J., Chang, M.W., Lee, K., & Toutanova, K. (2018). Bert: Pre-training of deep bidirectional transformers for language understanding. In: NAACL

Fan, D.P., Cheng, M.M., Liu, Y., Li, T., & Borji, A. (2017). Structure-measure: A new way to evaluate foreground maps. In: IEEE ICCV

Fan, D.P., Gong, C., Cao, Y., Ren, B., Cheng, M.M., & Borji, A. (2018). Enhanced-alignment measure for binary foreground map evaluation. In: IJCAI

Fan, D. P., Ji, G. P., Cheng, M. M., & Shao, L. (2022). Concealed object detection. IEEE TPAMI, 44(10), 6024–6042.

Fan, D.P., Ji, G.P., Sun, G., Cheng, M.M., Shen, J., & Shao, L. (2020). Camouflaged object detection. In: IEEE CVPR

Fan, D.P., Ji, G.P., Zhou, T., Chen, G., Fu, H., Shen, J., & Shao, L. (2020). Pranet: Parallel reverse attention network for polyp segmentation. In: MICCAI

Fan, D. P., Zhang, J., Xu, G., Cheng, M. M., & Shao, L. (2023). Salient objects in clutter. IEEE TPAMI, 45(2), 2344–2366.

Pérez-de la Fuente, R., Delclòs, X., Peñalver, E., Speranza, M., Wierzchos, J., Ascaso, C., & Engel, M. S. (2012). Early evolution and ecology of camouflage in insects. Proceedings of the National Academy of Sciences, 109(52), 21414–21419.

Han, K., Xiao, A., Wu, E., Guo, J., Xu, C., & Wang, Y. (2021) Transformer in transformer. In: NeurIPS

He, C., Li, K., Zhang, Y., Tang, L., Zhang, Y., Guo, Z., & Li, X. (2023) Camouflaged object detection with feature decomposition and edge reconstruction. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 22046–22055

He, R., Dong, Q., Lin, J., & Lau, R.W. (2023). Weakly-supervised camouflaged object detection with scribble annotations. In: AAAI

Hou, Q., Cheng, M. M., Hu, X., Borji, A., Tu, Z., & Torr, P. (2019). Deeply supervised salient object detection with short connections. IEEE TPAMI, 41(4), 815–828.

Hu, X., Wang, S., Qin, X., Dai, H., Ren, W., Luo, D., Tai, Y., & Shao, L. (2023) High-resolution iterative feedback network for camouflaged object detection. In: AAAI

Huang, Z., Dai, H., Xiang, T.Z., Wang, S., Chen, H.X., Qin, J., & Xiong, H. (2023) Feature shrinkage pyramid for camouflaged object detection with transformers. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 5557–5566

Jha, D., Smedsrud, P.H., Riegler, M.A., Halvorsen, P., Lange, T.d., Johansen, D., & Johansen, H.D. (2020) Kvasir-seg: A segmented polyp dataset. In: MMM

Ji, G. P., Fan, D. P., Chou, Y. C., Dai, D., Liniger, A., & Van Gool, L. (2022). Deep gradient learning for efficient camouflaged object detection. MIR. https://doi.org/10.1007/s11633-022-1365-9

Ji, G.P., Zhu, L., Zhuge, M., & Fu, K. (2022) Fast camouflaged object detection via edge-based reversible re-calibration network. PR 123, 108414

Jia, Q., Yao, S., Liu, Y., Fan, X., Liu, R., & Luo, Z. (2022). Segment, magnify and reiterate: Detecting camouflaged objects the hard way. In: IEEE CVPR

Lamdouar, H., Yang, C., Xie, W., & Zisserman, A. (2020) Betrayed by motion: Camouflaged object discovery via motion segmentation. In: ACCV

Le, T. N., Cao, Y., Nguyen, T. C., Le, M. Q., Nguyen, K. D., Do, T. T., Tran, M. T., & Nguyen, T. V. (2022). Camouflaged instance segmentation in-the-wild: Dataset, method, and benchmark suite. IEEE TIP, 31, 287–300.

Le, T. N., Nguyen, T. V., Nie, Z., Tran, M. T., & Sugimoto, A. (2019). Anabranch network for camouflaged object segmentation. CVIU, 184, 45–56.

Le, X., Mei, J., Zhang, H., Zhou, B., & Xi, J. (2020). A learning-based approach for surface defect detection using small image datasets. Neurocomputing

Li, A., Zhang, J., Lv, Y., Liu, B., Zhang, T., & Dai, Y. (2021) Uncertainty-aware joint salient object and camouflaged object detection. In: IEEE CVPR

Li, C., & Jiao, G. (2022). Einet: camouflaged object detection with pyramid vision transformer. JEI, 31(5), 053002.

Li, Z.Y., Gao, S., & Cheng, M.M. (2022) Exploring feature self-relation for self-supervised transformer. arXiv preprint arXiv:2206.05184

Lin, J., Tan, X., Xu, K., Ma, L., & Lau, R. W. (2023). Frequency-aware camouflaged object detection. ACM TMCCA, 19(2), 1–16.

Lin, T. Y., Dollár, P., Girshick, R., He, K., Hariharan, B., & Belongie, S. (2017) Feature pyramid networks for object detection. In: IEEE CVPR

Liu, Y., Cheng, M. M., Fan, D. P., Zhang, L., Bian, J. W., & Tao, D. (2022). Semantic edge detection with diverse deep supervision. International Journal of Computer Vision, 130(1), 179–198.

Liu, Z., Zhang, Z., & Wu, W. (2022) Boosting camouflaged object detection with dual-task interactive transformer. ICPR

Loshchilov, I., & Hutter, F. (2017) Sgdr: Stochastic gradient descent with warm restarts. ICLR

Lv, Y., Zhang, J., Dai, Y., Li, A., Barnes, N., & Fan, D. P. (2023) Towards deeper understanding of camouflaged object detection. IEEE TCSVT

Lv, Y., Zhang, J., Dai, Y., Li, A., Liu, B., Barnes, N., & Fan, D. P. (2021) Simultaneously localize, segment and rank the camouflaged objects. In: IEEE CVPR

Margolin, R., Zelnik-Manor, L., & Tal, A. (2014) How to evaluate foreground maps? In: IEEE CVPR

Máttyus, G., Luo, W., & Urtasun, R. (2017) Deeproadmapper: Extracting road topology from aerial images. In: IEEE ICCV

Mei, H., Ji, G.P., Wei, Z., Yang, X., Wei, X., & Fan, D. P. (2021) Camouflaged object segmentation with distraction mining. In: IEEE CVPR

Mei, H., Xu, K., Zhou, Y., Wang, Y., Piao, H., Wei, X., & Yang, X. (2023). Camouflaged object segmentation with omni perception. International Journal of Computer Vision, 131, 1–16.

Mei, H., Yang, X., Zhou, Y., Ji, G.P., Wei, X., & Fan, D.P. (2023) Distraction-aware camouflaged object segmentation. SCIS

Mondal, A., Ghosh, S., & Ghosh, A. (2017). Partially camouflaged object tracking using modified probabilistic neural network and fuzzy energy based active contour. International Journal of Computer Vision, 122, 116–148.

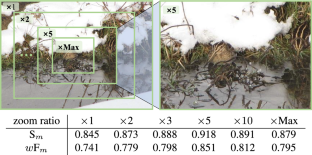

Pang, Y., Zhao, X., Xiang, T.Z., Zhang, L., & Lu, H. (2022) Zoom in and out: A mixed-scale triplet network for camouflaged object detection. In: IEEE CVPR

Paszke, A., Gross, S., Massa, F., Lerer, A., Bradbury, J., Chanan, G., Killeen, T., Lin, Z., Gimelshein, N., & Antiga, L., et al. (2019) Pytorch: An imperative style, high-performance deep learning library. In: NeurIPS

Perazzi, F., Krähenbühl, P., Pritch, Y., & Hornung, A. (2012) Saliency filters: Contrast based filtering for salient region detection. In: IEEE CVPR

Rahman, M. M., & Marculescu, R. (2023) Medical image segmentation via cascaded attention decoding. In: IEEE WACV

Ren, J., Hu, X., Zhu, L., Xu, X., Xu, Y., Wang, W., Deng, Z., & Heng, P. A. (2023). Deep texture-aware features for camouflaged object detection. IEEE TCSVT, 33(3), 1157–1167.

Silva, J., Histace, A., Romain, O., Dray, X., & Granado, B. (2014). Toward embedded detection of polyps in wce images for early diagnosis of colorectal cancer. International Journal of Computer Assisted Radiology and Surgery, 9, 283–293.

Srinivas, A., Lin, T.Y., Parmar, N., Shlens, J., Abbeel, P., & Vaswani, A. (2021) Bottleneck transformers for visual recognition. In: IEEE CVPR

Sun, Y., Chen, G., Zhou, T., Zhang, Y., & Liu, N. (2021) Context-aware cross-level fusion network for camouflaged object detection. In: IJCAI

Sun, Y., Wang, S., Chen, C., & Xiang, T. Z. (2022) Boundary-guided camouflaged object detection. In: IJCAI

Tabernik, D., Šela, S., Skvarč, J., & Skočaj, D. (2020). Segmentation-based deep-learning approach for surface-defect detection. Journal of Intelligent Manufacturing, 31(3), 759–776.

Tajbakhsh, N., Gurudu, S. R., & Liang, J. (2015). Automated polyp detection in colonoscopy videos using shape and context information. IEEE TMI, 35(2), 630–644.

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., & Polosukhin, I. (2017) Attention is all you need. In: NeurIPS

Wang, H., Wang, X., Sun, F., & Song, Y. (2021) Camouflaged object segmentation with transformer. In: ICCSIP

Wang, W., Xie, E., Li, X., Fan, D. P., Song, K., Liang, D., Lu, T., Luo, P., & Shao, L. (2022). Pvt v2: Improved baselines with pyramid vision transformer. CVMJ, 8(3), 415–424.

Wu, F., Li, X., Zhang, Y., & Hu, K. (2022) Transcoop: Cooperation of transformers and cnns for camouflaged object segmentation. In: IEEE ICME

Wu, H., Xiao, B., Codella, N., Liu, M., Dai, X., Yuan, L., & Zhang, L. (2021) Cvt: Introducing convolutions to vision transformers. In: IEEE ICCV

Wu, M., Zhang, X., Sun, X., Zhou, Y., Chen, C., Gu, J., Sun, X., & Ji, R. (2022) Difnet: Boosting visual information flow for image captioning. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 18020–18029

Xie, S., & Tu, Z. (2015) Holistically-nested edge detection. In: IEEE ICCV

Xu, B., Liang, H., Liang, R., & Chen, P. (2021) Locate globally, segment locally: A progressive architecture with knowledge review network for salient object detection. In: AAAI

Xu, W., Xu, Y., Chang, T., & Tu, Z. (2021) Co-scale conv-attentional image transformers. In: IEEE ICCV

Yang, F., Zhai, Q., Li, X., Huang, R., Luo, A., Cheng, H., & Fan, D. P. (2021) Uncertainty-guided transformer reasoning for camouflaged object detection. In: IEEE ICCV

Yang, J., Li, C., Zhang, P., Dai, X., Xiao, B., Yuan, L., & Gao, J. (2021) Focal attention for long-range interactions in vision transformers. In: NeurIPS

Yin, B., Zhang, X., Hou, Q., Sun, B. Y., Fan, D. P., & Van Gool, L. (2022) Camoformer: Masked separable attention for camouflaged object detection. arXiv preprint arXiv:2212.06570

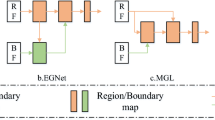

Zhai, Q., Li, X., Yang, F., Chen, C., Cheng, H., & Fan, D. P. (2021) Mutual graph learning for camouflaged object detection. In: CVPR

Zhai, Q., Li, X., Yang, F., Jiao, Z., Luo, P., Cheng, H., & Liu, Z. (2023). Mgl: Mutual graph learning for camouflaged object detection. IEEE TIP, 32, 1897–1910.

Zhai, W., Cao, Y., Xie, H., & Zha, Z. J. (2022) Deep texton-coherence network for camouflaged object detection. IEEE TMM

Zhang, M., Xu, S., Piao, Y., Shi, D., Lin, S., & Lu, H. (2022) Preynet: Preying on camouflaged objects. In: ACM MM

Zhang, Q., Ge, Y., Zhang, C., & Bi, H. (2022) Tprnet: camouflaged object detection via transformer-induced progressive refinement network. TVCJ pp. 1–15

Zhang, X., Sun, X., Luo, Y., Ji, J., Zhou, Y., Wu, Y., Huang, F., & Ji, R. (2021) Rstnet: Captioning with adaptive attention on visual and non-visual words. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 15465–15474

Zheng, D., Zheng, X., Yang, L.T., Gao, Y., Zhu, C., & Ruan, Y. (2023) Mffn: Multi-view feature fusion network for camouflaged object detection. In: WACV

Zheng, H., Fu, J., Zha, Z.J., & Luo, J. (2019) Looking for the devil in the details: Learning trilinear attention sampling network for fine-grained image recognition. In: IEEE CVPR

Zhong, Y., Li, B., Tang, L., Kuang, S., Wu, S., & Ding, S. (2022) Detecting camouflaged object in frequency domain. In: IEEE CVPR

Zhou, Y., Li, Z., Guo, C. L., Bai, S., Cheng, M. M., & Hou, Q. (2023) Srformer: Permuted self-attention for single image super-resolution. In: ICCV

Zhu, H., Li, P., Xie, H., Yan, X., Liang, D., Chen, D., Wei, M., & Qin, J. (2022) I can find you! boundary-guided separated attention network for camouflaged object detection. In: AAAI


Additional information

Communicated by Diane Larlus.


Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Yin, B., Zhang, X., Liu, L. et al. Camouflaged Object Detection with Adaptive Partition and Background Retrieval. Int J Comput Vis 133, 4877–4893 (2025). https://doi.org/10.1007/s11263-025-02406-6


DOI: https://doi.org/10.1007/s11263-025-02406-6