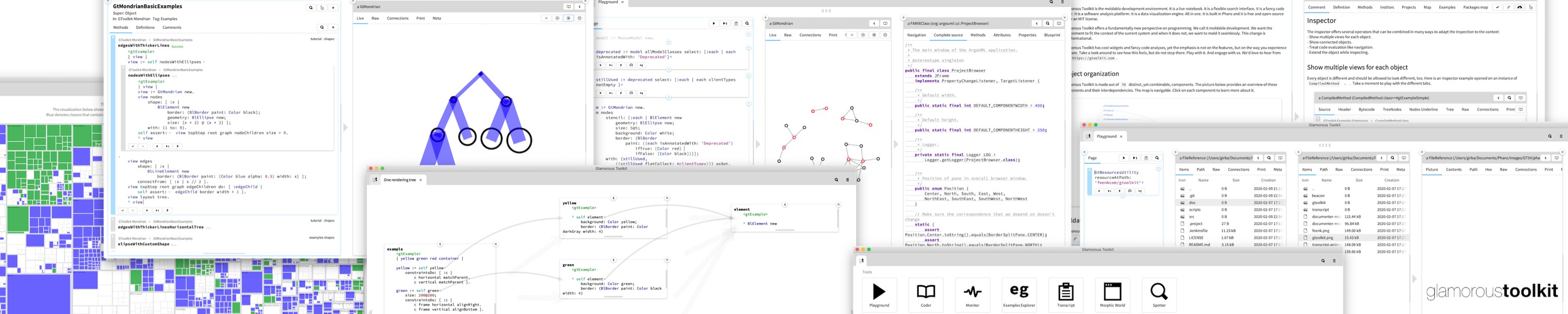

The Rewilding Software Engineering book that Tudor Girba and Simon Wardley are writing is filled with experiments and less obvious lessons like the one below. These lessons are drawn from a long exploration of how to help humans make sense of systems -- an exploration that was validated by creating business value for our customers. The lessons can also be explored in executable form through Glamorous Toolkit, our free and open-source moldable development environment.

In 1968, people talked about a software crisis. That crisis has only grown larger. Today, we are creating software superlinearly while being unable to recycle old systems. We are behaving as unsustainably as the plastic industry.

We got good at writing code. We can read pieces of it, but we cannot quite make sense of larger systems. This is remarkably similar to functional illiteracy. In an illiterate world, myths abound and magic is praised. We can remedy that by educating our ability to read in this new world we are engineering.

LLMs are exciting and useful tools. But they are magic, and they are not the answer to how we read. They can be accelerants of good reading, just as they can be accelerants of myths. It's up to us.

In chapter 6 of Rewilding Software Engineering, Simon Wardley and I write about an experiment in which we asked an LLM for something simple: a graph of dependencies. When we asked it to produce the graph directly, it confidently produced a remarkable set of components and dependencies. But when we compared the result with the actual system, 5-30% of the entities had been missed (shown in red in the attached visualization). Used like that, LLMs produce only opinions, not engineering answers. Worse, those opinions can only be evaluated by someone who already knows the answer, which leads to an apparent catch-22: people ask the LLM precisely because they do not know the answer.

Luckily, there is a way out of this. We ran another experiment in which we kept the same prompt details but changed what we asked for: instead of asking for the answer, we asked the LLM to produce code that we could execute to find the dependencies. It worked remarkably well.

Most questions about existing systems require deterministic answers, and those answers should be provided by deterministic tools. Whether these tools are built manually or through an LLM is irrelevant. Our aim should be to eliminate the need for magic to get answers about our world. We should be able to explain what the tool does to ensure we know how accurate and representative it is. We should aim to explain both how our systems run our world and how we explain those systems. Once we do that, we will have become literate, which will pave the way for enlightenment and for an exit out of an ever larger crisis.
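
To make the idea of a deterministic, explainable tool concrete, here is a minimal sketch of the kind of code one might ask an LLM to write instead of asking it for the dependency graph itself. It is not the code from the experiment: the post does not say which system or language was analyzed, so this assumes a Python codebase, and the function names `module_dependencies`, `dependency_graph`, and `missed_entities` are hypothetical. It relies only on Python's standard `ast` and `pathlib` modules.

```python
import ast
from pathlib import Path


def module_dependencies(source: str) -> set[str]:
    """Return the top-level modules imported by one Python source text.

    Assumption for this sketch: a "dependency" is an absolute import.
    Relative imports (from . import x) are skipped.
    """
    deps: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            # import a.b.c  ->  "a"
            deps.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # from a.b import c  ->  "a"
            deps.add(node.module.split(".")[0])
    return deps


def dependency_graph(root: Path) -> dict[str, set[str]]:
    """Map each .py file under root (by relative path) to the modules it imports."""
    return {
        str(path.relative_to(root)): module_dependencies(
            path.read_text(encoding="utf-8")
        )
        for path in sorted(root.rglob("*.py"))
    }


def missed_entities(actual: set[str], claimed: set[str]) -> set[str]:
    """Entities found in the real system but absent from a claimed answer."""
    return actual - claimed
```

Because every step is visible, we can say exactly what this tool counts as a dependency and what it leaves out, which is the kind of explanation a directly generated answer cannot give. Running `missed_entities` on the tool's result and on an LLM's direct answer is one way to measure the gap described above.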