Statistics & Optimization for Trustworthy AI

Our Research

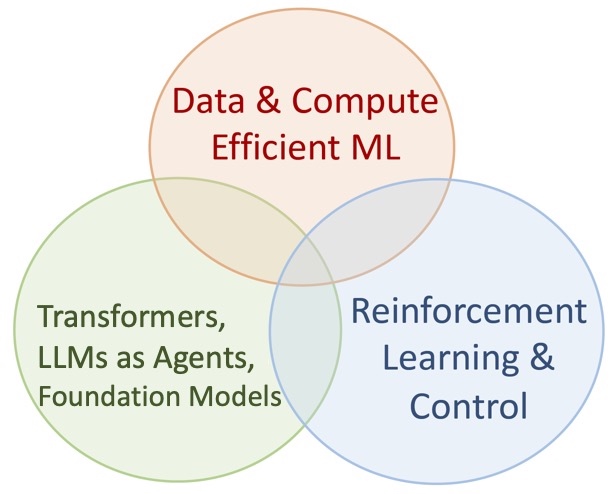

We develop principled and empirically impactful AI/ML methods:

- mathematical foundations for transformers, sequence modeling, and capabilities of language models

- core optimization and statistical learning theory

- language model reasoning and reinforcement learning

- trustworthy language and time-series (foundation) models

News:

- Necmiye Ozay and I have received an ARO grant on “Foundations of Sequence Models for Control”.

- Wei Hu and I have received a grant from Coefficient Giving on “Understanding Phase Transitions in Transformer Training Dynamics”.

- Xuechen has successfully defended her thesis on “Efficient Reasoning in Language Models”!

- Serving as a Senior Area Chair for NeurIPS 2026 @ Sydney

- Undergrad/Master projects (recruiting): Reach out to learn more about:

  - Evolutionary optimization with LLMs such as AlphaEvolve

  - Forecasting systems with applications to macroeconomics

- 4 papers have been accepted to AISTATS’26 @ Tangier and ICLR’26 @ Rio:

  - Continuous Chain of Thought Enables Parallel Exploration and Reasoning, ICLR

  - SmartChunk Retrieval: Query-Aware Chunk Compression for Efficient Document RAG, ICLR

  - Filter, Augment, Forecast: Online Data Selection for Robust Time Series Forecasting, AISTATS

  - Retrieval Augmented Time Series Forecasting, AISTATS

- I will be serving as a Senior Area Chair for ICML 2026 @ Seoul.

- 4 papers appeared at NeurIPS 2025:

- Congrats to Yingcong and Xiangyu on receiving their PhDs!

- Recent preprints (both appeared at ICML 2025 workshops):

- Recent papers:

  - Gating is Weighting, COLM 2025

  - Everything Everywhere All at Once, ICML 2025 spotlight

  - Test-Time Training Provably Improves Transformers as In-context Learners, ICML 2025

  - High-dimensional Analysis of Knowledge Distillation, ICLR 2025 spotlight

  - Provable Benefits of Task-Specific Prompts for In-context Learning, AISTATS 2025

  - AdMiT: Adaptive Multi-Source Tuning in Dynamic Environments, CVPR 2025

- New award from Amazon Research on Foundation Model Development

- 2 papers will appear at AAAI 2025

- We are presenting 4 papers at NeurIPS 2024

- Congrats to Mingchen on his graduation and joining Meta as a Research Scientist!

- Congrats to our 2023 interns who will pursue their PhD studies at UC Berkeley, Harvard, and UIUC!

- Two papers at ICML 2024: Self-Attention <=> Markov Models and Can Mamba Learn How to Learn?

- New course on Foundations of Large Language Models: syllabus (including Piazza and logistics)

- New awards from NSF and ONR: We kickstarted two exciting projects to advance the theoretical and algorithmic foundations of LLMs, transformers, and their compositional learning capabilities.

- Two papers at AISTATS 2024:

  - “Mechanics of Next Token Prediction with Self-Attention”, Y. Li, Y. Huang, M.E. Ildiz, A.S. Rawat, S.O.

  - “Inverse Scaling and Emergence in Multitask Representations”, M.E. Ildiz, Z. Zhao, S.O.

- Two papers at AAAI 2024 and one paper at WACV 2024

- Invited talks at USC, INFORMS, Yale, Google NYC, and Harvard on our works on transformer theory

- Two papers at NeurIPS 2023

- Grateful for the Adobe Data Science Research award!

- Our new works develop the optimization foundations of Transformers via a connection to SVMs

- Two papers at ICML 2023: Transformers as Algorithms and On the Role of Attention in Prompt-tuning

- Two papers at AAAI 2023: Provable Pathways and Long Horizon Bandits

We are grateful for our research sponsors
