Posted by David Gerard

https://pivot-to-ai.com/2026/02/12/saaspocalypse-investors-overspend-badly-on-software-companies-and-blame-ai/

https://pivot-to-ai.com/?p=7134

Today’s word is “SaaSpocalypse”. A pile of overvalued enterprise software companies’ stock price number went down, and they’re blaming AI.

The mini-bubble in software-as-a-service was always going to pop. The trigger was that stock traders were deluded into thinking your boss yelling at Claude Code could replace Salesforce. Yeah, really.

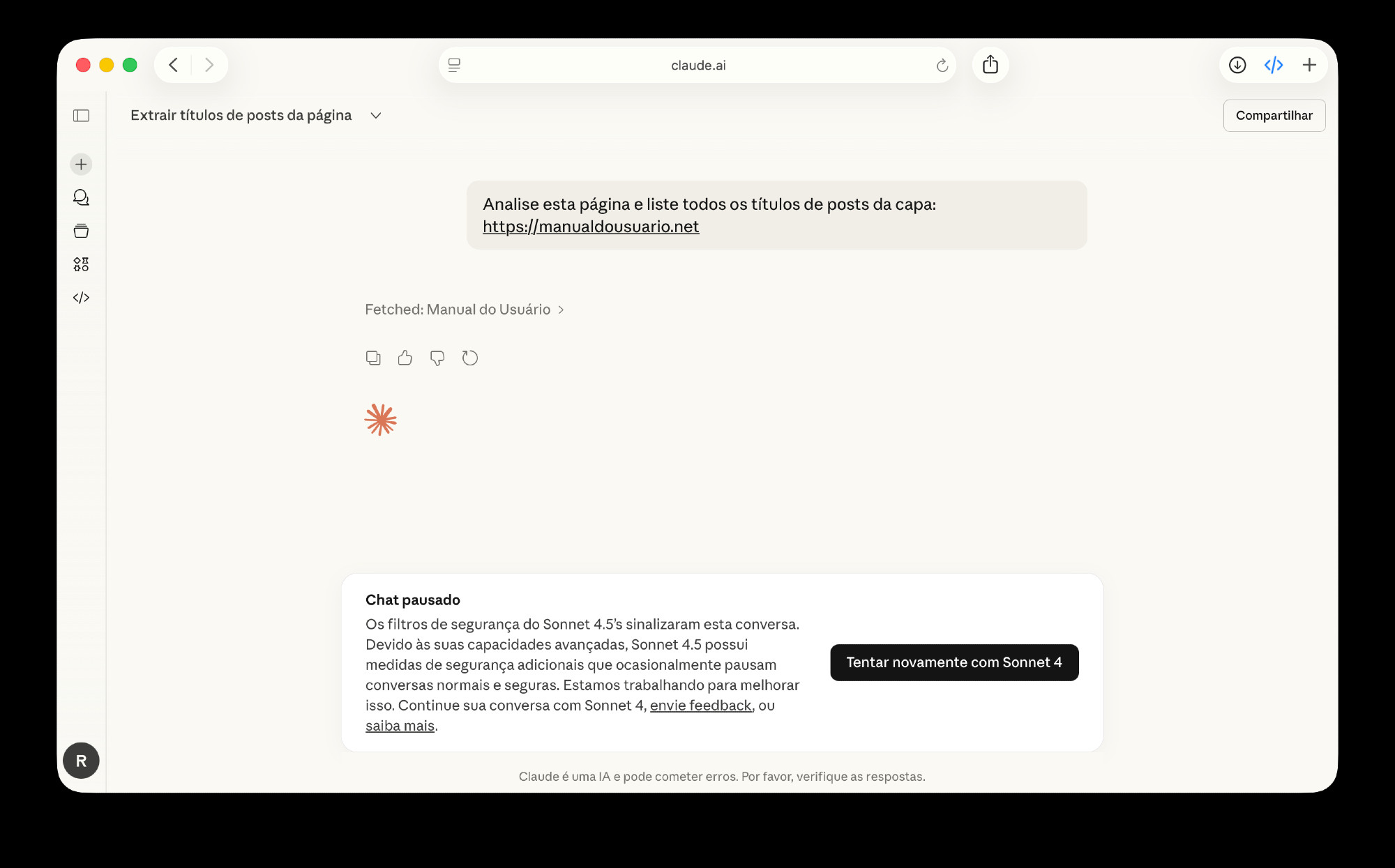

In January, Anthropic launched Claude Cowork. It’s an AI agent designed to be your workplace assistant! Anthropic called Cowork a “research preview,” which means even they didn’t think it worked yet. [Anthropic]

Then on 2 February, Anthropic released a pile of Cowork “skills” for legal offices. These claimed to do all sorts of legal jobs, like contract review. This is the AI stuff that doesn’t work already, and law firms are having to hire more lawyers to clean up after the bots. [Artificial Lawyer; GitHub]

But this single software release of a research preview was enough to panic investors in companies making software for lawyers. On 3 February, a whole bunch of legal software companies dropped 4% to 12%. The rout spread to non-legal SaaS companies. [Proactive Investors]

A lot of analysts saw the crash coming. They’ve considered the SaaS companies overvalued for a while. But AI pulled the trigger: [Bloomberg, archive]

“We call it the ‘SaaSpocalypse,’ an apocalypse for software-as-a-service stocks,” said Jeffrey Favuzza, who works on the equity trading desk at Jefferies. “Trading is very much ‘get me out’ style selling.”

Private equity especially got into SaaS big time. In economics, “rentiers” are considered a parasitical drain on a working economy. Because they are. But being the rent-seeking middleman also makes a ton of money!

So SaaS companies were highly regarded, and they got very overvalued. And now private equity is cutting its software exposure as fast as possible.

The traders seem to hold the notion that AI can just replace your enterprise software spend. An AI assistant at your desk, or Claude Code writing your business software for you!

Neither of these is even slightly possible. But tell the traders that. They’ve been hearing nothing but “AI, AI, AI is coming!” for the past three years:

“The draconian view is that software will be the next print media or department stores, in terms of their prospects,” said Favuzza at Jefferies.

There’s just one problem — for all the continuously blasting hype, AI agents don’t work. They literally don’t work. They can’t work. You can tell a chatbot agent what to do, and it’ll try to do it! And it’s a hallucinating chatbot, so it’ll mess up after a time.

The vendors want to sell you on the vision, and teach you to make excuses for the bot that can’t work. Next model, bro, it’ll be amazing. This is the future! Though it sure isn’t the present.

That doesn’t matter, though. Because Anthropic sold a big promise — that agents and coding bots could get you out from under the thumb of enterprise software. Which every customer of it hates. And that includes the traders and analysts.

Renting a company the machinery its business runs on pulls in an absolute bundle! And the vendors don’t even have to make the software any good. So they … just don’t. It’s buggy, it sucks, and the users hate it. And they don’t have a choice.

So there’s a lot of resentment. Anthropic’s selling into that market.

But the promise is not possible. You can’t vibe code enterprise software if you have any requirement for accuracy or compliance.

And I do mean vibe coding. This isn’t about experienced software developers using a chatbot as an autocomplete. This is telling managers anyone can vibe code an app. They’ll think it’s 95% done when the web page looks nearly right. Then they’ll hand it off to their remaining software developer to build the actual functionality.

But the resentment at the sewage-tier quality of enterprise software is vast. The customers want nothing more than to make these parasites go away.

Unfortunately, the robot is not in fact up to the job. And the bridge troll business model is odious, but it’s also a pretty solid cash flow. The software stocks are already recovering a bit. [NYT, archive]

The monthly fee model supports a lot of software products that would otherwise not get support. But mostly it’s the part of modern life where you get nickel and dimed all day every day, and in this case it’s for rotten software that doesn’t even work well.

Everyone wants to be the bridge troll and invest in the bridge troll. But making your customers hate you this much is not a stable situation.
