This is not a comprehensive review, nor an user guide. It's a step-by-step account of my initial impressions while trying the new MySQL XProtocol and the document store capabilities. In fact, I am barely scratching the surface here: more articles will come as time allows.

MySQL 5.7 has been GA for several months, as it was released in October 2015. Among the many features and improvements, I was surprised to see the MySQL team emphasizing the JSON data type. While it is an interesting feature per se, I failed to see the reason why so many articles and conference talks were focused around this single feature. Everything became clear when, with the release of MySQL 5.7.12, the MySQL team announced a new release model.

Overview

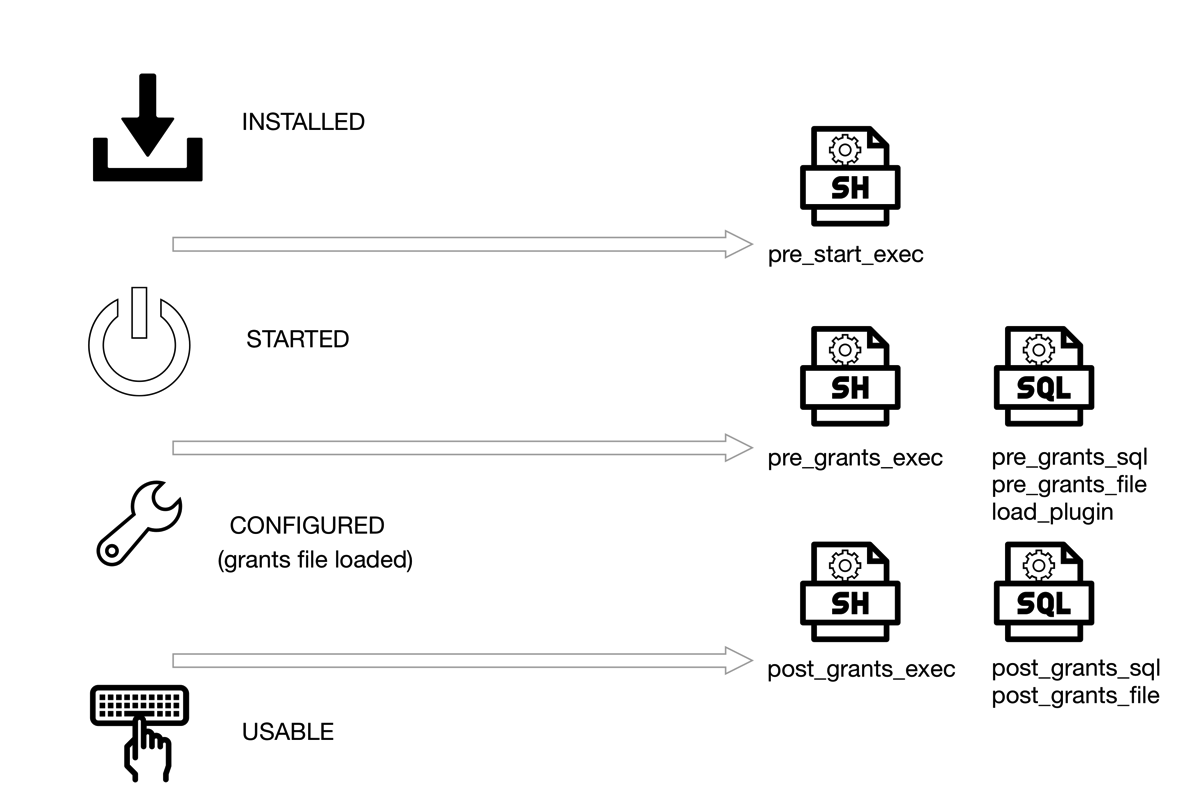

In MySQL 5.7.12, we get the usual MySQL server, which shouldn't have new features. However, in an attempt to combine the stability of the server with a more dynamic release cycle, the server ships with a new plugin, unimaginatively named X-Plugin, which supports an alternative communication protocol, named X-Protocol.

In short, the X-Protocol extends and replaces the traditional client/server protocol, by allowing asynchronous communication to the server, using different API calls, which are available, as of today, in Javascript, Python, C#, and Java, with more languages to come.

The reason for this decision is easy to see. Many developers struggle with relational tables and SQL, while they understand structures made of arrays and associative arrays (maps.) This is also one of the reasons for the recent popularity of NoSQL databases, where schemas and tables are replaced by collections of documents or similar schema-less structures. With this new release, MySQL wants to offer the best of two worlds, by allowing developers to use the database with the tools they feel most comfortable with.

To use the new plugin, you need two components:

- The plugin itself, which ships with the server package, but is not enabled by default;

- The MySQL shell, a new command line tool that you have to download and install separately and will allow you to use Javascript or Python with the database.

As a QA professional, I am a bit concerned about this mix of GA and alpha features (The MySQL shell is defined as alpha software. and the shell itself says development preview in its help). Theoretically, the two worlds should be separated. If you don't install the plugin, the server should work as usual. But practice and experience tell me that there are dangers waiting for a chance to destroy our data. If you want a single piece of advice to summarize this article, DON'T USE the new MySQL shell with a production server. That said, let's start a quick tour.

Installation

You need to install the shell, which comes in a package that is different from the rest of MySQL products. The manual shows how to install it on OSX or Linux. The only mention that this product could be dangerous to use is a note reminding the user to enable the MySQL Preview Packages when installing from a Linux repository. The procedure, on any operating system, will install library and executables globally. Unlike the server package, it is not possible to install it in a user-defined directory, like you install the server with MySQL Sandbox. In this context, the standard Oracle disclaimer may have a meaning that goes beyond a regular CYA.

Next, you need to enable the plugin. You can do it in three ways:

(1)

$ mysqlsh --classic -u youruser -p --dba enableXProtocol

mysqlx: [Warning] Using a password on the command line interface can be insecure.

Creating a Classic Session to youruser@localhost:3306

Enter password:

No default schema selected.

enableXProtocol: Installing plugin mysqlx...

enableXProtocol: done

(2)

Start the server with --plugin-load=mysqlx=mysqlx.so. This will enable the plugin, although it does not seem to work the way it should.

(3)

Enable the plugin with a SQL command.

mysql> install plugin mysqlx soname 'mysqlx.so';

I prefer method #3 because is the only one that does not have side effects or misunderstanding. The issue that hit me when I tried method #1 for the first time is that calling mysqlsh --classic uses the client/server protocol on port 3306 (or the port that you defined for the database) while subsequent calls will use the X-Protocol on port 33060.

Alternatives. Using Docker

If what I said previously has made you cautious and you have decided not to use the shell in your main computer (as you should), there are alternative ways. If you have a data center at your disposal, just fire a virtual machine and play with it. However, be aware that the MySQL shell does not install in Ubuntu 15.04 and 16.04.

A lightweight method to try on the new shell without endangering your production server is to use a Docker image for MySQL, or a combination of MySQL Sandbox and Docker.

In Docker, the MySQL shell does not ship together with the server. It requires a separate image. A quick guide is available in a recent article. I don't like the current approach: having two images is a waste of space. It would be acceptable if the images were based on a slim Linux distribution, such as Alpine. Since they run on OracleLinux, instead, you need to download two beefy images to start testing. With a fast internet connection this should not be a problem, but if you live in a place where 3 MBPS is the norm or if you are traveling, this could become an annoyance. Once you have pulled the images, you can use them at will, even without internet connection.

The above mentioned quick guide suggests using docker run --link to connect the two containers. I recommend a different approach, as the link option is now considered legacy.

$ docker network create mynet

edcc36be21e54cdb91fdc91f2c320efabf62d36ab9d31b0142e901da7e3c84e9

$ docker network ls

NETWORK ID NAME DRIVER

a64b55fb7c92 bridge bridge

0b8a52002dfd none null

cc775ec7edab host host

edcc36be21e5 mynet bridge

$ docker run --name mybox -e MYSQL_ROOT_PASSWORD=secret -d --net mynet mysql/mysql-server:5.7.12 \

--plugin-load=mysqlx=mysqlx.so

ecbfc322bb17ec0b1511ea7321c2b10f9c7b5091baee4240ab51b7bf77c1e424

$ docker run -it --net mynet mysql/shell -u root -h mybox -p

Creating an X Session to root@mybox:33060

Enter password:

No default schema selected.

Welcome to MySQL Shell 1.0.3 Development Preview

Copyright (c) 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type '\help', '\h' or '\?' for help.

Currently in JavaScript mode. Use \sql to switch to SQL mode and execute queries.

mysql-js>

The first command creates a network (called mynet).

The second command creates the server container, which is launched using the network mynet and with the plugin-load option (which seems to work well with the docker image). When you use a docker network, the container name is recognized by the network as an host name, and can be called by other members of the network. This is much cleaner than using --link.

The third command runs the MySQL shell, using the same network. This allows us to use the container name (mybox) without any other options.

Running the MySQL Javascript shell

My favorite setup for this test is a mix of MySQL Sandbox for the server and Docker for the shell. This way I can use the alpha shell without polluting my Linux host and use a feature rich MySQL Sandbox to control the server.

Here is what I do:

$ make_sandbox 5.7.12 -- --no_show -c general_log=1 -c general_log_file=general.log

I start a sandbox with MySQL 5.7.12 (tarball expanded and renamed into /opt/mysql/5.7.12), with the general log enabled. We need this to peek under the hood when we use the document store.

Next, we load the sample world_x database from the MySQL documentation page.

$ ~/sandboxes/msb_5_7_12/use -e 'source world_x.sql'

Finally, we enable the plugin.

$ ~/sandboxes/msb_5_7_12/use -e "install plugin mysqlx soname 'mysqlx.so'"

Now we can connect the shell:

$ docker run -it --net host mysql/shell -u msandbox -pmsandbox world_x

mysqlx: [Warning] Using a password on the command line interface can be insecure.

Creating an X Session to msandbox@localhost:33060/world_x

Default schema `world_x` accessible through db.

Welcome to MySQL Shell 1.0.3 Development Preview

Copyright (c) 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type '\help', '\h' or '\?' for help.

Currently in JavaScript mode. Use \sql to switch to SQL mode and execute queries.

mysql-js>

What have we done? We use the network named 'host', which is a standard Docker protocol that lets a container use the host environment. We don't need to specify a port, since the shell assumes 33060 (enabled by the X-Plugin). The username and password are the usual ones for a sandbox. We enter inside a Javascript shell, where we can communicate with the database server using an alternative syntax. Let's see what we have:

- We have an "X-Session" using port 33060 and working on database world_x;

- There is a help, same as in the MySQL client;

- The database world_x is accessible through the variable db.

- Note: all the commands used below are the same for Python and Javascript. There are differences only when using the language extensively.

With the above elements, we can try getting data from the database.

mysql-js> db.collections

{

"CountryInfo": <Collection:CountryInfo>

}

mysql-js> db.tables

{

"City": <Table:City>,

"Country": <Table:Country>,

"CountryLanguage": <Table:CountryLanguage>

}

What does it mean? Let's abandon the Javascript shell and look at the traditional client:

mysql [localhost] {msandbox} (world_x) > show tables;

+-------------------+

| Tables_in_world_x |

+-------------------+

| City |

| Country |

| CountryInfo |

| CountryLanguage |

+-------------------+

4 rows in set (0.00 sec)

Here we see 4 tables, while the Javascript console lists only 3. However, the fourth table has the same name as the "collection." Let's have a look:

mysql [localhost] {msandbox} (world_x) > desc CountryInfo;

+-------+-------------+------+-----+---------+------------------+

| Field | Type | Null | Key | Default | Extra |

+-------+-------------+------+-----+---------+------------------+

| doc | json | YES | | NULL | |

| _id | varchar(32) | YES | | NULL | STORED GENERATED |

+-------+-------------+------+-----+---------+------------------+

2 rows in set (0.00 sec)

mysql [localhost] {msandbox} (world_x) > show create table CountryInfo\G

*************************** 1. row ***************************

Table: CountryInfo

Create Table: CREATE TABLE `CountryInfo` (

`doc` json DEFAULT NULL,

`_id` varchar(32) GENERATED ALWAYS AS (json_unquote(json_extract(`doc`,'$._id'))) STORED

) ENGINE=InnoDB DEFAULT CHARSET=utf8

1 row in set (0.00 sec)

Look what we got! A JSON column with a dynamic index implemented as a virtual column. Now we can appreciate why the JSON data type was such an important thing.

Back to the Javascript shell, let's get something from the database. (You can get all the commands I am using, and much more, from the manual.)

mysql-js> db.collections.CountryInfo.find("_id='USA'")

[

{

"GNP": 8510700,

"IndepYear": 1776,

"Name": "United States",

"_id": "USA",

"demographics": {

"LifeExpectancy": 77.0999984741211,

"Population": 278357000

},

"geography": {

"Continent": "North America",

"Region": "North America",

"SurfaceArea": 9363520

},

"government": {

"GovernmentForm": "Federal Republic",

"HeadOfState": "George W. Bush"

}

}

]

1 document in set (0.00 sec)

Apart from the feeling of being back in the good old times when MySQL was still playing with IPO dreams (look at the HeadOfState field in the above data), this record is a straightforward JSON document, where data that should belong to different normalized tables are bundled together in this unified view. So, we are really querying a Table that contains JSON data associated with an _id. We know because the general log lists what happens after our simple query:

SELECT doc FROM `world_x`.`CountryInfo` WHERE (`_id` = 'USA')

Let's try a more complex query. We want all countries in Oceania with a population of more than 150,000 people, and whose Head of State is Elisabeth II. The query is a bit intimidating, albeit eerily familiar:

mysql-js> db.collections.CountryInfo.find("government.HeadOfState='Elisabeth II' AND geography.Continent = 'Oceania' AND demographics.Population > 150000").fields(["Name", "demographics.Population","geography.Continent"])

[

{

"Name": "Australia",

"demographics.Population": 18886000,

"geography.Continent": "Oceania"

},

{

"Name": "New Zealand",

"demographics.Population": 3862000,

"geography.Continent": "Oceania"

},

{

"Name": "Papua New Guinea",

"demographics.Population": 4807000,

"geography.Continent": "Oceania"

},

{

"Name": "Solomon Islands",

"demographics.Population": 444000,

"geography.Continent": "Oceania"

}

]

4 documents in set (0.00 sec)

Here is the corresponding SQL query recorder in the general log:

SELECT JSON_OBJECT(

'Name', JSON_EXTRACT(doc,'$.Name'),'demographics.Population', \

JSON_EXTRACT(doc,'$.demographics.Population'),'geography.Continent', \

JSON_EXTRACT(doc,'$.geography.Continent')

) AS doc

FROM `world_x`.`CountryInfo` \

WHERE (

((JSON_EXTRACT(doc,'$.government.HeadOfState') = 'Elisabeth II') \

AND (JSON_EXTRACT(doc,'$.geography.Continent') = 'Oceania')) \

AND (JSON_EXTRACT(doc,'$.demographics.Population') > 150000)

)

I am not sure which one I prefer. The SQL looks strange, with all those JSON functions, while the Javascript command seems more readable (I had never thought I would say what I have just said!)

Enough with reading data. I want to manipulate some. I'll start by creating a new collection.

mysql-js> db.createCollection('somethingNew')

<Collection:somethingNew>

And the general log shows what should not be a surprise, as we have seen a similar structure for CountryInfo:

CREATE TABLE `world_x`.`somethingNew` (doc JSON, \

_id VARCHAR(32) \

GENERATED ALWAYS AS (JSON_UNQUOTE(JSON_EXTRACT(doc, '$._id'))) \

STORED NOT NULL UNIQUE

) CHARSET utf8mb4 ENGINE=InnoDB

Now, to the data manipulation:

mysql-js> mynew=db.getCollection('somethingNew')

<Collection:somethingNew>

The variable mynew can access the new collection. It's a shortcut to avoid db.collections.somethingNew

mysql-js> db.collections

{

"CountryInfo": <Collection:CountryInfo>,

"somethingNew": <Collection:somethingNew>

}

mysql-js> mynew.find()

Empty set (0.00 sec)

As expected, there is nothing inside the new collection. Now we enter a very minimal record.

mysql-js> mynew.add({Name:'Joe'})

Query OK, 1 item affected (0.01 sec)

mysql-js> mynew.find()

[

{

"Name": "Joe",

"_id": "e09ef177c50fe6110100b8aeed734276"

}

]

1 document in set (0.00 sec)

The collection contains more than what we have inserted. There is an apparently auto-generated _id field. Looking at the general log, we see that the data includes the new field.

INSERT INTO `world_x`.`somethingNew` (doc) VALUES ('{\"Name\":\"Joe\",\"_id\":\"e09ef177c50fe6110100b8aeed734276\"}')

As you can see, an _id field was added automatically. We could override that behavior by providing our own value:

mysql-js> mynew.add({_id: "a dummy string", Name:"Frank", country: "UK"})

The data inserted now includes the _id filed with our manual value. The general log says:

INSERT INTO `world_x`.`somethingNew` (doc) VALUES ('{\"Name\":\"Frank\",\"_id\":\"a dummy string\",\"country\":\"UK\"}')

The value of _id, however, must be unique, or the engine will generate an error:

mysql-js> mynew.add({_id: "a dummy string", Name:"Sam", country: "USA"})

MySQL Error (5116): Document contains a field value that is not unique but required to be

If all this gives you a sense of deja-vu, you're right. This feels and smells a lot like MongoDB, and I am sure it isn't a coincidence.

Synchronizing operations

As our last attempt for the day, we will see what happens when we manipulate data in SQL and then retrieve it in Javascript or Python.

We leave the JS console open, and we do something in SQL

mysql [localhost] {msandbox} (world_x) > drop table somethingNew;

Query OK, 0 rows affected (0.01 sec)

How does it look like on the other side?

mysql-js> db.collections

{

"CountryInfo": <Collection:CountryInfo>,

"somethingNew": <Collection:somethingNew>

}

mysql-js> db.getCollections()

{

"CountryInfo": <Collection:CountryInfo>,

"somethingNew": <Collection:somethingNew>

}

Oops! mysqlsh didn't get the memo! It still considers somethingNew to be available.

mysql-js> db.collections.somethingNew.find()

MySQL Error (1146): Table 'world_x.somethingNew' doesn't exist

We need to refresh the connection. Unlike the SQL client, you need to specify the connection parameters.

mysql-js> \connect msandbox:msandbox@localhost:33060/world_x

Closing old connection...

Creating an X Session to msandbox@localhost:33060/world_x

Default schema `world_x` accessible through db.

mysql-js> db.collections

{

"CountryInfo": <Collection:CountryInfo>

}

We can see the same happening when we create a new table in SQL. The session in mysqlsh keeps showing the cached contents, and we need to refresh the session to see the changes. Looking at the general log, there are no changes when we issue commands asking for metadata, such as db.collections or db.tables. Instead, when we refresh the session, we see this:

SELECT table_name, COUNT(table_name) c FROM information_schema.columns \

WHERE ((column_name = 'doc' and data_type = 'json')

OR (column_name = '_id' and generation_expression = 'json_unquote(json_extract(`doc`,''$._id''))')) \

AND table_schema = 'world_x' GROUP BY table_name HAVING c = 2

SHOW FULL TABLES FROM `world_x`

The first query lists all tables that contain a JSON document and a generated _id (these are the collections). The second one lists all tables. Then the shell removes from the table list all the ones that were in the collections list.

Given the way it is done, we can cheat the system easily by creating something that looks like a collection, but has extra fields:

CREATE TABLE strangedoc (doc JSON, \

_id VARCHAR(32) \

GENERATED ALWAYS AS (JSON_UNQUOTE(JSON_EXTRACT(doc, '$._id'))) \

STORED NOT NULL UNIQUE,

secret_stash varchar(200),

more_secret_info mediumtext

) CHARSET utf8mb4 ENGINE=InnoDB;

mysql [localhost] {msandbox} (world_x) > insert into strangedoc (doc,secret_stash,more_secret_info) values \

('{"_id": "abc", "name": "Susan"}', \

'and now for something completely different', \

'Lorem ipsum dolor sit amet, consectetur adipisicing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua.');

Query OK, 1 row affected (0.00 sec)

mysql [localhost] {msandbox} (world_x) > select * from strangedoc\G

*************************** 1. row ***************************

doc: {"_id": "abc", "name": "Susan"}

_id: abc

secret_stash: and now for something completely different

more_secret_info: Lorem ipsum dolor sit amet, consectetur adipisicing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua.

1 row in set (0.00 sec)

And the Javascript console will be unaware of the extra material:

mysql-js> db.collections

{

"CountryInfo": <Collection:CountryInfo>,

"strangedoc": <Collection:strangedoc>

}

mysql-js> db.strangedoc.find()

[

{

"_id": "abc",

"name": "Susan"

}

]

1 document in set (0.00 sec)

We can add contents to the collection in Javascript, and the database server won't protest (provided that the extra fields are nullable or have a default value). Is it a bug or a feature?

Parting thoughts

As I have said at the beginning, this is a very simple exploration. More work is required to test the full potential of the new model. My impressions are mildly positive. On one hand, it's an exciting environment, which promises to expand to better usefulness with more programming languages and possibly better coordination between shell and server software. On the other hand, there are many bugs, and the software is still very green. It will require more iterations from the community and the development team before it could be trusted with important data.