The previous post described a new system that allows rendering rich surfaces we call "meta-materials" using low-resolution geometry. Meta-materials cover ranges from 100 meters to 10 meters. What about anything more distant than that, that is, the range from tens of kilometers down to 100 meters?

It turns out the same system applies. You can think of these as "meta-meta-materials"; we just do not call them that because one "meta" in a name is already too much. We have multiple objects that do fit that description. A terrain biome is one example.

In this post, you can see the results of applying this method to biome objects. All images are in faux solid color, which we use to make sure feature placement is correct.

Here is a single biome and the amount of geometry it takes to represent it:

In order to capture the detail, this biome also uses 1024x1024 texture maps for diffuse color, normals and other maps required for physically based rendering. Terrain voxels, which are generated on the fly, emit UV coordinate pairs which link the voxel's position in the world with the right section of these texture maps.

Here you can see multiple biomes in the same image, again in faux color, covering an area of approximately 3000 square kilometers:

Since most detail is contained by textures, it is possible to use a much coarser geometry. The following images show that we can crank up the mesh simplification and still obtain fairly good looking features:

As a creator of worlds, this feature is entirely transparent to you. These detail textures are automatically generated. Actually, all the content in these images was generated by our procedural algorithms, but if you had custom made maps, you would not need to be concerned about creating and maintaining the detail textures.

Like I said in the previous post, this is a technique frequently used in modern polygon-based terrain. The key here is this is now working on voxel terrains. These environments can be modified in real time by players. They can harvest materials, make trenches, even blow out entire craters in real time.

Following one man's task of building a virtual world from the comfort of his pajamas. Discusses Procedural Terrain, Vegetation and Architecture generation. Also OpenCL, Voxels and Computer Graphics in general.

Thursday, February 2, 2017

Monday, January 30, 2017

Prettier, Faster Terrains

We will be updating the terrain systems in Voxel Farm soon. Hopefully, it will get a lot prettier and faster. It is not often you get improvements in these two, for the most part, opposite directions. In this case, it seems we got lucky.

It was thanks to a synergy between two existing aspects of the engine that get to play together really well. One is UV-mapped voxels, the other is meta-materials.

Here is how it works: A single meta-material describes a type of terrain. For instance, mountain cliff. Within this single meta-material you may find different materials. In the case of a cliff that could be exposed rock, mossy rock, grass, dislodged stones, dirt, etc. An artist gets to create how the meta-material surface is broken down into these sub-materials. The meta-material also has a volumetric definition, which is a displacement map and can be carefully tied to the sub-material map.

When you are close to the meta-material's surface, it must be rendered as full geometry. This is because features in the meta-material, let's say a rock that sticks out, can measure up to dozens of meters. This content must be made of actual voxels so it can be harvested, destroyed, etc. It is not just a GPU displacement trick.

As you get farther away from these features, using geometry to capture detail becomes expensive. You face the hard choice of keeping a high geometry density or dialing down geometry and losing detail.

The new terrain system can dial down geometry but keep the appearance of detail by using automatically generated textures for the meta-material. For the close range, it still uses geometry to capture detail, but at a certain distance the meta-material displacement can be represented with just a normal map. High-resolution sub-material textures for grass, rock, etc. are not needed anymore. A single color map is able to capture the look of the meta-material from this distance. These are only a few extra maps that can be reused anywhere in the scene where the meta-material appears.
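As a rough illustration of this distance-based switch, the sketch below picks a representation from the viewer distance. The 10 m and 100 m thresholds are borrowed from the ranges mentioned in the follow-up post about biomes; the function name and the returned labels are made up for this example and are not part of the Voxel Farm API.

```python
def pick_representation(distance_m: float) -> str:
    """Choose how a patch of terrain is drawn at a given viewer distance.

    The thresholds are illustrative assumptions, not engine constants.
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if distance_m < 10.0:
        # Close range: real voxel geometry, so features can be harvested or destroyed.
        return "full_geometry"
    if distance_m < 100.0:
        # Medium range: coarse mesh plus the generated normal and color maps.
        return "meta_material_maps"
    # Far range: coarse mesh textured with biome-level maps.
    return "biome_maps"
```

In a real engine the switch would likely be blended over a band of distances to avoid visible popping; a hard threshold is used here only to keep the idea readable.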

The following image shows a single meta-material that uses geometry for the close range and texture maps for the medium-far ranges:

The colors in the wireframe view show where each method is applied. Just by comparing the triangle densities you can see this saves a massive amount of geometry:

This method is not new in terrain rendering; however, it is quite new in a voxel terrain. It is all possible thanks to the fact our voxels can have UV coordinates. Voxels output by the terrain component in the green area have UV coordinates. These coordinates make sure the normal, diffuse and other maps created to render the meta-material at this distance match the volumetric profile and sub-material patterns in the meta-material up close.

The beauty of it is that this works with any type of terrain topology, not just heightmaps. If you are doing caves, cave walls, ceilings and ground are very distinct meta-materials and they would all benefit from this method. And it should all be automatic: we can turn this system on and it won't require artists to create any new assets.

We are still figuring out how to solve some kinks in the system, but so far I am very pleased with the results. I will be posting more pictures and videos eventually.

Tuesday, January 17, 2017

When Mountains Move

There is an aspect of procedural generation I do not see discussed often. What happens when you have layers of procedural content, add hand-made content on top of it, and then go on to improve the procedural generation?

Last week we did a release of our tools that make it really simple to create terrain biomes. You can see it in action here:

This system can produce fairly good looking terrain with just a few clicks. However, there are still some aspects of it I wanted to improve.

One key issue, which you can see in this video, is that it tends to produce straight lines. There is still some "diamond" symmetry we need to address in the core algorithm. The image below shows a really bad case, where the produced heightmap has parallel features forming an almost perfect square:

At the left, you can see the generated heightmap. The right panel shows a render of the same heightmap.

This did not take long to fix. Instead of running the generation algorithm on a grid made of squares, switching to irregular quadrilaterals is enough to break most of the parallel lines. The following image shows the results after the fix:

A simple fix or improvement like this leaves us with bigger questions: How do we make this backward compatible? Do we make it backward compatible at all, or just apologize and ask human creators to relocate their data?

We could provide an option to turn this improvement off. That would be the most diplomatic approach. But then what about any other improvements we have planned for the future, do they all get their own setting? At this point, it seems we would be complicating the UI with many options that are meaningless to new users. These would be just switches to make algorithms behave in more primitive forms.

Whenever algorithms create content along with humans it really becomes a muddy, gray area for me. I still haven't figured this one out.

Friday, July 15, 2016

Intelligent Terrain Synthesis

Don't you hate it when your favorite TV series puts out an episode that is just clips of stuff that happened in earlier episodes? This post has some of that but hopefully will provide you with a better idea of how we see Procedural Generation in the near future.

This video shows the new procedural terrain system to be released in Voxel Farm 3:

In case you want to find out more about what is happening under the hood, these previous posts may help:

Geometry is Destiny Part I and Part II

Introducing Pollock

Terrain Synthesis

The idea is simple. Instead of asking an artist to labor over a hundred different assets, either by hand or by using complex generation tools like World Machine, we now have a synthetic entity that can do some of that work through a mix of AI and simulation. You do not have to be an expert or initiated at all in the arts of procedural generation to get a satisfactory outcome.

Why are AI and simulation important? After working for a while in procedural generation, it became clear to me there was no workaround to the entropy problem. This I believe can be stated like this: Viewers of procedurally generated content will perceive only the "seed" information, not the "expanded" data. Yes, you may have a noise function that can output terabytes of data, but all this data will be compressed by the human psyche to the few bytes that it takes to express the noise function itself. I posted more in detail about this problem here:

Uncanny Valley of Procedural Generation

Procedural Information

Evolution of Procedural

This does not mean all procedural generation is bad. It means it must produce information in order to be good. Good Procedural Generation is closer to simulation, automation and AI. You cannot have information without work being done, and if work is to be done, better leave it to the machine.

The video at the top shows our first attempt at having AI that can do procedural generation. Stay tuned because more is coming.

Monday, July 11, 2016

Geometry is Destiny Part 2

This is a continuation of an earlier post. That post ended in a literal, virtual cliffhanger. We generated continent shapes and we used tectonic plate simulation to compute where mountain ranges would appear.

The remaining step was to assign biomes. We wanted biome placement to be believable so we did a bit of research on what biomes are where and why they appear.

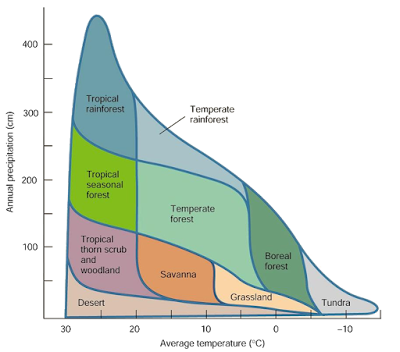

Scientists have distilled this to a very convenient set of rules, which are captured by the following chart:

I stole this particular image from Mugan's Biology Page, but you will find it pretty much everywhere the occurrence of biomes on Earth is discussed. This is a classification made by Robert Whittaker based on annual precipitation and average temperature. His study suggests temperature and humidity are enough to determine biome occurrence.

This made it simple for us. We only needed to compute two parameters across the continent: temperature and humidity.

Let's start with the easiest, which is temperature. We figured out some of this would have to be user input. As we did not know the latitude for the continent, we would not be able to determine how cold or hot it was. Instead of asking how much sun the landmass was getting (which is what latitude means in this case), we chose to ask for temperature values for each corner of the map:

To get the temperature for any position within the map we just do a linear interpolation from these four corner values.

Temperature also changes with elevation. The change ranges from -6 to -10 degrees Celsius for each extra kilometer in altitude. We had a rough elevation map from the tectonic plate simulation. With this additional piece we are able to compute a fairly good temperature value for any point on the map. This is the horizontal axis of the Whittaker chart.
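The two temperature steps above (interpolating the four corner values, then correcting for altitude) can be sketched as follows. This is a minimal sketch, not the engine's code: the function name, the normalized `u`, `v` map coordinates and the corner ordering are assumptions, and the default lapse rate of -6.5 degrees per kilometer is simply one value picked from inside the range quoted above.

```python
def temperature_at(u, v, corners, elevation_km, lapse_c_per_km=-6.5):
    """Estimate temperature in degrees Celsius at a map position.

    u, v          -- position in the map, each in [0, 1]
    corners       -- (t00, t10, t01, t11): temperatures at (u=0,v=0), (u=1,v=0),
                     (u=0,v=1) and (u=1,v=1); this ordering is an assumption
    elevation_km  -- height above sea level in kilometers
    """
    t00, t10, t01, t11 = corners
    # Interpolate along u on both edges, then along v between them (bilinear).
    bottom = t00 + (t10 - t00) * u
    top = t01 + (t11 - t01) * u
    sea_level = bottom + (top - bottom) * v
    # Cool down with altitude; nothing is added for points below sea level.
    return sea_level + lapse_c_per_km * max(elevation_km, 0.0)
```

For example, with corner values of 10, 20, 30 and 40 degrees, the center of the map sits at 25 degrees at sea level, and a 2 km peak at that spot would come out at 12 degrees with the default lapse rate.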

The vertical axis is rainfall or humidity percentage as other biome charts have it. This one is trickier. In real life, water evaporates from large water masses like the sea into clouds. Air rises when it meets higher elevation. There it cools down losing some of its ability to hold water. The water excess becomes rainfall.

We chose to simulate exactly that process. We knew the continent would be surrounded by ocean water. This would be the main source of water. The next step would be to determine wind direction. Continents are exposed to different wind systems depending on where they are on the planet. Global wind simulation was out of scope for us, so we chose again to ask the user:

The input is quite simple, just a wind direction for each corner of the map. With this, we would be able to produce a wind vector field for the entire map.

If you remember the previous post, a key aspect of this simulation framework was the use of a mesh instead of a regular 2D grid:

This came in handy for simulating water transfer. Each node is seeded with an initial amount of water. Nodes over the ocean would get 100% and nodes over the landmass would get zero.

Then we perform multiple simulation steps. Each step looks at each pair of connected nodes and figures out how much water moved from one node to the next and how much was lost as precipitation.

Assume two connected nodes, A and B. The dot product between the wind vector at A, and the vector that goes from A to B will tell us how able the wind is to carry water from A to B. Then based on the water already contained in A and B, and the temperature changes, we can compute how much water moved and how much rainfall there is.
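A single simulation step along these lines might look like the sketch below. The post does not give the actual formulas, so everything numeric here is an assumption: `carry_rate` and `rain_per_km` are made-up tuning constants, the climb in elevation between two nodes stands in for the temperature change, and ocean nodes are simply reset to 100% after every step so they keep acting as a source.

```python
import math


def simulate_step(pos, edges, wind, elevation, water, is_ocean,
                  carry_rate=0.25, rain_per_km=0.5):
    """Run one pass over all connected node pairs.

    pos, wind  -- lists of (x, y) tuples per node (wind assumed at most unit length)
    edges      -- list of (a, b) index pairs for connected nodes
    elevation  -- node heights in kilometers
    water      -- current water fraction per node, in [0, 1]
    Returns (new_water, rainfall) as two lists.
    """
    delta = [0.0] * len(water)
    rain = [0.0] * len(water)
    for a, b in edges:
        # Water can travel either way along an edge, depending on the wind.
        for src, dst in ((a, b), (b, a)):
            dx = pos[dst][0] - pos[src][0]
            dy = pos[dst][1] - pos[src][1]
            length = math.hypot(dx, dy)
            if length == 0.0:
                continue
            # Dot product of the wind at src with the unit vector src -> dst.
            align = (wind[src][0] * dx + wind[src][1] * dy) / length
            if align <= 0.0:
                continue  # the wind does not blow towards dst
            moved = carry_rate * min(align, 1.0) * water[src]
            # Rising air cools and drops part of its water as rain.
            climb = max(elevation[dst] - elevation[src], 0.0)
            lost = moved * min(rain_per_km * climb, 1.0)
            delta[src] -= moved
            delta[dst] += moved - lost
            rain[dst] += lost
    new_water = []
    for i, w in enumerate(water):
        if is_ocean[i]:
            new_water.append(1.0)  # the ocean never runs dry
        else:
            new_water.append(min(max(w + delta[i], 0.0), 1.0))
    return new_water, rain
```

Calling this repeatedly and accumulating the returned rainfall gives a humidity estimate per node. The clamping at the end keeps values in range but means water is not strictly conserved when a node has many downwind neighbors; a more careful version would normalize the outgoing amounts.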

After sufficient simulation steps to cover the node graph, the following pattern emerges:

Here the wind comes from the south. The grayscale shows humidity. The red areas show where the mountains are. As you can see most of the moisture carried by the wind precipitates right after entering the continent, leaving most of the land behind very dry.

If we switch wind direction to the north, a very different pattern emerges:

Once both temperature and humidity are known, assigning biomes is trivial. You can think of the Whittaker chart as a 2D matrix. Humidity determines which row you would use and temperature the column:

This could be generalized to other biome types by providing a different matrix, but I have not paid too much attention to other-worldly biome systems. I have not found any good examples of biome attribution beyond temperature and humidity.
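The matrix lookup can be sketched in a few lines. The 3x3 table below is a deliberately coarse stand-in: the biome names are loosely taken from Whittaker-style charts, but the cell layout, the temperature range and the helper names are assumptions made for this example, not the boundaries of the real chart. Swapping in a different matrix is all it would take to describe another biome system.

```python
# Rows: humidity from dry to wet. Columns: temperature from cold to hot.
# Illustrative table only; not the actual Whittaker boundaries.
BIOMES = [
    ["tundra", "temperate grassland", "subtropical desert"],
    ["tundra", "woodland/shrubland", "savanna"],
    ["boreal forest", "temperate forest", "tropical rainforest"],
]


def bucket(value, low, high, count):
    """Map value in [low, high] to an index in [0, count - 1], clamping outliers."""
    if high <= low:
        raise ValueError("high must be greater than low")
    t = (value - low) / (high - low)
    return min(max(int(t * count), 0), count - 1)


def biome_for(temperature_c, humidity, t_range=(-10.0, 30.0)):
    """Pick a biome: humidity (0..1) selects the row, temperature the column."""
    row = bucket(humidity, 0.0, 1.0, len(BIOMES))
    col = bucket(temperature_c, t_range[0], t_range[1], len(BIOMES[0]))
    return BIOMES[row][col]
```

With this table, `biome_for(25, 0.9)` lands in the hot, wet corner, and anything colder than the assumed range is clamped into the first column.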

Once you know the biome for each node in the mesh, obtaining a 2D map is a matter of drawing each triangle in the mesh to a bitmap. We had a software rasterizer for occlusion culling that came in pretty handy here.

I leave you with a few examples of what happens when you play with wind directions and base temperatures:

Monday, June 27, 2016

Introducing Pollock

Pollock is the code-name for our new terrain generation system. Why Pollock? This system went rogue for a couple of days and started producing things that looked less like terrain and more like Jackson Pollock paintings:

It seems mad randomness at first, just like Pollock, but there is a lot of order in this chaos (and also what appeared to be a buffer overrun error somewhere in the code.)

Here are some images of the system when it behaves as expected:

The colors you see in these renders are not the final landscape colors. Each color identifies a different layer of more detailed material that will go there. These are placeholder materials Pollock is creating for you.

Pollock's main input is photographs, which you provide to suggest the geography of each biome. In case you want to create a full continent, Pollock will ask you some additional basic facts about elevation, temperature and wind direction.

In continents, you will get nice surprises like a desert appearing on one side of a mountain range:

While the other side of the same range is all made of fertile land:

This has happened due to all the moisture coming from the sea precipitating over one side and having only dry air go over the mountains.

It takes around five minutes to set this up from scratch. The system will do some pre-processing for a few minutes (usually less than five) and that's it. In less than 15 minutes you can complete the creation of an entire continent that spans over a dozen different biomes.

We are in the last stages of completion for this system. There are two main features missing: the addition of forests, rocks, etc. and plugging this with the lake generator to get inner lakes. Right now the system only does ocean.

This system will be included in the Voxel Farm 3 release.
